AI-Generated Code Is Clean, But Nobody Understands It — Google Engineer Warns of 'Comprehension Debt'

Developers who generated code with AI tools scored 17 percentage points lower on a comprehension quiz (50 vs. 67). Google engineer Addy Osmani introduces the concept of 'Comprehension Debt,' warning about the danger of AI-produced code that nobody truly understands.

AI coding tools produce code fast. It passes tests, and there are no lint (code quality check) warnings. But there's one problem — nobody actually understands the code.

Senior engineer Addy Osmani, who works on Google Cloud and the Gemini team, gave this phenomenon a name: 'Comprehension Debt' — the growing gap between the amount of code that exists in a system and the amount of code that people actually understand.

An Invisible Risk, Different from Technical Debt

The technical debt we're all familiar with is visible. The code is messy, and you run into it every time you make a change. But comprehension debt is different. The code looks clean and passes all the tests, yet nobody knows why it was built that way.

Osmani cites the case of a student team that built quickly with AI over seven weeks, then found it could no longer make even simple modifications. He identifies the root cause: "No one on the team could explain why certain design decisions were made or how the different parts of the system worked together."

Anthropic Study: AI Delivers Similar Speed but 17 Points Lower Comprehension

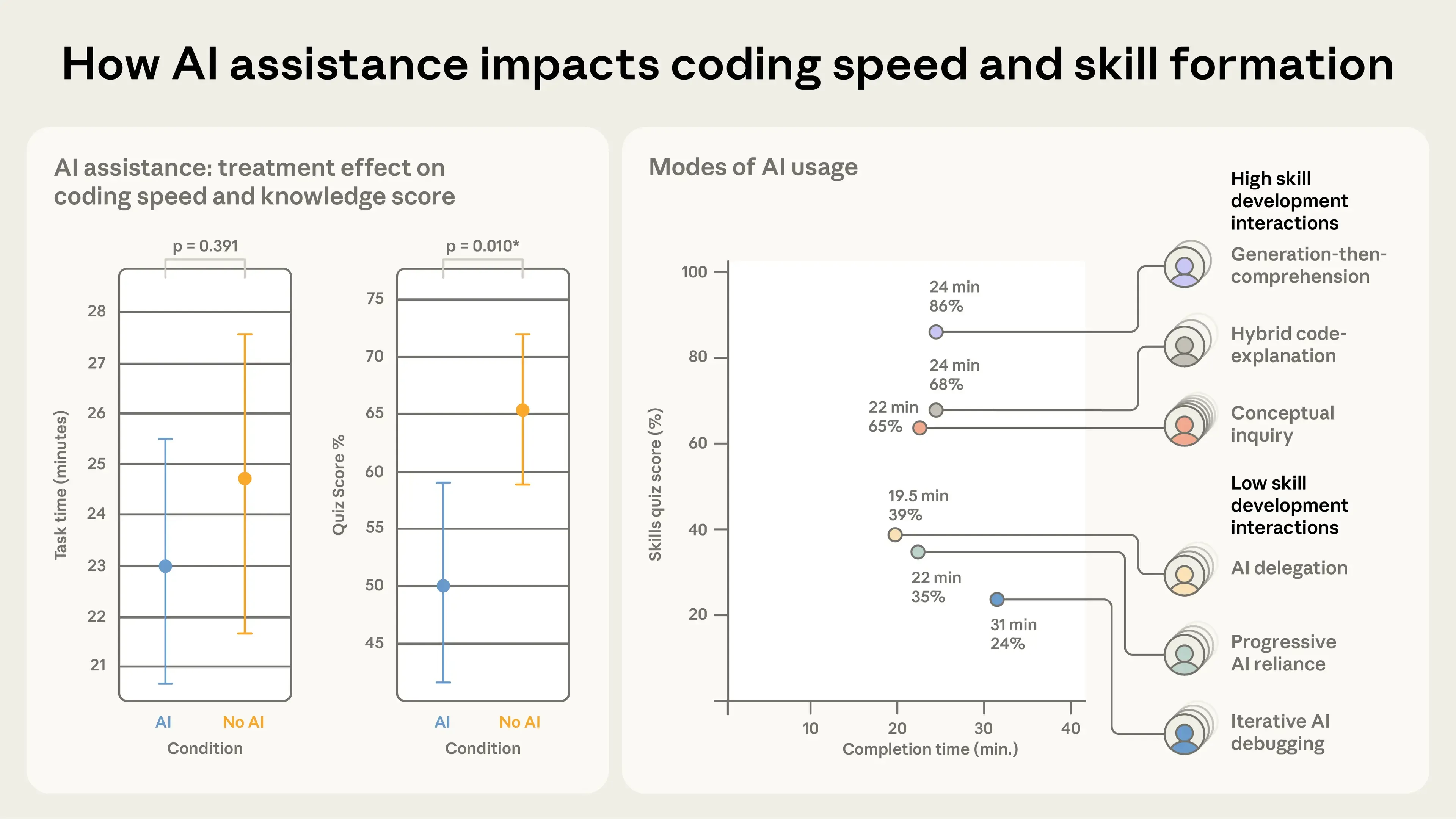

The Anthropic study on 'The Impact of AI on Skill Acquisition' cited by Osmani tracked 52 engineers as they learned a new library. The results were striking.

3 Key Findings

• The AI-assisted group and the non-AI group had nearly identical task completion times (23 min vs. 25 min)

• However, on a follow-up comprehension quiz, the AI group scored 50 points while the non-AI group scored 67 points, a gap of 17 percentage points (p=0.010)

• The largest decline appeared in debugging (finding and fixing errors) ability
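The quiz gap above is a difference in points, which is not the same thing as a relative drop. A minimal sketch of the arithmetic, using only the two scores quoted in the findings (the variable names are illustrative):

```python
# Quiz scores quoted in the findings above (out of 100).
ai_group_score = 50
non_ai_group_score = 67

# Absolute gap, in percentage points.
point_gap = non_ai_group_score - ai_group_score

# Relative gap: how much lower the AI group scored, as a share of the non-AI score.
relative_gap = point_gap / non_ai_group_score

print(f"Absolute gap: {point_gap} points")   # Absolute gap: 17 points
print(f"Relative gap: {relative_gap:.1%}")   # Relative gap: 25.4%
```

Read this way, the AI group scored 17 points lower in absolute terms, or roughly a quarter lower relative to the non-AI group.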

What's even more interesting is that results varied dramatically depending on how people used AI. The research team identified 7 distinct AI usage patterns; five of them are summarized below.

AI Usage Patterns That Improve Comprehension

Conceptual Inquiry — Asking the AI "explain why this code works this way." Comprehension score: 65%, Time spent: 22 min.

Hybrid Code-Explanation — Requesting code but also asking for an explanation of why it was written that way. Comprehension score: 68%, Time spent: 24 min.

Generation-then-Comprehension — Generating code first, then adding a step to analyze and understand it. Comprehension score: 86%, Time spent: 24 min. The highest score of all.

AI Usage Patterns That Hurt Comprehension

AI Delegation — Saying "build this for me" and just copy-pasting the result. Comprehension score: 35%, Time spent: 22 min.

Iterative AI Debugging — Repeatedly asking the AI to "fix it" whenever an error occurs. Comprehension score: 24%, Time spent: 31 min. The slowest and worst for learning.

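Even using the same AI tool, the comprehension score of the best pattern (Generation-then-Comprehension, 86%) was about 3.6x that of the worst (Iterative AI Debugging, 24%). Simply asking "explain why this works" instead of saying "build it for me" nearly doubled the score (65% vs. 35%).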
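The ratios can be checked directly from the scores listed for the five patterns above. The sketch below is only arithmetic on those numbers; the dictionary layout and the helper name `score_ratio` are illustrative, not part of the study. On these figures, 3.6x appears to correspond to the highest-scoring pattern divided by the lowest-scoring one.

```python
# Comprehension score (%) and time spent (min) for the five patterns listed above.
patterns = {
    "Conceptual Inquiry": (65, 22),
    "Hybrid Code-Explanation": (68, 24),
    "Generation-then-Comprehension": (86, 24),
    "AI Delegation": (35, 22),
    "Iterative AI Debugging": (24, 31),
}


def score_ratio(a: str, b: str) -> float:
    """Return how many times higher pattern a's score is than pattern b's."""
    return patterns[a][0] / patterns[b][0]


# Pick the patterns with the highest and lowest comprehension scores.
best = max(patterns, key=lambda name: patterns[name][0])
worst = min(patterns, key=lambda name: patterns[name][0])

print(f"{best} vs. {worst}: {score_ratio(best, worst):.1f}x")
# Generation-then-Comprehension vs. Iterative AI Debugging: 3.6x

ratio = score_ratio("Conceptual Inquiry", "AI Delegation")
print(f"Conceptual Inquiry vs. AI Delegation: {ratio:.1f}x")
# Conceptual Inquiry vs. AI Delegation: 1.9x
```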

AI Writes Code Faster Than Humans, but Humans Can't Review It That Fast

The core issue Osmani identifies is asymmetric speed. AI generates hundreds of lines of code in seconds, but it still takes people a long time to carefully read and understand that code.

In the past, code reviews served a dual purpose: quality control and knowledge sharing. A senior developer would read a junior's code and teach them: "This part would be better done this way." But when AI generates the code, this feedback loop breaks. Junior developers can produce code faster than their seniors, yet nobody truly understands what was built.

Why Passing Tests Doesn't Mean It's Safe

"All the tests pass, so it's fine, right?" You might think so. Osmani pushes back clearly on this.

"Who writes a test that a dragged item shouldn't become fully transparent? You can't test for a possibility you never even considered."

Tests only catch problems you can anticipate. When AI modifies hundreds of test cases at once, reviewers can't tell whether every change was actually necessary. Complex behaviors only surface through real-world use — by which point the code is already deployed to production (the live service environment).
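A small sketch of how a green test suite can coexist with the kind of bug Osmani describes. Everything here is hypothetical (the `Item` class, the `drag` function, and the test are invented for illustration and are not taken from his example): the test checks the behavior the author anticipated, position, and never looks at opacity.

```python
from dataclasses import dataclass


@dataclass
class Item:
    """Hypothetical draggable UI item."""
    x: int = 0
    y: int = 0
    opacity: float = 1.0


def drag(item: Item, dx: int, dy: int) -> Item:
    """Move the item by (dx, dy); the opacity change is an unanticipated side effect."""
    item.x += dx
    item.y += dy
    item.opacity = 0.0  # bug: the item becomes fully transparent while dragged
    return item


def test_drag_moves_item() -> None:
    """The test the author thought to write: it checks position only."""
    item = drag(Item(), dx=10, dy=5)
    assert (item.x, item.y) == (10, 5)


test_drag_moves_item()  # passes, even though the dragged item is now invisible
print(drag(Item(), 1, 1).opacity)  # 0.0
```

The suite stays green because nobody thought to assert on `opacity`. Catching the bug requires someone who understands what `drag` is supposed to leave unchanged.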

The Era Where People Who Use AI Well Become More Valuable

Osmani's conclusion isn't to abandon AI. "Making code cheap to produce doesn't mean you can skip understanding it. Understanding is the job," he says.

Here's a summary of his recommended approach.

4 Principles to Reduce 'Comprehension Debt' in the AI Era

1. Define what you want to build before asking AI to build it — Instead of immediately telling the AI "build it," start by clearly writing out the requirements.

2. Start with "explain it" instead of "build it" — In the Anthropic study, people who asked the AI about concepts scored 65% on comprehension, compared with 35% for those who simply delegated the work.

3. Always read and understand AI-generated code before committing it — After generating code, ask "explain what this code does" once more. In the study, the Generation-then-Comprehension pattern, which adds this step, had the highest comprehension score at 86%.

4. Develop people who can hold the entire system in their head — Engineers with deep system understanding become even more valuable in the AI era.

This Applies Beyond Developers

This isn't just a developer problem. The same principle applies to everyone who uses AI to write reports, analyze data, or draft proposals. If you use AI-generated output without understanding it, you won't be able to answer questions when they come up or fix problems when they arise.

The people who get the most out of AI aren't those who take the output as-is; they're the ones who ask AI "why?" and learn in the process. In the Anthropic study, the usage pattern with the highest comprehension score beat the lowest by about 3.6x (86% vs. 24%), which supports this.
