Microsoft's BitNet runs a 100B AI model on a single CPU

Microsoft's BitNet.cpp lets you run massive AI models on ordinary computers, no GPU needed. Up to 6x faster and up to 82% less energy. 36K GitHub stars.

Running powerful AI models usually requires expensive graphics cards costing thousands of dollars. Microsoft's BitNet.cpp changes that: it can run a 100-billion-parameter AI model on a single CPU at 5-7 tokens (words or word pieces) per second, roughly the pace of human reading. No GPU required. The project has 35,600 GitHub stars and gained over 6,400 this week.

The Trick: 1-Bit AI Models

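Traditional AI models store each of their weights (the numbers that make up the model's "brain") as a detailed number (like 3.14159), typically using 16 bits of memory apiece. BitNet's models simplify each weight to just three possible values: -1, 0, or 1. Strictly speaking, that makes them "1.58-bit" models, because picking one of three values carries log2(3) ≈ 1.58 bits of information; "1-bit" is the popular shorthand. It's like the difference between a high-resolution photograph and a sketch: you lose some detail, but the sketch still captures the important shapes, and it's dramatically smaller and faster to process.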
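To make the photograph-versus-sketch idea concrete, here is a minimal Python sketch of ternary quantization following the "absmean" recipe described in Microsoft's BitNet b1.58 paper: divide the weights by their average absolute value, round, and clip to -1, 0, or 1. It is a toy: the function names and sample weights are made up, and BitNet models learn with this quantization applied during training rather than being squeezed down afterward as shown here.

```python
import math


def quantize_ternary(weights, eps=1e-6):
    """Map full-precision weights to {-1, 0, 1} plus one shared scale.

    Follows the "absmean" idea: scale = mean(|w|); each weight is divided by
    the scale, rounded to the nearest integer, and clipped to [-1, 1].
    eps guards against division by zero when every weight is 0.
    """
    scale = sum(abs(w) for w in weights) / len(weights)
    # Python's round() sends exact .5 ties to the even integer; fine for a sketch.
    ternary = [max(-1, min(1, round(w / (scale + eps)))) for w in weights]
    return ternary, scale


def dequantize(ternary, scale):
    """Approximate the original weights: each value becomes -scale, 0, or +scale."""
    return [t * scale for t in ternary]


# Hypothetical weights, made up for illustration
weights = [0.42, -0.07, 1.30, -0.95, 0.01, -0.38]
ternary, scale = quantize_ternary(weights)
print(ternary)                                          # [1, 0, 1, -1, 0, -1]
print(round(scale, 4))                                  # 0.5217
print([round(v, 2) for v in dequantize(ternary, scale)])  # [0.52, 0.0, 0.52, -0.52, 0.0, -0.52]

# Choosing one of three values carries log2(3) bits of information per weight
print(f"{math.log2(3):.2f} bits")                       # 1.58 bits
```

The "sketch" keeps each weight's sign and rough size, but 1.30 and 0.42 both collapse to the same value; that lost detail is the trade-off for a much smaller model.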

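The remarkable part? Microsoft calls BitNet.cpp's inference "lossless": its speedups come from faster computation rather than further approximation, so they don't change the model's answers. Microsoft's research also reports that models trained in this low-bit format from the start can match full-precision models of the same size on quality benchmarks, while needing far less computing power to run.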
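One reason ternary weights save computation: multiplying by -1, 0, or 1 is really just subtracting, skipping, or adding. The toy Python function below (a hypothetical name, not part of BitNet.cpp) computes a matrix-vector product that way. BitNet.cpp's actual kernels are heavily optimized low-level code that works very differently in detail, so treat this as an illustration of the arithmetic, not of the implementation.

```python
def ternary_matvec(ternary_rows, scale, x):
    """Compute (scale * W) @ x where every entry of W is -1, 0, or 1.

    The inner loop never multiplies: +1 adds x[j], -1 subtracts x[j],
    and 0 is skipped. The shared scale is applied once per output value.
    """
    out = []
    for row in ternary_rows:
        acc = 0.0
        for w, xj in zip(row, x):
            if w == 1:
                acc += xj
            elif w == -1:
                acc -= xj
            # w == 0 contributes nothing, so it costs nothing
        out.append(acc * scale)
    return out


# Hypothetical 2x3 ternary weight matrix, shared scale, and input vector
W = [[1, 0, -1],
     [-1, 1, 1]]
x = [2.0, 3.0, 5.0]
print(ternary_matvec(W, 0.5, x))  # [-1.5, 3.0]
```

An ordinary floating-point matrix would need six multiplications here; the ternary version gets by with additions and subtractions plus one scaling multiply per output.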

The Numbers Speak for Themselves

On ARM chips (like Apple Silicon Macs): 1.4x to 5x faster, using 55-70% less energy

On Intel/AMD chips (most Windows PCs): 2.4x to 6.2x faster, using 72-82% less energy

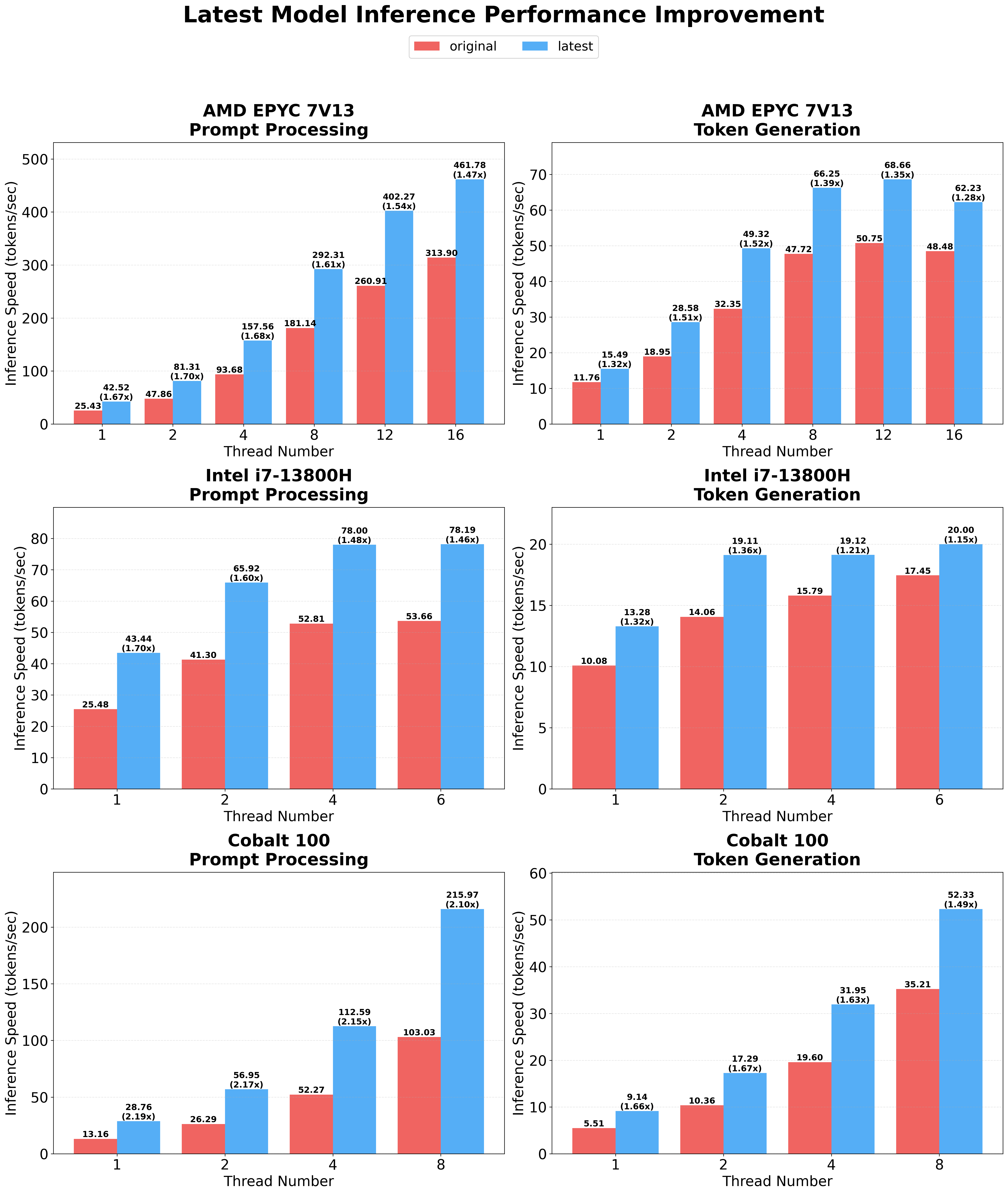

Latest optimization: An additional 1.15x to 2.1x speedup on top of those numbers
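Percentages can understate how large these savings are. A quick Python calculation turns the energy figures above into "times less energy" (old usage divided by new usage), using only the ranges quoted in this article; the helper name is our own.

```python
def energy_ratio(percent_less):
    """Convert 'X% less energy' into 'N times less energy' (old use / new use)."""
    if not 0 <= percent_less < 100:
        raise ValueError("percent_less must be in [0, 100)")
    remaining_fraction = 1 - percent_less / 100
    return 1 / remaining_fraction


# Ranges quoted in this article (percent less energy than the baseline)
ranges = [("ARM", 55, 70), ("x86", 72, 82)]
for chip, low, high in ranges:
    print(f"{chip}: {energy_ratio(low):.1f}x to {energy_ratio(high):.1f}x less energy")
# ARM: 2.2x to 3.3x less energy
# x86: 3.6x to 5.6x less energy
```

At the top of the range, 82% less energy means the same work uses less than a fifth of the original energy.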

What Models Can You Run?

BitNet supports several pre-built models ranging from tiny to massive:

BitNet-b1.58-2B-4T — Microsoft's official model with 2.4 billion parameters. Small enough for any modern computer.

Llama3-8B-1.58 — A 1-bit version of Meta's popular Llama 3 model with 8 billion parameters.

Falcon3 Family — Models from 1 to 10 billion parameters, covering a range of capabilities and hardware requirements.
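A back-of-the-envelope Python estimate shows why weight size decides what fits on an ordinary computer. It counts weight storage only, assuming 16 bits per weight for a conventional model and 2 bits per weight for a ternary one (a practical packing of the theoretical 1.58 bits; this is our assumption, not a documented BitNet.cpp figure). Real memory use is higher, since runtime buffers, the conversation context, and any layers kept at higher precision all add to it.

```python
def weight_memory_gb(params_billion, bits_per_weight):
    """Estimate weight storage in gigabytes (1 GB = 10**9 bytes).

    billions of parameters * bits per weight / 8 bits per byte = gigabytes.
    """
    return params_billion * bits_per_weight / 8


# Parameter counts from this article; "100B" is the headline figure, not a listed download
models = [("BitNet-b1.58-2B-4T", 2.4), ("Llama3-8B-1.58", 8),
          ("Falcon3, largest (10B)", 10), ("100B headline model", 100)]

for name, params in models:
    fp16 = weight_memory_gb(params, 16)   # conventional 16-bit weights
    packed = weight_memory_gb(params, 2)  # ASSUMPTION: ternary weights packed at 2 bits each
    print(f"{name}: ~{fp16:.1f} GB at 16-bit vs ~{packed:.1f} GB at 2-bit")
```

By this estimate, a 100B model's weights shrink from about 200 GB to about 25 GB, plausibly within the RAM of a well-equipped desktop rather than requiring a rack of GPUs.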

Why This Changes the Game for Local AI

The biggest barrier to running AI privately on your own computer has been hardware cost. A decent GPU for AI work costs $1,000-$10,000+. BitNet lowers that barrier dramatically: for models built in BitNet's 1-bit format, your existing CPU can be enough.

For businesses concerned about data privacy: you can now run capable AI models without sending any data to the cloud. Everything stays on your machine.

For developers in resource-constrained environments: edge devices (devices that process data locally rather than in the cloud), embedded systems, and even mobile devices become viable AI platforms.

For anyone running AI at scale, in the cloud or on their own servers: energy reductions of up to 82% on Intel/AMD chips can add up to meaningfully lower operating costs.

Getting Started

BitNet requires Python 3.9+, CMake 3.22+, and Clang 18+. The quickstart below also uses conda to create an isolated Python environment.
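If you're unsure whether your machine is ready, a small pre-flight script can check before you run the quickstart commands. This is a minimal Python sketch of our own, not a script from the BitNet repository; the version thresholds come from the project's README (python>=3.9, cmake>=3.22, clang>=18), and it assumes each tool reports a "version X.Y" string when called with `--version`.

```python
import re
import shutil
import subprocess
import sys

# Minimum versions from the BitNet README's requirements (cmake>=3.22, clang>=18)
MINIMUMS = {"cmake": (3, 22), "clang": (18, 0)}


def parse_version(text):
    """Return (major, minor) from the first 'version X.Y' in the text, or None."""
    match = re.search(r"version (\d+)\.(\d+)", text)
    return (int(match.group(1)), int(match.group(2))) if match else None


def check_tool(name, minimum):
    """Report whether a command-line tool is on PATH and new enough."""
    path = shutil.which(name)
    if path is None:
        return f"{name}: not found on PATH"
    result = subprocess.run([path, "--version"], capture_output=True, text=True)
    found = parse_version(result.stdout + result.stderr)
    if found is None:
        return f"{name}: found, but version could not be parsed"
    status = "ok" if found >= minimum else "too old"
    # Note: Apple's bundled clang uses its own version numbers, unlike upstream LLVM.
    return f"{name}: {found[0]}.{found[1]} ({status})"


def main():
    py = sys.version_info
    py_status = "ok" if py >= (3, 9) else "too old"
    print(f"python: {py.major}.{py.minor} ({py_status})")
    for tool, minimum in MINIMUMS.items():
        print(check_tool(tool, minimum))


if __name__ == "__main__":
    main()
```

Run it with the same Python you plan to use for BitNet; each line reports "ok", "too old", or a missing tool.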

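```bash
# Clone the repository along with its submodules
git clone --recursive https://github.com/microsoft/BitNet.git
cd BitNet

# Create and activate an isolated Python 3.9 environment
conda create -n bitnet-cpp python=3.9 -y
conda activate bitnet-cpp

# Install the Python dependencies
pip install -r requirements.txt

# Download Microsoft's official 2B model in GGUF format from Hugging Face
huggingface-cli download microsoft/BitNet-b1.58-2B-4T-gguf --local-dir models/BitNet-b1.58-2B-4T

# Build the project and prepare the model for the i2_s quantization type
python setup_env.py -md models/BitNet-b1.58-2B-4T -q i2_s

# Start an interactive chat (-cnv) using -p as the system prompt
python run_inference.py -m models/BitNet-b1.58-2B-4T/ggml-model-i2_s.gguf -p "You are a helpful assistant" -cnv
```

Microsoft's approach to 1-bit models could fundamentally shift who gets to run AI. When a 100-billion-parameter model fits on an ordinary computer, the expensive GPU barrier that keeps AI centralized in cloud data centers starts to crumble.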


