MIT's AI sees through walls using Wi-Fi-like signals

MIT researchers used generative AI to let robots detect hidden objects through walls using Wi-Fi-like signals, with nearly 20% better accuracy than previous methods.

MIT researchers have taught AI to see through walls using wireless signals similar to those your Wi-Fi router sends. The work could let warehouse robots verify package contents without opening boxes, or help smart home devices understand room layouts without cameras.

Wi-Fi signals that act like X-ray vision

The system uses millimeter wave (mmWave) signals, a type of radio wave similar to what your Wi-Fi router and 5G phone use. These signals can pass through common barriers like drywall, plastic, and cardboard, then bounce off objects hidden behind them.

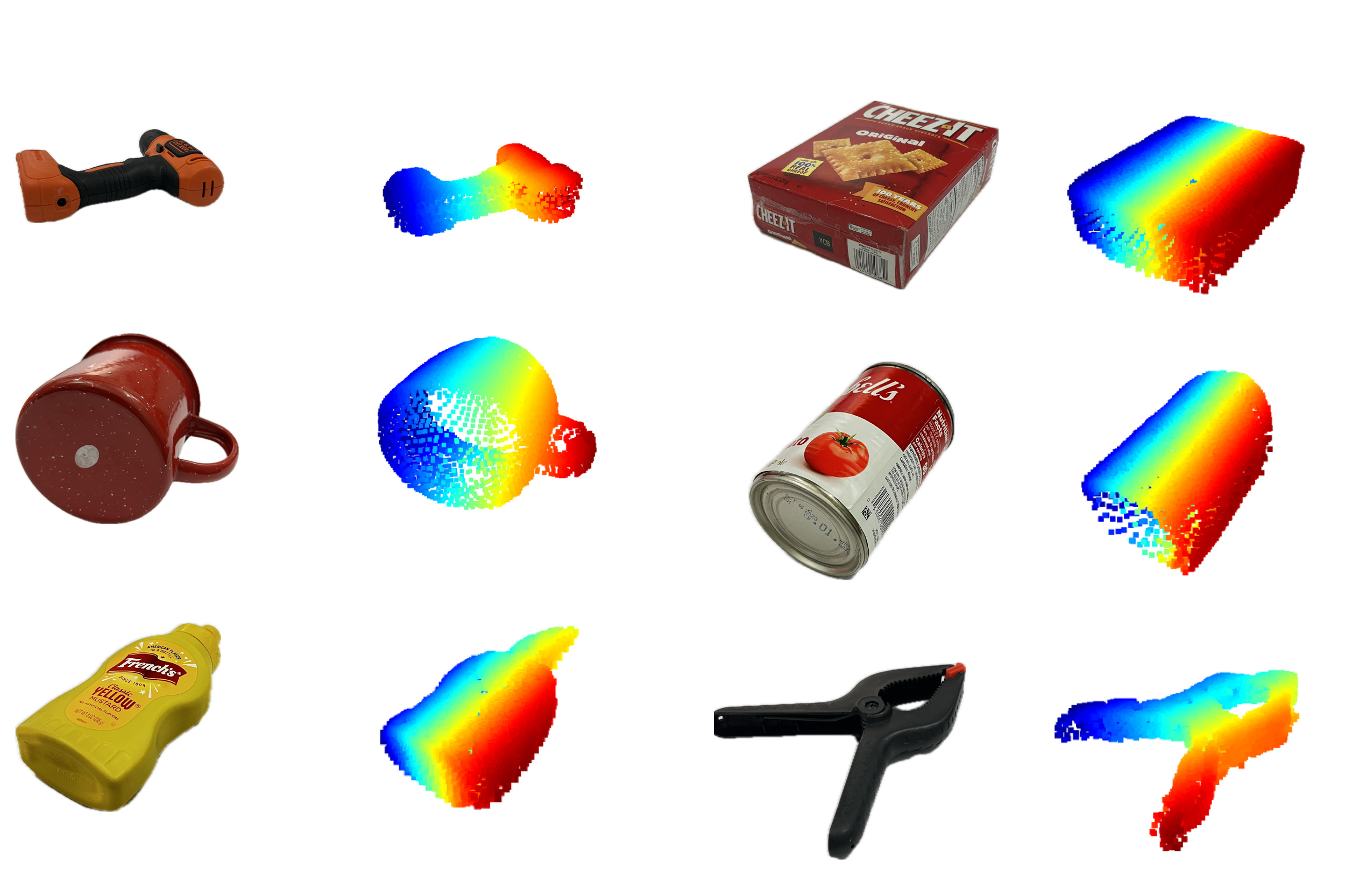

The problem? When radio waves hit a surface, they bounce in only one direction (like a flashlight beam off a mirror). That means sensors can only "see" a tiny fraction of a hidden object. Imagine trying to understand the shape of a coffee mug by seeing just one thin stripe of light reflected off it.
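To see why so little of an object comes back to the sensor, it helps to put numbers on the mirror-like bounce. The Python sketch below is a toy illustration, not the researchers' code: it applies the standard mirror-reflection rule r = d - 2(d·n)n in 2D and checks whether the reflected ray heads back toward the sensor. The function names and the 5-degree tolerance are assumptions chosen for the example.

```python
import math

def reflect(d, n):
    """Mirror-reflect incoming direction d about the unit surface normal n."""
    dot = sum(di * ni for di, ni in zip(d, n))
    return tuple(di - 2 * dot * ni for di, ni in zip(d, n))

def returns_to_sensor(tilt_deg, tolerance_deg=5.0):
    """2D toy: the sensor sends a ray along +x at a small surface patch.

    tilt_deg is how far the patch normal is turned away from facing
    the sensor. tolerance_deg is an assumed receiver acceptance angle.
    """
    a = math.radians(tilt_deg)
    n = (-math.cos(a), math.sin(a))   # faces the sensor when tilt is 0
    r = reflect((1.0, 0.0), n)
    cos_back = -r[0]                  # dot(r, (-1, 0)), direction back to sensor
    off_axis = math.degrees(math.acos(max(-1.0, min(1.0, cos_back))))
    return off_axis <= tolerance_deg

# Count how many patch orientations send the signal back to the sensor.
tilts = range(-90, 91)
visible = [t for t in tilts if returns_to_sensor(t)]
print(f"{len(visible)} of {len(tilts)} orientations are visible")
```

In this toy setup the reflected ray swings away by twice the tilt of the surface, so only patches facing almost directly at the sensor are picked up. That is the "thin stripe" in the coffee mug analogy.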

How generative AI fills in the blanks

Here's where generative AI (the same type of technology behind image generators like Midjourney and DALL-E) comes in. The MIT team trained AI models to complete the missing shape information — essentially guessing what the rest of an object looks like based on the small slice that wireless signals can detect.

The clever trick: since no large wireless sensing datasets existed, the researchers adapted existing computer vision datasets (collections of regular photos) to simulate how wireless reflections would look. They essentially taught the AI the physics of radio waves using modified photos.
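The article does not spell out how the datasets were modified, so the following Python sketch is only one way to picture the idea, under stated assumptions. It takes a complete shape (here a circle standing in for a mug's cross-section), keeps only the points that would bounce a signal back to a sensor, and pairs that partial view with the full shape as a training example. The helper names `circle_points` and `specular_subset`, the sensor position, and the 10-degree cutoff are all hypothetical.

```python
import math

def circle_points(radius=1.0, count=360):
    """Sample a circle outline centered at the origin.

    Returns (point, outward_normal) pairs. For a circle, the outward
    normal is simply the direction from the center to the point.
    """
    samples = []
    for i in range(count):
        a = 2 * math.pi * i / count
        normal = (math.cos(a), math.sin(a))
        point = (radius * normal[0], radius * normal[1])
        samples.append((point, normal))
    return samples

def specular_subset(samples, sensor, max_tilt_deg=10.0):
    """Keep points whose normal points nearly straight at the sensor.

    max_tilt_deg is an assumed cutoff, not a measured property.
    """
    cos_limit = math.cos(math.radians(max_tilt_deg))
    kept = []
    for point, normal in samples:
        to_sensor = (sensor[0] - point[0], sensor[1] - point[1])
        length = math.hypot(to_sensor[0], to_sensor[1])
        cos_tilt = (to_sensor[0] * normal[0] + to_sensor[1] * normal[1]) / length
        if cos_tilt >= cos_limit:
            kept.append(point)
    return kept

full_shape = circle_points()
partial_view = specular_subset(full_shape, sensor=(5.0, 0.0))

# One simulated training example: partial wireless-style view -> full shape.
training_pair = (partial_view, [point for point, _ in full_shape])
print(f"partial view keeps {len(partial_view)} of {len(full_shape)} points")
```

Repeating this over many shapes and sensor positions would give a generative model plenty of "small slice in, whole object out" examples without collecting new wireless measurements.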

Nearly 20% more accurate — and twice as precise for rooms

The team built two systems that both dramatically outperform existing approaches:

• Wave-Former (for single objects): Achieved nearly 20% better accuracy at reconstructing 3D shapes of ~70 everyday objects hidden behind walls, plastic, and cardboard

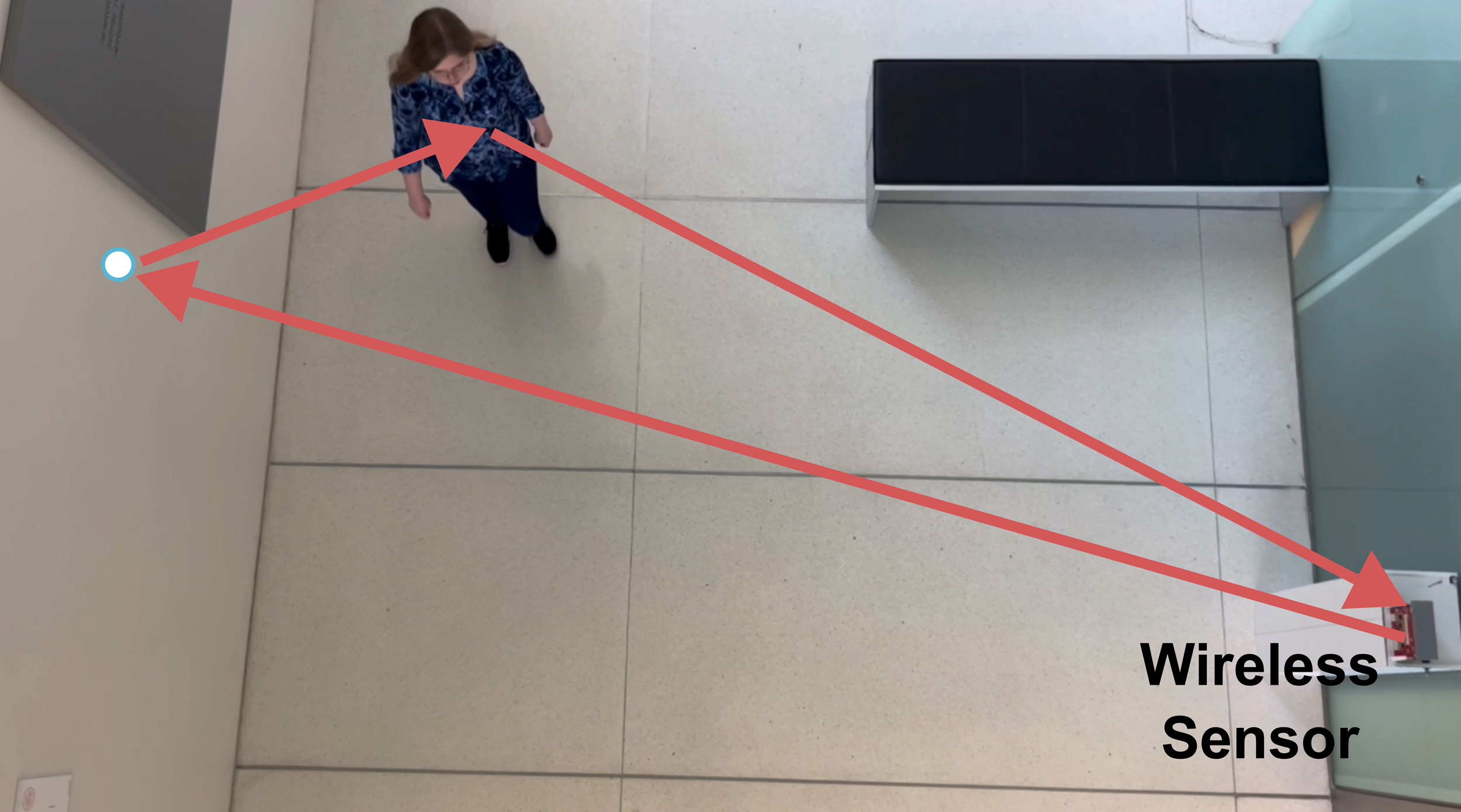

• RISE (for entire rooms): Generated room reconstructions that were approximately twice as precise as current techniques, using 100+ human movement trajectories to map indoor scenes

As lead researcher Fadel Adib puts it: "We are using AI to finally unlock wireless vision."

Real-world uses that could arrive soon

Warehouse and logistics: A robot could scan a sealed package and verify its contents without opening it — checking that the right items were packed before shipping.

Smart homes without cameras: Instead of security cameras (which raise privacy concerns), a Wi-Fi-based system could understand who's in a room and what they're doing without recording any video. The system "sees" only wireless reflections, not actual images of people.

Search and rescue: First responders could detect people trapped behind rubble or walls without line-of-sight access.

From MIT's Signal Kinetics lab

The research comes from associate professor Fadel Adib's Signal Kinetics group at MIT's Media Lab, with researchers Laura Dodds, Kaichen Zhou, and others contributing. The team has been working on wireless sensing for years, but generative AI gave them the breakthrough needed to go from partial reflections to full 3D reconstructions.
