This AI agent runs on your laptop — sandboxed in 2 lines of code

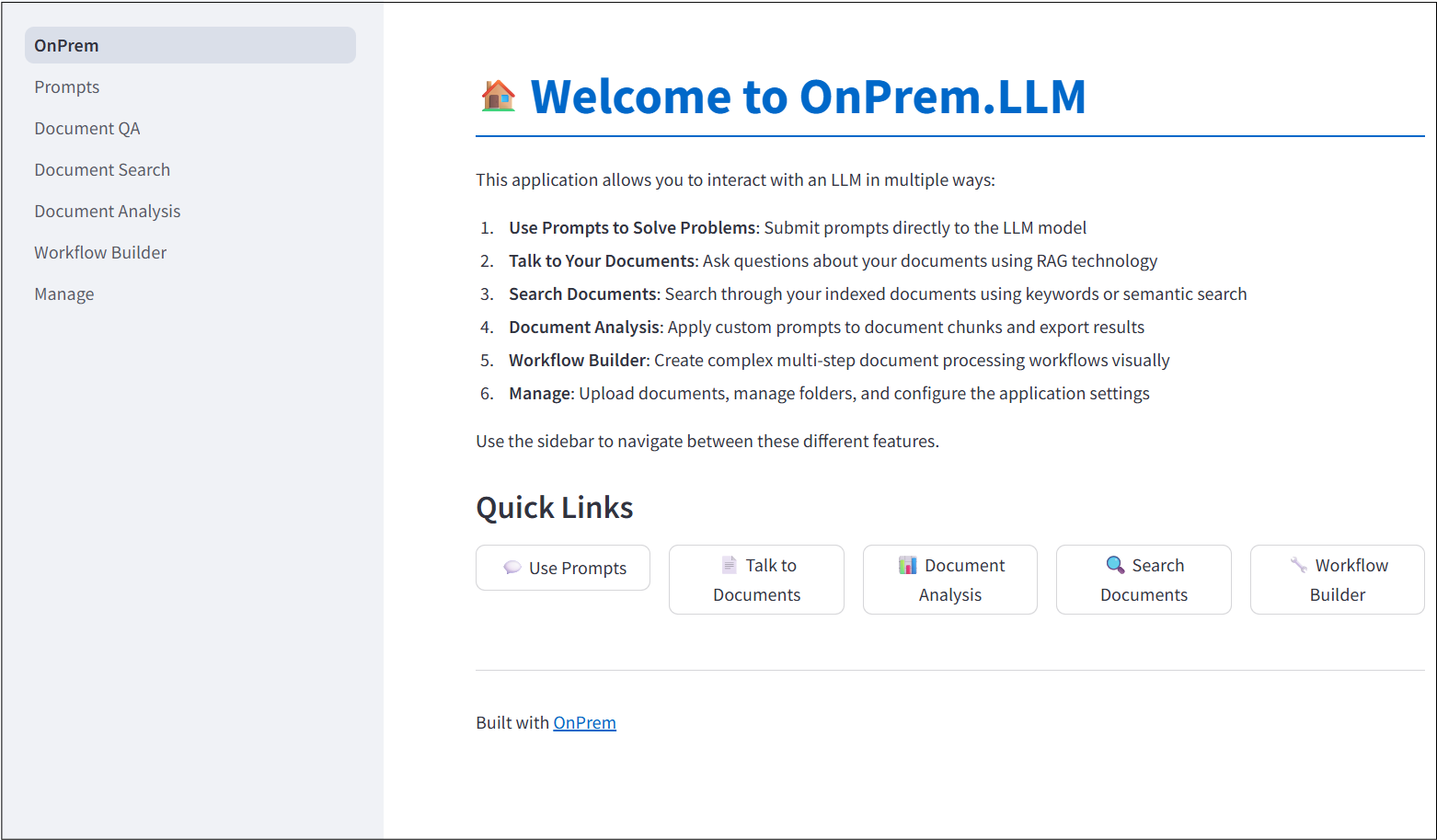

OnPrem.LLM v0.22.0 lets you launch autonomous AI agents in a secure sandbox on your own computer. No cloud, no data leaks — just pip install and go.

What if you could launch an AI agent that reads files, writes code, searches the web, and runs shell commands, all from your own laptop and, when paired with a local model, without sending your data to a cloud AI provider? That's exactly what OnPrem.LLM's new v0.22.0 release makes possible.

The update introduces AgentExecutor, a feature that lets you spin up a fully autonomous AI agent inside a secure sandbox (an isolated container that prevents the agent from touching anything outside its workspace). Two lines of Python code. That's it.

What Can This Agent Actually Do?

The agent comes with 9 built-in tools: it can read and edit files, search through code, find documents, run shell commands, search the web, and fetch web pages. Think of it like giving an AI assistant full access to your computer, except it's locked inside a safe room where it can't touch anything outside its workspace.

Examples of what it can produce:

- A complete Python calculator app with tests — built and tested in one go

- A quantum computing research report — auto-searched and formatted as a document

- Sales data analysis with charts — from raw CSV to visualized insights

- Stock market analysis — using custom financial tools to fetch prices and calculate risk

The Safety Net That Makes It Different

Many AI coding agents give the AI free rein over your system. OnPrem takes a different approach with three layers of protection:

1. Shell lockdown — flip one switch (disable_shell=True) and the agent can't run any system commands

2. Directory jail — restrict the agent to only access files in a specific folder

3. Container sandbox — run the entire agent inside a disposable Docker container that gets destroyed when it's done
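To make the directory-jail idea concrete, here is a minimal sketch of how a path check of this kind can work in plain Python. It is an illustration only, not OnPrem.LLM's implementation; the helper name `is_inside_workspace` and the example paths are hypothetical.

```python
from pathlib import Path


def is_inside_workspace(workspace: str, requested: str) -> bool:
    """Return True only if `requested` resolves to a location inside `workspace`.

    Hypothetical helper for illustration; not part of OnPrem.LLM.
    """
    root = Path(workspace).resolve()
    # Join onto the workspace, then resolve so "../" segments and symlinks
    # are normalized before the comparison. An absolute `requested` path
    # replaces the workspace prefix entirely and is therefore rejected below.
    target = (root / requested).resolve()
    return target == root or root in target.parents


if __name__ == "__main__":
    print(is_inside_workspace("/tmp/agent_work", "notes/todo.txt"))
    print(is_inside_workspace("/tmp/agent_work", "../../etc/passwd"))
```

The key design choice is to resolve the path before comparing it, so a request like `../../etc/passwd` is judged by where it actually lands rather than by how it is spelled.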

You also get full transparency: every thinking step, every tool call, and every cost calculation is logged so you can see exactly what the agent did and why.
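As a rough picture of what logging every tool call can look like, the sketch below wraps a tool function so that each call is recorded. This is a generic Python pattern, not OnPrem.LLM's logging code; `audit_log`, `logged_tool`, and the stand-in `word_count` tool are all hypothetical names.

```python
import functools
import time

# Hypothetical in-memory log; a real agent might write these records to a file.
audit_log = []


def logged_tool(func):
    """Record the name, arguments, result, and duration of every call to `func`."""
    @functools.wraps(func)
    def wrapper(*args, **kwargs):
        start = time.perf_counter()
        result = func(*args, **kwargs)
        audit_log.append({
            "tool": func.__name__,
            "args": args,
            "kwargs": kwargs,
            "result": result,
            "seconds": time.perf_counter() - start,
        })
        return result
    return wrapper


@logged_tool
def word_count(text: str) -> int:
    """Stand-in tool for the example: count whitespace-separated words."""
    return len(text.split())


if __name__ == "__main__":
    word_count("sandboxed agents are easier to audit")
    for entry in audit_log:
        print(entry["tool"], entry["args"], "->", entry["result"])
```

Because the record is written by the wrapper rather than by the tool itself, every tool gets the same audit trail without changing its own code.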

Works With Almost Any AI Model

The agent isn't locked to one AI provider. It works with Claude, GPT-5, Gemini, and local models through Ollama — so you can choose between cloud power and total privacy. Running a local model means your data never leaves your machine.
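The model names in this article's examples follow a provider/model pattern, such as 'anthropic/claude-sonnet-4-5' and 'ollama/llama3'. The sketch below shows one way to tell at a glance whether a given choice keeps inference on your machine. It assumes that the 'ollama' prefix means a local model, as the article describes; `describe_model` and `LOCAL_PROVIDERS` are hypothetical and not part of OnPrem.LLM.

```python
# Assumption for this sketch: these provider prefixes run on your own machine.
LOCAL_PROVIDERS = {"ollama"}


def describe_model(model: str) -> dict:
    """Split a 'provider/name' string and flag whether the provider is local.

    Hypothetical helper for illustration; not part of OnPrem.LLM.
    """
    provider, _, name = model.partition("/")
    return {
        "provider": provider,
        "name": name,
        "local": provider in LOCAL_PROVIDERS,
    }


if __name__ == "__main__":
    for model in ("anthropic/claude-sonnet-4-5", "ollama/llama3"):
        print(describe_model(model))
```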

Try It Right Now

If you have Python installed, you can have an AI agent running in under a minute:

pip install "onprem[agent]"

# Then in Python:

from onprem import AgentExecutor

agent = AgentExecutor(model='anthropic/claude-sonnet-4-5', sandbox=True)

agent.execute('Create a Python script that analyzes my sales data')

Want it fully local with no cloud at all? Use Ollama instead:

agent = AgentExecutor(model='ollama/llama3', sandbox=True)

agent.execute('Build me a budget tracker app')

Who Should Care About This

- Freelancers and solo developers: let the agent handle boilerplate code while you focus on design decisions.

- Data analysts: hand off CSV crunching and chart generation to the agent.

- Anyone handling sensitive data (lawyers, healthcare workers, financial analysts): the local-first approach means client data stays on your machine.

OnPrem.LLM has 832 GitHub stars and is actively maintained. The v0.22.0 release dropped on March 17, 2026, and it's already trending on Hacker News with developers praising the sandboxed execution model.

The full project is open-source under the Apache 2.0 license on GitHub.
