A developer argues your AI 'spec' is just code in disguise

A viral blog post argues that writing a spec detailed enough for an AI coding agent is no easier than writing the code itself, and it points to OpenAI's own Symphony project as evidence.

A blog post titled "A Sufficiently Detailed Spec is Code" is tearing through Hacker News right now, and it challenges one of the biggest promises of the AI coding revolution: that you can skip writing code by writing specifications instead.

The argument is simple and devastating: when you make a spec detailed enough for an AI to actually build working software from it, that spec basically becomes code. You haven't saved any work — you've just disguised it.

OpenAI's Symphony: a spec that's secretly code

Author Gabriella Gonzalez zeroes in on OpenAI's Symphony project — a workflow automation system that claims to be built entirely from a specification document (SPEC.md). The pitch: write what you want, and let AI agents build it.

But Gonzalez found that Symphony's "spec" is packed with database schema dumps, pseudocode, actual algorithms, and explicit "cheat sheets" designed to help AI models generate the implementation. In other words, the spec IS code — just written in prose instead of a programming language.

The numbers back this up: Symphony's SPEC.md is already one-sixth the size of the actual Elixir implementation, so it is far from a short summary of intent. By Gonzalez's argument, making it precise enough for an AI to build from reliably would mean expanding it until it is roughly the same size as the code itself.

Why this matters for anyone using AI to build software

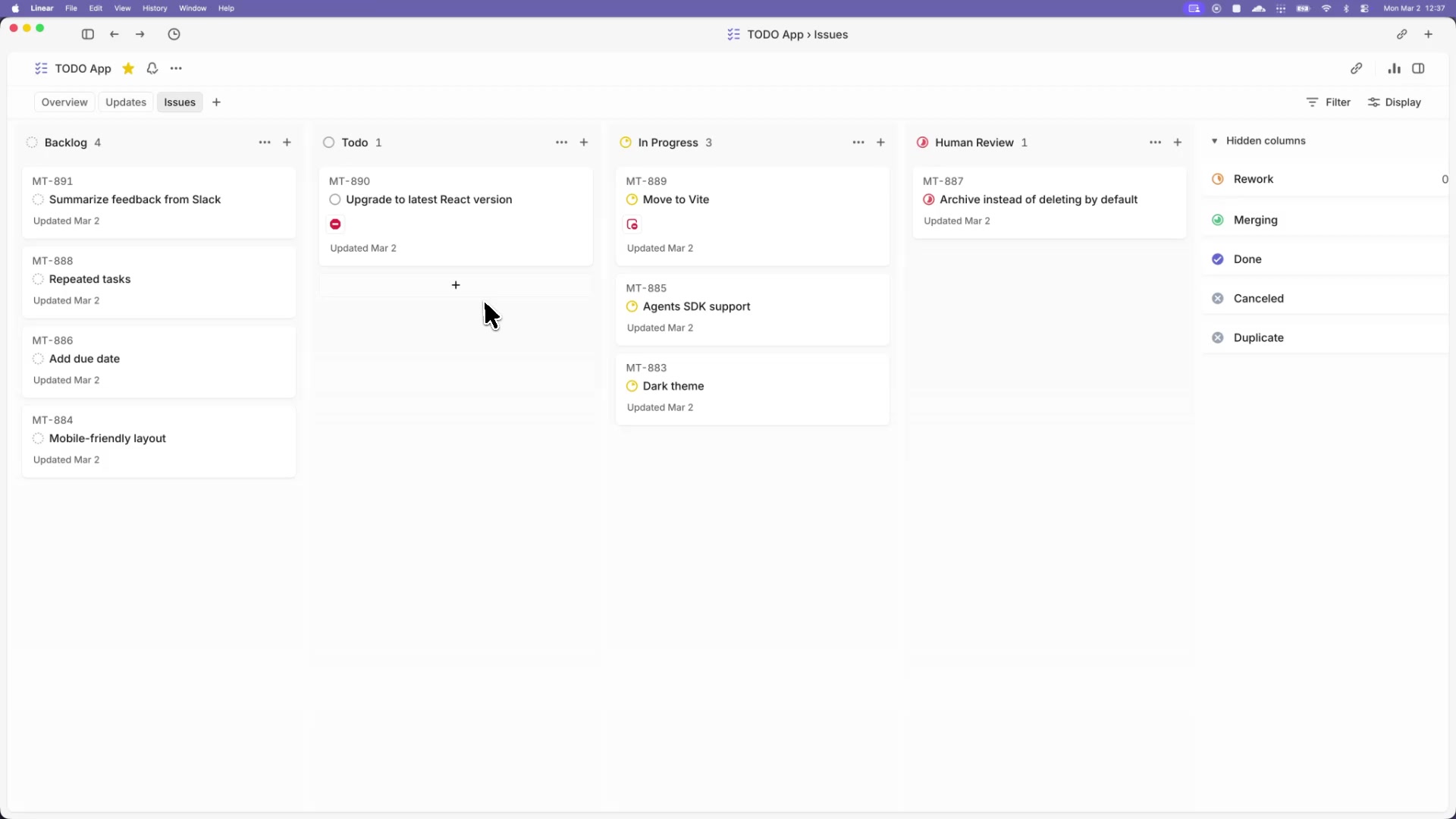

If you've tried using tools like Claude Code, Cursor, or ChatGPT to build an app from a written description, you've probably hit this wall yourself. The AI builds something, but it's wrong, it silently fails, or it misses a critical detail.

Gonzalez tested this directly: she gave Claude Code the Symphony spec and asked it to build the project in Haskell. The result? Multiple bugs, silent failures, and tasks that never completed, with no error messages to signal that anything had gone wrong.

The core insight: when you tell an AI "build me a project management tool," it fills in thousands of ambiguous details on its own, and many of those guesses won't match what you had in mind. Making the description precise enough to rule out the wrong guesses means spelling out every detail, which is essentially programming.
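A small hypothetical illustration (invented for this article, not taken from Gonzalez's post) shows the problem in miniature. Take a one-line spec, "return the users sorted by name," and two Python functions that both plausibly satisfy it. They disagree on the output, and the only way to say which one you meant is to write down the sort key, which is code.

```python
# Hypothetical one-line spec: "Return the users sorted by name."
users = ["bob", "Alice", "carol", "Bob"]

def sort_users_a(names):
    # Reading 1: plain string order, where uppercase letters sort before lowercase
    return sorted(names)

def sort_users_b(names):
    # Reading 2: case-insensitive order; equal keys keep their input order
    # because Python's sort is stable
    return sorted(names, key=str.casefold)

print(sort_users_a(users))  # ['Alice', 'Bob', 'bob', 'carol']
print(sort_users_b(users))  # ['Alice', 'bob', 'Bob', 'carol']
```

Neither function violates the sentence as written. An agent will pick one of them (or a third reading, such as sorting by last name), and you only find out which by reading the code.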

The Dijkstra parallel: precision requires formalism

Gonzalez invokes computer science legend Edsger Dijkstra, who observed that human progress in mathematics only took off when people stopped describing ideas in everyday language and switched to formal symbols. The reason? Natural language is too vague for precision work.

The same applies to AI specs. You can write "handle user authentication" in a document, but there are hundreds of ways to implement it. An AI will pick one — probably not the one you wanted. The only way to guarantee the right outcome is to specify it with the same precision as code, at which point... you're coding.
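As a hypothetical sketch, consider one small slice of "handle user authentication": "lock the account after repeated failed logins." The rule and every number below are invented for illustration. Writing it down precisely forces decisions the sentence never made: how many failures, over what time window, whether a success resets the count, and how long the lock lasts. Once those are pinned down, the result is already a program.

```python
from dataclasses import dataclass, field

# Hypothetical policy values: the one-line prose spec never said what these should be.
MAX_FAILURES = 5          # failures allowed before the account locks
WINDOW_SECONDS = 15 * 60  # only failures within this sliding window count
LOCK_SECONDS = 30 * 60    # how long a lock lasts

@dataclass
class Account:
    failures: list[float] = field(default_factory=list)  # timestamps of recent failed attempts
    locked_until: float = 0.0

def record_login(account: Account, success: bool, now: float) -> bool:
    """Record one login attempt at time `now`; return True only if the login is accepted."""
    if now < account.locked_until:
        return False  # decision: attempts during a lock are rejected and not counted
    if success:
        account.failures.clear()  # decision: a successful login resets the failure count
        return True
    # decision: forget failures older than the window, then count this one
    account.failures = [t for t in account.failures if now - t < WINDOW_SECONDS]
    account.failures.append(now)
    if len(account.failures) >= MAX_FAILURES:
        account.locked_until = now + LOCK_SECONDS  # decision: lock starts at the last failure
        account.failures.clear()
    return False

acct = Account()
for t in range(5):
    record_login(acct, success=False, now=float(t))
print(acct.locked_until)  # 1804.0
```

Each line marked "decision" is a place where two reasonable readers of the one-sentence spec could disagree, and an AI agent would have to guess.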

The Hacker News debate: can smarter AI fix this?

The Hacker News discussion (70+ points, active debate) reveals a split in the developer community:

- The optimists argue that as AI models get smarter, they'll handle vague specs better — you won't need to be precise because the AI will figure it out

- The skeptics point out that even mature specifications like YAML (a data format with a long, highly detailed specification) still have implementations that don't fully conform

- The pragmatists suggest a new dialect — "LLMSpeak" — will emerge, a language optimized for communicating precisely with AI, which would essentially become a new programming language

What actually works right now

The takeaway isn't that AI coding tools are useless — they're genuinely powerful. But the "just write a spec and let AI do the rest" promise is oversold. Here's what experienced builders recommend instead:

- Use AI for small, well-defined tasks — generating a function, writing tests, refactoring a file — rather than building entire projects from a description

- Review every output — AI-generated code can look correct while silently failing. Gonzalez found bugs that produced no error messages at all

- Think of specs as planning tools, not shortcuts — writing a spec helps you think through a problem, but expecting an AI to flawlessly execute it is like expecting a contractor to build a house from a napkin sketch

- Invest in context engineering: giving AI coding agents the right examples, rules, and patterns often produces better results than simply writing longer specs
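The "silent failure" problem is easy to reproduce in miniature. The hypothetical function below (an illustration, not code from Symphony or from Gonzalez's experiment) looks reasonable at a glance, but its broad `except` swallows errors, so a failing task simply disappears from the results with no message. A one-line check, the kind of small, well-defined test worth writing or reviewing, exposes it.

```python
def run_tasks(tasks):
    """Run each task and collect its result. Looks fine, but hides failures."""
    results = []
    for task in tasks:
        try:
            results.append(task())
        except Exception:
            pass  # the bug: a failing task vanishes with no log and no error
    return results

def run_tasks_checked(tasks):
    """Same job, but failures are raised instead of silently dropped."""
    results, errors = [], []
    for task in tasks:
        try:
            results.append(task())
        except Exception as exc:
            errors.append(exc)
    if errors:
        raise RuntimeError(f"{len(errors)} of {len(tasks)} tasks failed") from errors[0]
    return results

tasks = [lambda: 1, lambda: 1 / 0, lambda: 3]

# Review check: every task should produce a result.
print(len(run_tasks(tasks)) == len(tasks))  # False: one task was silently dropped
```

Calling `run_tasks_checked(tasks)` instead raises a `RuntimeError` reporting that 1 of 3 tasks failed, which is the kind of signal Gonzalez found missing.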

The bigger picture: 'garbage in, garbage out' still applies

As AI coding tools become more popular — especially with non-programmers picking them up for the first time — this message is critical. The fantasy of "just describe what you want and AI builds it" will leave many people frustrated and confused when their projects don't work.

Gonzalez puts it bluntly: "Garbage in, garbage out." If the specification is vague, the AI output will be unpredictable. If the specification is precise, you've done the hard work that coding requires — just in a different format.

The honest truth about AI coding in 2026? It's a powerful assistant, not a replacement for thinking through what you actually want to build.
