Karpathy's AI ran 700 experiments in 8 hours — for $309

Researchers scaled Andrej Karpathy's Autoresearch from 1 GPU to 16 — letting an AI agent run 700 experiments overnight for under $310 in cloud costs.

What happens when you give an AI research agent not one GPU, but 16 at once? It runs 700 machine-learning experiments in 8 hours, discovers optimization strategies humans didn't suggest, and costs less than a nice dinner. That's the result of a new experiment that scaled Andrej Karpathy's Autoresearch (43,400 GitHub stars) from a single-GPU hobby project into a parallel research machine.

From overnight hobby to research lab

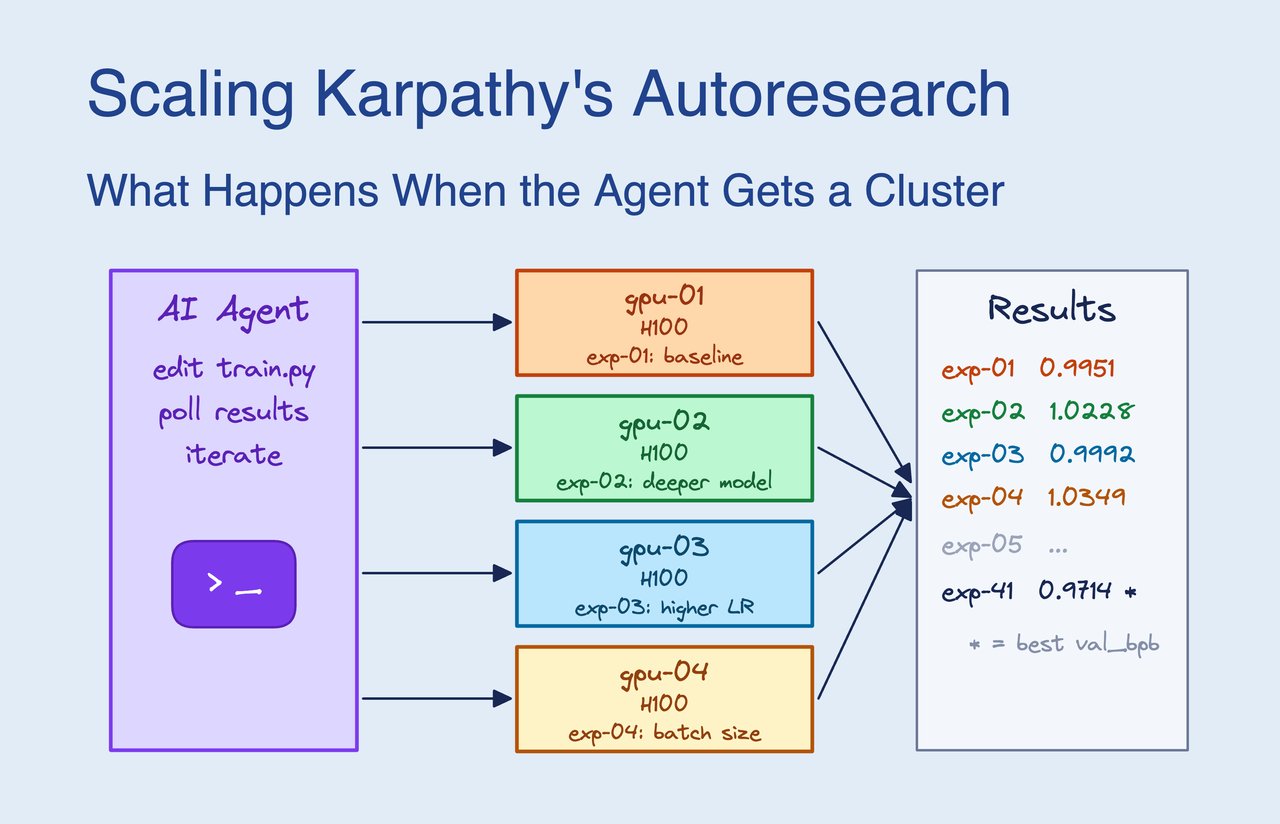

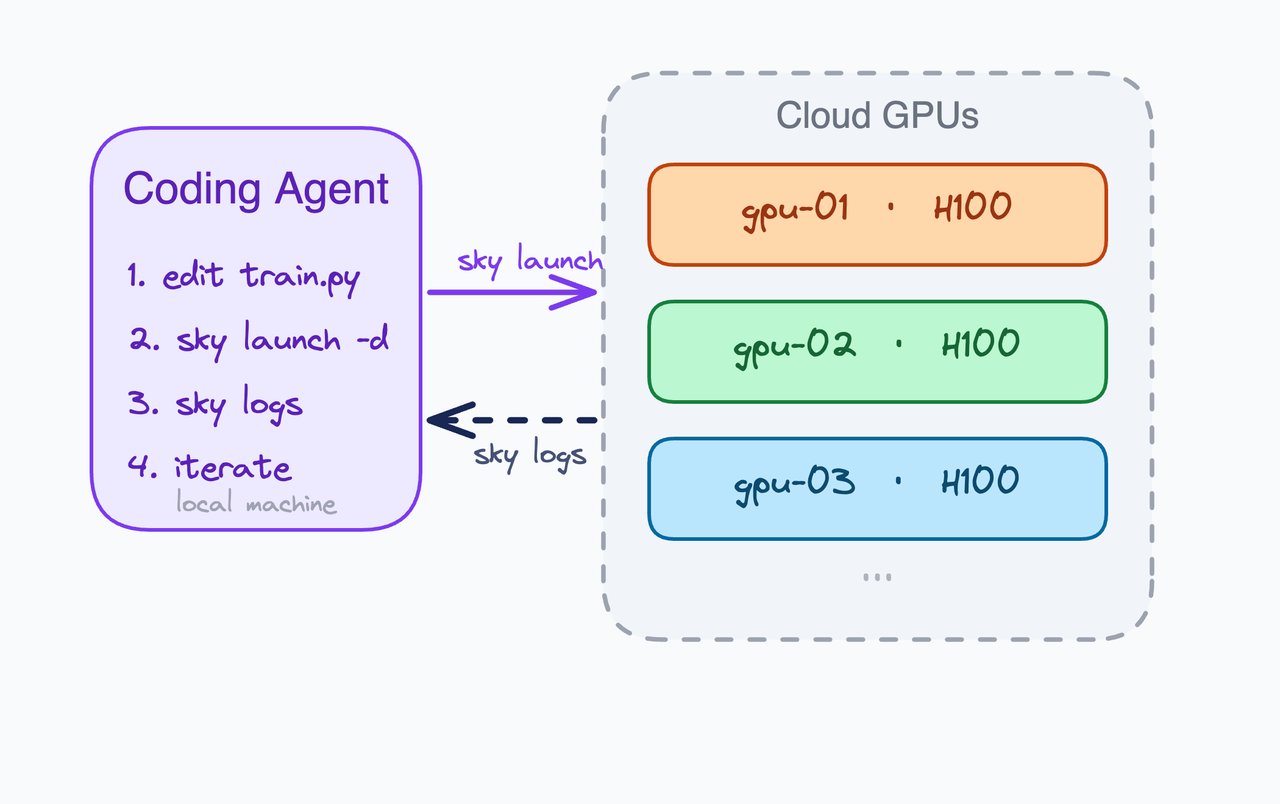

Karpathy released Autoresearch on March 7 — a 630-line Python script that lets an AI agent improve a neural network (a type of AI model) on its own. The agent modifies the training code, runs a 5-minute experiment, checks if results improved, keeps or discards the change, and repeats. On a single GPU, it manages about 10 experiments per hour — roughly 100 overnight.
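The keep-or-discard loop described above can be sketched in a few lines of Python. This is a toy illustration, not Karpathy's script: `propose_change`, `run_experiment`, and `research_loop` are hypothetical names, and the "training run" is a stand-in formula rather than a real 5-minute job.

```python
import random

def propose_change(config, rng):
    """Hypothetical stand-in for the agent editing the training code."""
    candidate = dict(config)
    candidate["lr"] = config["lr"] * rng.choice([0.5, 0.8, 1.25, 2.0])
    return candidate

def run_experiment(config):
    """Hypothetical stand-in for one 5-minute training run.

    Returns a validation score where lower is better (toy objective,
    minimized at lr = 0.01).
    """
    return 1.0 + (config["lr"] - 0.01) ** 2

def research_loop(config, n_experiments, seed=0):
    """Propose a change, run it, keep it only if the score improved, repeat."""
    rng = random.Random(seed)
    best_score = run_experiment(config)
    history = [best_score]
    for _ in range(n_experiments):
        candidate = propose_change(config, rng)
        score = run_experiment(candidate)
        if score < best_score:      # keep the change
            config, best_score = candidate, score
        # otherwise discard the change and continue from the previous best
        history.append(best_score)
    return config, best_score, history

start_config = {"lr": 0.1}
final_config, final_score, history = research_loop(start_config, n_experiments=100)
```

Because a change is only kept when it beats the current best, the best score can never get worse from one experiment to the next. The real agent proposes changes by editing code, which is far richer than nudging one number, but the control flow is the same.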

Researchers at SkyPilot (a cloud infrastructure tool) asked: what if we removed the bottleneck? They connected the same AI agent to 16 GPUs running in parallel: 13 NVIDIA H100s and 3 H200s.

The numbers that matter

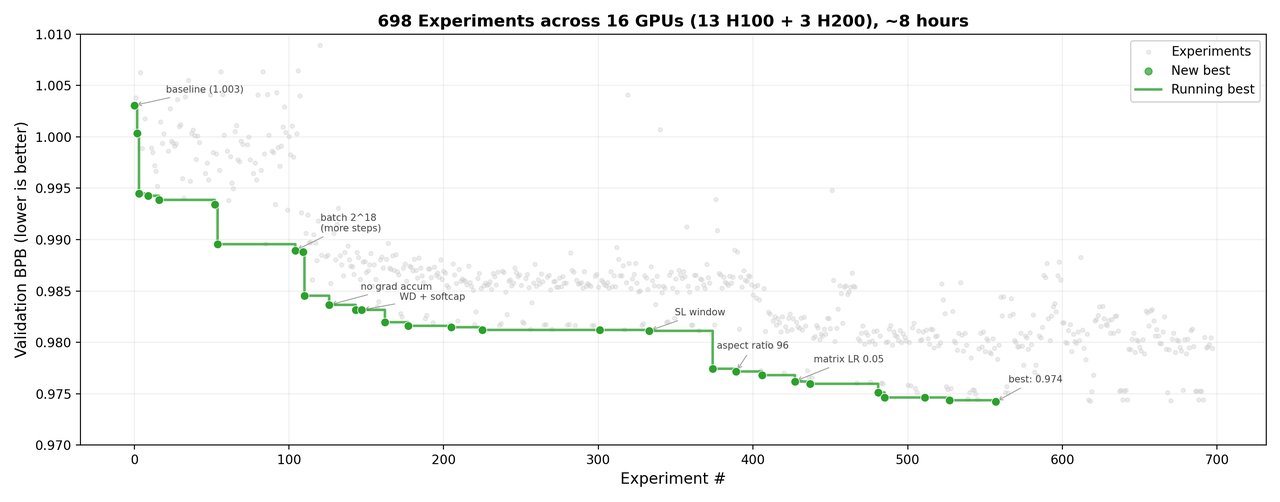

📊 Throughput: ~90 experiments/hour (vs. ~10/hour on one GPU) — a 9x speedup

⏱️ Wall clock: 8 hours of parallel work = 72 hours of sequential work

🧪 Total experiments: ~910 submitted, ~700 with valid results

💰 Total cost: ~$309 ($300 GPU compute + $9 AI API calls)

📈 Model improvement: 2.87% better validation score (from 1.003 to 0.974; lower is better)
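The figures above can be cross-checked with a few lines of arithmetic. The sketch below uses only the rounded numbers quoted in this article; the variable names are illustrative.

```python
# Figures as quoted in the article (all approximate).
single_gpu_rate = 10      # experiments per hour on one GPU
parallel_rate = 90        # experiments per hour on 16 GPUs
wall_clock_hours = 8
gpu_cost, api_cost = 300, 9
submitted, valid = 910, 700
score_before, score_after = 1.003, 0.974

speedup = parallel_rate / single_gpu_rate             # 9x
sequential_hours = wall_clock_hours * speedup         # 72 hours
expected_runs = parallel_rate * wall_clock_hours      # 720, close to the ~700 valid runs
total_cost = gpu_cost + api_cost                      # $309
cost_per_valid = total_cost / valid                   # about $0.44 per valid experiment
cost_per_submitted = total_cost / submitted           # about $0.34 per submitted experiment
improvement_pct = (score_before - score_after) / score_before * 100

print(f"speedup: {speedup:.0f}x, sequential equivalent: {sequential_hours:.0f} h")
print(f"cost per valid experiment: ${cost_per_valid:.2f}")
print(f"relative improvement from rounded scores: {improvement_pct:.2f}%")
```

The rounded endpoints give about 2.89% rather than the reported 2.87%. The small gap is presumably because the reported figure was computed from unrounded scores, though that is an assumption.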

The AI taught itself to use faster hardware

The most surprising finding: the agent independently discovered that different GPU types perform differently. Without being told, it developed a "two-tier strategy" — screening hypotheses on the H100 machines, then promoting winners to the faster H200 machines for confirmation. This is the kind of workflow a human researcher might design, but the AI figured it out on its own.
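A screen-then-confirm workflow of this kind might look like the sketch below. The article does not say how the agent chose which results to promote, so the selection rule (the top few candidates that beat the baseline), the function names, and the toy scores are all assumptions made for illustration.

```python
def two_tier_search(candidates, screen, confirm, baseline, top_k=2):
    """Screen every candidate on the first tier, then re-run the most
    promising ones on the second tier. Scores are lower-is-better.

    Returns (confirmed_score, candidate) for the best confirmed winner,
    or None if nothing beats the baseline after confirmation.
    """
    screened = sorted(((screen(c), c) for c in candidates), key=lambda p: p[0])
    promoted = [c for score, c in screened[:top_k] if score < baseline]
    confirmed = [(confirm(c), c) for c in promoted]
    winners = [(score, c) for score, c in confirmed if score < baseline]
    return min(winners, key=lambda p: p[0]) if winners else None

# Hypothetical stand-ins for running an experiment on each GPU tier.
def screen_on_h100(candidate):
    # Quick first-pass estimate, which may be off from the confirmed value.
    return candidate["true_score"] + candidate["screen_noise"]

def confirm_on_h200(candidate):
    # Confirmation run on the second tier.
    return candidate["true_score"]

candidates = [
    {"name": "wider", "true_score": 0.980, "screen_noise": 0.002},
    {"name": "lucky", "true_score": 1.010, "screen_noise": -0.020},
    {"name": "worse", "true_score": 1.020, "screen_noise": 0.000},
]

result = two_tier_search(candidates, screen_on_h100, confirm_on_h200, baseline=1.003)
```

In this toy run, "lucky" looks good during screening but fails confirmation, so only "wider" survives. That is the point of a second tier: a promising first result is re-checked before the change is kept.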

The agent's research progressed through distinct phases, much as a human researcher's would:

🔹 Phase 1 (experiments 1–200): Broad sweeps of settings — the biggest gains came here (0.022 improvement)

🔹 Phase 2 (200–420): Architecture changes — found that doubling the model's width was optimal

🔹 Phase 3 (420–560): Fine-tuning learning rates

🔹 Phase 4 (560–700): Optimizer tuning — diminishing returns

🔹 Phase 5 (700–910): Marginal gains below 0.0001 per experiment

Why Fortune called it 'a glimpse of where AI is heading'

Fortune magazine described the pattern as "The Karpathy Loop" — an AI that designs its own experiments, runs them, evaluates results, and iterates. The human's role shifts from doing research to defining what "better" means and letting the machine explore.

This isn't theoretical anymore. For $309 and 8 hours, an AI agent did what would take a human researcher weeks of manual experimentation. The model improvement (2.87%) is modest, but the methodology is what matters: autonomous research at scale is now accessible to anyone with a credit card and a cloud account.

Try it yourself

```bash
# Clone Karpathy's autoresearch
git clone https://github.com/karpathy/autoresearch
cd autoresearch

# Run on a single GPU (the simple version)
python run.py

# For multi-GPU scaling with SkyPilot:
pip install skypilot-nightly
sky launch -c ar autoresearch.yaml
```

The single-GPU version runs about 10 experiments per hour (12 is the ceiling with 5-minute runs), so you can leave it overnight and wake up to roughly 100 completed experiments. Scaling to multiple GPUs requires SkyPilot for cloud cluster management, but the core script is just 630 lines of Python.
