MiniMax just released an AI that improves itself

MiniMax M2.7 can run 100+ self-improvement cycles and handle 30-50% of its own development — a first step toward AI that evolves without human help.

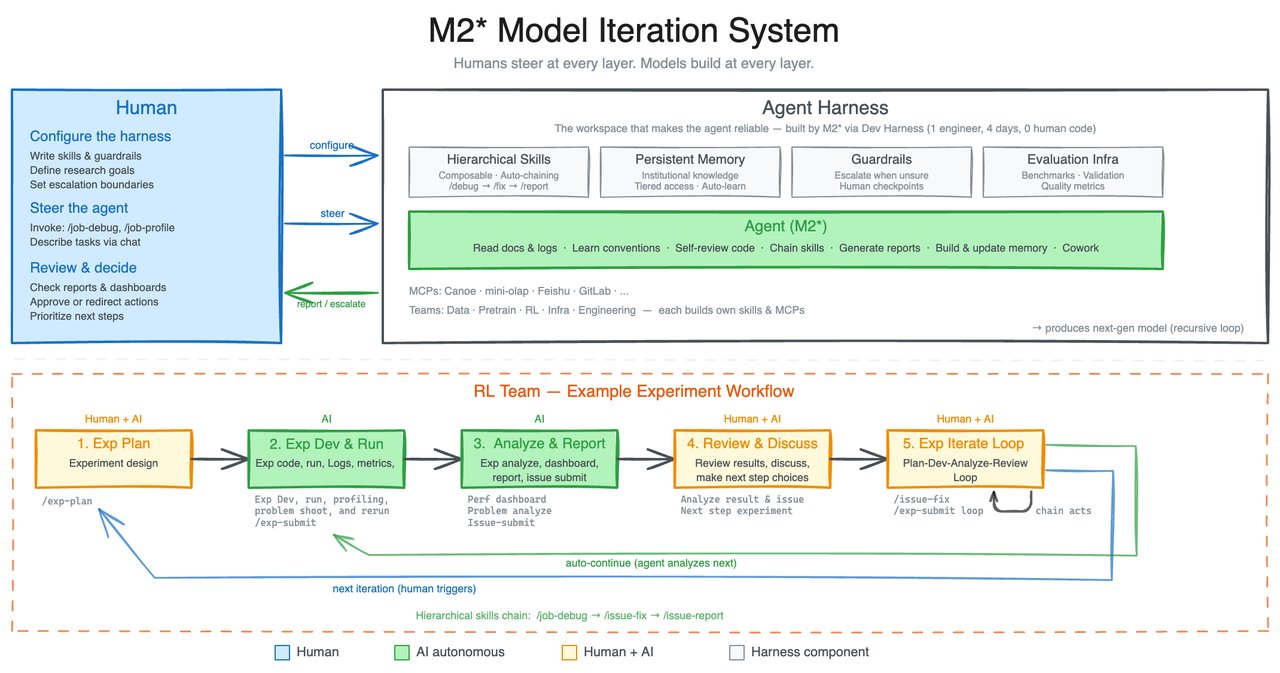

Chinese AI company MiniMax just released M2.7, an AI model that does something no mainstream model has done before: it actively participates in making itself better. Instead of waiting for engineers to train the next version, M2.7 runs its own experiments, finds its own mistakes, and fixes them — over and over.

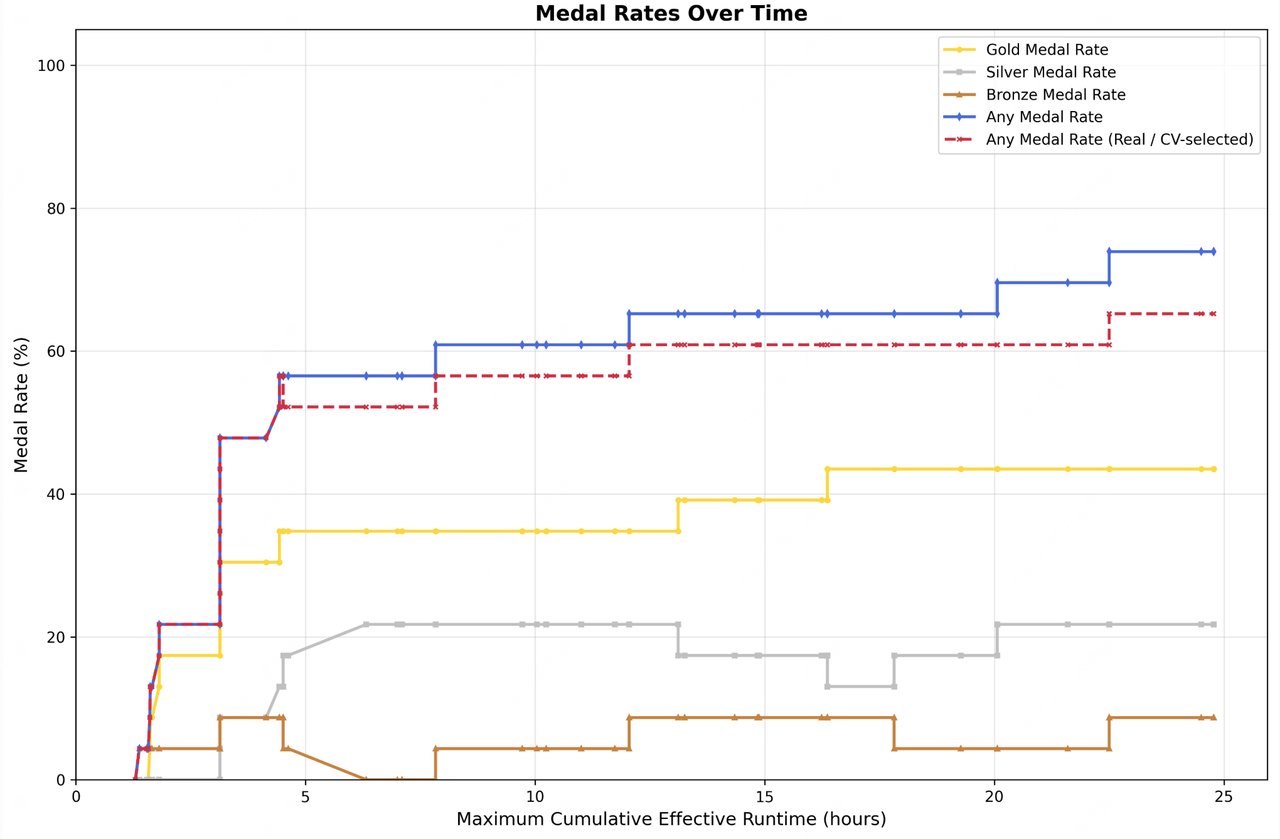

The result? 100+ autonomous improvement cycles, a 30% performance boost on internal tests, and the ability to handle 30–50% of the research workload that used to require a full team of AI engineers.

An AI That Debugs, Tests, and Upgrades Itself

Here's how it works in plain language: most AI models are like a finished textbook — they know what they know, and that's it until someone publishes a new edition. M2.7 is more like a student who reviews their own exam, figures out where they went wrong, rewrites their notes, and retakes the test. Repeatedly.

MiniMax calls this "self-evolution." The model autonomously reads its own logs, identifies failures, adjusts its approach, runs the experiment again, and compares results. In internal testing, it executed this loop over 100 times — finding optimal settings that human researchers hadn't discovered.
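To make the loop concrete, here is a toy sketch of the read-logs, adjust, rerun, compare cycle described above. It is not MiniMax's implementation: `run_experiment`, `propose_adjustment`, the `learning_rate` setting, and the scoring rule are all hypothetical stand-ins invented for illustration.

```python
# Toy illustration only: every name and the scoring rule here are assumptions,
# not anything MiniMax has published.

def run_experiment(settings):
    """Hypothetical experiment: returns (score, log). Score peaks at learning_rate 0.3."""
    lr = settings["learning_rate"]
    score = 1.0 - abs(lr - 0.3)
    log = "overshoot" if lr > 0.3 else "undershoot"
    return score, log

def propose_adjustment(settings, log, step):
    """Read the log of the last accepted run and nudge the setting the other way."""
    adjusted = dict(settings)
    adjusted["learning_rate"] += -step if log == "overshoot" else step
    return adjusted

def self_improve(initial, cycles=100, step=0.05):
    """Run the loop `cycles` times, keeping a change only if the score improves."""
    best = dict(initial)
    best_score, log = run_experiment(best)
    history = [best_score]
    for _ in range(cycles):
        candidate = propose_adjustment(best, log, step)
        score, candidate_log = run_experiment(candidate)
        if score > best_score:
            # Compare results: the new run is better, so adopt it.
            best, best_score, log = candidate, score, candidate_log
        else:
            # No gain: try a smaller adjustment next time.
            step /= 2
        history.append(best_score)
    return best, best_score, history

best, best_score, history = self_improve({"learning_rate": 0.9})
print(best, round(best_score, 4))
```

The key design choice in this sketch is that a change is kept only when the measured score improves, so the best result never gets worse from one cycle to the next.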

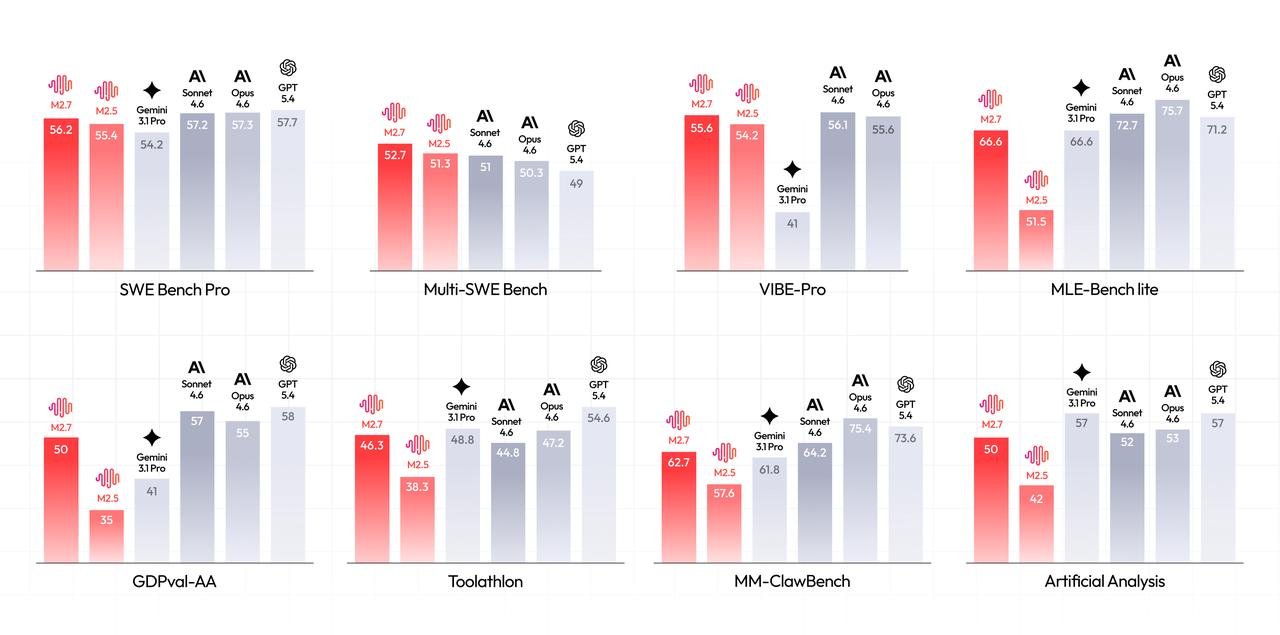

• SWE-Pro: 56.22% — matches GPT-5.3 Codex on real-world coding tasks

• VIBE-Pro: 55.6% — can deliver entire software projects, not just code snippets

• GDPval-AA ELO: 1,495 — highest score among publicly accessible models for office work

• 97% skill adherence — follows complex instructions (2,000+ words) almost perfectly

• MLE Bench Lite: 9 gold, 5 silver, 1 bronze across 22 AI competitions

From Debugging to Documents — What It Can Actually Do

M2.7 isn't just a research curiosity. MiniMax built it to handle real work across three areas:

Software Debugging in Under 3 Minutes

When something breaks in production, M2.7 traces the problem through monitoring data, identifies the root cause, and proposes a fix. MiniMax reports incident recovery times under three minutes, compared with the hours human teams typically take.

Office Productivity at Near-Human Quality

The model generates Word documents, Excel spreadsheets, and PowerPoint presentations from templates, maintaining formatting and context across multiple rounds of edits. It also hallucinates (makes up false information) far less often: its score on MiniMax's accuracy index rose from negative 40 to positive 1.

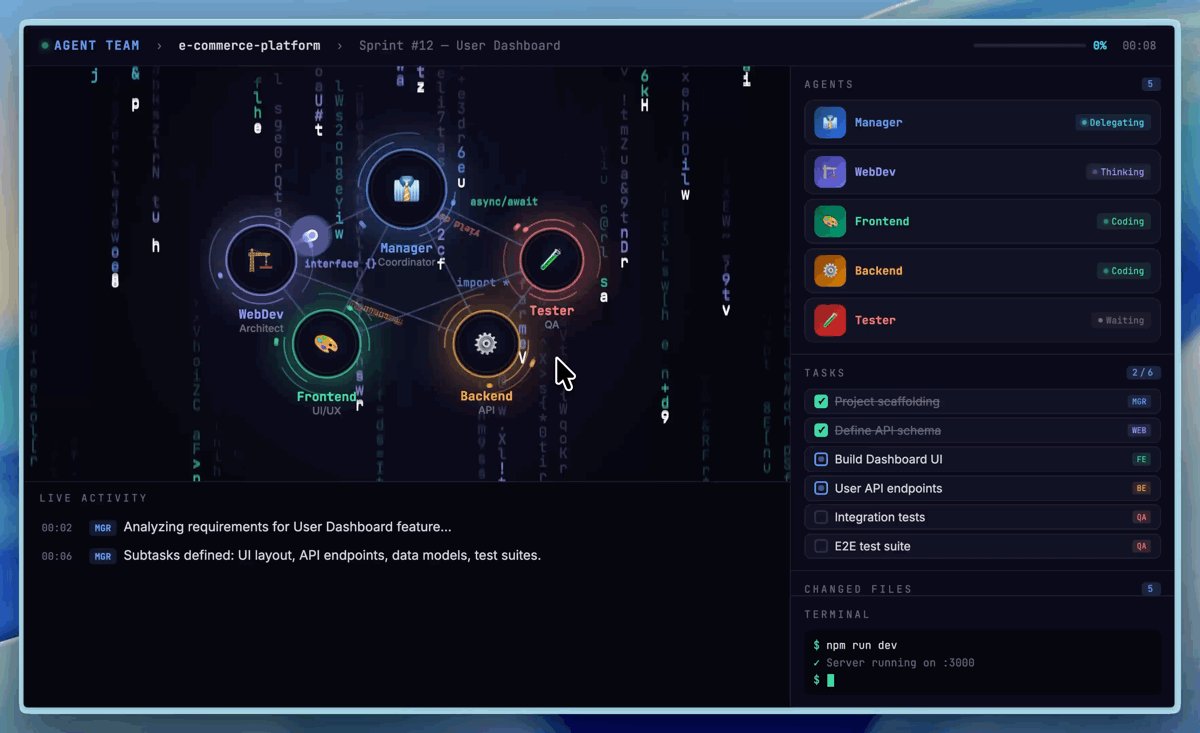

Multi-Agent Teams That Collaborate

M2.7 introduces Agent Teams — where multiple AI agents work together on a single task, each with a specific role. Think of it like an AI project team: one agent researches, another writes, a third reviews. The team maintains 97% adherence to role assignments even across 40+ complex tasks.
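The researcher, writer, reviewer hand-off can be pictured as a simple pipeline. The sketch below is purely illustrative: the `Agent` class, `run_team` function, and the lambda "agents" are hypothetical and do not reflect the actual Agent Teams API, which the article does not describe.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

@dataclass
class Agent:
    role: str                  # the agent's assigned role in the team
    act: Callable[[str], str]  # hypothetical: takes the work so far, returns updated work

def run_team(task: str, agents: List[Agent]) -> Tuple[str, List[Tuple[str, str]]]:
    """Pass the task through each agent in order and record who did what."""
    state = task
    transcript = []
    for agent in agents:
        state = agent.act(state)
        transcript.append((agent.role, state))
    return state, transcript

# Stand-in agents: in a real system each would be a model call, not a lambda.
team = [
    Agent("researcher", lambda s: s + " | notes gathered"),
    Agent("writer", lambda s: s + " | draft written"),
    Agent("reviewer", lambda s: s + " | approved"),
]

result, transcript = run_team("Summarize the earnings report", team)
for role, output in transcript:
    print(f"{role}: {output}")
```

Each agent only sees the work handed to it and appends its own contribution, which is one simple way to keep roles separate.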

How Does It Compare to Claude, GPT, and Gemini?

On raw benchmarks, M2.7 sits just below the top tier. It trails Claude Opus 4.6 (ELO ~1,550 vs. M2.7's 1,495 on office tasks) and GPT-5.4 on the hardest ML benchmarks, but ties with Gemini 3.1 and beats all previous MiniMax models by a wide margin.

The real differentiator isn't raw scores; it's the self-improvement loop. No other commercially available model can autonomously run experiments to upgrade its own performance. If MiniMax scales this approach, future versions could improve much faster than models that depend entirely on human-led training.

Who Should Pay Attention

If you're a developer: M2.7 handles end-to-end project delivery across Web, Android, iOS, and simulation projects. Its SWE-Pro score matches GPT-5.3 Codex, and the multi-agent teams mean you could orchestrate complex coding workflows through the API.

If you work with documents daily: The office productivity scores are the highest among publicly accessible models. The model generates presentation-ready deliverables from earnings reports, research papers, and raw data — with dramatically fewer hallucinations than its predecessor.

If you follow AI trends: Self-evolution is the headline story here. The idea of AI that participates in its own training has been theoretical for years. M2.7 is the first commercial release to demonstrate it working at scale. Whether this becomes mainstream depends on whether the improvement loop compounds reliably — but the early data is striking.

Try It Now

M2.7 is available today through two channels:

Chat interface: Visit agent.minimax.io to try M2.7 directly in your browser.

API access: Developers can integrate via platform.minimax.io with a free tier for testing. Requires Python 3.10+ or Node.js 18+.

MiniMax also open-sourced OpenRoom, a browser-based desktop demo where M2.7 controls apps through natural language — available at openroom.ai and on GitHub.


