AI trained on 10x less data matches full-scale models

Q Labs's NanoGPT Slowrun proves AI can learn 10x more from the same data. Eight researchers hit the milestone in weeks — Karpathy endorsed it.

What if an AI model could learn just as much from one-tenth the training data? A team of eight researchers at Q Labs just proved it's possible — and they did it in a matter of weeks.

Their project, NanoGPT Slowrun, achieved 10x data efficiency: an ensemble of AI models trained on just 100 million text tokens (a relatively tiny dataset) matched the performance of models that typically need 1 billion tokens. That's like a student acing an exam after reading 10 pages instead of 100.

Flipping the AI training playbook

Most AI training today follows a simple recipe: throw more data at bigger models. This approach, known as "scaling laws" (the rules that predict how much data a model needs to perform well), has driven every major AI breakthrough from GPT-3 to Claude.

But Q Labs asked a different question: what happens when data is the bottleneck, not computing power? Instead of limiting time and maximizing data, they gave researchers unlimited computing time and fixed the data at just 100 million tokens from FineWeb (a curated collection of high-quality web text).

The result is a competitive benchmark — like a hackathon where the goal is to squeeze every drop of learning from limited data. And the community response has been explosive.

How they pulled it off

The winning techniques read like a masterclass in creative AI engineering:

Team learning (ensemble training) — Instead of training one model, they trained eight models in sequence. Each new model learned from the previous one, like a relay race where each runner passes knowledge forward.

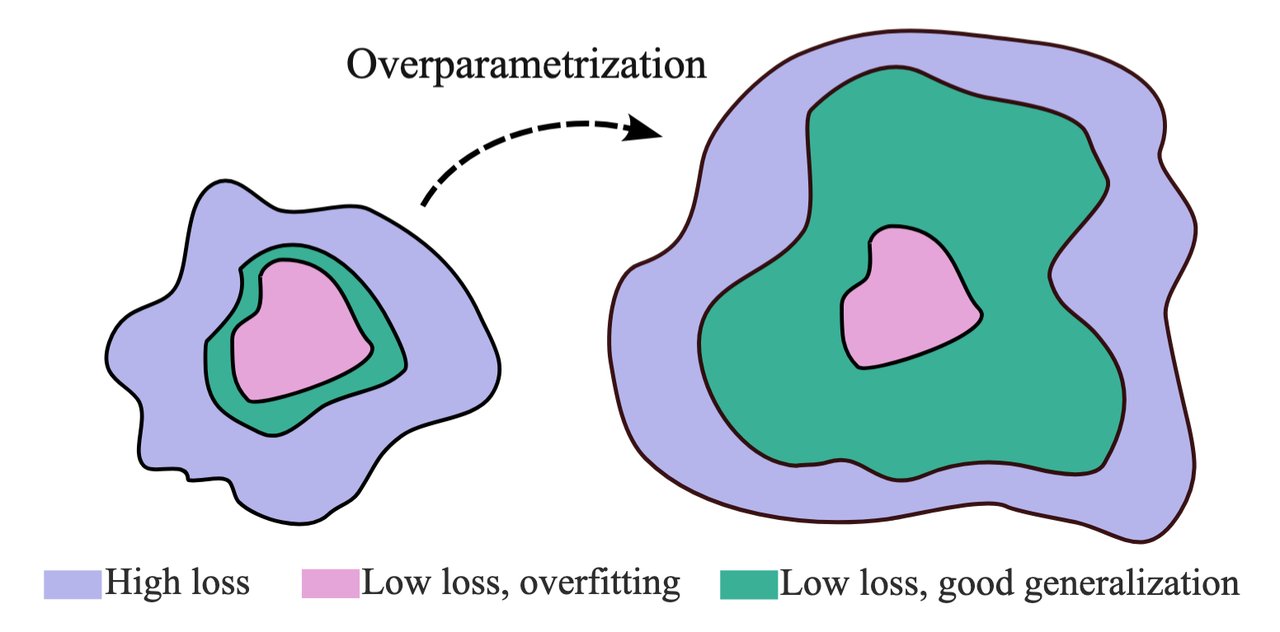

Extreme discipline (aggressive regularization) — They applied 16x the normal amount of "weight decay" (a technique that prevents AI from memorizing training data instead of learning general patterns). Think of it as forcing a student to understand concepts rather than memorize answers.

Looped thinking — Mid-training, certain layers of the model were set to process data four times per pass, letting the AI think harder about each piece of text without needing more parameters (the adjustable values that determine what an AI has learned).

Architectural tricks — Skip connections (shortcuts that let information flow between distant parts of the network), improved activation functions (the math that decides which neurons fire), and weight averaging strategies.

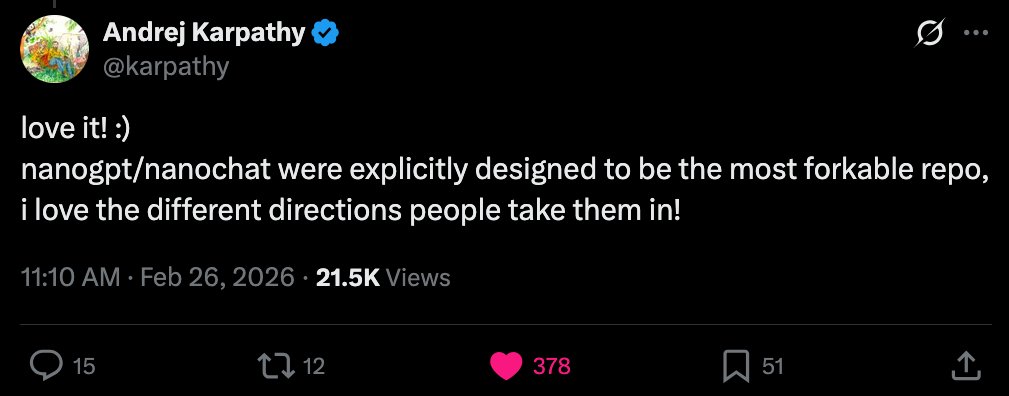

Karpathy noticed — and the community piled in

The project caught the attention of Andrej Karpathy, the former Tesla AI director and OpenAI researcher who created the original NanoGPT speedrun benchmark. His endorsement helped the project gain rapid traction on Hacker News and GitHub.

Within the first week, community contributors pushed data efficiency from 2.4x to 5.5x — more than doubling performance with "relatively straightforward changes," as one Hacker News commenter noted. The jump from 5.5x to 10x followed shortly after.

The project now runs three competitive tracks:

Tiny Track (15 minutes, 8 GPUs) — Best validation loss: 3.365

Limited Track (1 hour, 8 GPUs) — Best validation loss: 3.252

Unlimited Track (no time limit) — Best validation loss: 3.045 (achieved March 19, ~44 hours on 16 GPUs)

Why cheaper AI training matters for everyone

Right now, training a frontier AI model costs hundreds of millions of dollars, largely because of the massive datasets required. If data efficiency can improve by 10x or even 100x, the implications are enormous:

For businesses: Smaller companies could train specialized AI models on their own data — think a law firm training AI on its case history, or a hospital training on its patient records — without needing Google-scale budgets.

For privacy: Less data needed means AI models could be trained on smaller, more carefully curated datasets, reducing the need to scrape the entire internet.

For specialized fields: Industries with limited data (medical imaging, rare languages, scientific research) could build powerful AI tools that were previously impossible.

As one researcher put it: "If high-quality training data becomes the real bottleneck, then the interesting question is how much signal you can extract." Q Labs is answering that question — and the answer is getting bigger every week.

Try it yourself

The full project is open source on GitHub with 320+ stars. You'll need access to GPUs to participate in the benchmark, but the code and techniques are free to study.

git clone https://github.com/qlabs-eng/slowrun.git && cd slowrun

pip install -r requirements.txt

python prepare_data.py

torchrun --standalone --nproc_per_node=8 train.pyQ Labs projects that 100x data efficiency may be within reach with further algorithmic innovation. If they're right, the economics of AI training are about to change fundamentally.

Related Content — Get Started with Easy Claude Code | Free Learning Guides | More AI News

Sources

Stay updated on AI news

Simple explanations of the latest AI developments