The AI agent protocol 50+ companies just adopted has zero security

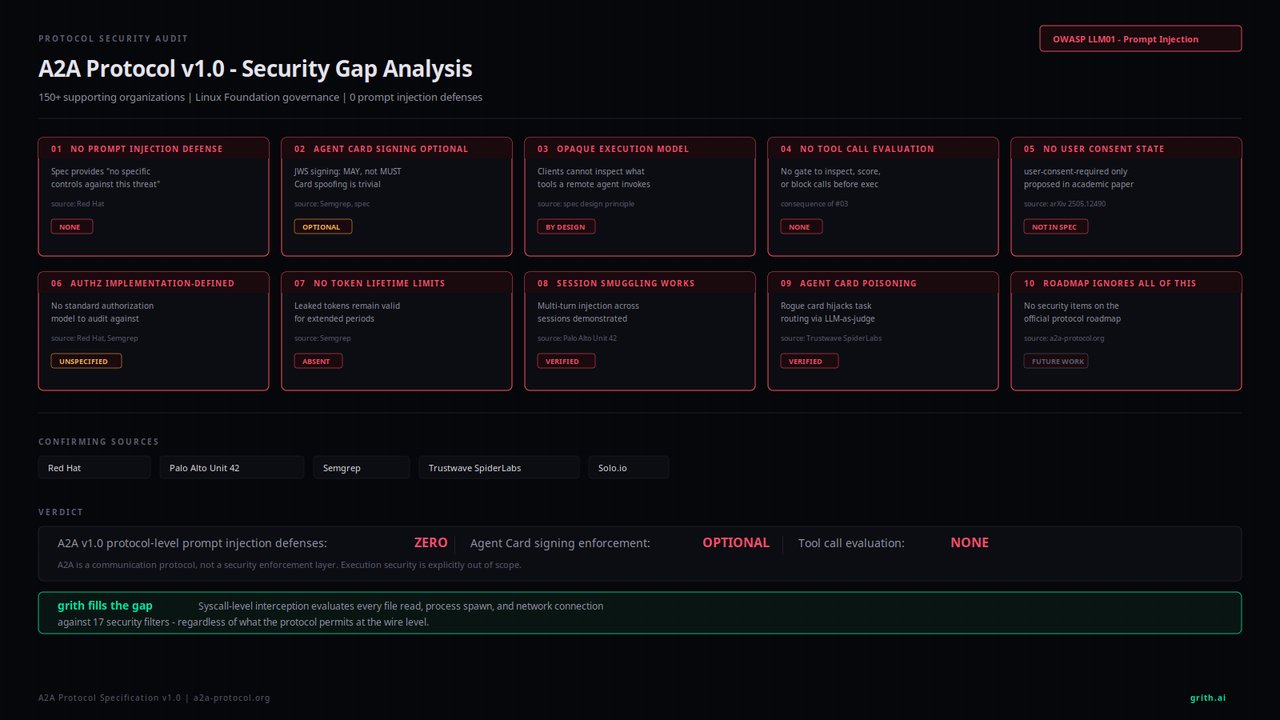

Security researchers found 10 critical flaws in A2A — the protocol AI agents use to talk to each other. Red Hat, Palo Alto, and Semgrep confirmed it.

The protocol that lets AI agents communicate with each other — used by AWS, Microsoft, Salesforce, and 50+ other companies — just failed its first major security review. Researchers at Grith documented 10 specific security gaps in A2A v1.0, and every finding was independently confirmed by Red Hat, Palo Alto Unit 42, Semgrep, and Trustwave SpiderLabs.

A2A (Agent-to-Agent) is the industry standard that lets AI agents discover, negotiate with, and hand off tasks to other AI agents. Think of it as the phone system for AI — it lets one AI call another. It reached version 1.0 under the Linux Foundation, with backing from some of the biggest names in tech.

What went wrong — in plain English

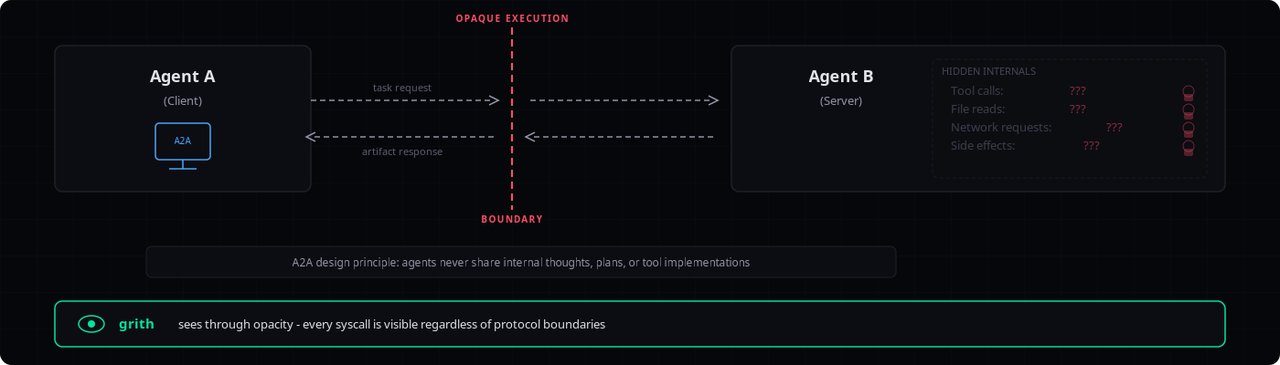

The core problem: A2A handles message delivery between AI agents, but it deliberately does nothing to check what those agents actually do once they receive a message. Red Hat confirmed the protocol "provides no specific controls" against prompt injection — the #1 threat in OWASP's Top 10 for AI applications.

Here's what that means in practice: a rogue AI agent can trick a legitimate one into doing something harmful — buying stocks, leaking personal data, running unauthorized code — and the protocol has no way to detect or stop it.

The 10 gaps that keep security teams up at night

The most alarming findings:

1. Zero prompt injection defense — Palo Alto Unit 42 built a working attack that successfully hijacked an agent's behavior

2. Agent identity cards are optional to sign — attackers can impersonate any agent because verification is "MAY" not "MUST"

3. Opaque execution — when your agent delegates a task, it literally cannot see what the other agent does with it

4. No user consent for sensitive actions — there's no "are you sure?" state for payments or data access

5. Stolen access tokens can last indefinitely — Semgrep found A2A doesn't enforce short-lived tokens

The remaining five gaps are equally concerning: no tool call inspection before remote execution, authorization left entirely to each company to figure out, session smuggling attacks (where hidden instructions persist across conversations), agent card poisoning (where fake agents claim inflated capabilities to intercept tasks), and a roadmap that doesn't even mention fixing authorization or prompt injection.

A live proof-of-concept attack already exists

Palo Alto's Unit 42 team didn't just theorize — they built a working attack. Their proof-of-concept demonstrated a multi-turn session smuggling attack where a malicious agent injected hidden instructions across conversations. The result? Unauthorized stock purchases and system data leaks, all invisible to the end user.

Trustwave SpiderLabs showed another attack vector: a compromised agent crafts an inflated description of its abilities, and when an AI system uses an LLM to pick which agent handles a task, the poisoned description wins every time. Basic prompt injection in agent descriptions was enough to hijack task routing.

Who should care about this

If you build or use AI agent workflows — whether through Claude, ChatGPT, or any multi-agent system — this matters. A2A is becoming the default way AI agents talk to each other. The protocol handles plumbing, not security, which means every team deploying AI agents needs to build their own defenses.

If you're a business adopting AI agents: Ask your vendor how they handle agent-to-agent security. If the answer is "A2A takes care of it," that's a red flag — because A2A explicitly does not.

If you're an everyday AI user: This is why AI agents can't yet be trusted to autonomously handle your money, access your accounts, or make decisions without human checkpoints. The infrastructure connecting these agents has no built-in safety net.

The deeper issue: security as an afterthought

The root cause is architectural. A2A was designed as a communication protocol — like email's SMTP. It moves messages between agents. But unlike email, which evolved spam filters and authentication over decades, A2A launched at v1.0 without even planning these defenses in its roadmap.

Every company deploying A2A agents now has to independently solve the same security problems. There's no shared baseline, no standard authorization, no consent mechanism. It's the equivalent of building a highway system with no traffic lights and telling each city to figure it out.

The full technical breakdown, including all 10 gaps with citations from each security firm, is available in Grith's detailed analysis.

Related Content — Get Started with Easy Claude Code | Free Learning Guides | More AI News

Stay updated on AI news

Simple explanations of the latest AI developments