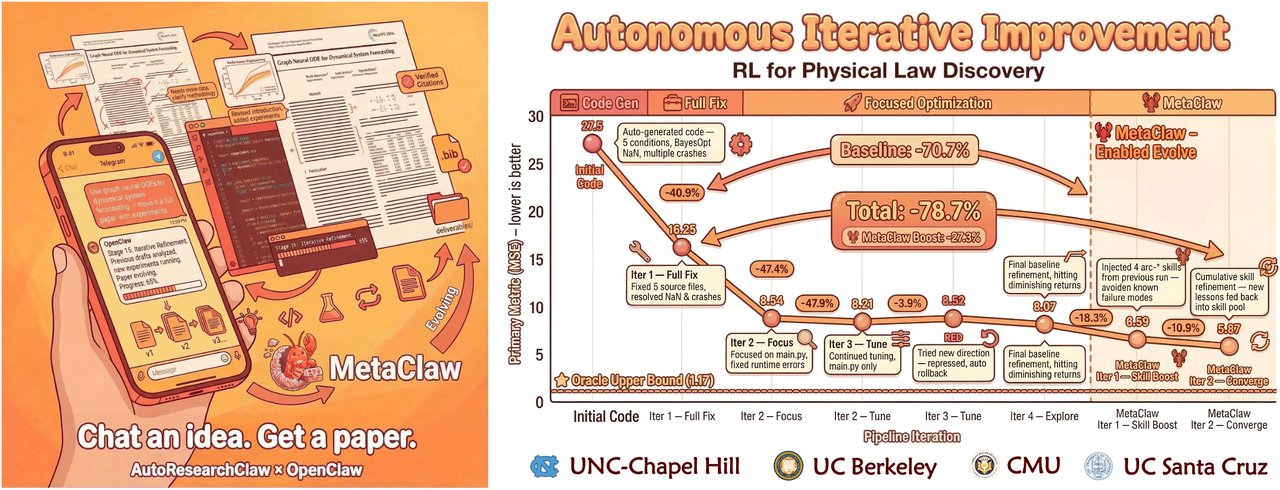

This AI just wrote a full academic paper — from idea to citations

AutoResearchClaw turns one sentence into a conference-ready paper with real citations, working experiments, and peer review. 7K GitHub stars and rising.

What if you could type a single idea — like "How does data augmentation affect small-sample image classification?" — and get back a complete academic paper with real citations, working experiments, and even an AI peer review? That's exactly what AutoResearchClaw does.

This free, open-source tool just crossed 7,100 GitHub stars and is trending globally. It runs a fully autonomous 23-stage pipeline (a series of automated steps) that transforms a single sentence into a conference-ready paper — formatted for top venues like NeurIPS, ICML, and ICLR (the world's most prestigious AI conferences).

From one sentence to a 6,000-word paper — here's how it works

The pipeline runs through 8 phases and 23 stages, each fully automated:

Phases 1-2: Find real papers. The system searches OpenAlex, Semantic Scholar, and arXiv (three large open indexes of academic papers) to find relevant literature. No made-up sources: every citation is verified through a 4-layer check.

Phase 3: Three AI agents debate your hypothesis. An "Innovator," a "Pragmatist," and a "Contrarian" argue over the best approach — mimicking how real researchers challenge each other's ideas.

Phases 4-5: Run actual experiments. The AI writes code, detects whether you have a GPU (a graphics processor used for fast parallel computing) or only a regular CPU, and runs the experiment in a sandbox (an isolated environment, so nothing breaks on your computer).
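To make the hardware check concrete, here is a minimal Python sketch. It is not AutoResearchClaw's actual code: the function names `detect_device` and `pick_settings` and the settings values are hypothetical, and it only looks for NVIDIA GPUs by calling the `nvidia-smi` tool that ships with the NVIDIA driver.

```python
import shutil
import subprocess


def detect_device() -> str:
    """Return "gpu" if an NVIDIA GPU appears usable, otherwise "cpu"."""
    # nvidia-smi is installed with the NVIDIA driver; if it is missing, assume CPU only.
    exe = shutil.which("nvidia-smi")
    if exe is None:
        return "cpu"
    try:
        # "nvidia-smi -L" prints one line per detected GPU.
        result = subprocess.run(
            [exe, "-L"], capture_output=True, text=True, timeout=10
        )
    except (OSError, subprocess.TimeoutExpired):
        return "cpu"
    if result.returncode == 0 and "GPU" in result.stdout:
        return "gpu"
    return "cpu"


def pick_settings(device: str) -> dict:
    """Choose experiment settings for the device (values are illustrative only)."""
    if device == "gpu":
        return {"device": "cuda", "batch_size": 64}
    return {"device": "cpu", "batch_size": 8}


if __name__ == "__main__":
    device = detect_device()
    print(device, pick_settings(device))
```

A real pipeline would likely also account for other accelerators and available memory; the point here is only that the experiment code can adapt to whatever hardware it finds.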

Phases 6-8: Write, review, and polish. The system drafts the full paper (5,000–6,500 words), runs an AI peer review to check methodology and evidence, then outputs a ready-to-compile LaTeX document with charts and references.

It actually catches fake citations

One of the biggest problems with AI-generated text is hallucinated references — citations that look real but don't exist. AutoResearchClaw attacks this with a 4-layer verification system:

- Checks arXiv IDs against the actual database

- Validates DOIs (digital object identifiers — unique codes for published papers) through CrossRef and DataCite

- Cross-references against Semantic Scholar records

- Uses AI scoring to check if the cited paper actually supports the claim

The result: every citation in the final paper points to a real, verifiable source.
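The layered idea can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the project's implementation: the `Citation` class, the check functions, and the rule for combining layers (any failed layer rejects, at least one passed layer verifies) are all hypothetical, and two small in-memory sets stand in for the real arXiv and CrossRef/DataCite lookups. Only the two identifier layers are shown; a Semantic Scholar lookup and an AI relevance score would be added to the same `LAYERS` list.

```python
import re
from dataclasses import dataclass
from typing import Callable, Optional

# Stand-ins for remote databases (assumption: a real check would query the services).
KNOWN_ARXIV_IDS = {"1706.03762"}
KNOWN_DOIS = {"10.1038/nature14539"}

ARXIV_RE = re.compile(r"^\d{4}\.\d{4,5}(v\d+)?$")  # new-style arXiv identifiers
DOI_RE = re.compile(r"^10\.\d{4,9}/\S+$")


@dataclass
class Citation:
    title: str
    arxiv_id: Optional[str] = None
    doi: Optional[str] = None


# Each layer returns True (confirmed), False (contradicted), or None (not applicable).
Check = Callable[[Citation], Optional[bool]]


def check_arxiv(c: Citation) -> Optional[bool]:
    if c.arxiv_id is None:
        return None
    if not ARXIV_RE.match(c.arxiv_id):
        return False
    base_id = c.arxiv_id.split("v")[0]  # drop a version suffix such as "v5"
    return base_id in KNOWN_ARXIV_IDS


def check_doi(c: Citation) -> Optional[bool]:
    if c.doi is None:
        return None
    if not DOI_RE.match(c.doi):
        return False
    return c.doi.lower() in KNOWN_DOIS


LAYERS: list[Check] = [check_arxiv, check_doi]


def verify(c: Citation, layers: list[Check] = LAYERS) -> str:
    results = [layer(c) for layer in layers]
    if any(r is False for r in results):
        return "rejected"    # at least one layer contradicts the citation
    if any(r is True for r in results):
        return "verified"    # confirmed by at least one layer, contradicted by none
    return "unverified"      # no layer could say anything


if __name__ == "__main__":
    print(verify(Citation("Attention Is All You Need", arxiv_id="1706.03762")))
    print(verify(Citation("Invented paper", arxiv_id="9999.99999")))
```

Returning `None` for "not applicable" keeps a missing DOI from counting as a failure, so a citation is only rejected when a layer actively contradicts it.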

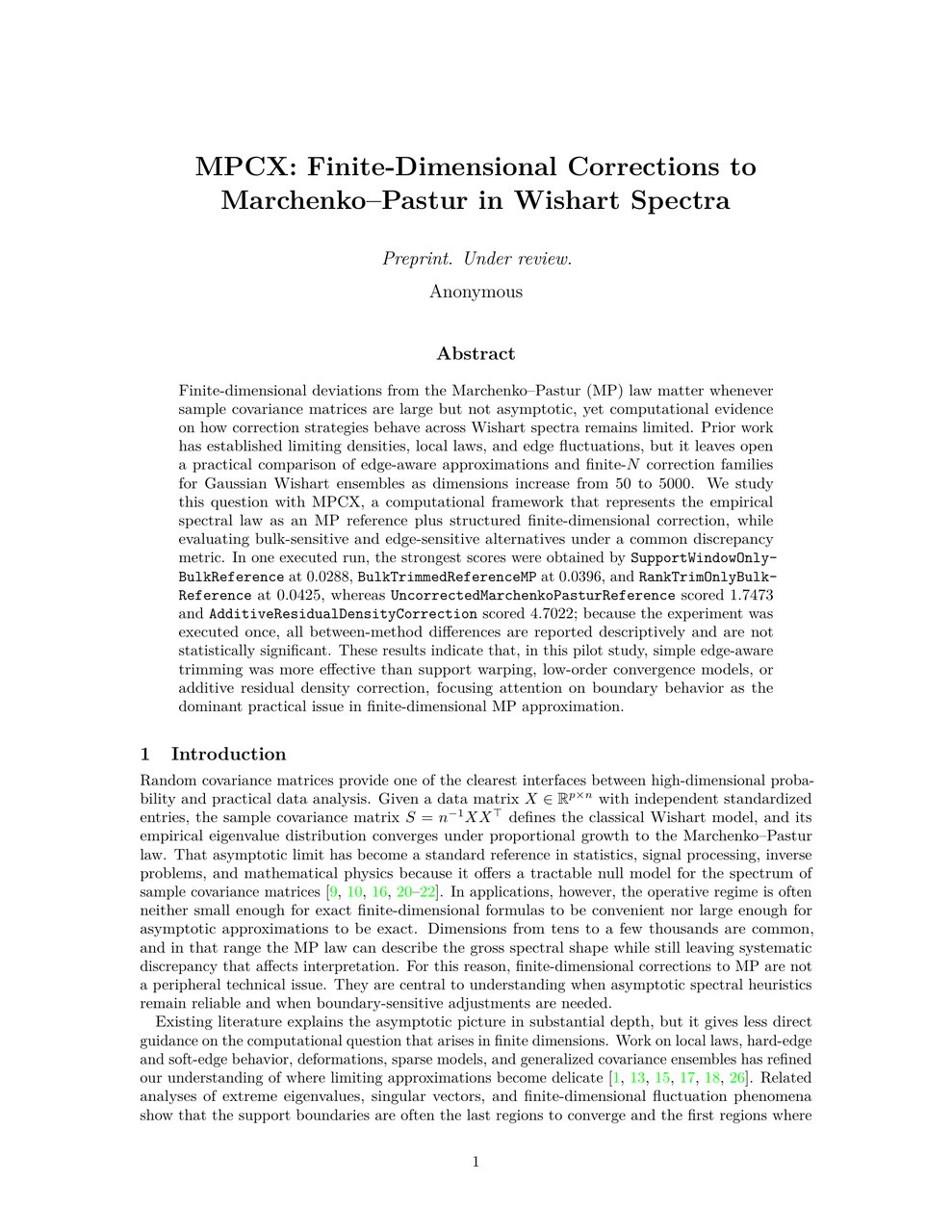

It learns from its own failures

The optional MetaClaw system (a self-learning module) captures what went wrong in each run and converts failures into reusable lessons. In controlled tests, this delivered:

- 24.8% fewer retries across pipeline stages

- 40% fewer refinement cycles needed

- 18.3% overall robustness improvement

In other words, the more you use it, the better it gets.
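One way to picture "failures become reusable lessons" is a small store that counts recurring failure reasons per stage and hands them back as hints on the next run. The sketch below is a guess at the general pattern, not MetaClaw's design: the `LessonStore` class, its method names, and the threshold of two occurrences are all hypothetical.

```python
from collections import Counter, defaultdict


class LessonStore:
    """Collects failure reasons per pipeline stage and surfaces recurring ones."""

    def __init__(self, min_occurrences: int = 2):
        # Assumption: a failure only becomes a lesson once it has repeated.
        self.min_occurrences = min_occurrences
        self._failures = defaultdict(Counter)

    def record_failure(self, stage: str, reason: str) -> None:
        self._failures[stage][reason] += 1

    def lessons_for(self, stage: str) -> list[str]:
        """Return hints for a stage, most frequent failure first."""
        counts = self._failures.get(stage, Counter())
        return [
            f"Avoid: {reason}"
            for reason, n in counts.most_common()
            if n >= self.min_occurrences
        ]


if __name__ == "__main__":
    store = LessonStore()
    store.record_failure("experiment", "missing import numpy")
    store.record_failure("experiment", "missing import numpy")
    store.record_failure("experiment", "timeout")
    # Hints like these could be prepended to the next run's prompt for that stage.
    print(store.lessons_for("experiment"))
```

In this toy version a one-off failure such as the single timeout is ignored, while the repeated import error is turned into a hint for later runs.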

Who is this actually for?

Graduate students and academics: Use it as a starting point for literature reviews and experiment design. It won't replace your expertise, but it can save days of preliminary work.

Startup founders and product teams: Need a quick feasibility study on a technical approach? AutoResearchClaw can generate a structured analysis with real data in hours instead of weeks.

AI enthusiasts learning to do research: It's a masterclass in how research papers are structured — watch the pipeline work and learn the process.

Try it yourself

git clone https://github.com/aiming-lab/AutoResearchClaw.git

cd AutoResearchClaw

python3 -m venv .venv && source .venv/bin/activate

pip install -e .

researchclaw setup && researchclaw init

researchclaw run --topic "Your research idea here" --auto-approve

You'll need Python 3.11+, an API key for an LLM provider (OpenAI, DeepSeek, or others), and optionally Docker and LaTeX for PDF generation. It also works with Claude Code and other AI coding assistants through the ACP protocol.

Important caveat: AutoResearchClaw is a powerful research assistant, not a replacement for domain expertise. The papers it generates are starting points that still need human review — especially before submission to any conference or journal. ICML recently caught 497 reviewers using AI, and conferences are actively cracking down.
