Hackers just broke into a 146K-star AI tool — in 20 hours

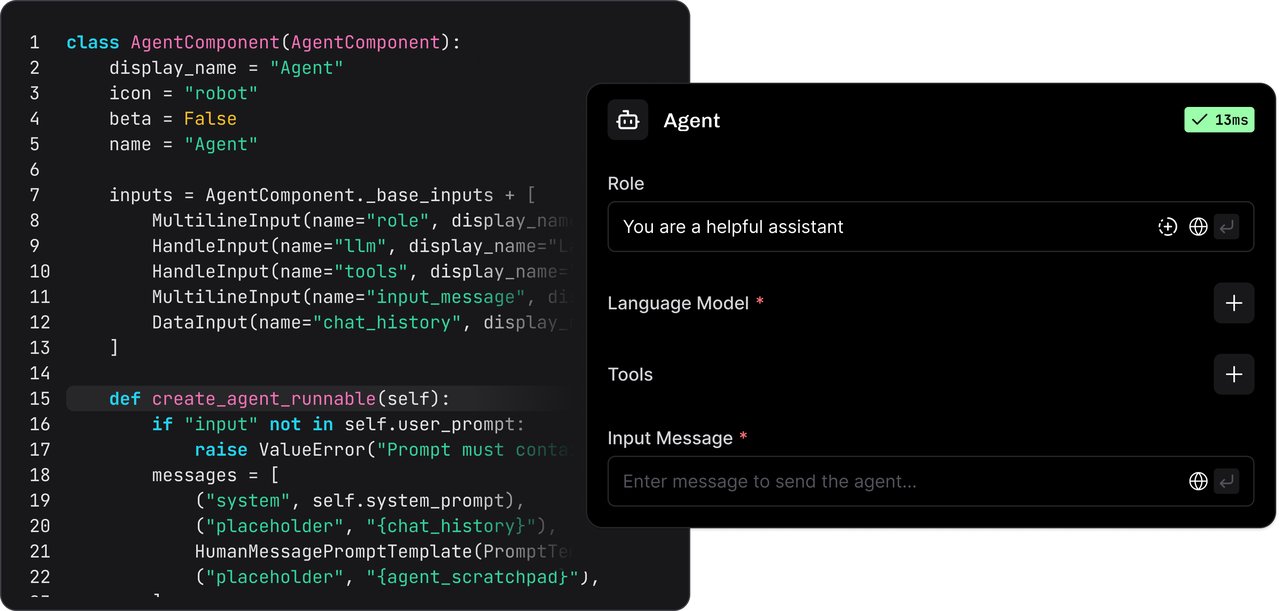

A critical flaw in Langflow — the popular drag-and-drop AI builder — let attackers steal API keys and cloud credentials within 20 hours of disclosure. Here's who's at risk.

A critical security flaw in Langflow — one of the most popular tools for building AI workflows without writing code — was exploited by hackers within just 20 hours of being publicly disclosed. Attackers used a single web request to run malicious code on servers, stealing API keys for services like OpenAI, Anthropic, and AWS.

Langflow has 146,000 GitHub stars, over 15 million downloads, and is used by thousands of developers and startups to build AI agents and automation pipelines. If you run it on a server, especially one reachable from the internet, this may affect you directly.

What happened

On March 17, a security advisory was published for Langflow describing a vulnerability tracked as CVE-2026-33017 with a severity score of 9.3 out of 10 — classified as critical.

The flaw is in a feature that lets users share AI workflows publicly. The problem: anyone on the internet could send a specially crafted request to a Langflow server, and the server would execute whatever code the attacker included — no login required, no password, no authentication of any kind.

Think of it like leaving the keys in the ignition of an unlocked car — except the car is your entire AI infrastructure.

- March 17, 8:05 PM UTC — Security advisory goes public on GitHub

- March 18, 4:04 PM UTC — First exploitation attempt detected (just ~20 hours later)

- March 18, 8:55 PM UTC — Attackers escalate to full credential theft

- Within 48 hours — Six separate hacker groups were actively exploiting the flaw
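The intervals in this timeline can be checked with a few lines of Python. This is only a sketch of the arithmetic: the timestamps are the ones listed above, and the year is an assumption inferred from the CVE identifier, since the article gives only month and day.

```python
from datetime import datetime, timezone

# Assumption: the year is inferred from the "CVE-2026-..." identifier.
YEAR = 2026


def utc(month, day, hour, minute):
    """Build a timezone-aware UTC timestamp in the assumed year."""
    return datetime(YEAR, month, day, hour, minute, tzinfo=timezone.utc)


def hours_after(start, moment):
    """Return the elapsed time from start to moment, in hours."""
    return (moment - start).total_seconds() / 3600


# Timestamps as listed in the timeline above (all UTC).
disclosure = utc(3, 17, 20, 5)
events = {
    "first exploitation attempt": utc(3, 18, 16, 4),
    "escalation to credential theft": utc(3, 18, 20, 55),
}

for label, moment in events.items():
    elapsed = hours_after(disclosure, moment)
    print(f"{label}: {elapsed:.1f} hours after disclosure")
```

The first attempt lands one minute short of 20 hours after disclosure, and the escalation to credential theft falls a little under 25 hours after it.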

What the attackers stole

Cloud security firm Sysdig tracked the attacks in real time using honeypots (decoy servers designed to attract hackers). Here's what they observed:

Phase 1 — Automated scanning (hours 20-21): Four different IP addresses ran automated scans looking for vulnerable Langflow servers across the entire internet.

Phase 2 — Custom attacks (hours 21-24): Attackers deployed hand-written scripts to explore compromised servers, reading system files and attempting to install additional malware.

Phase 3 — Credential harvesting (hours 24-30): The most sophisticated attackers systematically extracted API keys, database passwords, and cloud account credentials from configuration files and environment variables.

The stolen credentials could give attackers access to:

- Your OpenAI, Anthropic, or other AI API accounts (running up massive bills)

- Your AWS, Google Cloud, or Azure accounts

- Your databases containing business data

- Any other service whose keys were stored on the server
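To get a rough sense of what would be exposed on your own server, a short script can list the names of credential-like settings it finds. The following is a minimal sketch, not a complete audit: the name pattern, the `.env*` file match, and the helper names (`secret_like_names`, `names_in_env_file`, `inventory`) are all assumptions made for illustration. It reports variable names only and never prints their values.

```python
import os
import re
from pathlib import Path

# Assumption: names containing these words are likely to hold credentials.
SECRET_NAME = re.compile(
    r"(KEY|TOKEN|SECRET|PASSWORD|PASSWD|CREDENTIAL)", re.IGNORECASE
)


def secret_like_names(names):
    """Return the sorted names that look like they hold a credential."""
    return sorted(name for name in names if SECRET_NAME.search(name))


def names_in_env_file(path):
    """Return the variable names defined in a KEY=value style file."""
    names = []
    text = Path(path).read_text(encoding="utf-8", errors="replace")
    for line in text.splitlines():
        line = line.strip()
        # Skip blank lines, comments, and anything that is not an assignment.
        if not line or line.startswith("#") or "=" not in line:
            continue
        name = line.split("=", 1)[0].strip()
        if name.startswith("export "):
            name = name[len("export "):].strip()
        names.append(name)
    return names


def inventory(root="."):
    """Map each source (process environment, .env files) to secret-like names."""
    report = {"process environment": secret_like_names(os.environ)}
    for path in sorted(Path(root).rglob(".env*")):
        if path.is_file():
            report[str(path)] = secret_like_names(names_in_env_file(path))
    return report
```

Calling `inventory()` with the directory that holds your deployment returns a dictionary of sources and the credential-like names found in each, which can double as a rotation checklist.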

No public exploit existed — attackers built their own

Here's what makes this especially alarming: no ready-made hacking tool existed when the attacks began. The attackers read the security advisory and built working exploits from scratch — directly from the vulnerability description.

According to Sysdig's report, the average organization takes about 20 days to install security patches. But attackers are now exploiting vulnerabilities within 20 hours. That gap between disclosure and patching, roughly 480 hours against 20, is where the damage happens.

Who should worry

You may be affected if you:

- Run a Langflow instance that's accessible from the internet

- Use Langflow in your company to build AI chatbots, RAG pipelines (systems that let AI search your documents), or AI agents

- Store API keys or database credentials on a server where Langflow is installed

- Use any AI workflow tool with public-facing endpoints — the broader lesson applies

How to protect yourself right now

Langflow released a patch on March 20 (version 1.8.2). Here's what you should do immediately:

# Update Langflow to the patched version

pip install langflow --upgrade

# Or pull the latest Docker image

docker pull langflowai/langflow:latest

Beyond updating, security experts recommend these steps:

- Rotate all credentials — Change every API key, database password, and cloud access token stored on your Langflow server

- Block public access — Put Langflow behind a firewall or a reverse proxy that requires login

- Check your logs — Look for unexpected outbound connections, especially to unfamiliar IP addresses

- Audit your .env files — If attackers accessed your server, they likely read these files first
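Once these steps are done, it is worth confirming that the upgrade actually took effect in the environment Langflow runs from. The sketch below compares the installed package version with 1.8.2, the patched release named in this article. The helper names are hypothetical, and the comparison ignores pre-release suffixes, so treat the result as a quick sanity check rather than proof.

```python
import re
from importlib.metadata import PackageNotFoundError, version

# Patched release as stated in this article.
PATCHED = (1, 8, 2)


def parse_version(text):
    """Return the leading numeric parts, e.g. '1.8.2.post1' -> (1, 8, 2)."""
    match = re.match(r"(\d+)\.(\d+)(?:\.(\d+))?", text)
    if match is None:
        raise ValueError(f"unrecognized version string: {text!r}")
    return tuple(int(part or 0) for part in match.groups())


def is_patched(installed, patched=PATCHED):
    """True if the installed version is at or above the patched release.

    Simplification: suffixes such as 'rc1' or '.dev0' are ignored.
    """
    return parse_version(installed) >= patched


def installed_langflow_version():
    """Return the installed langflow version string, or None if absent."""
    try:
        return version("langflow")
    except PackageNotFoundError:
        return None


if __name__ == "__main__":
    found = installed_langflow_version()
    if found is None:
        print("langflow is not installed in this Python environment")
    elif is_patched(found):
        print(f"langflow {found} is at or above the patched release")
    else:
        print(f"langflow {found} is older than the patched release; upgrade now")
```

Run it with the same Python interpreter that Langflow uses; a check made from a different virtual environment or outside the container will report on the wrong installation.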

The bigger picture for AI tool users

This isn't just a Langflow problem. It reveals a growing pattern: AI tools are becoming prime targets for hackers because they often store high-value credentials (API keys for expensive AI services) and are frequently deployed by data science teams who may not follow standard security practices.

A recent report found that the median time between a vulnerability's publication and its appearance on the U.S. government's official "Known Exploited Vulnerabilities" list has dropped to just 5 days. The window between "vulnerability disclosed" and "you get hacked" is shrinking fast.

If you use any AI automation tool — Langflow, Flowise, n8n, or even self-hosted AI platforms — the takeaway is clear: never expose these tools to the public internet without authentication, and keep them updated.
