This AI gives glasses and robots a memory, and its maker just raised $16M

Memories.ai built a searchable visual memory for AI glasses and robots. Backed by $16M and an NVIDIA partnership, it remembers everything a device sees.

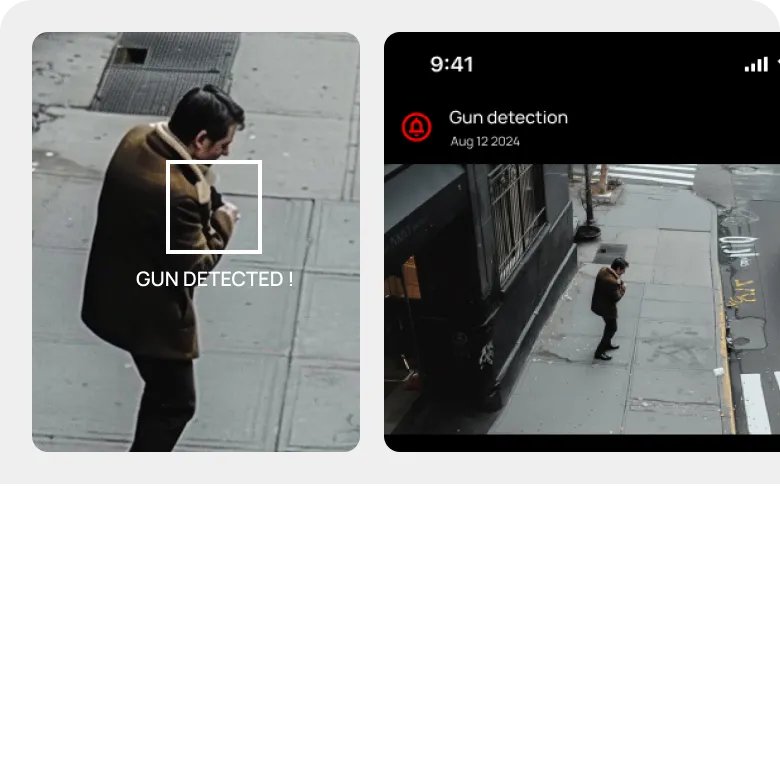

Imagine asking your smart glasses "Where did I leave my keys yesterday?" and getting an instant answer — not from a camera feed you manually scrub through, but from an AI that remembers everything the device has ever seen.

That's what Memories.ai just built. The San Francisco startup raised $16 million, partnered with NVIDIA, and unveiled what it calls the world's first Large Visual Memory Model (LVMM) — a system that gives wearables and robots a searchable, permanent visual memory.

Born inside Meta's Ray-Ban glasses team

The founders aren't newcomers. Dr. Shawn Shen (CEO) and Ben Zhou (CTO) built the AI system behind Meta's Ray-Ban smart glasses. While working on those glasses, they realized a fundamental problem: even if a device records everything you see, there's no way to find anything in that data later.

They looked for a solution. When they couldn't find one, they left Meta and built it themselves.

As Shen put it: "The real world should be as searchable as the digital world."

How it works — video becomes searchable memory

The LVMM takes raw, continuous video from a device's camera and turns it into structured, searchable memory. Here's the pipeline:

1. Encode — Each video frame is analyzed and tagged with what's in it: objects, people, locations, activities, spatial relationships.

2. Compress — The tagged data is compressed so an entire workday of video fits on a single device, not in the cloud.

3. Index — A fast search index is built so you can query anything in under one second.

4. Query — Ask in plain language ("Where's my coffee mug?") or show an image cue. The system finds the exact moment.

The system understands that "where's my coffee mug" and "ceramic cup location" are the same question — it searches by meaning, not keywords.
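To make the four steps concrete, here is a minimal toy sketch in Python. It is not Memories.ai's implementation, and none of the names below come from its API: `VisualMemory`, `to_concepts`, and the `CANONICAL` synonym table are hypothetical. The sketch also assumes each moment has already been turned into a short text description, and it skips the compression step entirely. A real system would use a learned embedding model for meaning-based matching, while this version fakes it with a hand-written synonym table.

```python
import math
import re
from collections import Counter, defaultdict

# Hypothetical synonym table: a toy stand-in for a learned embedding model.
CANONICAL = {"mug": "cup", "cup": "cup", "keys": "key", "key": "key"}
# Filler words that carry no information about what was seen.
STOPWORDS = {"where", "is", "s", "my", "the", "a", "location", "did", "i", "leave"}


def to_concepts(text):
    """Encode: lowercase, split into words, drop filler, merge synonyms."""
    words = re.findall(r"[a-z]+", text.lower())
    return Counter(CANONICAL.get(w, w) for w in words if w not in STOPWORDS)


def cosine(a, b):
    """Cosine similarity between two sparse concept-count vectors."""
    dot = sum(a[k] * b[k] for k in a if k in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class VisualMemory:
    def __init__(self):
        self.moments = []              # (timestamp, description, concept vector)
        self.index = defaultdict(set)  # concept -> positions in self.moments

    def add(self, timestamp, description):
        """Encode one described moment and add it to the inverted index."""
        vector = to_concepts(description)
        position = len(self.moments)
        self.moments.append((timestamp, description, vector))
        for concept in vector:
            self.index[concept].add(position)

    def query(self, question):
        """Return (timestamp, description) of the best match, or None."""
        q = to_concepts(question)
        candidates = set()
        for concept in q:
            candidates |= self.index.get(concept, set())
        if not candidates:
            return None
        best = max(candidates, key=lambda p: cosine(q, self.moments[p][2]))
        return self.moments[best][:2]


memory = VisualMemory()
memory.add("09:02", "keys on hallway shelf")
memory.add("09:15", "ceramic cup on kitchen counter")
memory.add("11:40", "laptop on desk")

# Both phrasings land on the same moment:
# ('09:15', 'ceramic cup on kitchen counter')
print(memory.query("Where's my coffee mug?"))
print(memory.query("ceramic cup location"))
```

The inverted index narrows the search to moments that share at least one concept with the question, and cosine similarity then ranks those candidates. Replacing the synonym table with real embeddings would change how meaning is captured, but the encode, index, and query flow would stay the same.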

NVIDIA partnership — runs on the same chips as AI robots

At NVIDIA GTC 2026, Memories.ai announced it's using two key NVIDIA technologies:

NVIDIA Cosmos Reason 2 — a vision AI model that understands what it sees in video, providing the "reasoning" layer for visual memory.

NVIDIA Metropolis — a framework for video search and summarization, powering the retrieval engine.

The combination runs on NVIDIA RTX GPUs for desktop/enterprise use and Jetson Orin processors for on-device deployment in robots and wearables. A Qualcomm integration is planned for later this year — targeting smartphones and lightweight glasses.

Three worlds where this changes everything

Smart glasses and wearables: Your AI glasses remind you of someone's name when you meet them again, surface relevant documents before a meeting based on what you discussed last time, and flag things you noticed but forgot.

Warehouse and factory robots: A robot remembers where inventory was placed three shifts ago, which routes were congested, and how a product was successfully assembled before. It gets better over time — not by retraining, but by remembering.

Your computer: An AI assistant that watched your workday could draft emails using context from earlier meetings, find the file you were editing yesterday afternoon, or recall what a colleague said during a screen share.

Who's backing it

The $16 million round was backed by Susa Ventures, Samsung Next, Crane Venture Partners, Fusion Fund, Seedcamp, and Creator Ventures. The involvement of Samsung Next, Samsung's investment arm, hints at where this technology might show up first: potentially in Samsung's own wearable devices.

The market is massive — and wide open

Analysts project over 1 billion smart glasses and AI wearables in use by 2030, with 2.8 million robots shipping annually. Every one of those devices needs to remember what it sees. Right now, none of them truly can.

That's the gap Memories.ai is filling. They're not competing with ChatGPT or Claude — they're building the memory layer that those AI assistants currently lack when they operate in the physical world.

The technology is available now via API and a web-based chatbot where you can upload videos and search them using natural language.
