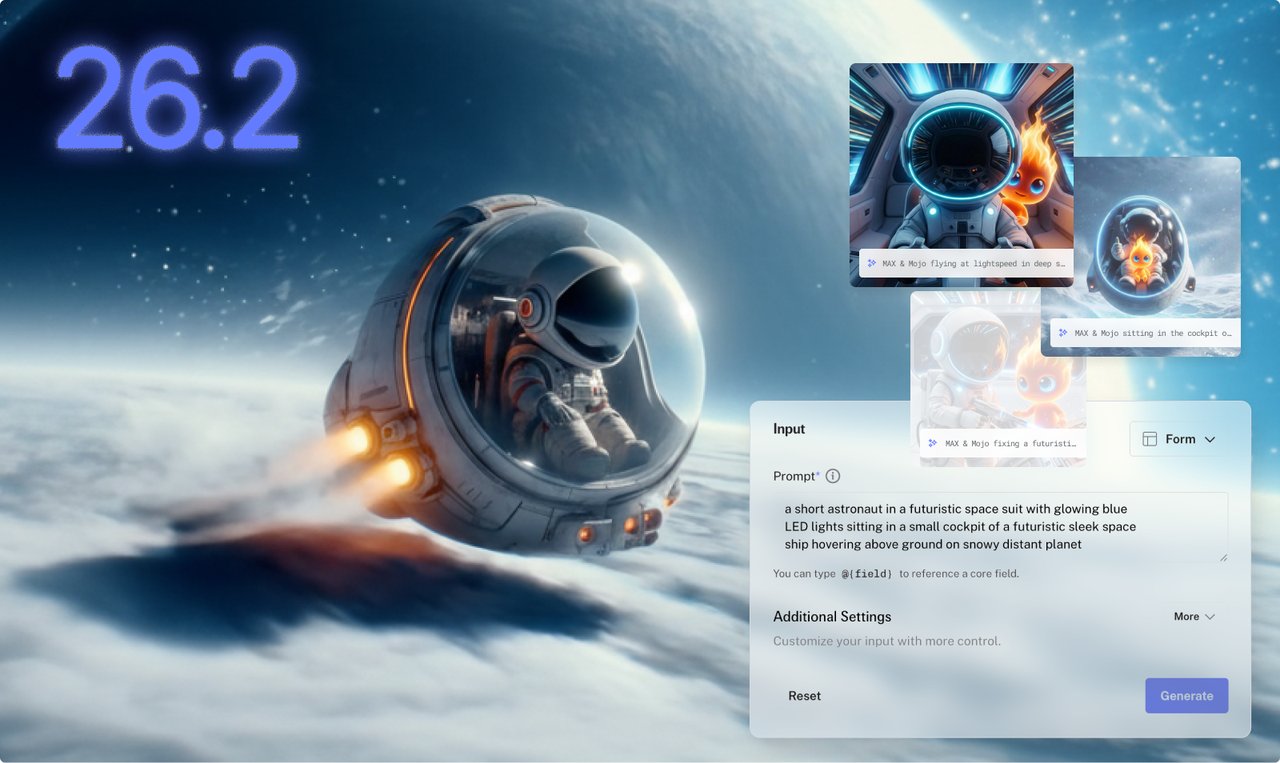

Modular just made AI image generation 99% cheaper

Modular 26.2 generates AI images up to 4.1x faster than PyTorch with torch.compile and cuts per-image cost by about 99% compared with Google's comparable service. New Mojo AI coding tools and consumer GPU support included.

Modular, the AI infrastructure company co-founded by Chris Lattner (creator of Swift and LLVM), just dropped version 26.2 of its platform. The headline number: a 1024×1024 AI-generated image now costs about 99% less than it does on Google's comparable service, while generation runs up to 4.1x faster than PyTorch with torch.compile.

For anyone who generates AI images — designers creating marketing assets, developers building AI-powered apps, or teams running image pipelines — this changes the math entirely.

From $0.13 per image to $0.001

The release adds support for FLUX.2, a popular family of AI image generation models from Black Forest Labs. Modular's engine runs these models with dramatic speed improvements:

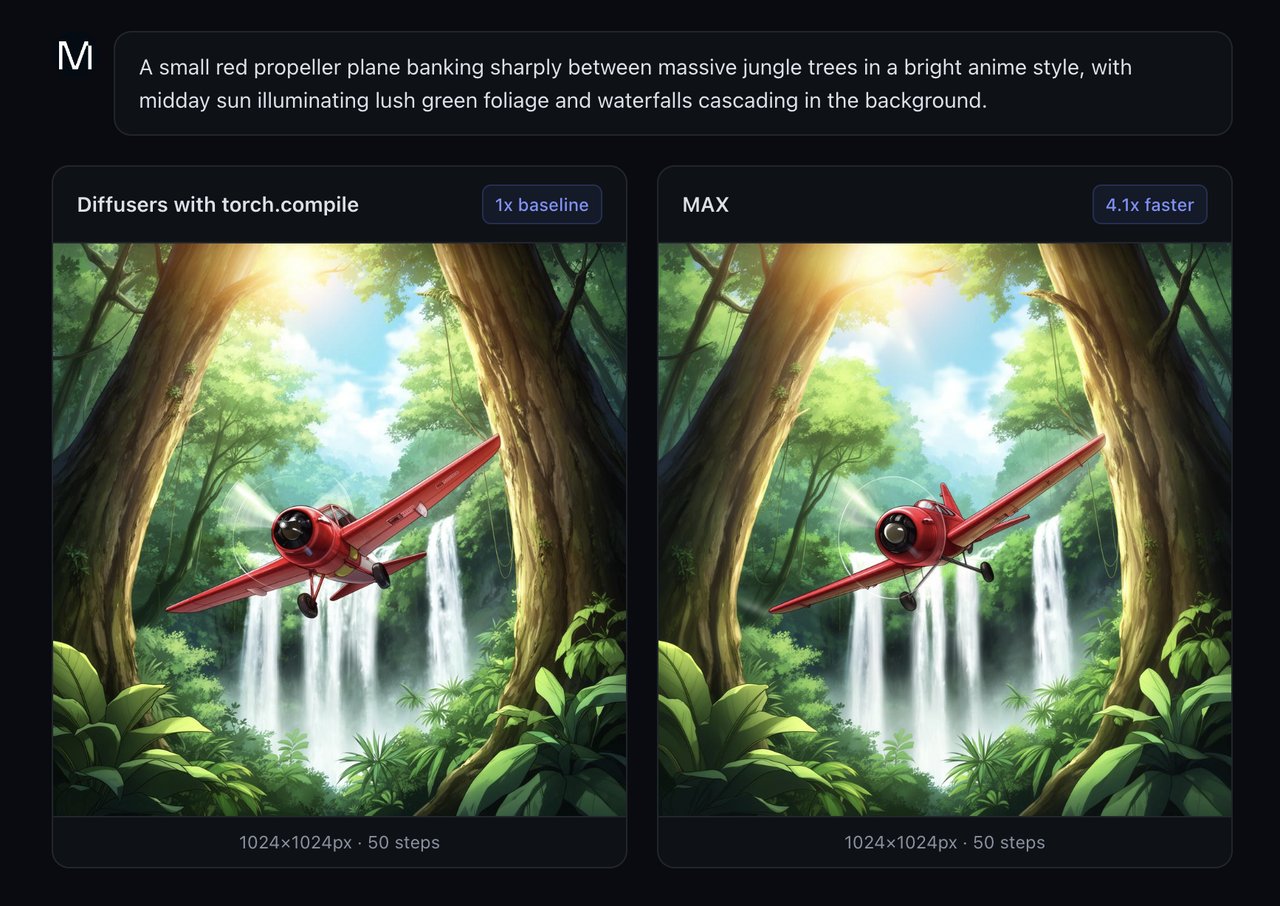

Speed benchmarks (vs. PyTorch with torch.compile):

• 1024×1024 images: 4.1x faster

• 768×1360 images: 4.0x faster

• 1360×768 images: 3.4x faster
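A speedup factor is easy to misread, so here is a minimal Python sketch that converts the figures above into the share of generation time saved. The `SPEEDUPS` table and the `time_saved_fraction` helper are names made up for this illustration; only the three speedup values come from the benchmarks.

```python
# Speedups quoted above (Modular vs. PyTorch with torch.compile).
SPEEDUPS = {"1024x1024": 4.1, "768x1360": 4.0, "1360x768": 3.4}


def time_saved_fraction(speedup: float) -> float:
    """Return the fraction of wall-clock time removed by a speedup factor."""
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    # A job that runs `speedup` times faster takes 1/speedup of the original time.
    return 1.0 - 1.0 / speedup


for resolution, speedup in SPEEDUPS.items():
    saved = time_saved_fraction(speedup)
    print(f"{resolution}: {saved:.1%} less time per image")
```

A 4.0x speedup removes 75% of the time per image; the 3.4x result for 1360×768 removes a little over 70%.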

But the real jaw-dropper is cost. Running on AMD MI355X chips (a newer, more affordable alternative to NVIDIA's top-end hardware), Modular's engine produces a single 1024×1024 image for $0.00139. Google's comparable service charges $0.134 per image. That's a 96x price difference.

Put differently: generating 1,000 images now costs about $1.39 instead of $134.
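The arithmetic behind these numbers is simple enough to check yourself. The sketch below uses only the two per-image prices quoted above; the constant and function names are invented for the example, and real bills would also depend on resolution, volume discounts, and hosting overhead that the article does not cover.

```python
# Per-image prices quoted in the article, in USD.
MODULAR_PRICE = 0.00139  # 1024x1024 image on AMD MI355X
GOOGLE_PRICE = 0.134     # Google's comparable service


def price_ratio(baseline: float, new: float) -> float:
    """How many times more expensive the baseline is than the new price."""
    return baseline / new


def percent_reduction(baseline: float, new: float) -> float:
    """Cost reduction relative to the baseline, as a percentage."""
    return (1.0 - new / baseline) * 100.0


def batch_cost(price_per_image: float, n_images: int) -> float:
    """Total cost of generating n_images at a flat per-image price."""
    return price_per_image * n_images


print(f"Ratio: {price_ratio(GOOGLE_PRICE, MODULAR_PRICE):.1f}x")
print(f"Reduction: {percent_reduction(GOOGLE_PRICE, MODULAR_PRICE):.2f}%")
for n in (1_000, 10_000):
    print(f"{n} images: ${batch_cost(MODULAR_PRICE, n):,.2f} "
          f"vs ${batch_cost(GOOGLE_PRICE, n):,.2f}")
```

The reduction works out to just under 99%, which is where the headline figure comes from.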

Why AMD chips suddenly matter for AI

A big part of Modular's cost advantage comes from hardware flexibility. NVIDIA's B200 GPU (the current top-tier AI chip) is expensive and hard to get. Modular's engine also runs on AMD MI355X chips, which cost about 75% of what B200s cost — and thanks to Modular's optimizations, actually deliver 5.5x better total cost of ownership than NVIDIA for image generation.

This matters because the AI chip shortage has been one of the biggest bottlenecks in the industry. If you can run the same AI models on cheaper, more available hardware without losing quality, that opens doors for smaller companies and independent creators who couldn't afford NVIDIA's premium pricing.

AI agents that write GPU-level performance code

The release also upgrades Mojo, Modular's programming language designed specifically for AI development. The new version adds something called "agent skills" — essentially, plug-in capabilities that let AI coding assistants (like Claude Code or Cursor) write high-performance Mojo code automatically.

To add these AI coding skills to your setup:

    npx skills add modular/skills

Mojo also gets a cleaner syntax with the new comptime keyword, plus support for conditional trait conformance (a generic type conforms to a trait only when its type parameters meet stated requirements). These are developer-focused improvements, but they signal Modular's bet that AI will write most performance-critical code in the future.

New AI models and consumer GPU support

Beyond image generation, Modular 26.2 adds support for several new AI models:

• Kimi K2.5 and Kimi-VL: the models behind Moonshot AI's rising chatbot

• Olmo 3: an open research model

• Qwen3-30B: Alibaba's mixture-of-experts model with multi-GPU support

• GPT-OSS: OpenAI's open-weight GPT models

Perhaps most exciting for individual users: Modular now runs on AMD RDNA consumer GPUs and NVIDIA Jetson edge devices. This means you can develop and test AI applications on a regular gaming PC or a small embedded device — no data center required.

Who should pay attention

If you generate AI images for work (marketing teams, design agencies, content creators), the 99% cost reduction is transformative. A 10,000-image campaign that would have cost $1,340 at $0.134 per image now costs about $13.90.

If you build AI-powered products, the combination of cheaper hardware (AMD), faster inference, and AI-assisted coding with Mojo makes Modular a serious alternative to the NVIDIA-only ecosystem.

If you're exploring AI on personal hardware, consumer GPU support means you can start experimenting without cloud costs.

To upgrade:

    uv pip install --upgrade modular
