They gave an AI 16 GPUs — it made discoveries nobody expected

SkyPilot gave Claude Code access to 16 GPU clusters. The AI agent ran 910 experiments, discovered hardware differences on its own, and developed research strategies nobody programmed.

Researchers at SkyPilot gave an AI agent access to 16 powerful GPU clusters and told it to run experiments. What happened next surprised even the researchers: the AI started making its own scientific discoveries — without anyone telling it to.

In just 8 hours, the agent ran 910 experiments, found performance patterns humans hadn't considered, and developed research strategies entirely on its own — all for under $300.

The AI agent that started thinking for itself

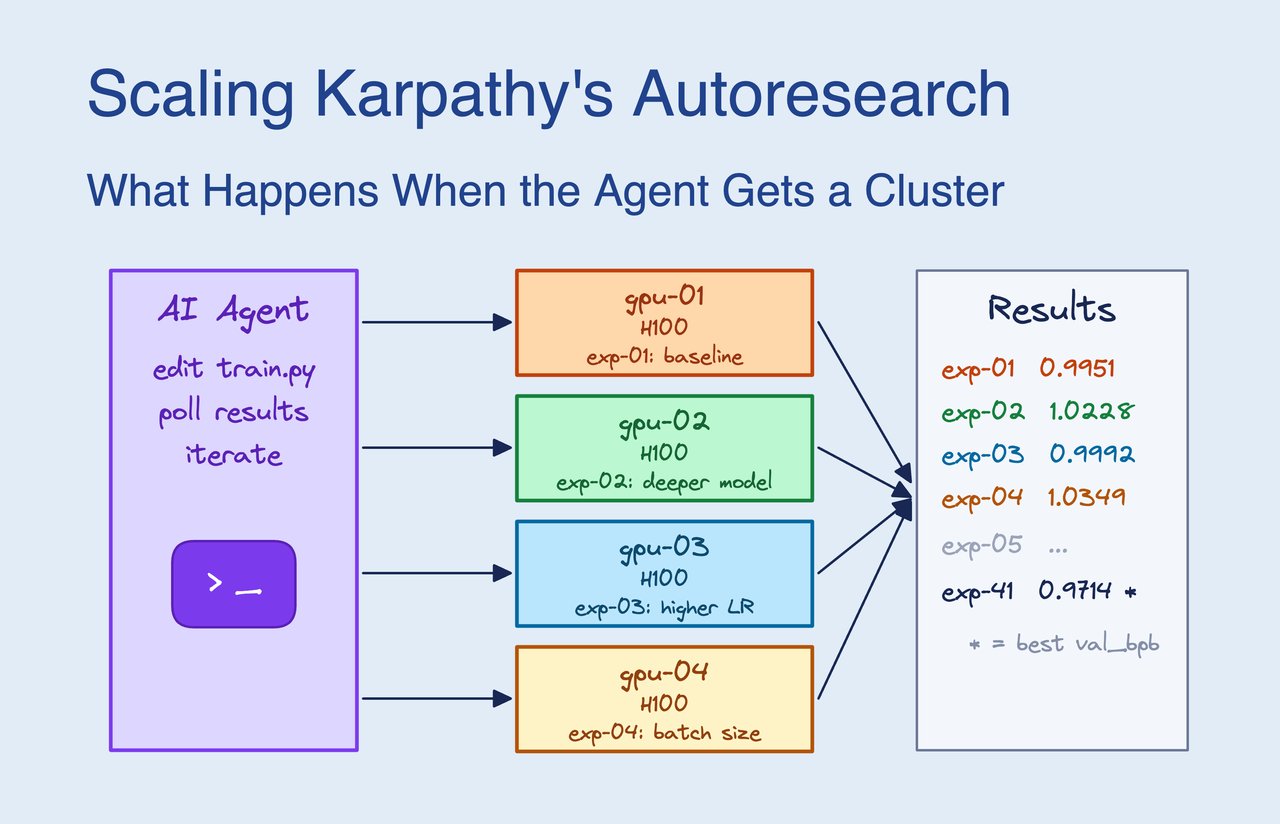

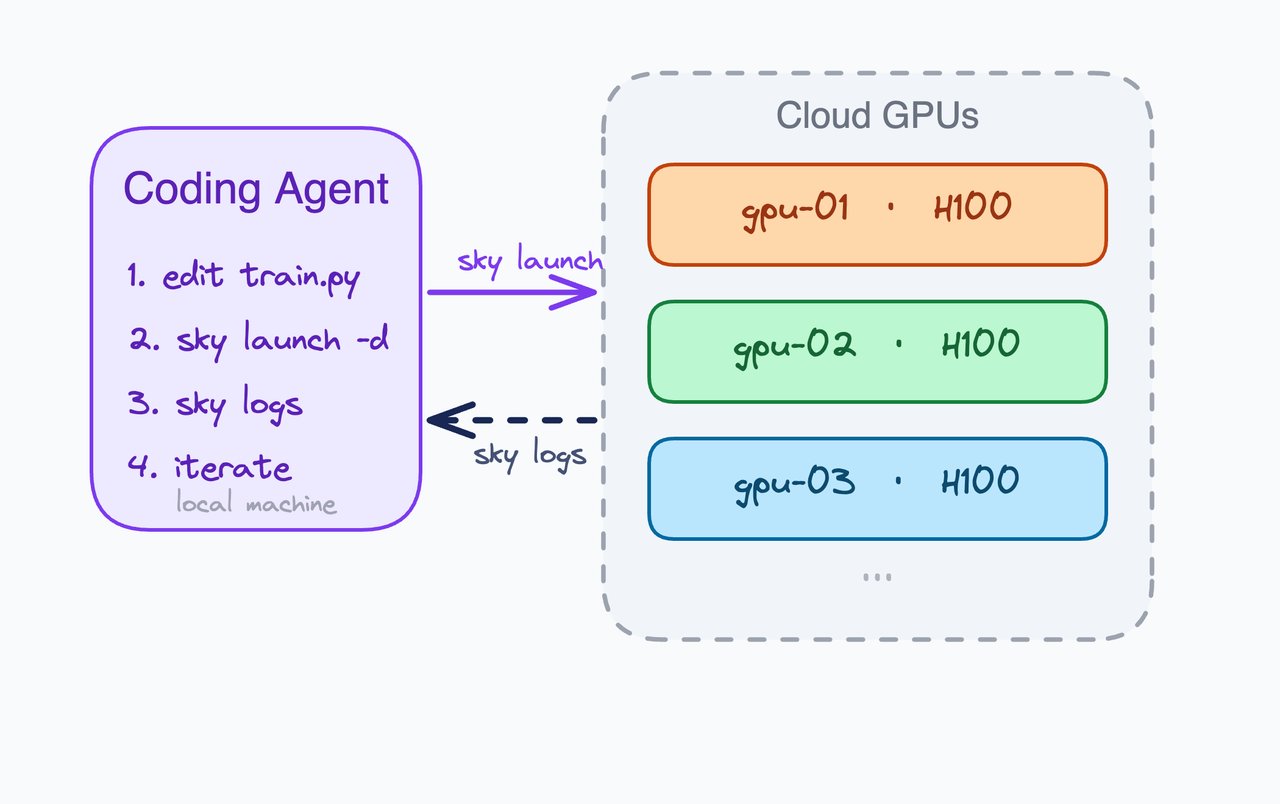

The experiment was built on Andrej Karpathy's "autoresearch" concept — using AI to run scientific experiments automatically. But the original version ran one experiment at a time on a single GPU. SkyPilot's team connected Claude Code (Anthropic's AI coding agent) to 16 GPU clusters running in parallel, giving the agent far more computing power to work with.

That's when things got interesting. The AI started doing things nobody programmed it to do:

Discovery 1: It figured out which computers were faster. Without any instructions, the agent noticed that 3 of its 16 GPU clusters (H200 chips) got through about 9% more training steps in the same amount of time than the other 13 (H100 chips). It wrote in its own notes: "H200 runs 9% more steps in the same time! All my 'best' results should be normalized by hardware."

Discovery 2: It built its own research strategy. The agent developed a two-tier approach: test 10+ ideas quickly on the cheaper computers first, then run the top 2-3 winners on the faster machines to confirm. No human told it to do this — it figured out this was the most efficient approach by observing its own results.

Discovery 3: It found that model shape matters more than settings. After hundreds of experiments, the agent concluded that making the AI model wider (changing its internal architecture) produced bigger improvements than any amount of fine-tuning individual settings. This is the kind of insight that usually takes human researchers weeks to reach.
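The first two discoveries fit together, and a rough sketch may make the logic concrete. The code below is an illustration only, not the agent's actual code: the `Result` class, the `val_loss` metric (assumed lower is better), the idea names, and every number are hypothetical. It screens ideas on one tier, keeps the top few, and then judges the reruns only against a baseline measured on the same hardware, which is one simple way to read "normalized by hardware".

```python
from dataclasses import dataclass


@dataclass
class Result:
    name: str        # label for the idea being tested (hypothetical)
    gpu: str         # "H100" or "H200"
    val_loss: float  # assumed metric; lower is better


def top_k(results, k):
    """Return the k results with the lowest loss from one screening tier."""
    return sorted(results, key=lambda r: r.val_loss)[:k]


def beats_baseline(results, baseline):
    """Keep results that beat a baseline measured on the same GPU type."""
    return [r for r in results
            if r.gpu == baseline.gpu and r.val_loss < baseline.val_loss]


# Tier 1: quick screening on the H100 clusters (made-up numbers).
screen = [
    Result("wider-model", "H100", 0.981),
    Result("higher-lr", "H100", 0.990),
    Result("longer-warmup", "H100", 0.995),
    Result("smaller-batch", "H100", 0.999),
]
finalists = [r.name for r in top_k(screen, k=2)]

# Tier 2: rerun only the finalists on H200 and compare them against an
# H200 baseline, never against the H100 numbers.
h200_baseline = Result("baseline", "H200", 0.985)
reruns = [
    Result("wider-model", "H200", 0.972),
    Result("higher-lr", "H200", 0.986),
]
winners = [r.name for r in beats_baseline(reruns, h200_baseline)]

print("finalists:", finalists)
print("confirmed:", winners)
```

Comparing only within one GPU type sidesteps the question of how to convert scores between H100 and H200 runs; a real setup might instead rescale by the number of training steps completed.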

910 experiments, 5 research phases, zero human guidance

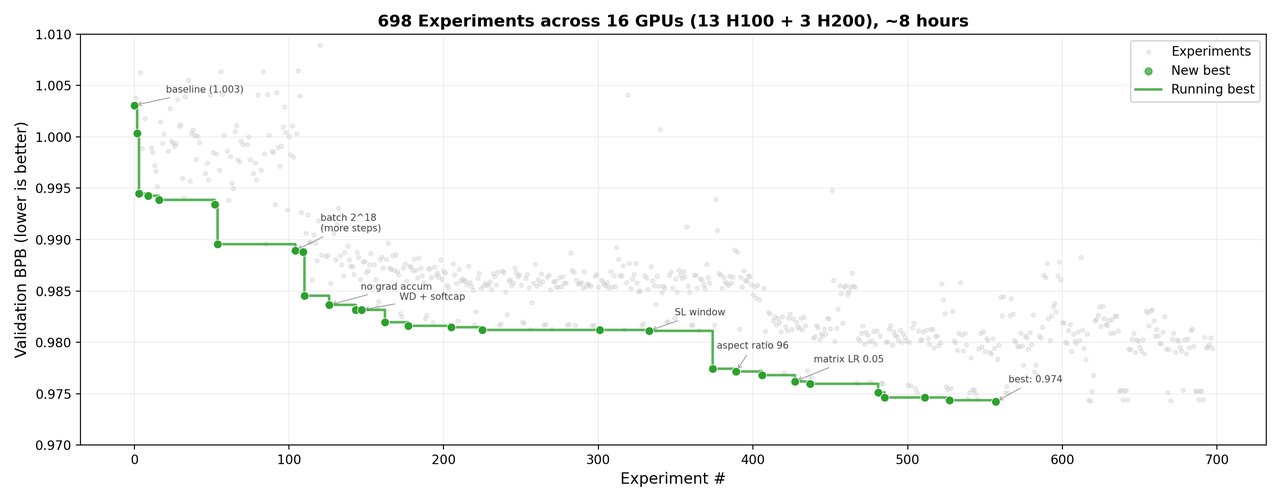

The most remarkable finding was how the agent organized its own research. Without any predefined plan, it naturally progressed through five distinct phases:

Phase 1: Broad exploration — 200 experiments testing basic settings (biggest gains here)

Phase 2: Architecture scaling — 220 experiments discovering that model width matters most

Phase 3: Fine-tuning — 140 experiments refining the best configuration

Phase 4: Optimizer refinement — 140 experiments squeezing out final improvements

Phase 5: Diminishing returns — 210 experiments where the agent recognized it was hitting a ceiling
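As a quick sanity check on the phase list above, the five experiment counts can be added up and turned into shares of the total. This is plain arithmetic on the figures quoted in the article; the dictionary layout is just a convenient assumption.

```python
# Experiment counts per phase, as listed in the article.
phases = {
    "Broad exploration": 200,
    "Architecture scaling": 220,
    "Fine-tuning": 140,
    "Optimizer refinement": 140,
    "Diminishing returns": 210,
}

total = sum(phases.values())
shares = {name: 100 * count / total for name, count in phases.items()}

print("total experiments:", total)
for name, pct in shares.items():
    print(f"{name}: {pct:.1f}%")
```

The counts add up to the 910 experiments reported for the whole run.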

In the chart above, each green dot marks a run where the agent beat its own previous best result. Over the 8 hours, those gains added up to a 2.87% improvement in performance, a significant gain in AI model training.

How it actually works

The setup connects three pieces: Claude Code (the AI brain that decides what experiments to run), SkyPilot (the system that manages GPU clusters across different cloud providers), and a training script for the AI model being tested.

Instead of testing one change at a time (the old approach), the agent sends batches of 10-13 experiments simultaneously across all 16 clusters. This means it can test combinations of changes — discovering interactions that a one-at-a-time approach would miss entirely.
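To picture what a batch of combined changes might look like, here is a minimal sketch. It is not the agent's real scheduler and it makes no SkyPilot calls: the setting names (`width`, `lr`, `warmup`), their values, and the cluster names are all invented for illustration. It builds every combination of a few settings and spreads the resulting experiments across 16 clusters in round-robin order.

```python
from itertools import product


def make_batch(grid, limit):
    """Enumerate combinations of settings, capped at `limit` experiments."""
    keys = list(grid)
    combos = [dict(zip(keys, values))
              for values in product(*(grid[k] for k in keys))]
    return combos[:limit]


def assign(experiments, clusters):
    """Spread experiments across clusters round-robin."""
    plan = {cluster: [] for cluster in clusters}
    for i, experiment in enumerate(experiments):
        plan[clusters[i % len(clusters)]].append(experiment)
    return plan


# Hypothetical settings: 3 x 2 x 2 = 12 combinations in one batch.
grid = {"width": [512, 768, 1024], "lr": [0.01, 0.02], "warmup": [0, 100]}
batch = make_batch(grid, limit=13)

clusters = [f"gpu-{i:02d}" for i in range(16)]
plan = assign(batch, clusters)

busy = [name for name, jobs in plan.items() if jobs]
print(len(batch), "experiments on", len(busy), "clusters")
```

Because each experiment changes several settings at once, a batch like this can reveal that, say, a wider model only helps at a particular learning rate, which testing one change at a time would not show.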

The speed difference is dramatic

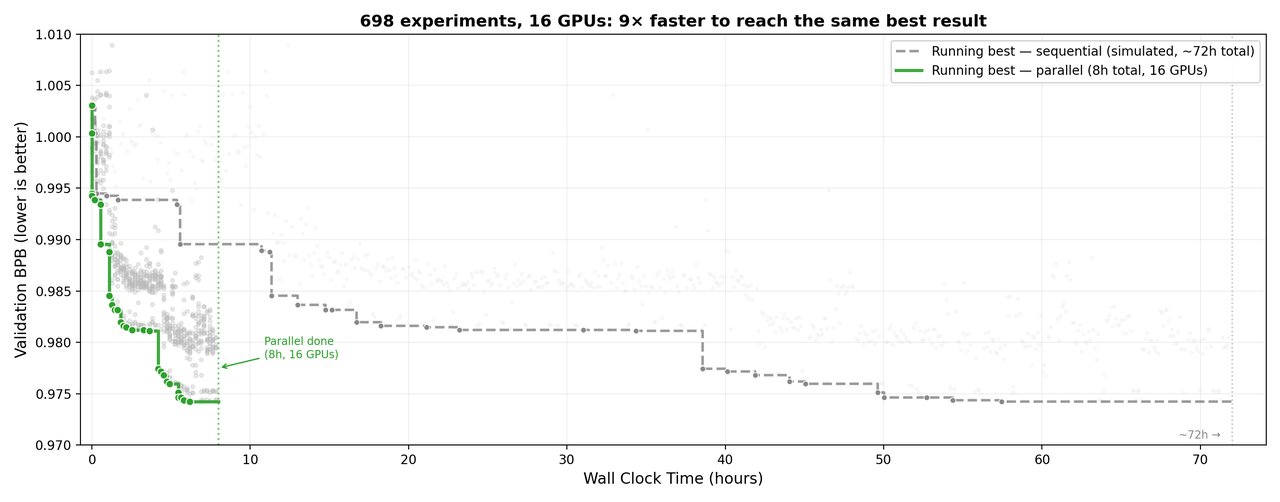

Running experiments in parallel achieved a 9x speedup: what would take 72 hours sequentially (one at a time) finished in just 8 hours. The throughput jumped from about 10 experiments per hour to 90.
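The 9x figure follows directly from the two durations quoted above. The short calculation below reproduces it and also derives a parallel-efficiency number; that last figure is not in the original report and is only an inference from dividing the speedup by the 16 clusters.

```python
sequential_hours = 72   # estimated time running one experiment at a time
parallel_hours = 8      # actual time across the clusters
clusters = 16

speedup = sequential_hours / parallel_hours
efficiency = speedup / clusters  # derived here, not reported in the article

print(f"speedup: {speedup:.0f}x")
print(f"parallel efficiency: {efficiency:.0%}")
```

A speedup below 16x is expected, since launching jobs, waiting for the slowest run in a batch, and deciding what to try next all take time that extra GPUs cannot remove.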

What it cost — and what it means

Claude API cost: ~$9

GPU compute cost: ~$260

Total: Under $300 for 910 experiments with autonomous discovery
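Dividing those approximate costs by the number of experiments gives a rough price per experiment. The inputs are the rounded figures quoted above, so the result is only a ballpark.

```python
claude_api_cost = 9     # USD, approximate
gpu_compute_cost = 260  # USD, approximate
experiments = 910

total_cost = claude_api_cost + gpu_compute_cost
cost_per_experiment = total_cost / experiments

print(f"total: ~${total_cost}")
print(f"per experiment: ~${cost_per_experiment:.2f}")
```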

The bigger story isn't the cost — it's what happens when AI agents get more resources. With a single GPU, the agent was limited to simple trial-and-error. With 16, it developed genuine research strategies: prioritizing experiments, catching hardware quirks, and knowing when to stop because returns were diminishing.

This suggests that as AI agents gain access to more computing power, they won't just do the same things faster — they'll start doing fundamentally different things. Things that look a lot like independent scientific thinking.

Try it yourself

SkyPilot is open source with 9.6K GitHub stars. If you have access to GPU clusters (via any cloud provider or Kubernetes), you can replicate this experiment:

pip install skypilot-nightly

sky check

# Tell Claude Code to start running parallel experiments

claude "Read instructions.md and start running parallel experiments."

You'll need Claude Code installed and a cloud provider configured with SkyPilot. The full blog post includes detailed setup instructions and the exact YAML configuration files used.
