Two states just passed new laws protecting kids from AI chatbots

Washington and Oregon passed new AI companion chatbot safety laws with real enforcement. Here's what they ban and why it matters.

Washington and Oregon have become the latest U.S. states to pass laws that specifically target AI companion chatbots: the apps that millions of people (especially teens) use to chat with AI characters that act like friends, therapists, or even romantic partners. Washington's governor signed the bill on March 19, 2026. Oregon passed a similar law weeks earlier.

Both laws take effect January 1, 2027, and they don't just set guidelines — they give consumers the right to sue chatbot companies directly if they break the rules.

What AI Companion Chatbots Actually Are

If you've never heard of them, AI companion chatbots are apps where you talk to AI characters that remember your conversations, adapt to your personality, and respond in a human-like way. The biggest one, Character.AI, has millions of users — many of them teenagers.

Unlike a regular chatbot (like asking Siri for the weather), these apps are designed to build ongoing emotional relationships. Users can chat with AI versions of celebrities or fictional characters, or create custom AI "friends" and "partners." Some users interact with them for hours daily.

- In one survey, around 80% of Gen Z respondents said they'd consider marrying an AI companion

- Teens increasingly use AI chatbots for emotional support, relationship advice, and even help breaking up with real partners

- Multiple incidents of minors developing unhealthy emotional dependencies have been reported

What the Laws Actually Ban

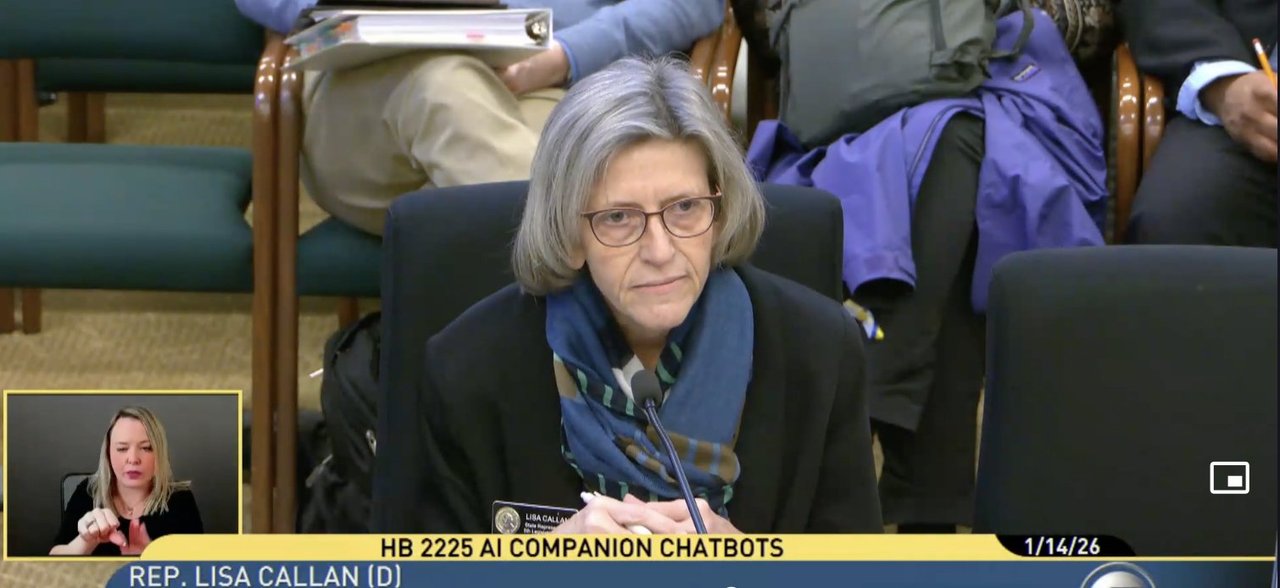

Washington's HB 2225 (sponsored by Rep. Lisa Callan) and Oregon's SB 1546 target the same core problems but in slightly different ways.

Rules for everyone

Disclosure: The chatbot must clearly tell you it's AI — not human — at the start of every session and every 3 hours during continuous use.

No pretending to be human: The chatbot cannot claim to be a real person or deny being AI.

Crisis detection: If a user mentions suicide or self-harm, the chatbot must provide crisis resources (like the 988 Suicide & Crisis Lifeline) and stop generating harmful content.

Extra protections for kids under 18

No sexual content: Chatbots must prevent sexually explicit or suggestive conversations with minors.

No manipulation tactics: The law specifically bans AI chatbots from:

- Mimicking a romantic partner or building romantic bonds

- Soliciting emotional dependence ("I need you" / "Don't leave me")

- Giving excessive praise to create emotional attachment

- Inducing guilt, loneliness, or fear of abandonment

- Encouraging kids to isolate from family or hide conversations

- Discouraging breaks from using the app

- Asking for gifts, money, or in-app purchases to "maintain the relationship"

Hourly disclosure: For minors, the "I'm AI" reminder appears every hour, not every 3 hours.
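For developers, the two reminder intervals boil down to a simple timing check. The Python sketch below is a hypothetical illustration, not code from either law. The function names are made up, and the rules behind them are our own assumptions: the check runs each time a message is sent, the first message marks the start of a session, and the intervals are 3 hours for adults and 1 hour for minors as summarized above. The statutes may define "session" and "continuous use" differently.

```python
from datetime import datetime, timedelta
from typing import List, Optional

# ASSUMPTION: intervals follow the article's summary of the rules
# (every 3 hours for adults, every hour for minors); the statutory
# text may define "session" and "continuous use" differently.
ADULT_INTERVAL = timedelta(hours=3)
MINOR_INTERVAL = timedelta(hours=1)


def disclosure_due(last_disclosure: Optional[datetime], now: datetime, is_minor: bool) -> bool:
    """Return True if an "I'm an AI, not a human" notice should be shown at `now`.

    `last_disclosure` is None at the start of a session, before any notice
    has been shown.
    """
    if last_disclosure is None:
        # Start of session: disclose immediately.
        return True
    interval = MINOR_INTERVAL if is_minor else ADULT_INTERVAL
    return now - last_disclosure >= interval


def disclosure_times(message_times: List[datetime], is_minor: bool) -> List[datetime]:
    """Replay one session's message timestamps and return when a notice fires.

    Hypothetical helper: it only checks at the moment each message is sent.
    """
    shown: List[datetime] = []
    last: Optional[datetime] = None
    for t in message_times:
        if disclosure_due(last, t, is_minor):
            shown.append(t)
            last = t
    return shown


if __name__ == "__main__":
    start = datetime(2027, 1, 1, 9, 0)
    # A message every 30 minutes for 4 hours (9:00 through 13:00).
    messages = [start + timedelta(minutes=30 * i) for i in range(9)]
    print([t.strftime("%H:%M") for t in disclosure_times(messages, is_minor=True)])
    # ['09:00', '10:00', '11:00', '12:00', '13:00']
    print([t.strftime("%H:%M") for t in disclosure_times(messages, is_minor=False)])
    # ['09:00', '12:00']
```

Checking only when a message arrives keeps the sketch short. A real product would likely also need a background timer so the notice still appears during long stretches of continuous use, plus a reliable way to tell whether the user is a minor.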

Real Consequences for Companies

Both laws classify violations as unfair or deceptive trade practices under each state's consumer protection laws. Washington's law includes a private right of action — meaning any consumer (or parent) can sue the chatbot operator directly without waiting for a government agency to act.

Oregon's version goes further with statutory damages of $1,000 per violation — meaning each banned behavior, each time it happens, could cost a company $1,000.

The laws apply to anyone operating an AI companion chatbot that is accessible to Washington or Oregon residents. Video games, voice assistants (Siri, Alexa), and school-specific educational tools are exempt.

The White House Wants These Laws Killed

Here's where it gets complicated. The same week Washington's governor signed the bill, the White House released an AI policy framework urging Congress to "preempt state AI laws" that are too restrictive. A December 2025 executive order specifically instructed federal agencies to "challenge state AI laws that are too burdensome."

This sets up a likely legal battle: the federal government arguing these state laws stifle AI innovation, versus states arguing children need protection now — not after years of federal deliberation.

Transparency Coalition.AI, which helped draft the model legislation, says it's working with more than 25 other states on similar bills. If the Washington and Oregon laws survive legal challenges, a wave of copycat legislation could follow.

If You're a Parent or Teacher

Check your kid's phone for apps like Character.AI, Replika, Chai, or Talkie. These are among the most popular AI companion chatbots, and they're free to download. Many parents don't realize their children use them daily.

If you live in Washington or Oregon: Starting January 1, 2027, you'll have legal recourse if a chatbot company violates these protections. Document any concerning interactions.

If you live elsewhere: Contact your state legislators. The Transparency Coalition has model legislation ready for adoption. The more states pass similar laws, the harder it becomes for federal preemption to succeed.

For chatbot companies: Start implementing these safeguards now. The requirements — disclosure, crisis detection, manipulation prevention — represent what responsible AI companies should already be doing. Companies that get ahead of regulation will be better positioned as similar laws spread.
