Amazon just revealed the chip that actually powers Claude

TechCrunch toured Amazon's secret Trainium chip lab in Austin. 1.4M chips already power Claude, ChatGPT, and Apple's AI — challenging Nvidia's 90% grip.

When you ask Claude a question, the answer likely comes from a chip most people have never heard of, and ChatGPT's next models are set to be trained on it too. It's not made by Nvidia. It's designed by Amazon, in a lab in Austin, Texas that journalists just got to see for the first time.

TechCrunch published an exclusive tour of Amazon's Annapurna Labs facility on March 22, 2026, revealing the lab where AWS designs and stress-tests its Trainium AI chips, custom processors built specifically to train and run the world's most powerful AI models.

1.4 Million Chips — and Three of the Biggest Names in AI

Amazon has already deployed 1.4 million Trainium chips across three generations. Here's who's using them:

Anthropic (Claude) — runs on over 1 million Trainium2 chips. When you chat with Claude, Trainium is doing the math behind every response.

OpenAI (ChatGPT) — signed a $50 billion deal with AWS for 2 gigawatts of Trainium computing capacity to train next-generation models.

Apple — reportedly testing Trainium chips for internal AI workloads, though neither company shared specifics.

That means three of the biggest names in AI are now running on, buying, or testing the same Amazon-built chip family rather than relying solely on Nvidia's GPUs, which still control over 90% of the AI accelerator market.

Inside the Austin Lab

The tour was led by Kristopher King, the lab's director, and Mark Carroll, Annapurna's head of engineering. Journalists saw UltraServers (massive server racks) packed with 144 Trainium chips each, being stress-tested before shipping to AWS data centers worldwide.

Carroll emphasized the stakes: "If there's a failure or unavailability during this phase, you have to go back, or even start from scratch." Each server undergoes rigorous testing before it's cleared to handle real AI workloads.

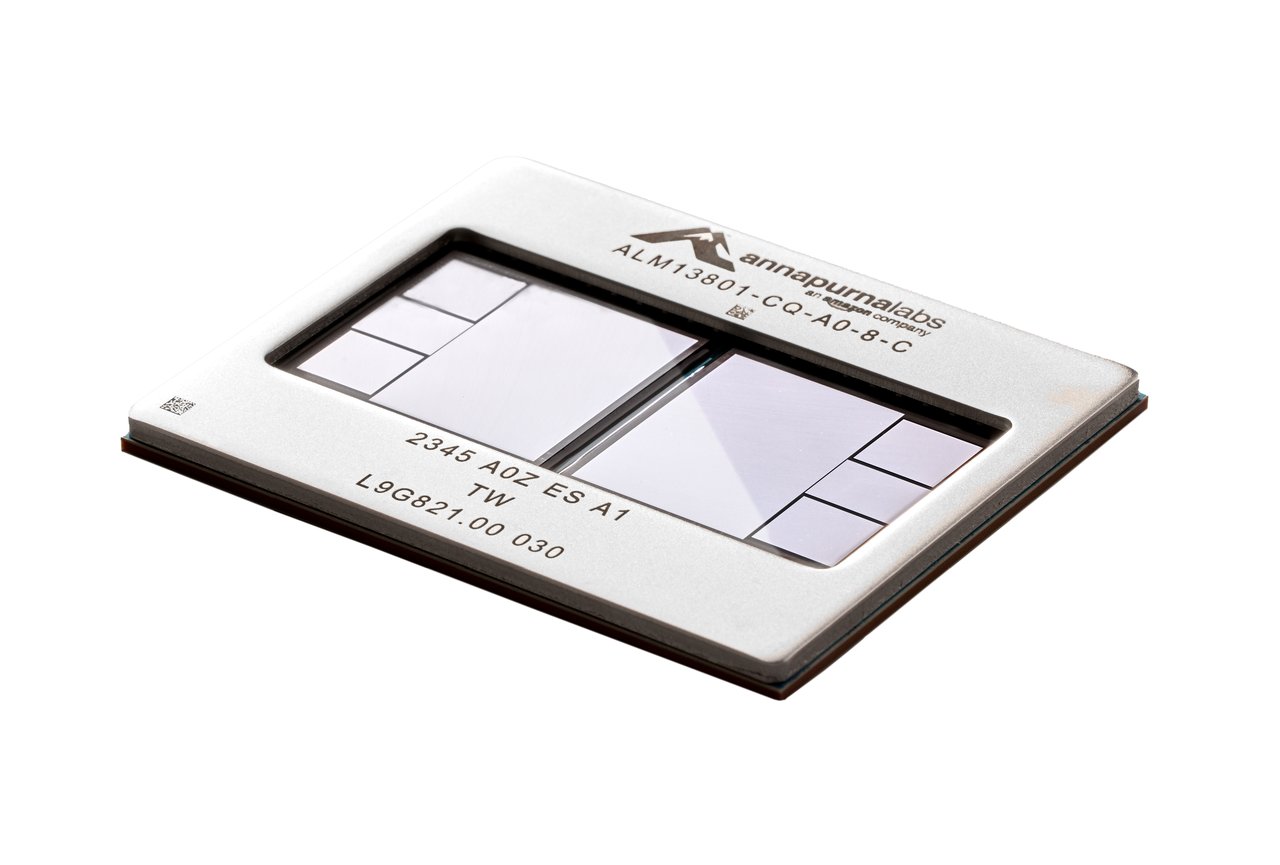

Trainium3: Smaller Than a Credit Card, Twice as Powerful

The latest generation — Trainium3 — is AWS's first chip built on a 3-nanometer process (the same cutting-edge manufacturing used for the newest iPhone chips). It's fabricated by TSMC, the world leader in advanced chipmaking.

Here's what it delivers compared to the previous generation:

• 2x compute performance, at 2.52 petaflops (quadrillions of calculations per second)

• 1.5x more memory — 144 GB of high-bandwidth memory per chip

• 4x better energy efficiency — same work, a quarter of the electricity

• 5x more AI output per megawatt — critical as energy costs soar

• 30–40% cheaper than equivalent Nvidia GPU instances on AWS
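To make those multipliers concrete, here is a rough back-of-the-envelope sketch in Python. It only reworks the figures quoted above. The "implied previous generation" values are simple division, not specs reported in the tour, and the per-rack total assumes the 144-chip UltraServers seen in the lab are filled with Trainium3 chips, which the article does not confirm. The $100 baseline bill is a made-up number for illustration.

```python
# Figures quoted in the article for Trainium3.
TRN3_PETAFLOPS = 2.52      # compute per chip
TRN3_MEMORY_GB = 144       # high-bandwidth memory per chip
COMPUTE_GAIN = 2.0         # "2x compute performance"
MEMORY_GAIN = 1.5          # "1.5x more memory"
EFFICIENCY_GAIN = 4.0      # "4x better energy efficiency"
SAVINGS_RANGE = (0.30, 0.40)  # "30-40% cheaper" than Nvidia GPU instances

# Assumption: the 144-chip UltraServers from the tour hold Trainium3 chips.
CHIPS_PER_ULTRASERVER = 144


def implied_previous(value: float, gain: float) -> float:
    """Back out the previous generation's figure from a quoted multiplier."""
    return value / gain


def discounted_cost(baseline: float, savings: float) -> float:
    """Cost after applying a fractional saving (0.30 means 30% cheaper)."""
    return baseline * (1.0 - savings)


prev_petaflops = implied_previous(TRN3_PETAFLOPS, COMPUTE_GAIN)
prev_memory_gb = implied_previous(TRN3_MEMORY_GB, MEMORY_GAIN)
energy_fraction = 1.0 / EFFICIENCY_GAIN  # share of electricity for the same work
rack_petaflops = CHIPS_PER_ULTRASERVER * TRN3_PETAFLOPS

# Hypothetical $100 GPU bill, used only to illustrate the savings range.
baseline_bill = 100.0
cheapest = discounted_cost(baseline_bill, SAVINGS_RANGE[1])
priciest = discounted_cost(baseline_bill, SAVINGS_RANGE[0])

print(f"Implied previous-gen compute: {prev_petaflops:.2f} petaflops")
print(f"Implied previous-gen memory:  {prev_memory_gb:.0f} GB")
print(f"Electricity for same work:    {energy_fraction:.0%}")
print(f"Per-UltraServer compute:      {rack_petaflops:.2f} petaflops")
print(f"A ${baseline_bill:.0f} bill becomes ${cheapest:.0f} to ${priciest:.0f}")
```

Under these assumptions, a single fully loaded UltraServer would offer a few hundred petaflops of peak compute, and a workload that cost $100 on comparable Nvidia instances would land somewhere between $60 and $70.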

A Trainium4 is already in development, promising 6x the performance of Trainium3. Amazon's chip team has been accelerating: the first generation took 18 months to develop, the second took just 9.

Why This Matters If You Use AI

You don't need to buy a Trainium chip — Amazon doesn't sell them. They only exist inside AWS data centers, powering services you already use. But here's why this story matters to you:

Cheaper AI is coming. Nvidia's near-monopoly has kept AI compute expensive. Every serious alternative — like Trainium — puts downward pressure on prices. If Amazon can deliver 30–40% savings at scale, that cost reduction eventually reaches you through cheaper subscriptions, more generous free tiers, and faster responses.

Reliability improves. When Claude or ChatGPT has a single hardware supplier, one shortage can slow everything down. Multiple chip sources mean more resilient AI services — fewer outages, less throttling during peak hours.

The AI arms race is really a chip race. The company that controls the best chips controls the future of AI. Amazon just showed it's not content to let Nvidia own that future alone.

The $50 Billion Bet

Amazon's deal with OpenAI — $50 billion for exclusive Trainium computing — is one of the largest infrastructure agreements in tech history. It means OpenAI's next models (potentially GPT-6 and beyond) could be trained entirely on Amazon's chips rather than Nvidia's.

Combined with Anthropic already running Claude on over a million Trainium chips, and Apple quietly testing the hardware, Amazon's Annapurna Labs has gone from a little-known chip division to the backbone of the AI industry — all without selling a single chip to the public.
