MiniMind lets you build your own GPT in 2 hours, for under $3

MiniMind is a 42K-star open-source project that trains a working AI chatbot from scratch in 2 hours on a single GPU for under $3 — no cloud subscription needed.

A GitHub project with 42,000 stars shows you don't need millions of dollars or a warehouse of servers to build your own AI. MiniMind trains a fully functional GPT-style chatbot from scratch in about 2 hours, on a single GPU, for under $3.

That's not a typo. While OpenAI reportedly spent over $100 million training GPT-4, MiniMind builds a working language model with 26 million parameters (about 1/7,000th the size of GPT-3) for less than the cost of a coffee.
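The size comparison is simple arithmetic. Here is a quick check, assuming the published 175-billion-parameter figure for the largest GPT-3 model (a number this article does not state):

```python
# MiniMind2-Small's parameter count comes from the article.
minimind_params = 26_000_000
# Assumption: the largest GPT-3 model, at 175 billion parameters.
gpt3_params = 175_000_000_000

ratio = gpt3_params / minimind_params
print(f"GPT-3 is about {ratio:,.0f}x larger than MiniMind2-Small")
```

The result lands a little under 7,000, which is where the "1/7,000th" figure comes from.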

Not a toy — a real training pipeline

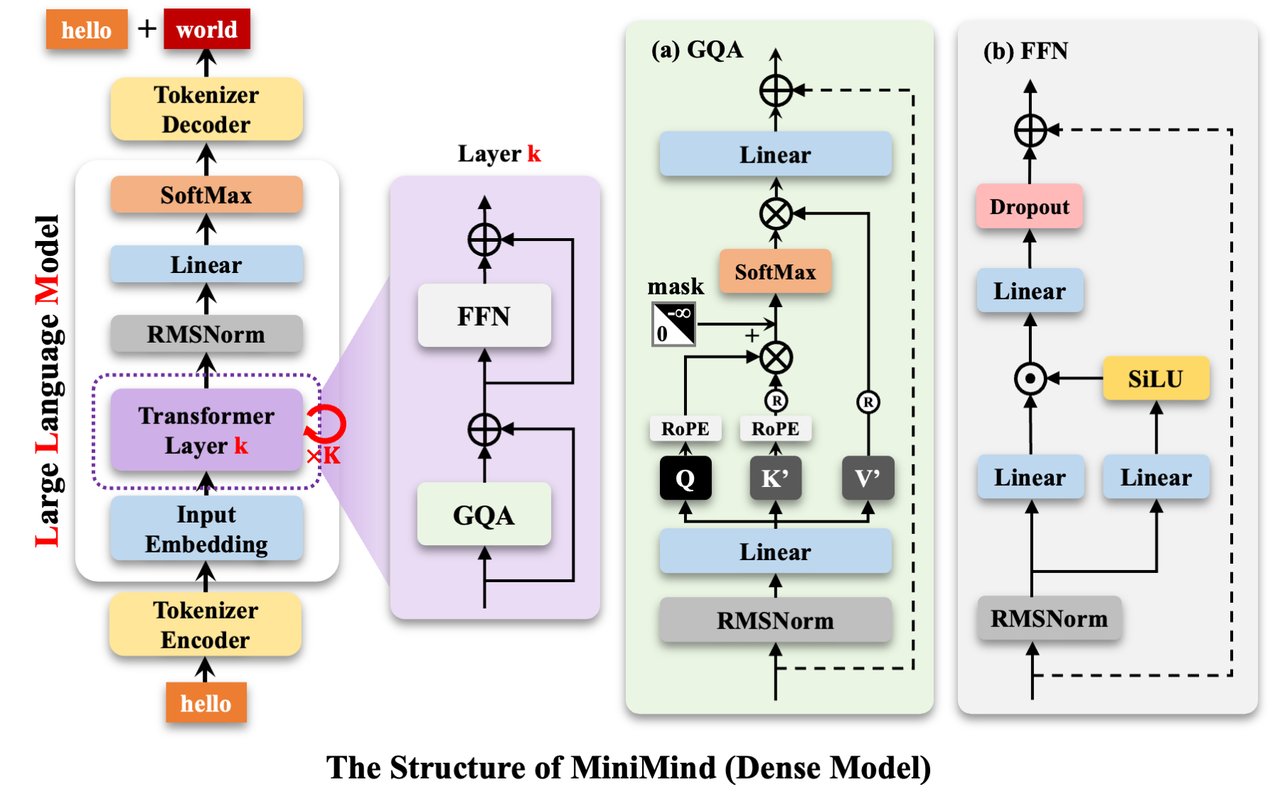

MiniMind isn't a simplified demo. It follows the same overall process that companies like OpenAI use, scaled down to run on hardware you might already own. The full pipeline includes:

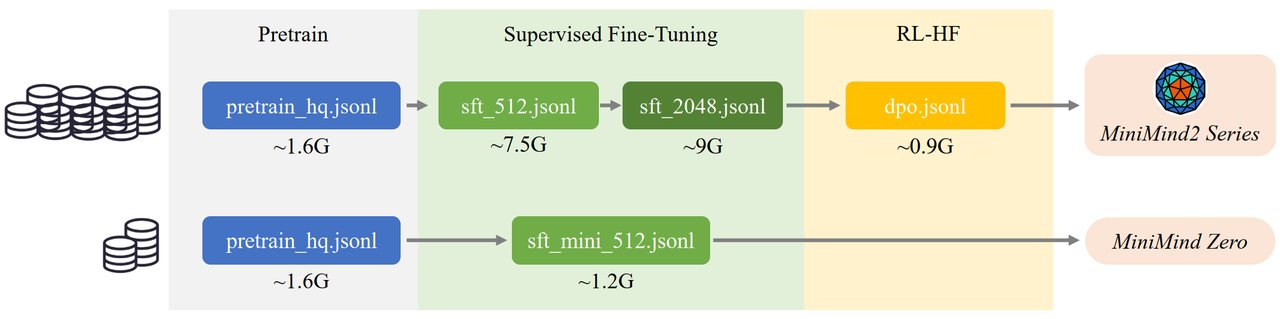

Six training stages and techniques, the same kinds used to build models like GPT and Claude:

• Pre-training — the AI reads millions of text samples and learns language patterns

• Supervised fine-tuning (SFT) — teaching it to have conversations, not just predict words

• LoRA — a shortcut for adding specialized knowledge without retraining everything

• DPO/RLHF — training it to prefer helpful, safe answers (the same technique behind ChatGPT's politeness)

• Distillation — transferring knowledge from a larger AI into your tiny one

• Reasoning — DeepSeek-R1 style chain-of-thought thinking
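To make the LoRA item in the list above concrete: instead of updating a full weight matrix of shape d_out x d_in, LoRA freezes it and trains two small matrices, B (d_out x r) and A (r x d_in), whose product is added to the frozen weights. The sketch below only counts parameters. The layer size of 512 and rank of 8 are illustrative values, not MiniMind's actual configuration.

```python
def full_params(d_in: int, d_out: int) -> int:
    """Weights in one dense layer (bias ignored)."""
    return d_in * d_out


def lora_params(d_in: int, d_out: int, rank: int) -> int:
    """Weights in the two low-rank matrices A (rank x d_in) and B (d_out x rank)."""
    return rank * d_in + d_out * rank


# Illustrative sizes only (assumptions, not taken from the project).
d_in, d_out, rank = 512, 512, 8

frozen = full_params(d_in, d_out)
trainable = lora_params(d_in, d_out, rank)
fraction = trainable / frozen
print(f"{trainable} trainable vs {frozen} frozen weights")
```

With these numbers, the adapter trains only a few percent as many weights as the layer it modifies, which is why LoRA fine-tuning is so much cheaper than full training.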

Every line of code is written in plain PyTorch — no black-box frameworks hiding the logic. The creator designed it so you can read and understand every single step of how an AI learns.
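The core step a language model repeats during pre-training is scoring its guess for the next token. Here is a minimal, dependency-free sketch of that loss (cross-entropy at a single position). It is written in plain Python rather than PyTorch so it runs anywhere, and the four-token vocabulary and score values are made up for illustration. It is not code from the MiniMind repository.

```python
import math


def next_token_loss(logits, target_index):
    """Cross-entropy at one position: -log(softmax(logits)[target_index])."""
    m = max(logits)  # subtract the max for numerical stability
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[target_index]


# Toy vocabulary of 4 tokens; the correct next token is index 2.
uniform = next_token_loss([0.0, 0.0, 0.0, 0.0], target_index=2)
confident = next_token_loss([0.0, 0.0, 5.0, 0.0], target_index=2)

print(f"untrained guess: {uniform:.3f}")
print(f"confident guess: {confident:.3f}")
```

An untrained model that spreads its probability evenly pays a loss of log(4). Training pushes the score of the correct token up, which drives the loss toward zero.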

Three model sizes to choose from

MiniMind offers three variants depending on how much time and hardware you have:

MiniMind2-Small — 26M parameters, 0.5 GB memory, trains in ~2 hours for $0.40

MiniMind2 — 104M parameters, 1.0 GB memory, full training in ~12 hours for $23

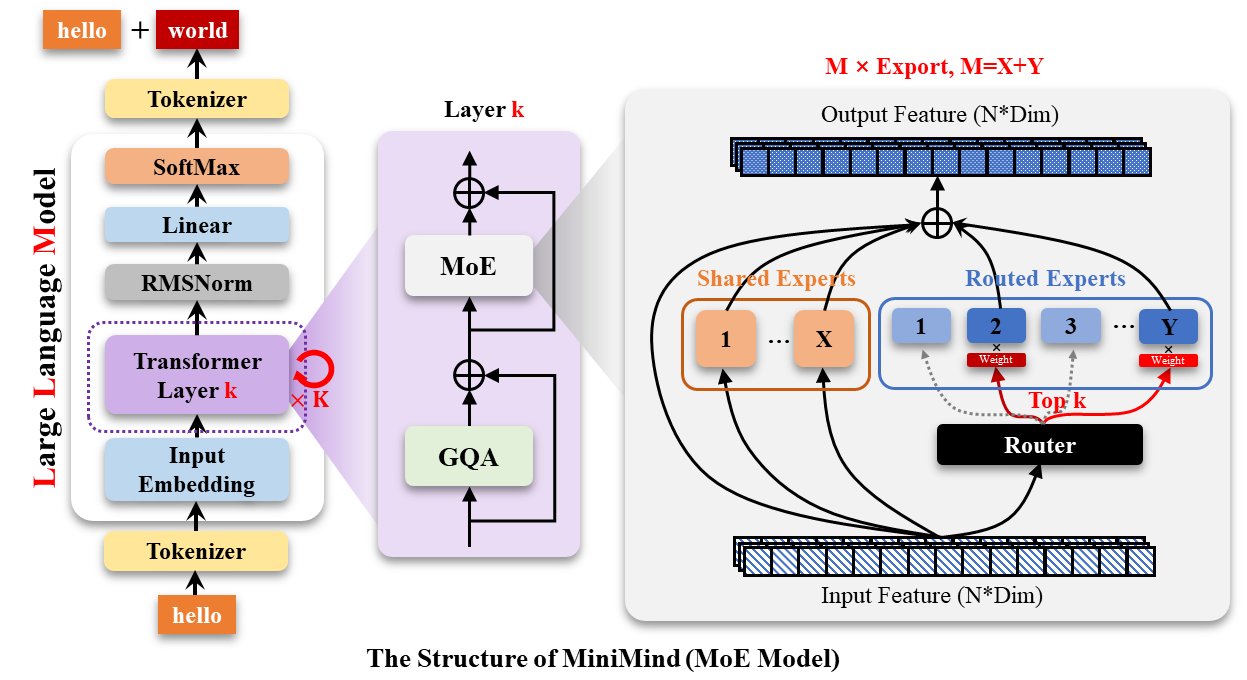

MiniMind2-MoE — 145M parameters with Mixture of Experts (a technique where the AI activates only relevant "expert" sub-networks for each question)

Even the largest variant needs only about 1 GB of GPU memory to run, so a gaming laptop can handle inference.
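The Mixture of Experts idea behind MiniMind2-MoE can be sketched in a few lines: a router scores every expert, only the top few are run, and their outputs are blended by the router's weights. The experts here are toy functions and the router scores are hard-coded; both are assumptions for illustration and do not reflect MiniMind's actual router.

```python
import math


def softmax(scores):
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(router_scores, k=2):
    """Pick the k highest-scoring experts and renormalize their weights to sum to 1."""
    probs = softmax(router_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return {i: probs[i] / norm for i in top}


def moe_forward(x, experts, router_scores, k=2):
    """Run only the selected experts and return their weighted sum."""
    weights = route_top_k(router_scores, k)
    return sum(w * experts[i](x) for i, w in weights.items())


# Four toy "experts"; a real model would use small neural networks here.
experts = [lambda x: x + 1, lambda x: x * 2, lambda x: -x, lambda x: x ** 2]
router_scores = [0.1, 2.0, -1.0, 2.0]  # hypothetical router output for one token

weights = route_top_k(router_scores, k=2)
output = moe_forward(3.0, experts, router_scores, k=2)
print(weights, output)
```

Only two of the four experts run for this input, which is how an MoE model can hold more parameters than it uses on any single token.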

Run it right now

If you have an NVIDIA GPU (even a few years old), you can start training your own AI today:

# Clone the project and install its dependencies

git clone https://github.com/jingyaogong/minimind.git

cd minimind

pip install -r requirements.txt

# Or skip training and use the pre-built model instantly

ollama run jingyaogong/minimind2

The ollama command lets you chat with a pre-trained MiniMind in your terminal immediately, with no training required. If you want to train your own, the project includes all the datasets you need (totaling about 20 GB) with clear instructions for each step.

MiniMind also comes with a web interface you can launch with one command:

streamlit run scripts/web_demo.py

Who this is actually for

Students and career-switchers: If you're learning AI, this is one of the clearest hands-on tutorials available. You'll understand how ChatGPT works at a mechanical level, not from a blog post, but by building a small version yourself.

Developers: Need a custom chatbot for a specific task? MiniMind's LoRA fine-tuning lets you specialize the model on your own data without the $23 full training cost. The included OpenAI-compatible API server means you can plug it into existing apps.

Curious non-coders: Even if you never run the code, MiniMind's architecture diagrams and documentation explain why AI works — not just that it works. The project's philosophy is "the simplest path is the greatest."
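For developers, "OpenAI-compatible" means the server accepts the same chat-completions request shape that OpenAI's API uses. Here is a minimal client sketch using only the standard library. The base URL, port, and model name are placeholders (assumptions), so check the project's documentation for the real values before running it.

```python
import json
import urllib.request

# Assumptions: placeholder address and model name, not taken from the project.
BASE_URL = "http://localhost:8000/v1"
MODEL_NAME = "minimind"


def build_chat_request(prompt: str) -> urllib.request.Request:
    """Build a POST request in the OpenAI chat-completions format."""
    payload = {
        "model": MODEL_NAME,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(prompt: str) -> str:
    """Send the prompt and return the assistant's reply text."""
    with urllib.request.urlopen(build_chat_request(prompt)) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]

# Usage, once a compatible server is running at BASE_URL:
#   print(chat("Explain what a tokenizer does in one sentence."))
```

Because the request shape matches OpenAI's, existing tools that let you override the API base URL should be able to talk to the local model with little or no code change.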

Why 42,000 developers starred this

The AI industry has a transparency problem. Frontier models like GPT-5 and Claude Opus reportedly cost hundreds of millions of dollars to train, and their inner workings are locked behind corporate walls. MiniMind takes the opposite approach: every dataset, every training script, and every model weight is open under the Apache 2.0 license.

It won't write your novel or pass the bar exam. But it proves that the core technology behind the most powerful AIs in the world can be understood, reproduced, and run by a single person on a single machine. That's why it's trending.
