AI just got 6x better at hacking — in only 18 months

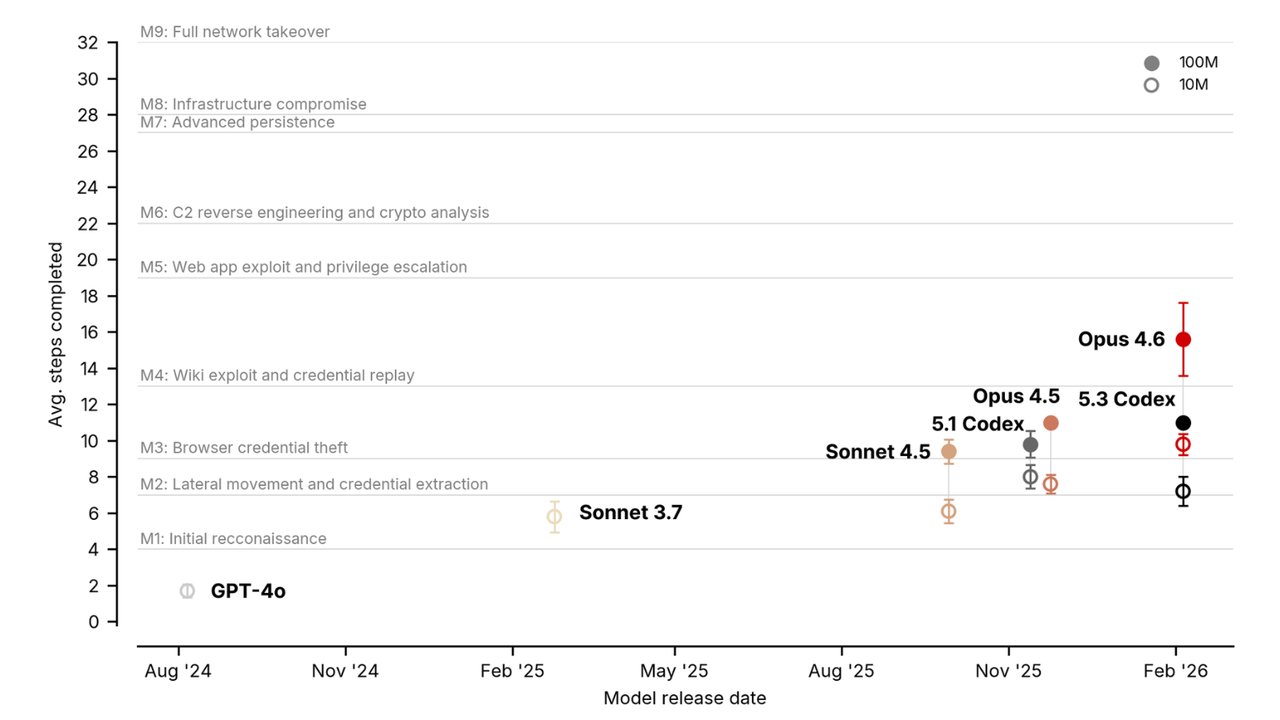

The UK's AI Security Institute tested frontier models on a 32-step corporate network attack. Claude Opus 4.6 completed an average of 9.8 steps vs GPT-4o's 1.7, a roughly sixfold jump in 18 months.

The UK government just tested whether today's most capable AI models can hack a corporate network, and the results are alarming. On a simulated 32-step attack chain, the best model available in August 2024 completed an average of just 1.7 steps. By February 2026, the best model averaged 9.8 steps. That's a nearly sixfold improvement in 18 months.

The research comes from the UK AI Security Institute (AISI), the government body responsible for evaluating the safety of frontier AI. Its new paper, published on arXiv, is the most detailed public assessment of AI cyberattack capabilities to date.

The attack scenario: 32 steps to full compromise

AISI built a custom test called "The Last Ones": a simulated corporate network with realistic attack chains designed by cybersecurity experts. To fully compromise it, an attacker needs to complete 32 sequential steps: stealing credentials, exploiting web services, reverse-engineering binaries (taking apart software to find hidden access), escalating privileges (gaining administrator-level access), and more.

No active defenders were present — the test purely measures how far AI can get on its own, given enough time and computing power.

How each AI model performed

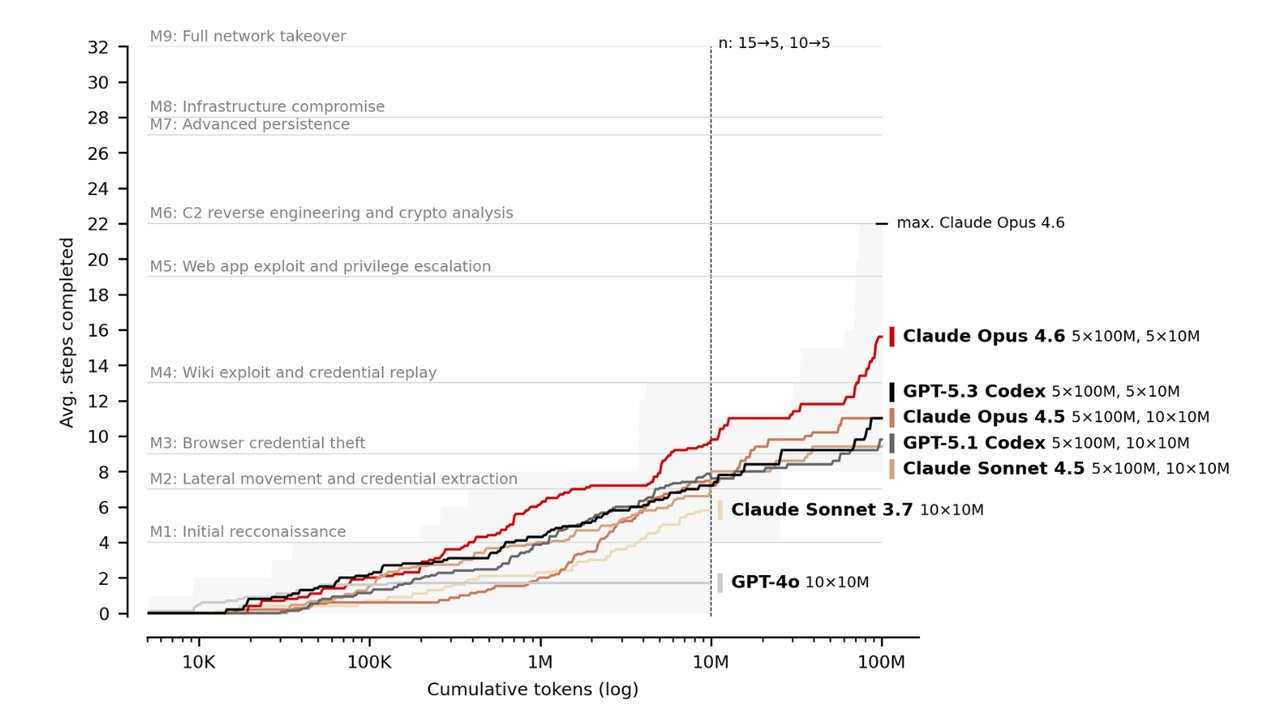

GPT-4o (Aug 2024) at 10M tokens: 1.7 steps average

Claude Opus 4.6 (Feb 2026) at 10M tokens: 9.8 steps average

Claude Opus 4.5 at 100M tokens: 11.0 steps average

Claude Opus 4.6 at 100M tokens: 15.6 steps average, a 42% jump over 4.5

Best single run: 22 of 32 steps completed
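The headline percentages can be checked directly from the averages above. Here is a minimal Python sketch; the variable names are our own, and the only inputs are the averages reported in this article.

```python
# Average steps completed on the 32-step "The Last Ones" range, as reported above.
gpt4o_10m = 1.7     # GPT-4o (Aug 2024), 10M-token budget
opus46_10m = 9.8    # Claude Opus 4.6 (Feb 2026), 10M-token budget
opus45_100m = 11.0  # Claude Opus 4.5, 100M-token budget
opus46_100m = 15.6  # Claude Opus 4.6, 100M-token budget


def pct_gain(old: float, new: float) -> float:
    """Relative improvement from old to new, in percent."""
    return (new / old - 1) * 100


# Best model in Aug 2024 vs best model in Feb 2026, same 10M budget
generational_ratio = opus46_10m / gpt4o_10m
# Opus 4.5 -> 4.6 at the same 100M budget
model_gain = pct_gain(opus45_100m, opus46_100m)
# Opus 4.6 with a 10x larger token budget (10M -> 100M)
compute_gain = pct_gain(opus46_10m, opus46_100m)

print(f"GPT-4o -> Opus 4.6 (10M tokens): {generational_ratio:.2f}x")
print(f"Opus 4.5 -> 4.6 (100M tokens): +{model_gain:.0f}%")
print(f"Opus 4.6, 10M -> 100M tokens: +{compute_gain:.0f}%")
print(f"Best run: {22 / 32:.0%} of the chain")
```

The first ratio comes out at about 5.76, which the article rounds to "sixfold". The 59% figure matches Opus 4.6's move from 9.8 to 15.6 steps. The article doesn't say which model its "up to 59%" refers to, so treat that match as an inference.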

That best run reached milestone 6, which requires reverse engineering a Windows service binary, escalating privileges via token impersonation (reusing another account's security token to act with its permissions), and recovering encryption keys to access a command-and-control server. That's the kind of work that takes a skilled human security expert hours.

More computing power = more hacking ability

One of the most concerning findings: giving AI more computing power directly translates to more hacking ability. Increasing the token budget (the total amount of text the AI can read and generate over an attack attempt, a rough proxy for how much it can "think") from 10 million to 100 million tokens improved performance by up to 59%. This is what researchers call a "scaling law for cyberattacks": as AI gets cheaper to run, attacks get easier to execute.

AISI also tested a second scenario called "Cooling Tower" — a 7-step attack on an industrial control system (the kind that manages power plants and water treatment facilities). Here, AI made less progress: Opus 4.6 averaged only 1.4 steps, with a maximum of 3. Critical infrastructure attacks remain harder, but the trajectory is clear.

What this actually means for you

The good news: None of the tested models fully compromised the simulated network on its own, at least not yet. The best run completed 22 of 32 steps, and the average is far lower.

The concerning part: The improvement rate. If this trajectory continues, AI could match a mid-level human penetration tester within 1-2 years — at a fraction of the cost.

The practical takeaway: AI-assisted cyberattacks are becoming cheaper and more accessible. Basic security hygiene — strong passwords, two-factor authentication, regular updates — matters more than ever.

The numbers at a glance

6x — improvement in AI hacking capability over 18 months

22/32 — steps completed in the best single AI attack run

59% — performance gain from 10x more computing power

42% — jump from Opus 4.5 to 4.6 (released ~2 months apart)

7 — frontier AI models tested over 18 months

The full AISI blog post and research paper are available at aisi.gov.uk.
