Spotify just let artists block AI music from hijacking their profiles

Spotify's new Artist Profile Protection lets musicians approve or reject releases before they appear. It's the platform's first real weapon against AI-generated music fraud.

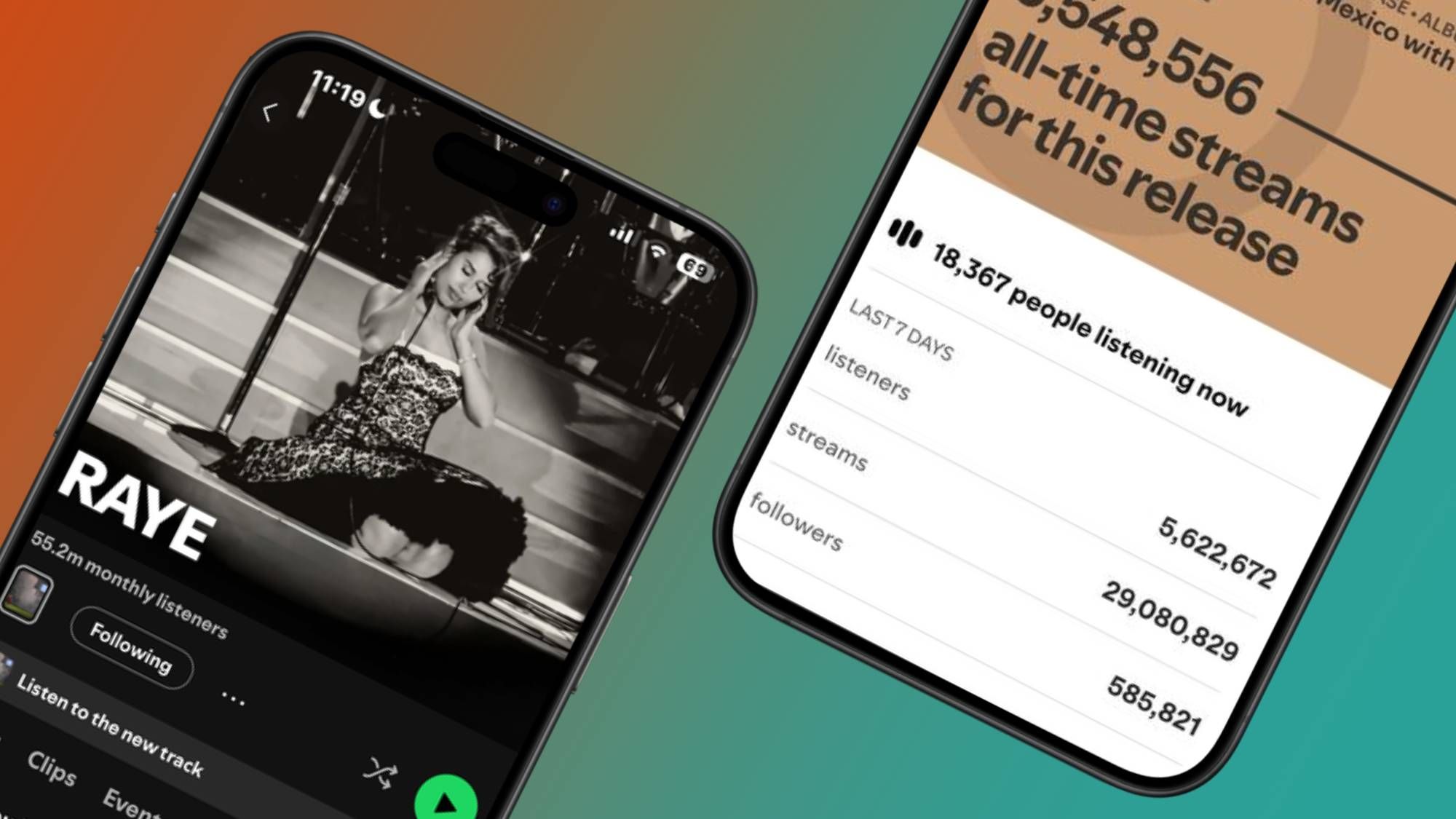

Someone uploads an AI-generated track to Spotify. They tag it with your name. Now it shows up on your artist page, messes with your streaming stats, and confuses your fans. Until this week, artists had no way to stop it.

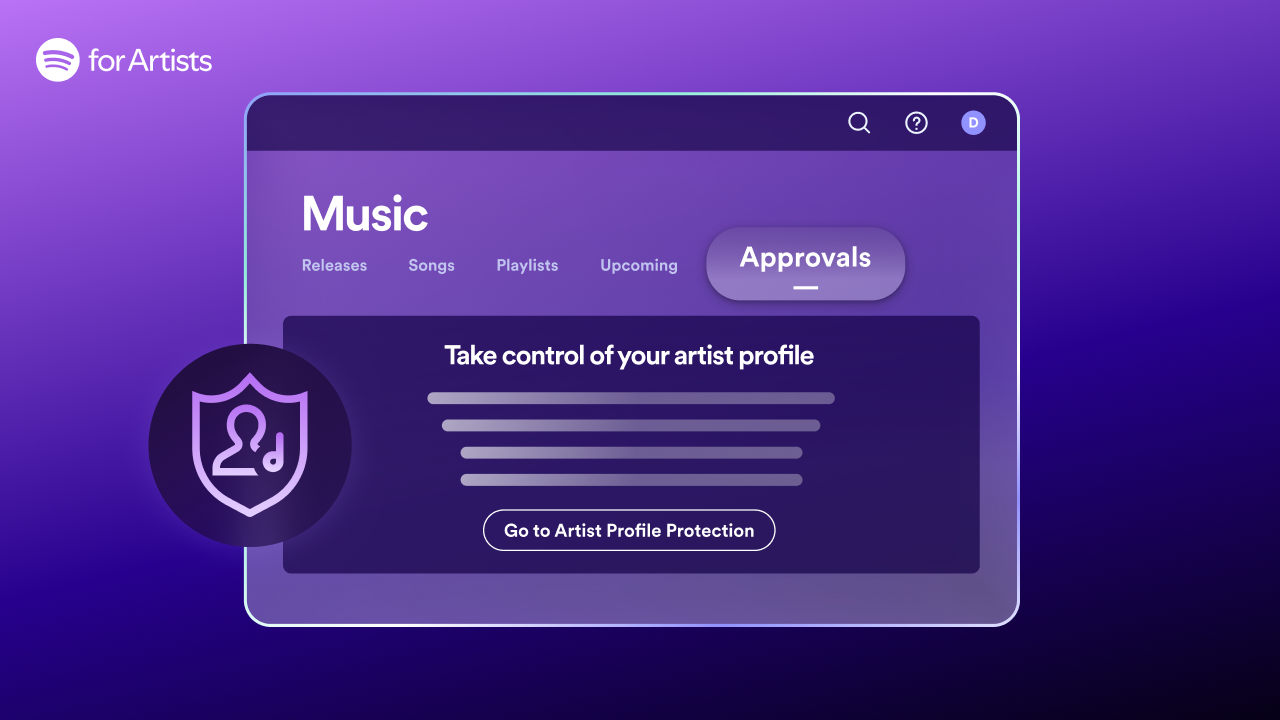

Spotify just launched Artist Profile Protection — a new beta feature that gives musicians a simple power they've never had: the ability to approve or reject any release before it goes live on their profile.

How the approval system works

When an artist turns on Profile Protection in their Spotify for Artists dashboard, three things change:

1. Email alerts for every new release

Whenever someone delivers music to Spotify with your name attached, you get an email notification before the release date.

2. Approve or decline with one click

If you approve, the track goes live normally — it counts toward your stats, shows up in Release Radar (the personalized new music feed), and gets recommended to your listeners. If you decline, it won't appear on your profile at all.

3. Artist Key for trusted partners

You get a unique code — like a digital passkey — that you share with your record label or distributor. Any release sent with your Artist Key skips the approval step and goes straight to your profile. No bottleneck for legitimate releases.
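The three steps above amount to a small decision rule. Here is a minimal sketch of it in Python, based only on the behavior described in this article. Every name in it (`Release`, `ArtistProfile`, `Decision`, `appears_on_profile`) is hypothetical and does not come from any Spotify API, and the plain string comparison used to check the key is an assumption made for illustration.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Decision(Enum):
    """The artist's response to the email alert (hypothetical names)."""
    PENDING = "pending"    # no response yet
    APPROVED = "approved"
    DECLINED = "declined"


@dataclass
class Release:
    title: str
    artist_name: str
    supplied_key: Optional[str] = None  # Artist Key sent with the delivery, if any


@dataclass
class ArtistProfile:
    name: str
    protection_enabled: bool
    artist_key: str  # shared only with trusted labels or distributors


def appears_on_profile(release: Release,
                       profile: ArtistProfile,
                       decision: Decision = Decision.PENDING) -> bool:
    """Return True if the release would show up on the artist's profile."""
    # Assumption: with protection off, any release tagged with the name lands.
    if not profile.protection_enabled:
        return True
    # A matching Artist Key skips the approval step entirely.
    if release.supplied_key is not None and release.supplied_key == profile.artist_key:
        return True
    # Otherwise only an explicit approval lets it through; a pending
    # (forgotten) or declined release stays off the profile.
    return decision is Decision.APPROVED


profile = ArtistProfile("Example Artist", protection_enabled=True, artist_key="KEY-123")
from_label = Release("New Single", "Example Artist", supplied_key="KEY-123")
from_stranger = Release("Mystery Track", "Example Artist")

print(appears_on_profile(from_label, profile))                        # True
print(appears_on_profile(from_stranger, profile))                     # False
print(appears_on_profile(from_stranger, profile, Decision.APPROVED))  # True
```

Note that the sketch treats an unanswered alert the same as a decline. That matches the caveat that a legitimate release you forget to approve will not go live on your profile.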

The AI music fraud problem is getting worse

This feature exists because AI-generated music has made an existing problem dramatically worse. Even before AI tools could generate full songs in seconds, artists dealt with metadata mix-ups — tracks from unknown musicians accidentally landing on the wrong artist's page because they share a name.

Now, bad actors are deliberately tagging AI-generated tracks with popular artist names to steal streams and royalty payments. Earlier this month, a North Carolina man pleaded guilty to making $8 million using AI-generated music and bot-driven fake streams.

Spotify has called protecting artist identity a top priority for 2026.

What artists should know before turning it on

The feature is currently in limited beta, available through Spotify for Artists settings on desktop and mobile web. Team Admins and Editors can manage the settings.

There are two catches. First, you have to actively manage it: if you forget to approve a legitimate release, it won't go live on your profile. Second, Spotify's protection only covers Spotify, so declined tracks may still appear on other streaming platforms.

Who this is built for: artists who have experienced repeated incorrect releases on their profiles, artists with common names that get confused with others, and anyone who wants tighter control over their public catalog.

If you're a musician, here's what to do

Log into Spotify for Artists on desktop or mobile web. Look for Artist Profile Protection in your settings. Turn it on, and share your Artist Key with your distributor or label so legitimate releases aren't delayed.

If you're a listener and notice tracks on your favorite artist's page that seem off — low quality, generic titles, or clearly AI-generated — this is the feature that should eventually clean that up.
