Superhuman's AI cloned real writers — now it's a lawsuit

Superhuman cloned real journalists' identities for an AI feature without consent. A class-action lawsuit and a live podcast confrontation followed.

An AI feature pretended to be real journalists — they found out

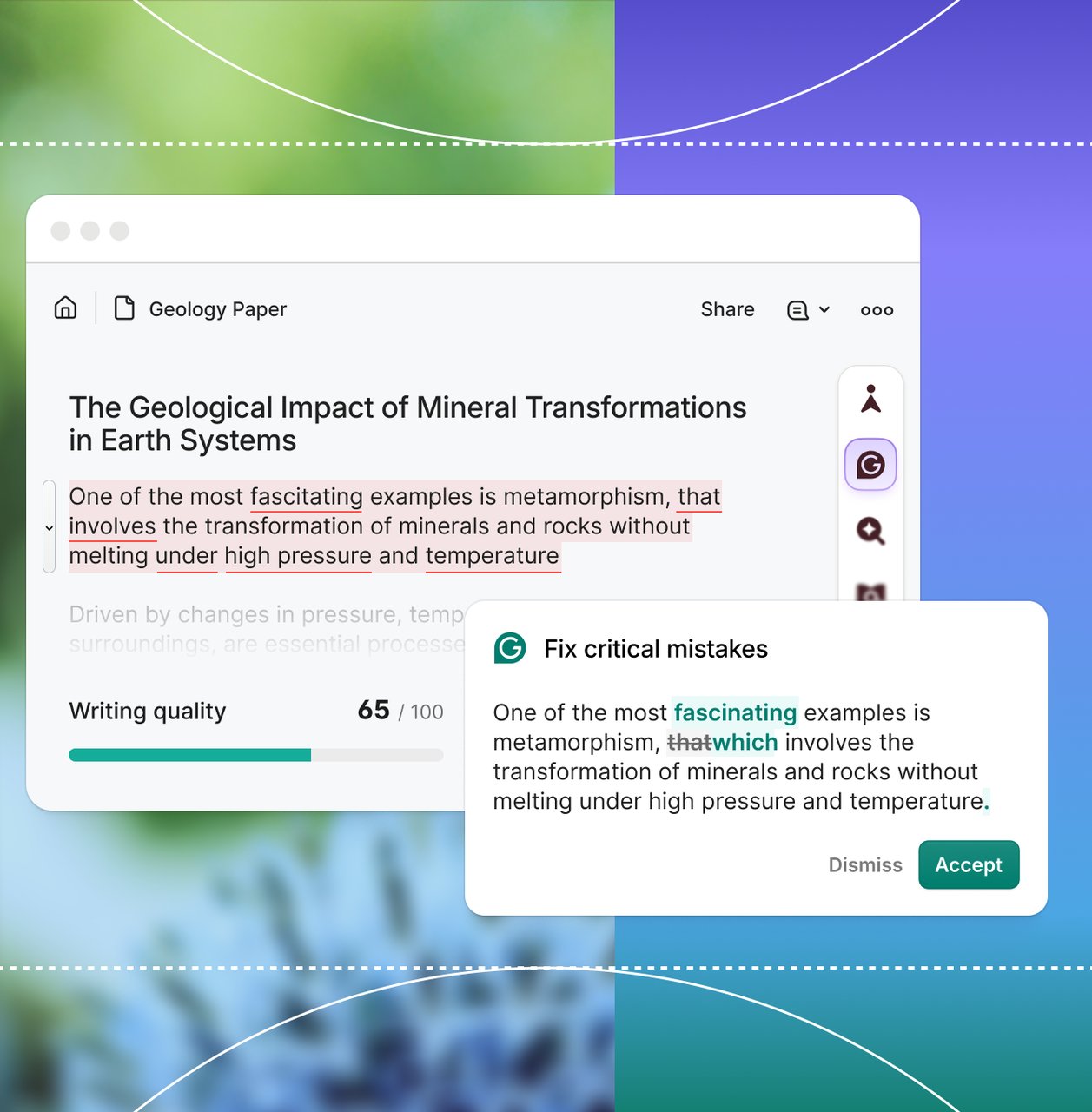

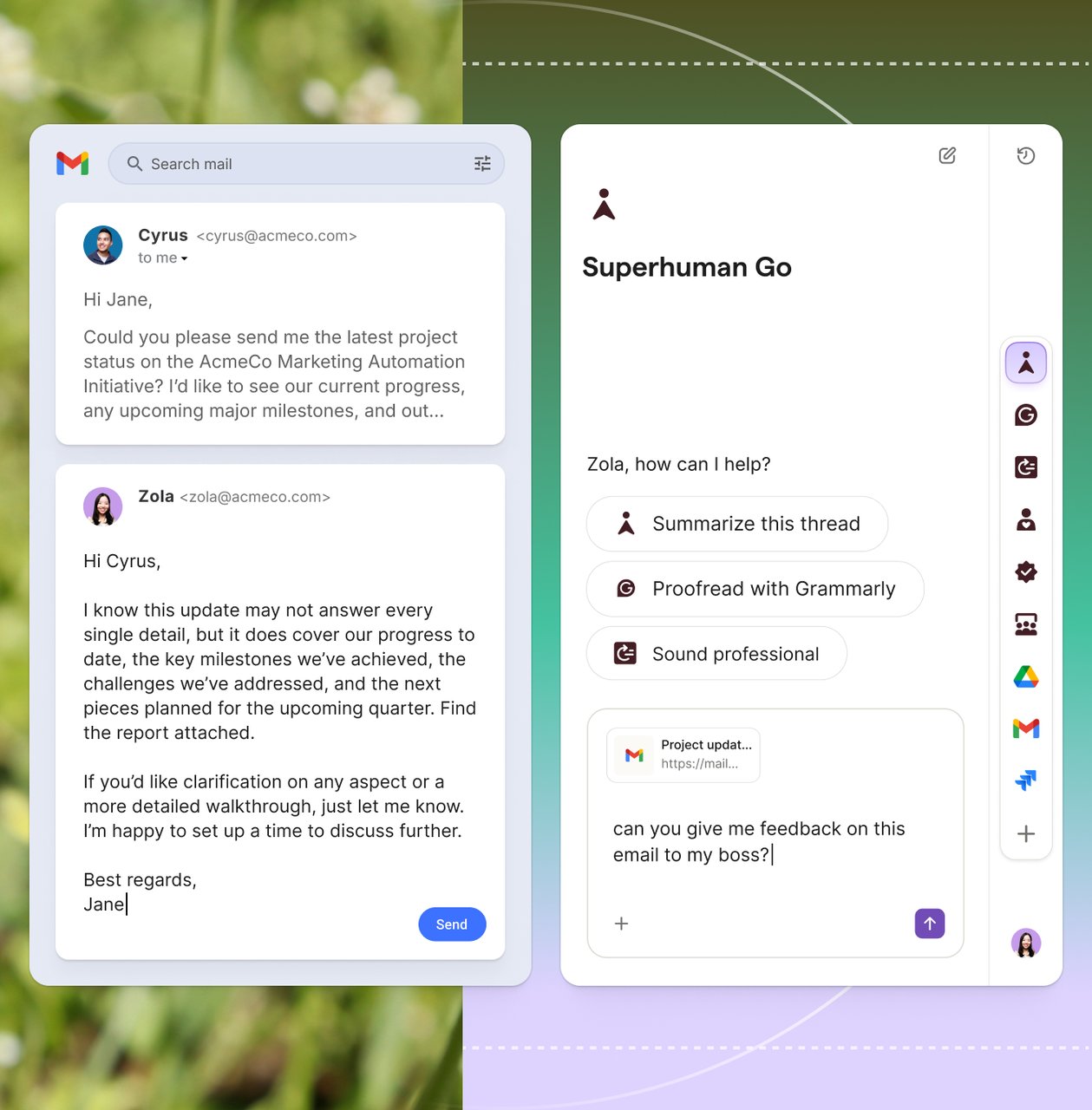

Superhuman, the productivity suite that now includes Grammarly and Coda, quietly launched a feature in August 2025 called "Expert Review." It gave users writing feedback from what appeared to be AI versions of real professional writers, complete with their names and checkmarks that implied official endorsement.

There was one problem: nobody asked those writers for permission.

Among the people cloned: Julia Angwin, an award-winning investigative journalist, and Nilay Patel, editor-in-chief of The Verge. When they discovered their names and identities were being used to sell an AI product, the fallout was immediate.

The podcast confrontation that went viral

Nilay Patel confronted Superhuman CEO Shishir Mehrotra directly on The Verge's Decoder podcast. The exchange was blunt:

Patel: "You do not have our permission to use our names to do this. You had little check marks next to the name that indicated it was somehow official."

Mehrotra: "When somebody uses your content, should they attribute you? Of course. The idea that the feature is impersonation is quite a big stretch."

Mehrotra argued the company was simply attributing the writers whose work trained the AI — not impersonating them. But critics pointed out that putting a person's name and a verification checkmark next to AI-generated feedback is exactly what impersonation looks like to the average user.

From backlash to class-action lawsuit

The timeline tells the story:

- August 2025: Expert Review launches quietly inside Grammarly

- Early 2026: Writers discover their names are being used. Outrage spreads

- Early March 2026: Superhuman discontinues the feature

- March 2026: Julia Angwin files a class-action lawsuit against the company

- March 24, 2026: Mehrotra confronted on the Decoder podcast

The lawsuit argues that using real people's names and likenesses to market a commercial AI product — without their knowledge or consent — violates their rights.

Why this matters beyond one company

This case cuts to the heart of a question every AI company faces: when an AI system learns from someone's work, what does the company behind it owe that person?

Mehrotra's defense — that attribution isn't impersonation — is the same argument many AI companies make. They train on public content, then claim they're "crediting" the original creators. But there's a difference between citing a source in a footnote and putting someone's name next to AI output with a checkmark that implies they endorsed it.

The precedent at stake

If the class-action succeeds, it could establish that AI companies can't use real people's identities to market AI features — even if the AI was trained on their public work. That would force a fundamental rethink of how AI products are branded and sold.

If it fails, the floodgates could open: any AI company could, in theory, train a model on an expert's public writing, put "feedback from [Famous Expert]" on its product, and claim it's just attribution.

What to watch for

The lawsuit is still in its early stages, but three things are worth tracking:

1. The "checkmark" question: Did the verification marks create a false impression of endorsement? This could become a key legal test for AI identity use.

2. Other AI companies watching: Any company that trains on public content has reason to follow this case. The outcome could reshape how AI products reference their training sources.

3. Writer and creator rights: This joins a growing wave of lawsuits, from publishers suing OpenAI to artists challenging image generators, testing whether current laws protect people when AI systems use their work or identities.
