Every top AI just failed a test any human can pass

ARC-AGI-3 gave AI video-game-like puzzles humans find easy. ChatGPT-level AIs scored under 1%. A $2M prize challenges anyone to close the gap.

Under 1% — On Games Humans Find Fun

The ARC Prize Foundation — a nonprofit backed by leaders from OpenAI, Google, xAI, and Anthropic — just launched ARC-AGI-3, and the results are humbling for the AI industry.

They gave the world's most advanced AIs a set of interactive, video-game-like challenges. No written instructions. No stated goals. Just a screen, some controls, and a puzzle to figure out — the same way you'd pick up a new game on your phone.

Humans completed these challenges with ease. Many found them genuinely fun. The best AI agent in the world? 12.58% efficiency. The biggest names in AI — ChatGPT, Claude, Gemini — scored under 1%.

It Looks Like a Video Game — That's the Point

Previous AI tests were like multiple-choice exams — static puzzles with clear right answers. ARC-AGI-3 is completely different. It's the first interactive AI benchmark (a standardized test that measures how smart AI really is), and it works like a video game.

Each environment drops the AI into an unfamiliar world with no instructions, no rules, and no stated objectives. The AI has to:

Think of it this way: when you pick up a new game, you tap around, notice patterns, and quickly figure out what you're supposed to do. That's exactly what ARC-AGI-3 tests — and it's exactly what today's AI can't do.

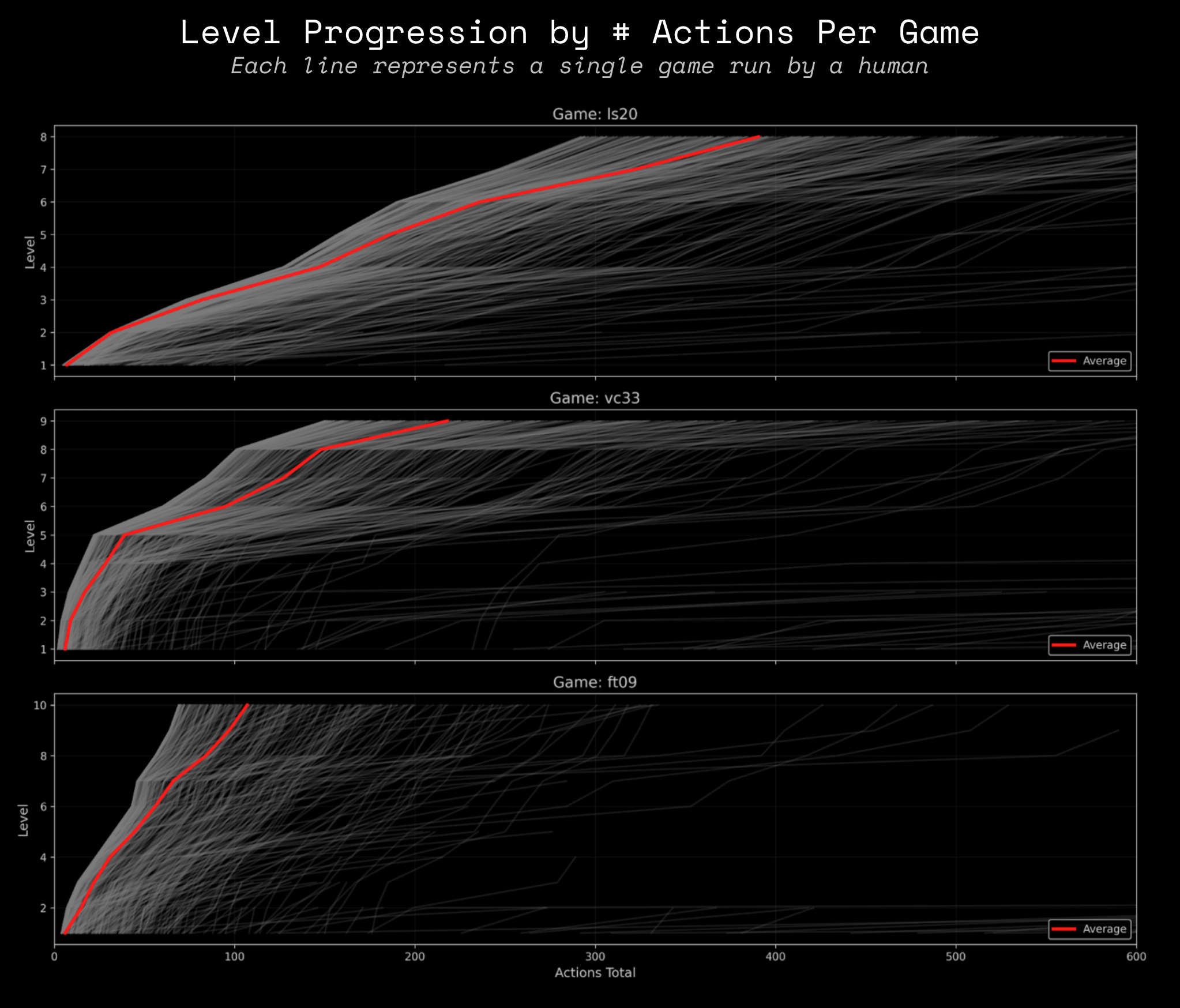

The full benchmark spans 1,000+ levels across 150+ environments, covering everything from map navigation to pattern matching to volume adjustment puzzles. During a preview phase, over 1,200 human players completed 3,900+ games — most while having a good time.

12.58% vs 100% — The Results Are In

The winner was an agent called StochasticGoose. It used CNN-based reinforcement learning — essentially training the AI to predict what happens next based on what it sees on screen, similar to how a self-driving car learns to read road signs. It managed 12.58% efficiency and completed 18 levels.

The runner-up, Blind Squirrel, took a different approach — mapping every possible state of the game into a decision tree. It scored 6.71% and completed 13 levels.

But here's the real headline: the large language models behind ChatGPT, Claude, and Gemini — the AIs millions of people use daily — scored under 1%. These are the same AIs that can write essays, solve math problems, and generate code. Put them in an environment where they need to learn from experience? They're essentially lost.

A $2 Million Open Challenge

The ARC Prize 2026 competition is now open with $2 million in total prizes across three tracks:

There's one big catch: all winning solutions must be open-sourced under permissive licenses. And during evaluation on Kaggle (the world's largest data science competition platform), no internet access is allowed — teams can't call ChatGPT, Claude, or any cloud AI. The AI must run entirely on local hardware.

Key dates:

- June 30 & September 30: Milestone checkpoint submissions

- November 2: Final submission deadline

- December 4: Results announced

Play the Games Yourself

You don't need to be a researcher or engineer to try this. ARC Prize has a public game set where anyone can play the ARC-AGI-3 challenges directly in their browser. See how you compare to the best AI — spoiler: you'll almost certainly win.

For developers who want to build and test AI agents against the benchmark, the toolkit is open-source and installs in one command:

pip install arckitIt supports multiple AI backends including OpenAI, Anthropic, Google, and open-weight models — though competition submissions must run locally without any cloud APIs.

As the ARC Prize Foundation puts it: "As long as there is a gap between AI and human learning, we do not have AGI." With humans at 100% and the best AI at 12.58%, that gap is still enormous. The $2 million question: can anyone close it by December?

Related Content — Get Started with Easy Claude Code | Free Learning Guides | More AI News

Sources

Stay updated on AI news

Simple explanations of the latest AI developments