You can't prove you're human anymore — your family knows it

A BBC journalist failed to convince her own aunt she wasn't AI. Voice cloning now needs just 3 seconds of audio, and 99.9% of people can't reliably spot a deepfake.

A BBC journalist recently tried to prove to her aunt that she was a real person on a phone call — not an AI clone of her voice. Her aunt wasn't convinced. The aunt had set up codewords with her kids and husband for exactly this situation, but the journalist wasn't in the loop.

This isn't a sci-fi scenario anymore. It's Tuesday. And the numbers behind it are terrifying.

99.9% of People Fail the Deepfake Test

A 2025 study by iProov tested 2,000 people in the US and UK, showing them a mix of real and AI-generated images and videos. The result: only 0.1% (about 2 of the 2,000 participants) correctly identified which were fake across all samples.

That means 99.9% of participants were fooled at least once. And it gets worse: over 60% of participants were confident they could spot deepfakes, regardless of whether their answers were actually correct.

3 Seconds of Your Voice Is All It Takes

Voice cloning (technology that copies someone's voice from a recording) has crossed what researchers call the "indistinguishable threshold" — the point where synthetic audio sounds identical to a real human, complete with natural breathing, pauses, and emotion.

How little it takes to clone your voice:

• 3 seconds of audio creates an 85% accurate voice match

• 60 seconds of audio creates a near-perfect clone

• $1 and 20 minutes — that's what it cost to create the Biden deepfake robocall

• "Free voice cloning software" searches rose 120% in just one year

Researcher Siwei Lyu told Fortune: "The telltale signs that once exposed fake voices have largely vanished." The frontier is now shifting from pre-recorded clips to real-time synthesis — faking voices live during a conversation.

The Scam That Uses Your Own Family Against You

The most devastating application is the "virtual kidnapping" scam. Here's how it works:

1. Scammers scrape your social media for details — vacation photos, pet names, check-in locations

2. They clone a family member's voice from any public video or voicemail

3. They call you pretending to be your child: "Mom, I've been in a wreck"

4. They demand a ransom — typically $2,500 to $15,000

1 in 4 Americans has already received an AI-generated deepfake voice call. Of those who engaged, 77% lost money. Total deepfake fraud losses in the US hit $1.1 billion in 2025, roughly triple the $360 million recorded in 2024.

The Internet Reacted — With Dread

The BBC article went viral on Hacker News with 141 points and 158 comments. The reactions paint a bleak picture:

"LinkedIn is completely destroyed" — users report the platform is overrun with AI bot accounts

"Money will have to be wasted on unnecessary flights" — video calls may no longer be trusted for business

"The majority of my extended family are not smart enough to resist continuous attacks" — one commenter's honest assessment

"Soon only humans won't pass the Turing test" — the darkest joke in the thread

Several commenters reported real incidents: hijacked email accounts used to craft AI-generated donation pleas with personal details, and companies experiencing account takeover attempts via deepfake voice calls.

What You Can Actually Do Right Now

The BBC journalist's aunt had the right idea — just not the right execution. Here's what security experts recommend:

Set up a family codeword. Pick a phrase that only your family knows. Use it to verify identity on any unexpected call asking for money. A deepfake can't guess a word it's never heard.

Hang up and call back. If someone calls claiming to be family in an emergency, hang up and dial their real number directly.

Limit voice samples online. Every video, voicemail, and voice note you post gives cloners more material. Consider who can access your audio.

Never send money under time pressure. The urgency is the scam. Real emergencies can wait 5 minutes for a verification call.
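The four recommendations above can be read as a simple decision checklist. Here is a minimal sketch of that checklist in Python, assuming you track three facts about a suspicious call. The names `CallCheck`, `next_step`, and `MIN_WAIT_MINUTES` are hypothetical and exist only for illustration; the 5-minute wait comes from the advice above.

```python
from dataclasses import dataclass

# Taken from the "5 minutes" in the advice above; adjust to taste.
MIN_WAIT_MINUTES = 5


@dataclass
class CallCheck:
    """What you know so far about an unexpected call asking for money."""
    called_back: bool             # you hung up and reached them on their real number
    codeword_given: bool          # the caller supplied the family codeword
    minutes_since_request: float  # time elapsed since money was first requested


def next_step(check: CallCheck) -> str:
    """Return the next action suggested by the checklist (illustrative only)."""
    if not check.called_back:
        return "hang up and call back on their real number"
    if not check.codeword_given:
        return "ask for the family codeword"
    if check.minutes_since_request < MIN_WAIT_MINUTES:
        return "wait before sending anything"
    return "checks passed: proceed with normal caution"


# Example: an urgent call where nothing has been verified yet.
print(next_step(CallCheck(called_back=False, codeword_given=False,
                          minutes_since_request=1)))
```

The order of the checks is a design choice, not a rule from any expert. Calling back comes first here because it does not depend on the family having agreed on a codeword in advance.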

The Trust Infrastructure Is Crumbling

80% of companies have no established protocols for deepfake attacks. Only 5% of business leaders report having comprehensive prevention in place. And 1 in 5 consumers had never even heard of deepfakes before being tested.

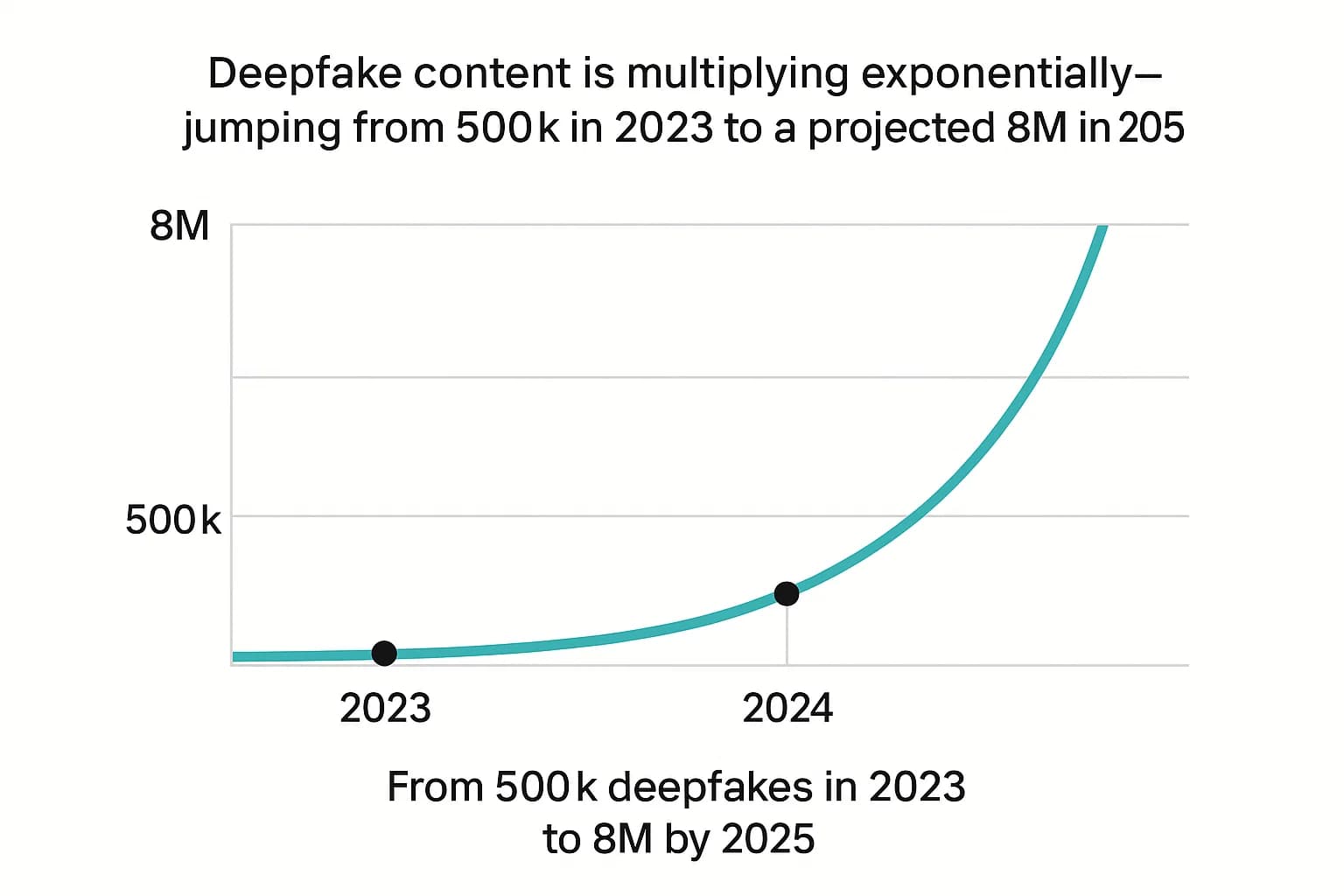

The volume is exploding: from 500,000 deepfake files online in 2023 to an estimated 8 million in 2025, a sixteenfold increase in two years that has been reported as a 900% annual growth rate. Retailers now report receiving over 1,000 AI-generated scam calls daily.
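As a rough check on those two file counts, the growth they imply can be computed directly. This sketch assumes the 2023 and 2025 figures are the endpoints of a two-year span and that growth compounds evenly. Both are assumptions, and the 900% figure may rest on a different baseline or time window. The function name `implied_annual_growth` is illustrative.

```python
def implied_annual_growth(start: float, end: float, years: int) -> float:
    """Compound annual growth rate, in percent, implied by two endpoints."""
    total_multiple = end / start                 # overall growth factor
    annual_factor = total_multiple ** (1 / years)  # even compounding per year
    return (annual_factor - 1) * 100


# Figures quoted above: 500,000 files in 2023, an estimated 8 million in 2025.
multiple = 8_000_000 / 500_000
rate = implied_annual_growth(500_000, 8_000_000, years=2)
print(f"{multiple:.0f}x overall, about {rate:.0f}% per year if compounded evenly")
```

Under these assumptions the endpoints work out to a 16x increase overall, or roughly a quadrupling each year.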

As one Hacker News commenter put it: the silver lining might be that people start rebuilding trust through in-person interactions and local communities — reversing decades of internet-driven isolation. But for now, the aunt in the BBC story represents the new normal: it's rational to doubt whether the person on the phone is real.
