NYC hospitals just dropped Palantir — the UK doubled down

NYC hospitals killed a $4M Palantir deal after activists exposed patient data scanning. The UK just signed a $420M contract for the same software.

New York City's largest public hospital system just announced it will not renew its $4 million Palantir contract after investigative journalists and community activists exposed what the surveillance tech company was doing with patient data. The contract ends in October 2026 — but across the Atlantic, the UK government is pressuring NHS hospitals to adopt the same company's software.

Palantir, a data analytics company (a firm that builds software to find patterns in massive datasets) that received early funding from In-Q-Tel, the CIA's venture capital arm, had been using its platform to automatically scan patient health notes at NYC Health + Hospitals, the largest municipal healthcare system in the United States. The goal was to improve Medicaid billing efficiency, but critics said giving a military contractor access to vulnerable patients' medical records crossed a line.

How a $4 Million Deal Became a Public Crisis

Timeline of the Palantir contract collapse:

2023 — NYC Health + Hospitals quietly begins paying Palantir for data analysis services

February 15, 2026 — The Intercept publishes investigation exposing the nearly $4M contract

March 16, 2026 — CEO Mitchell Katz tells NYC City Council the contract will not be renewed

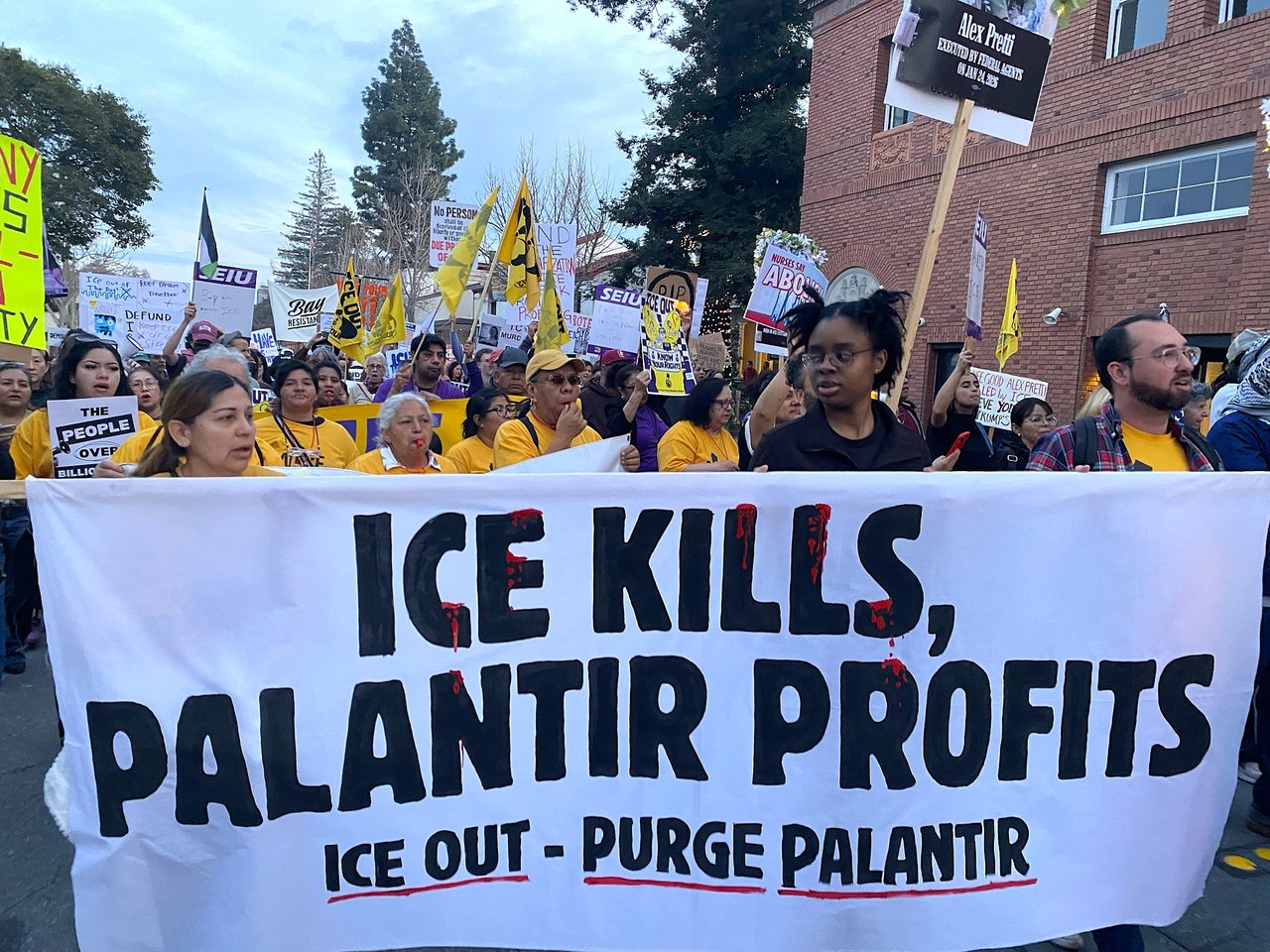

March 21, 2026 — ACT UP protesters march to Palantir's NYC office in a die-in protest

October 2026 — Contract officially expires; data work moves in-house

CEO Katz insisted the arrangement was "always intended to be a short-term solution" and that the hospital system would bring data analysis in-house. Critics saw the statement as damage control after weeks of sustained public pressure.

Why Activists Called It Dangerous

The outrage wasn't just about data. Palantir's other clients include Immigration and Customs Enforcement (ICE), the Department of Defense, and intelligence agencies. Activists argued that a company involved in deportation raids, lethal airstrike targeting, and citizen surveillance had no business handling the health records of New York's most vulnerable populations — many of whom are undocumented immigrants relying on safety-net hospitals.

"Palantir makes money by enabling mass violence in the U.S. and around the world." — Kenny Morris, American Friends Service Committee organizer

Jennifer Hernandez from Make the Road called Palantir "one of ICE's top contractors" and said it has no place serving safety-net hospitals where immigrant communities seek care. The American Friends Service Committee says it will continue pushing to isolate Palantir from other institutions and pension funds nationwide.

Meanwhile in the UK: The Opposite Is Happening

While New York is cutting ties, the British government is doing the opposite. NHS England signed a £330 million contract (about $420 million) with Palantir to build a "Federated Data Platform" (a system that connects patient records across different hospitals into one searchable database). The UK government has been actively pressuring hospital trusts to adopt it.

But the pushback is growing there too. Amnesty International, Privacy International, and the Good Law Project have all warned hospitals that adopting the platform is not mandatory. Their core fear: the system could let government departments like the Home Office (the UK department responsible for immigration enforcement) access patient data.

The resistance is working — fewer than 25% of England's 215 hospital trusts are actively using Palantir's platform despite government pressure.

The Bigger Question for AI in Healthcare

This story highlights a growing tension in healthcare AI. Hospitals desperately need data tools to improve efficiency, and NYC Health + Hospitals was using Palantir to recover money through more efficient Medicaid billing, a legitimate goal. But when those tools come from companies whose other products enable surveillance and military operations, the trust equation breaks down.

Why this matters for anyone who visits a hospital:

• Your medical records contain some of the most sensitive data about you — diagnoses, medications, mental health notes

• Companies that handle this data often have other government contracts you'd never know about

• In the US, there's no federal law requiring hospitals to disclose which AI companies access your records

• The NYC story only came to light because of investigative journalism — not government oversight

NYC's decision to drop Palantir is a rare win for transparency in healthcare AI. But with the UK going in the opposite direction — and Palantir's stock still trading near all-time highs — the company isn't going anywhere. The question is whether more communities will follow New York's lead and demand to know exactly who is reading their medical records.
