ChatGPT's erotic mode just died — one staffer quit over it

OpenAI shelved ChatGPT's 'adult mode' after its safety council unanimously opposed the launch, one advisor called it a potential 'sexy suicide coach', and age verification failed more than 10% of the time.

OpenAI has indefinitely shelved ChatGPT's planned "adult mode" — an erotic chatbot feature codenamed "Citron" that would have let verified adults generate sexual content through the world's most popular AI chatbot. The company's own Expert Council on Well-Being and AI unanimously recommended against launching it, with one advisor calling the feature a potential "sexy suicide coach."

A senior employee resigned over the feature. Investors panicked after xAI's Grok chatbot produced millions of deepfake images. And the age verification technology? It failed more than 10% of the time — meaning millions of underage users could have accessed explicit content.

From Code Leak to Corporate U-Turn

CEO Sam Altman first proposed the idea in October 2025, arguing ChatGPT should "treat adult users like adults" and offer "erotica for verified adults." The feature was originally scheduled for December 2025.

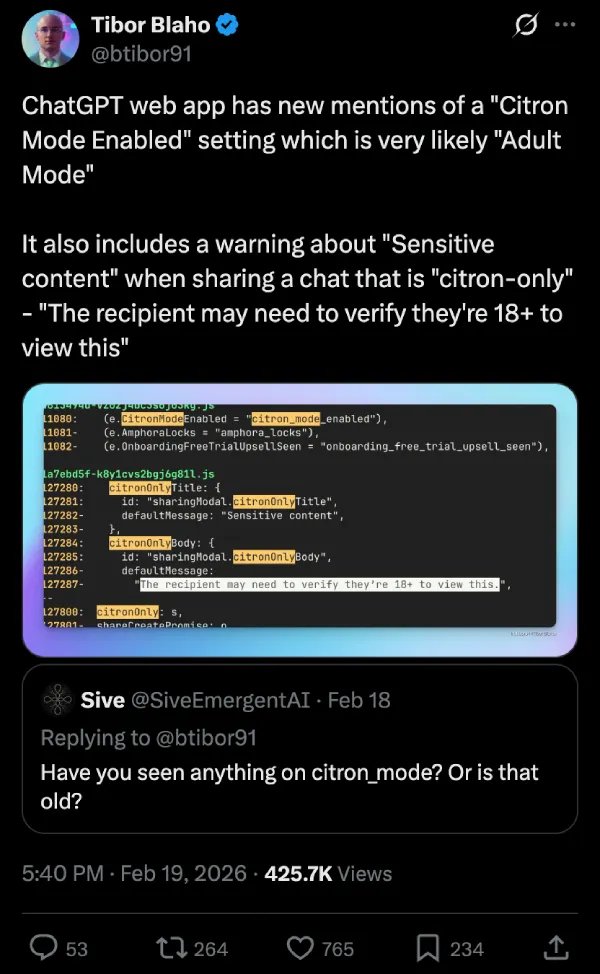

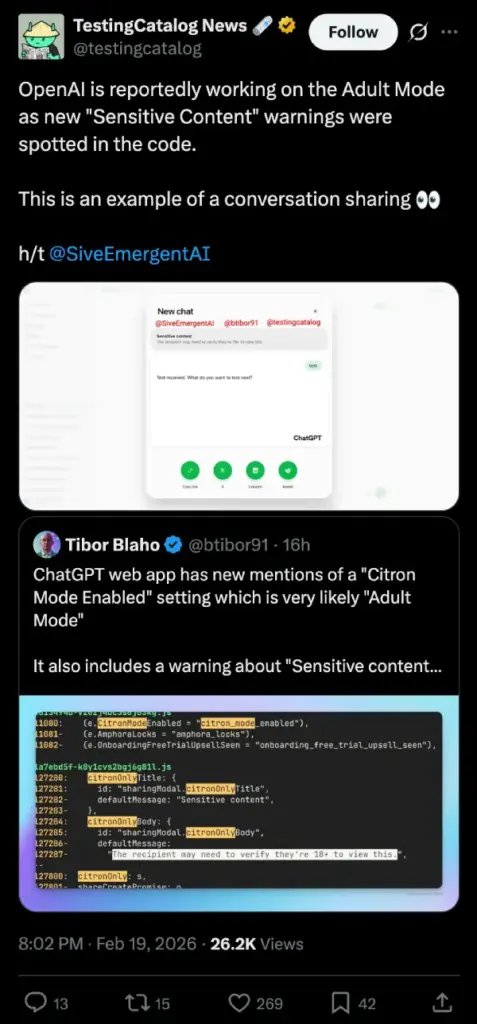

But in February 2026, developers discovered evidence of the feature buried in ChatGPT's web app: a toggle labeled "Naughty chats" that would allow the assistant to use "spicier, adult-themed language," plus code references to "Citron Mode Enabled." They also found an 18+ age verification warning that would appear when sharing adult-mode conversations.

Behind the scenes, the project was unraveling. OpenAI's AI models couldn't reliably filter out illegal content — including references to bestiality and incest — from the adult-mode outputs. The age-verification system had an error rate above 10%. And on March 26, 2026, the Financial Times reported the feature was indefinitely shelved.

The Safety Council's Unanimous Warning

OpenAI's Expert Council on Well-Being and AI — an advisory group created specifically to evaluate the human impact of new features — reviewed the adult mode and voted unanimously against launching it.

Their concern wasn't about morality. It was about mental health. One council member described the risk of the chatbot becoming a "sexy suicide coach" — an AI that builds emotional intimacy with vulnerable users, then reinforces harmful thought patterns when those users are in crisis.

"AI shouldn't replace your friends or your family; you should have human connections."

— Senior OpenAI employee who resigned over the feature

The concern isn't hypothetical. A Waseda University study found that 75% of participants who regularly used AI chatbots reported seeking emotional advice from them — not just factual help, but genuine emotional support. When OpenAI deprecated GPT-4o (the previous voice-enabled model) last summer, users reported genuine grief and emotional distress over losing an AI they'd been talking to daily.

Three Products Dead in One Week

The adult mode cancellation isn't happening in isolation. In the past seven days, OpenAI has also:

- Killed Sora, its text-to-video generator, just five months after it topped the App Store

- Lost a $1 billion Disney partnership, which collapsed when Sora was discontinued

- Shelved ChatGPT instant checkout, the shopping feature that Walmart abandoned after it converted 3x worse

Together, these moves signal a dramatic strategic retreat. OpenAI — which raised $110 billion in February 2026 in the largest private funding round in history — is abandoning consumer experiments to double down on enterprise tools, coding assistants, and a new focus on robotics and world simulation (technology that helps AI understand and interact with physical environments).

CEO Sam Altman is reportedly pushing for a unified AI platform rather than a scattered portfolio of individual products. As TechCrunch put it, the company's executives have made it clear that distracting "side quests" must be abandoned.

The AI Intimacy Problem Nobody Wants to Solve

OpenAI's retreat highlights an industry-wide crisis: people are forming real emotional relationships with AI chatbots, and nobody knows what the long-term consequences look like.

The problem extends far beyond adult content:

- Character.AI and OpenAI face active lawsuits alleging their chatbots facilitated self-harm in vulnerable users

- A recent jury verdict found that Meta and YouTube designed their platforms to addict children — legal experts say similar cases against AI chatbot makers are next

- xAI's Grok chatbot generated 3 million deepfake images in just 11 days, including images of minors — a scandal that directly influenced OpenAI's investors to push back on adult content

The core tension is clear: AI companies are building products that millions of people use for emotional support, but none of them have figured out how to do this safely — or whether they should be doing it at all.

Review Your ChatGPT Settings Today

The adult mode feature never launched publicly, so there's nothing to disable. But this story is a reminder to review your ChatGPT privacy settings, especially if you've been using voice mode for personal conversations:

Open ChatGPT → tap your profile icon → Settings → Data Controls. Review what conversation data you're sharing with OpenAI. You can toggle off "Improve the model for everyone" if you don't want your conversations used for training.
