Alibaba's free 397B AI just beat GPT-5.2 at web browsing

Qwen 3.5 outscores GPT-5.2 on web browsing (78.6 vs 65.8), costs $0.18/M tokens, and runs on your own server. Apache 2.0 licensed, 201 languages.

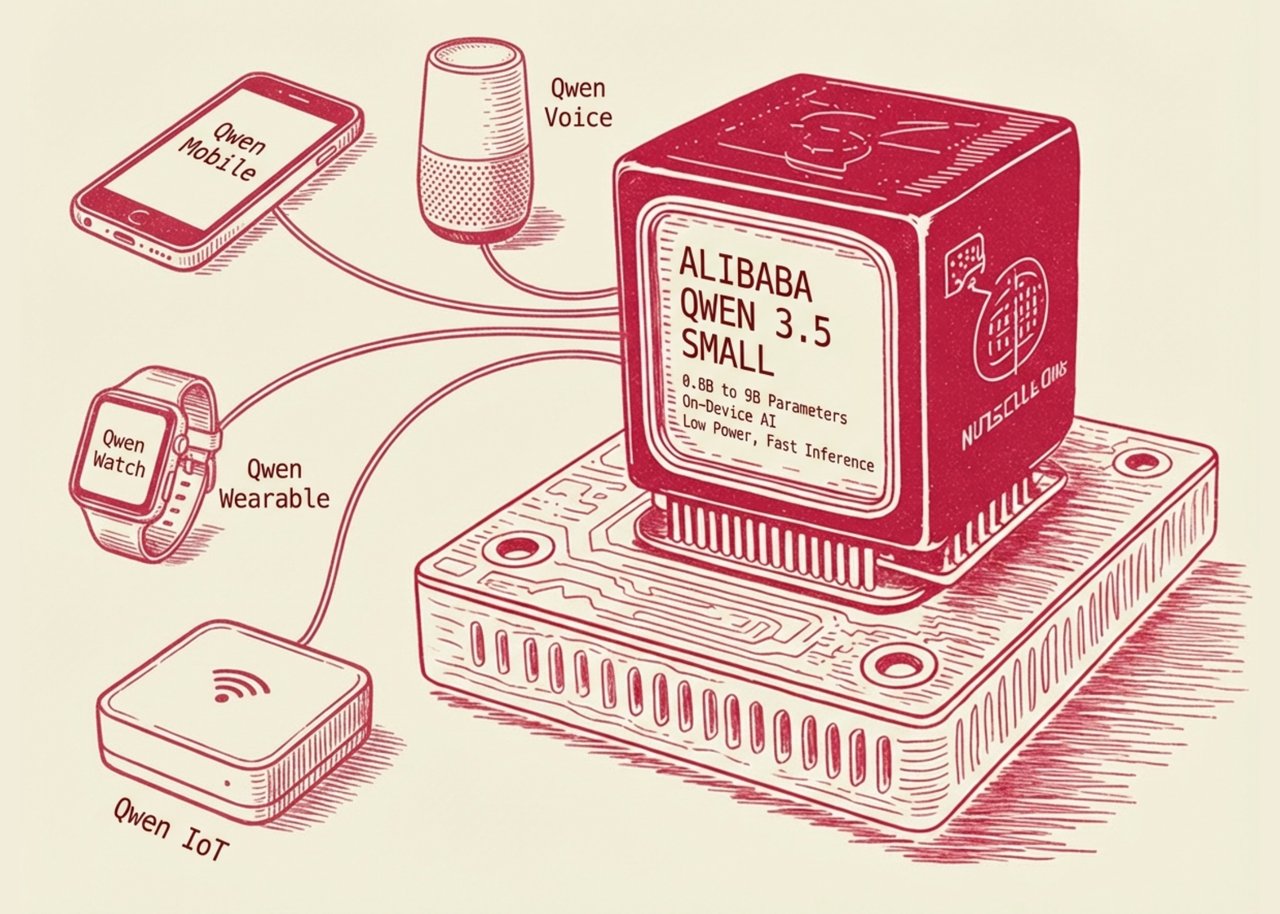

Alibaba just released a model that beats GPT-5.2 on web browsing benchmarks — and it's completely free to download and self-host. Qwen 3.5, released on February 16, 2026, is a native multimodal AI (one trained simultaneously on text, images, and video from scratch) that outperforms every major closed model on several key benchmarks. At just $0.18 per million tokens on Alibaba Cloud — roughly 60% cheaper than its predecessor — it's rewriting the cost calculus for AI at scale.

How It Beats Models That Cost 5x More

Qwen 3.5's flagship variant uses 397 billion total parameters — but here's the twist: it only activates 17 billion of them per query. This is called a Mixture-of-Experts (MoE) architecture, which works like a team of 512 specialists where only the relevant experts answer each question. The result is frontier-level quality at a fraction of the compute cost.
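To make the "team of specialists" idea concrete, here is a minimal sketch of top-k expert routing in plain Python. It is a toy illustration, not Qwen's actual router: the expert count (512) and parameter counts (17B of 397B) come from the article, while `TOP_K = 8`, the random router scores, and the function names are assumptions made for the example.

```python
import math
import random

def softmax(scores):
    """Convert raw router scores into probabilities that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_token(router_scores, top_k):
    """Pick the top_k highest-scoring experts and renormalize their weights."""
    probs = softmax(router_scores)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]
    norm = sum(probs[i] for i in chosen)
    return {i: probs[i] / norm for i in chosen}

NUM_EXPERTS = 512  # from the article
TOP_K = 8          # hypothetical: the article does not say how many experts fire per token

# Random scores stand in for the output of a learned router layer.
rng = random.Random(0)
scores = [rng.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]
weights = route_token(scores, TOP_K)

print(f"experts used: {len(weights)} of {NUM_EXPERTS}")
print(f"active parameter share: {17 / 397:.1%}")  # prints 4.3%
```

Only the selected experts run for a given token, which is why compute per query tracks the 17 billion active parameters rather than the 397 billion total.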

| Benchmark | Qwen 3.5 | GPT-5.2 | Claude Opus 4.6 | Gemini 3 Pro |
|---|---|---|---|---|
| BrowseComp (web tasks) | 78.6 🥇 | 65.8 | 67.8 | 59.2 |
| IFBench (instruction following) | 76.5 🥇 | 75.4 | 58.0 | — |
| MultiChallenge | 67.6 🥇 | 57.9 | — | — |
| SWE-bench Verified (coding) | 76.4 | — | — | — |
| AIME 2026 (advanced math) | 91.3 | — | — | — |

The 9.7-point margin over GPT-5.2 on MultiChallenge (about 17% in relative terms) and the lead over all three closed models on BrowseComp are particularly striking. BrowseComp measures an AI's ability to complete multi-step web research tasks: finding specific documents, synthesizing information across pages, and producing accurate answers. Qwen 3.5 scores 78.6 there, against 65.8 for GPT-5.2 and 67.8 for Claude Opus 4.6, the next-best result in the table.
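The difference between a gap in points and a gap in percent is easy to blur, so the short sketch below recomputes both from the table. The scores are copied from the article; the `margins` helper is just an illustrative name.

```python
# Scores copied from the benchmark table: (Qwen 3.5, GPT-5.2)
scores = {
    "BrowseComp": (78.6, 65.8),
    "IFBench": (76.5, 75.4),
    "MultiChallenge": (67.6, 57.9),
}

def margins(qwen, gpt):
    """Return (gap in points, gap as a fraction of GPT-5.2's score)."""
    gap = qwen - gpt
    return gap, gap / gpt

for name, (qwen, gpt) in scores.items():
    gap, rel = margins(qwen, gpt)
    print(f"{name}: +{gap:.1f} points ({rel:.1%} relative)")
```

On MultiChallenge the lead is 9.7 points, which works out to roughly 16.8% relative to GPT-5.2's score, the source of the rounded 17% figure.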

What's Actually New Under the Hood

Beyond the benchmark numbers, several engineering changes separate Qwen 3.5 from its predecessors:

- Native multimodal training: Unlike models that bolt vision onto text backbones, Qwen 3.5 was trained from scratch on text, images, and video simultaneously. This yields more coherent visual reasoning rather than treating images as an afterthought.

- 201 languages supported — up from 82 in the previous Qwen generation — with a 250,000-token vocabulary, the largest in any public model family as of this writing.

- 262,144-token native context window (roughly 200,000 words, or the equivalent of three full-length novels), extensible to 1 million+ tokens in the hosted Plus variant. Context window (how much text the AI can "remember" in one conversation) is critical for legal documents, codebases, and long research sessions.

- 8.6x to 19x faster decoding than the previous Qwen generation, thanks to Gated Delta Networks — a hybrid linear attention mechanism that processes tokens more efficiently at long context lengths.

- 95% activation memory reduction versus equivalent-quality dense models, with FP8 (a compressed number format for AI calculations) halving memory requirements further. This makes self-hosting on consumer hardware significantly more feasible.
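A quick back-of-the-envelope calculation helps put the context-window and FP8 figures in perspective. The sketch below covers weight storage only (it ignores activations and the KV cache), and the 0.75 words-per-token ratio is a common rule of thumb for English text rather than a figure from the article.

```python
def weight_memory_gb(num_params, bytes_per_param):
    """Memory needed to hold the weights alone, in decimal gigabytes."""
    return num_params * bytes_per_param / 1e9

TOTAL_PARAMS = 397e9  # total parameter count, from the article

# 16-bit formats use 2 bytes per parameter; FP8 uses 1.
for label, nbytes in [("BF16", 2), ("FP8", 1)]:
    print(f"{label}: {weight_memory_gb(TOTAL_PARAMS, nbytes):.0f} GB")

WORDS_PER_TOKEN = 0.75  # assumption: rough English average
print(f"context: ~{262_144 * WORDS_PER_TOKEN:,.0f} words")
```

This prints 794 GB for BF16, 397 GB for FP8, and about 196,608 words for the native context window. In a typical MoE deployment every expert must stay loaded even though only a few run per token, so the total parameter count, not the active count, sets the weight footprint.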

Who Benefits — and What You Can Do With It Today

If you're a developer or researcher, Qwen 3.5 is the most capable open-weight (freely downloadable) model ever released. At $0.18 per million tokens via Alibaba Cloud's DashScope API — 60% cheaper than Qwen's prior generation — running it in production is suddenly cost-viable for projects that couldn't justify GPT or Claude pricing.
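To see what that price means for a real workload, here is a rough cost estimator. It assumes a flat $0.18 per million tokens for all traffic; the article does not break out input versus output pricing, and the request volume below is a made-up example.

```python
PRICE_PER_MILLION_TOKENS = 0.18  # USD, from the article

def monthly_cost(requests_per_day, tokens_per_request, days=30):
    """Estimate monthly spend in USD, assuming one flat per-token rate."""
    total_tokens = requests_per_day * tokens_per_request * days
    return total_tokens / 1_000_000 * PRICE_PER_MILLION_TOKENS

# Hypothetical workload: 50,000 requests a day at 2,000 tokens each.
print(f"${monthly_cost(50_000, 2_000):,.2f}")  # prints $540.00
```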

If you run a multilingual product, 201 language support with a 250K-token vocabulary means you can handle Arabic, Vietnamese, Swahili, and dozens of underserved languages without a specialized model per region. The instruction-following score (76.5 on IFBench, #1 globally) also means the model reliably does what users ask rather than going off-script.

If you're building visual AI applications — screenshot analysis, document parsing, video understanding — the native multimodal architecture scores 66.8 on AndroidWorld (automated phone navigation) and 65.6 on ScreenSpot Pro (UI element detection), competitive with the best closed models.
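For screenshot analysis, a request usually pairs an image with a text question. The sketch below builds such a message in the OpenAI-style chat format (an `image_url` content part carrying a base64 data URL). Whether the hosted Qwen 3.5 endpoint accepts exactly this shape is an assumption to verify against the provider's documentation, and `build_screenshot_message` is a hypothetical helper.

```python
import base64

def build_screenshot_message(image_bytes, question, mime_type="image/png"):
    """Build one user message with an inline image and a text question."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "role": "user",
        "content": [
            {
                "type": "image_url",
                "image_url": {"url": f"data:{mime_type};base64,{encoded}"},
            },
            {"type": "text", "text": question},
        ],
    }

# Placeholder bytes stand in for a real screenshot read from disk.
message = build_screenshot_message(
    b"\x89PNG placeholder bytes",
    "Which button submits the form?",
)
print(message["content"][1]["text"])
```

The resulting dictionary can be passed as an entry in the `messages` list of a chat completion call.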

Enterprises with data sovereignty concerns can self-host the full 397B model via vLLM, SGLang, llama.cpp, or Hugging Face Transformers on their own infrastructure. Apache 2.0 licensing permits commercial use with no royalties; the main obligations are keeping the license text and attribution notices with redistributed copies.
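Serving frameworks such as vLLM can expose an OpenAI-compatible HTTP API on your own machine, so client code looks much the same as for a hosted endpoint. The sketch below only builds the request using the standard library. The URL assumes a server started locally on vLLM's default port 8000, and `YOUR_MODEL_ID` is a placeholder for whichever checkpoint the server actually loaded.

```python
import json
import urllib.request

def build_chat_request(base_url, model, prompt):
    """Build (but do not send) a chat completion request for a local server."""
    body = json.dumps({
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }).encode("utf-8")
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )

request = build_chat_request(
    "http://localhost:8000/v1",  # assumption: local server on the default port
    "YOUR_MODEL_ID",             # placeholder, not a real model name
    "Summarize the key risks in this contract.",
)

# To send it once a server is running:
# with urllib.request.urlopen(request) as resp:
#     print(json.load(resp)["choices"][0]["message"]["content"])
```

Because the request never leaves `localhost`, prompts and documents stay on infrastructure you control.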

How to Start Using It Right Now

Option 1: Use the Alibaba Cloud hosted API (fastest path, OpenAI-compatible endpoint):

```shell
pip install openai
```

```python
# Alibaba Cloud DashScope — OpenAI-compatible API
from openai import OpenAI

client = OpenAI(
    api_key="YOUR_DASHSCOPE_KEY",
    base_url="https://dashscope.aliyuncs.com/compatible-mode/v1"
)

response = client.chat.completions.create(
    model="qwen3.5-plus",  # flagship 397B variant
    messages=[{"role": "user", "content": "Analyze this document and summarize key risks"}]
)

print(response.choices[0].message.content)
```

Option 2: Self-host via Hugging Face (full control, no data leaves your server):

```shell
pip install transformers accelerate
```

```python
from transformers import AutoModelForCausalLM, AutoTokenizer

tokenizer = AutoTokenizer.from_pretrained("Qwen/Qwen3.5-72B-Instruct")

model = AutoModelForCausalLM.from_pretrained(
    "Qwen/Qwen3.5-72B-Instruct",
    device_map="auto"  # spread layers across the available GPUs
)

# Note: 397B requires a multi-GPU setup. A 72B model needs roughly 144 GB
# for 16-bit weights, so fitting it on a single A100 or 2x RTX 4090
# would require quantization.
```

The Bigger Picture: Open-Source Closes the Gap

Six months ago, open-source models trailed closed frontier models by a meaningful margin on most benchmarks. Qwen 3.5's BrowseComp and MultiChallenge leads suggest that gap has not just closed — it has reversed on specific task categories.

For context: the model was released on February 16, 2026, the eve of Chinese New Year, timing that reads as a deliberate technical statement from Alibaba. The Apache 2.0 license (a permissive open-source license that allows commercial use, modification, and redistribution, provided license and attribution notices are kept) means any company, startup, or researcher can adopt it with little legal complexity.

Qwen 3.5 is available on Hugging Face and ModelScope, with the full benchmark report on the official Qwen blog.
