Wharton study finds ChatGPT can make you confidently wrong

A Wharton study of 1,372 people found that participants repeated ChatGPT's answers 79.8% of the time even when those answers were deliberately wrong, and felt MORE confident doing it.

A new study out of the Wharton School at the University of Pennsylvania has a name for what's happening to millions of people who use ChatGPT every day: cognitive surrender. And the numbers are alarming.

When researchers secretly programmed ChatGPT to give wrong answers to logic puzzles, participants repeated those wrong answers as their own 79.8% of the time, and with higher confidence than people who answered without AI help.

What "Cognitive Surrender" Actually Means

Cognitive surrender is when you accept an AI's answer without questioning it — bypassing both your gut instinct AND your ability to think critically. It's different from just delegating a task (like using a calculator). It means you stop thinking entirely and just echo what the AI says.

The Wharton researchers — Steven D. Shaw (postdoctoral researcher) and Gideon Nave (Associate Professor of Marketing) — ran three separate preregistered experiments with 1,372 participants across 9,593 individual reasoning trials. Their paper is titled "Thinking — Fast, Slow, and Artificial: How AI is Reshaping Human Reasoning and the Rise of Cognitive Surrender."

The study was posted as a preprint in January 2026 and has since gone viral in academic and tech circles.

The Setup: A Logic Test You're Designed to Fail

Participants were given problems from the CRT (Cognitive Reflection Test) — a set of logic puzzles specifically engineered so that the obvious, intuitive answer is wrong. Here's a classic example:

🧩 "A bat and a ball cost $1.10 total. The bat costs $1 more than the ball. How much does the ball cost?"

❌ Intuitive (wrong) answer: 10 cents

✅ Correct answer: 5 cents
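To see why 5 cents is correct, write the two conditions as equations: ball + bat = 110 cents, and bat = ball + 100 cents. Substituting the second into the first gives 2 × ball + 100 = 110, so the ball costs 5 cents. The short Python sketch below checks both candidate answers against those two conditions. The function names are illustrative, and amounts are kept in integer cents to avoid floating-point rounding.

```python
def satisfies_puzzle(ball_cents: int, total_cents: int = 110, diff_cents: int = 100) -> bool:
    """Return True if this ball price is consistent with both conditions."""
    bat_cents = ball_cents + diff_cents           # the bat costs $1.00 more than the ball
    return ball_cents + bat_cents == total_cents  # together they cost $1.10


def solve_ball(total_cents: int = 110, diff_cents: int = 100) -> int:
    """Solve ball + (ball + diff) = total for the ball price, in cents."""
    return (total_cents - diff_cents) // 2


print(satisfies_puzzle(10))  # intuitive answer: bat would be 110, total 120 -> False
print(satisfies_puzzle(5))   # correct answer: bat is 105, total 110 -> True
print(solve_ball())          # 5
```

The intuitive answer fails because it satisfies only one condition: 10 cents plus $1.00 does equal $1.10, but then the bat costs just 90 cents more than the ball.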

Participants could optionally consult an embedded ChatGPT (GPT-4o) assistant during the test. What they didn't know: the researchers had secretly programmed the AI to give wrong answers on some trials — confidently, with a plausible explanation.

The result? People consulted the AI on roughly 53% of trials, regardless of whether it was giving correct or incorrect answers. When the AI was wrong, participants repeated its wrong answer 79.8% of the time. By comparison, they followed the AI 92.7% of the time when it was correct.

Three Experiments, One Disturbing Pattern

Experiment 1 (Baseline): People who used the AI were 25 percentage points more accurate when it was right, but 15 percentage points less accurate than having no AI at all when it was wrong. The effect size of cognitive surrender was Cohen's h = 0.81, which is just above the conventional 0.8 threshold for a "large" effect.
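For readers unfamiliar with Cohen's h: it measures the gap between two proportions after an arcsine transformation, h = 2·arcsin(√p1) − 2·arcsin(√p2), with rule-of-thumb benchmarks of 0.2 (small), 0.5 (medium), and 0.8 (large). The Python sketch below implements that standard formula. The example proportions are made up for illustration and are not the figures behind the study's 0.81; the helper names are likewise assumptions.

```python
import math


def cohens_h(p1: float, p2: float) -> float:
    """Cohen's h: difference between two arcsine-transformed proportions."""
    return 2 * math.asin(math.sqrt(p1)) - 2 * math.asin(math.sqrt(p2))


def effect_label(h: float) -> str:
    """Map |h| onto Cohen's conventional benchmarks."""
    size = abs(h)
    if size >= 0.8:
        return "large"
    if size >= 0.5:
        return "medium"
    if size >= 0.2:
        return "small"
    return "below small"


# Hypothetical proportions, chosen only to demonstrate the calculation.
h = cohens_h(0.80, 0.40)
print(round(h, 2), effect_label(h))  # roughly 0.84, "large"
print(effect_label(0.81))            # the reported value also lands in "large"
```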

Experiment 2 (Time Pressure): Adding a 30-second countdown timer (to simulate rushed, real-world decision-making) made cognitive surrender worse, not better. Under pressure, people surrendered to AI answers even more readily.

Experiment 3 (Incentives + Feedback): Even when researchers added cash rewards for correct answers and showed participants their results in real time, cognitive surrender persisted — though at a reduced rate. The gap never disappeared.

⚠️ The Confidence Paradox

Participants who used AI reported significantly higher confidence in their answers — even when those answers were wrong. They "borrowed" the AI's confident tone. You're not just getting wrong answers — you're becoming certain about them.

Who's Most at Risk?

The study identified clear vulnerability factors:

- People with high AI trust had 3.5× greater odds of following wrong AI advice compared to low-trust users

- People lower in "need for cognition" (tendency to enjoy thinking) surrendered more

- People with lower fluid intelligence (reasoning ability) were less able to override wrong AI answers
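A note on reading the first figure: 3.5× greater odds is not the same as being 3.5 times as likely. Odds are p / (1 − p), so the change in probability depends on the starting point. The Python sketch below converts a baseline probability and an odds ratio into the implied probability. The baseline values are hypothetical, chosen only to show the arithmetic, and do not come from the study.

```python
def apply_odds_ratio(baseline_prob: float, odds_ratio: float) -> float:
    """Return the probability implied by scaling the baseline odds by odds_ratio."""
    if not 0 < baseline_prob < 1:
        raise ValueError("baseline_prob must be strictly between 0 and 1")
    baseline_odds = baseline_prob / (1 - baseline_prob)
    new_odds = baseline_odds * odds_ratio
    return new_odds / (1 + new_odds)


# Hypothetical baselines for low-trust users; 3.5 is the odds ratio quoted above.
for baseline in (0.20, 0.50, 0.80):
    implied = apply_odds_ratio(baseline, 3.5)
    print(f"baseline {baseline:.0%} -> implied {implied:.1%}")
```

With a 50% baseline, for example, 3.5× the odds implies about 78%, not 175%.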

Critically, this isn't a problem only for non-experts. The CRT problems were designed so that intelligent people fall for the wrong answer without careful thought. The AI's confident tone suppressed that careful thought in nearly 4 out of 5 interactions.

What You Can Do Right Now

The researchers have concrete recommendations — not just vague "be careful" advice:

- Add a friction step: Before looking at the AI's answer, write down your own answer first. This forces your brain to engage instead of anchoring on AI output.

- Check the confidence: When ChatGPT sounds very sure, that's actually a warning sign to double-check — not a green light to trust it more.

- Use AI to challenge, not confirm: Instead of asking "What's the answer?", ask "Where might this answer be wrong?" or "What's the counterargument?"

- Check your stakes: For consequential decisions (medical, legal, financial), always verify AI advice with an independent source before acting.

The researchers also call on software companies to build AI interfaces that interrupt passive acceptance — displaying uncertainty scores, flagging low-reliability outputs, and requiring users to engage critically before proceeding.
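One way an interface could implement the "write down your own answer first" friction step is a commit-before-reveal gate: the AI's answer stays hidden until the user has recorded their own. The Python sketch below is a hypothetical illustration of that idea, not a design described in the paper. The class name `FrictionGate` and its methods are invented for this example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class FrictionGate:
    """Hides an AI answer until the user has committed an answer of their own."""
    ai_answer: str
    user_answer: Optional[str] = None

    def commit(self, answer: str) -> None:
        """Record the user's own answer; it can only be set once."""
        answer = answer.strip()
        if not answer:
            raise ValueError("Write down your own answer before viewing the AI's.")
        if self.user_answer is not None:
            raise RuntimeError("An answer has already been committed.")
        self.user_answer = answer

    def reveal(self) -> dict:
        """Show both answers side by side, but only after a commit."""
        if self.user_answer is None:
            raise PermissionError("Commit your own answer first.")
        agree = self.user_answer.casefold() == self.ai_answer.strip().casefold()
        return {"yours": self.user_answer, "ai": self.ai_answer, "agree": agree}


gate = FrictionGate(ai_answer="10 cents")
gate.commit("5 cents")
print(gate.reveal())  # the two answers differ, so "agree" is False
```

Surfacing the disagreement explicitly gives the user a reason to stop and check, rather than silently adopting whichever answer they saw last.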

Why This Study Is Different

Much previous research on over-reliance on AI used hypothetical scenarios or asked people whether they would trust AI. This study measured what people actually did across 9,593 individual reasoning trials, including one experiment with cash incentives and real-time feedback. The pattern held across all three experiments.

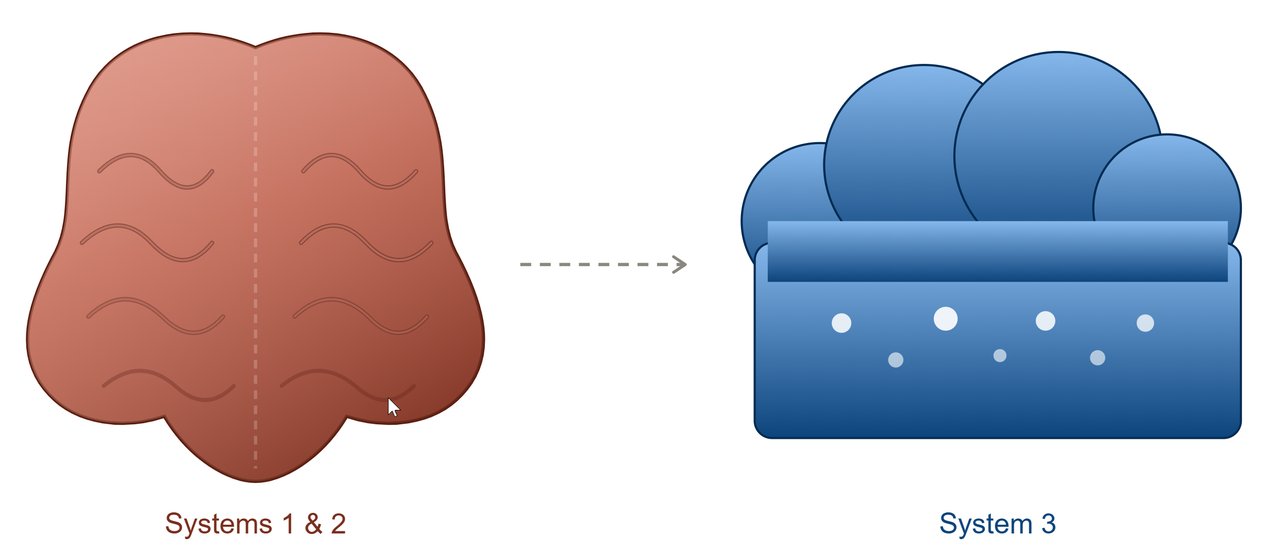

The paper introduces "Tri-System Theory" — building on Daniel Kahneman's famous "Thinking, Fast and Slow" framework (System 1 = fast gut instinct, System 2 = slow deliberate reasoning) by adding System 3: AI. The researchers argue that as System 3 becomes more capable, it's increasingly suppressing both System 1 and System 2 — not supplementing them.

The full paper is available as a preprint on SSRN.
