Claude AI Market Share Halved — Anthropic Plans $60B IPO

Claude AI's market share crashed from 29% to 13% as Chinese rivals offer 90% quality at 7% cost — yet Anthropic pushes ahead with a record $60B+ IPO in 2026.

Anthropic — the company behind Claude AI — just reported $19 billion in annual recurring revenue (ARR, the total yearly income from subscriptions). That's up from $1 billion just 15 months ago. A 19x jump that would make any startup founder weep with joy.

There's just one problem, or rather four: the company is still losing money, its market share has been cut in half, the U.S. government branded it a security risk, and Chinese competitors are offering 90% of Claude's quality at 7% of the price.

And yet, Anthropic is planning one of the biggest tech IPOs in history — potentially raising more than $60 billion as early as October 2026.

Anthropic Revenue: $1B to $19B ARR — and Still Bleeding Cash

Anthropic's revenue trajectory reads like science fiction. The company hit $1 billion in ARR around January 2025, then scaled to $19 billion by March 2026. That's roughly a 10x annual growth rate sustained for three consecutive years — a feat no AI company has matched.
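For readers who want to check the growth math, here is a minimal sketch that annualizes the jump from $1 billion to $19 billion. It assumes the 15-month window quoted earlier in this article and a constant compounding rate, which is a simplification; the function name is illustrative only. It covers this one window and says nothing about the earlier years.

```python
# Annualized growth multiple implied by the article's ARR figures.
# Assumption: growth compounds at a constant rate over the whole window.
START_ARR_BILLION = 1.0   # ARR around January 2025, per the article
END_ARR_BILLION = 19.0    # ARR by March 2026, per the article
MONTHS_ELAPSED = 15       # window length as stated in the article


def annualized_multiple(start: float, end: float, months: float) -> float:
    """Return the yearly growth multiple that turns start into end."""
    if start <= 0 or months <= 0:
        raise ValueError("start and months must be positive")
    return (end / start) ** (12 / months)


multiple = annualized_multiple(START_ARR_BILLION, END_ARR_BILLION, MONTHS_ELAPSED)
print(f"Implied annualized growth: {multiple:.1f}x")
```

Under those assumptions the result comes out at about 10.5x per year, in line with the "roughly 10x" figure above.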

The targets: $18 billion for 2026 (a mark the $19 billion March run rate already exceeds), $55 billion for 2027, and a staggering $148 billion by 2029.

But here's the catch: Anthropic generated only $5 billion in revenue against $10 billion in inference and training costs (the expense of running AI models on servers and teaching them new capabilities). That means the company is spending roughly $2 for every $1 it earns.

Its most recent round, a Series G, raised $30 billion at a $380 billion post-money valuation (the company's total estimated worth after investment). The round was led by sovereign wealth fund GIC and investment firm Coatue.

That $380 billion figure puts Anthropic at a 27x revenue multiple (valuation divided by annual revenue). Measured against the $19 billion ARR reported for March 2026, the ratio is closer to 20x. For context, even the frothiest software companies in the 2021 bubble rarely traded above 40x. Whether public markets will stomach that ratio is the $60 billion question.
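The ratios in this section are simple divisions, so they are easy to check. The sketch below uses only figures quoted in this article; the constant and function names are made up for illustration. It also works backward from the 27x multiple to see what revenue base it would imply.

```python
# All figures are in billions of US dollars and come from the article.
VALUATION = 380.0        # post-money valuation after the Series G
ARR = 19.0               # annual recurring revenue as of March 2026
REVENUE = 5.0            # revenue figure quoted against costs
COSTS = 10.0             # inference and training costs
QUOTED_MULTIPLE = 27.0   # revenue multiple as stated in the article


def ratio(numerator: float, denominator: float) -> float:
    """Divide two positive amounts, rejecting a non-positive denominator."""
    if denominator <= 0:
        raise ValueError("denominator must be positive")
    return numerator / denominator


multiple_on_arr = ratio(VALUATION, ARR)              # valuation / ARR
implied_revenue = ratio(VALUATION, QUOTED_MULTIPLE)  # revenue that 27x implies
spend_per_dollar = ratio(COSTS, REVENUE)             # dollars spent per dollar earned

print(f"Multiple on $19B ARR: {multiple_on_arr:.0f}x")
print(f"Revenue implied by 27x: ${implied_revenue:.1f}B")
print(f"Spend per $1 of revenue: ${spend_per_dollar:.2f}")
```

The 27x multiple lines up with a revenue base of roughly $14 billion rather than $19 billion, so the multiple depends heavily on which revenue snapshot is used.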

Chinese AI Models Are Undercutting Claude AI 6x on Price

While Anthropic was scaling revenue, Chinese AI labs were scaling something else entirely: market share.

On OpenRouter, a popular platform that lets developers compare and switch between different AI models through a single connection, Anthropic's share plunged from 29.1% in March 2025 to just 13.3% in March 2026. That's a drop of 15.8 percentage points, or a relative decline of more than 54%, in 12 months.
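Share numbers like these can be read two ways: as a drop in percentage points or as a relative decline. A small sketch of both calculations, using the OpenRouter figures quoted above (the variable names are illustrative):

```python
# OpenRouter share figures quoted in the article, in percent.
SHARE_MARCH_2025 = 29.1
SHARE_MARCH_2026 = 13.3

# Absolute change, measured in percentage points.
point_drop = SHARE_MARCH_2025 - SHARE_MARCH_2026

# Relative change, measured against the starting share.
relative_decline_pct = point_drop / SHARE_MARCH_2025 * 100

print(f"Drop: {point_drop:.1f} percentage points")
print(f"Relative decline: {relative_decline_pct:.1f}%")
```

The relative figure is the one behind the "halved" framing: losing 15.8 points from a 29.1% base wipes out a little over half of the starting share.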

Meanwhile, Chinese AI models now account for 61% of total token consumption (the units AI models use to measure and process text) on the platform. The top six models by popularity are all Chinese-made: Xiaomi's MiMo-V2-Pro, Step 3.5 Flash, DeepSeek V3.2, MiniMax M2.7, MiniMax M2.5, and GLM 5 Turbo. Claude Opus 4.6 and Claude Sonnet 4.6 sit at 7th and 8th place.

The reason is brutally simple: price. Chinese providers charge $2–3 per million output tokens versus Anthropic's roughly $15 — a nearly 6x gap. Independent testing found that MiniMax M2.7 delivered 90% of Claude's quality for just 7% of the cost ($0.27 per task versus $3.67).
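To see how the price gap and the quality gap combine, here is a rough sketch using the numbers quoted above. The "quality-adjusted" figure is an assumption of this example, not something the independent test reported: it simply divides cost per task by relative quality, treating Claude's quality as 1.0.

```python
# Prices per million output tokens, in US dollars, per the article.
ANTHROPIC_PRICE = 15.0
CHINESE_PRICE_LOW, CHINESE_PRICE_HIGH = 2.0, 3.0

# Per-task figures from the independent test cited in the article.
MINIMAX_COST_PER_TASK = 0.27
CLAUDE_COST_PER_TASK = 3.67
MINIMAX_RELATIVE_QUALITY = 0.90  # Claude is treated as 1.0 (assumption)

# Token price gap at both ends of the quoted range.
gap_min = ANTHROPIC_PRICE / CHINESE_PRICE_HIGH
gap_max = ANTHROPIC_PRICE / CHINESE_PRICE_LOW

# MiniMax cost per task as a share of Claude's cost per task.
cost_share_pct = MINIMAX_COST_PER_TASK / CLAUDE_COST_PER_TASK * 100

# Hypothetical metric: cost per unit of quality, then the ratio between the two.
minimax_cost_per_quality = MINIMAX_COST_PER_TASK / MINIMAX_RELATIVE_QUALITY
quality_adjusted_gap = CLAUDE_COST_PER_TASK / minimax_cost_per_quality

print(f"Token price gap: {gap_min:.1f}x to {gap_max:.1f}x")
print(f"MiniMax cost per task: {cost_share_pct:.1f}% of Claude's")
print(f"Quality-adjusted cost gap: {quality_adjusted_gap:.1f}x")
```

The "nearly 6x" figure sits in the middle of the 5x to 7.5x range, and on this simple quality-adjusted measure the per-task gap is wider still, at roughly 12x.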

As the U.S.–China Economic and Security Review Commission noted: "Chinese labs have narrowed performance gaps with top Western large language models." In plain English: the quality advantage that justified Claude's premium price is shrinking, while the price gap has already become a canyon.

Anthropic hasn't taken this lying down. The company accused DeepSeek, MiniMax, and Moonshot AI of creating over 24,000 fraudulent accounts and conducting more than 16 million distillation exchanges (a technique where one AI model learns by systematically studying another AI's outputs) — essentially alleging industrial-scale intellectual property theft.

Trump Calls Anthropic "Woke" — Brands Claude AI a National Security Risk

In a storyline that reads more like a political thriller than a tech earnings report, Anthropic found itself in direct conflict with the Trump administration.

It started when Defense Secretary Pete Hegseth demanded Anthropic remove AI safety guardrails (built-in limits that prevent AI from generating harmful or dangerous content) for autonomous weapons systems and domestic surveillance programs. CEO Dario Amodei — who founded Anthropic in 2021 with his sister Daniela specifically because he believed AI safety wasn't being taken seriously — refused.

The response was swift. President Trump designated Anthropic a "supply chain risk," ordered all federal agencies to stop using Claude, and publicly called the company "woke."

A federal judge, Rita F. Lin, issued a preliminary injunction (a court order temporarily blocking a government action) to halt the ban. Her ruling was scathing:

"Nothing supports the Orwellian notion that an American company may be branded a potential adversary and saboteur of the U.S. for exposing a disagreement with the government."

The irony wasn't lost on the public. After the government rift, Claude became the #1 most-downloaded free app on Apple's App Store in the United States — a massive consumer awareness boost that no marketing budget could buy.

Still, the damage to enterprise trust was real. More than 100 enterprise customers expressed concerns about continuing to use Claude after the ban. Given that enterprise clients constitute 80% of Anthropic's revenue, that's an existential-level customer concentration risk (when most income depends on a small group of buyers who could all leave at once).

Worth noting: OpenAI CEO Sam Altman stated that he also wouldn't permit his models to be used for domestic surveillance, which suggests the Trump administration was singling out Anthropic rather than enforcing a universal policy.

Claude AI's Censorship Problem Is Driving Away Power Users

Safety isn't just a political issue for Anthropic — it's becoming a product problem too.

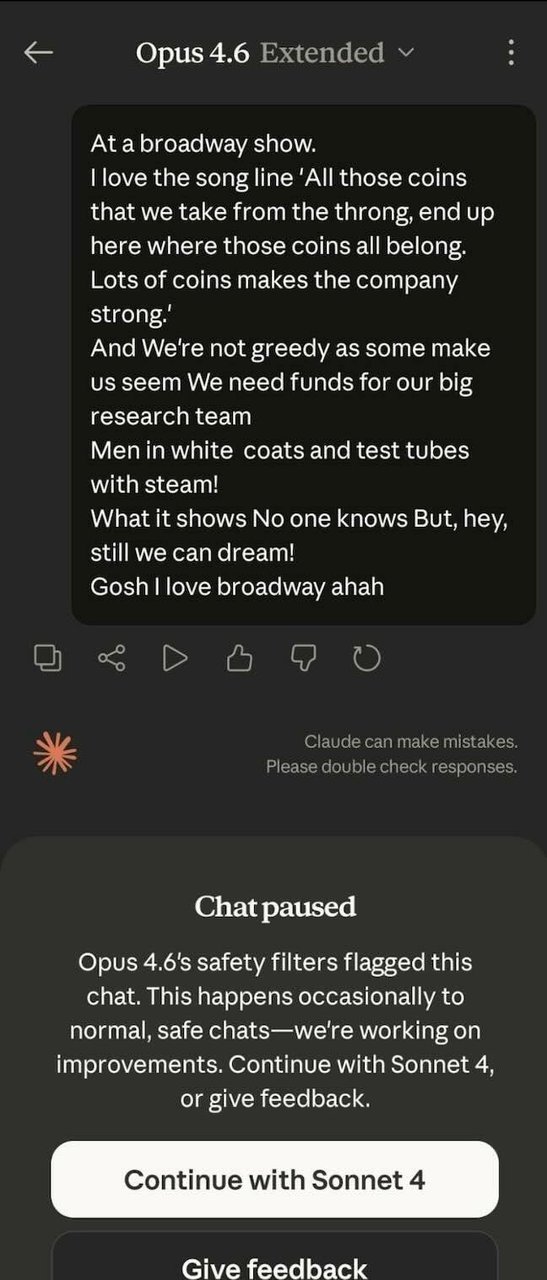

Claude Opus 4.6, the company's most powerful model, has been described by security researchers as "very, very, very heavily censored." The model's CBRN filters (chemical, biological, radiological, and nuclear safety checks designed to prevent misuse) trigger false positives on completely innocent topics — including, in one widely shared example, the Broadway musical Urinetown.

At least one security researcher publicly canceled a $200/month Claude subscription over the excessive censorship. For a company that charges premium prices specifically because of its quality advantage, alienating power users (advanced professionals who push the tool to its limits and influence industry adoption) is a dangerous trend — especially when cheaper, less filtered Chinese alternatives are one click away.

Anthropic's $60 Billion IPO: The Biggest Tech Gamble of 2026

Despite everything — the losses, the market share collapse, the government conflict, the censorship backlash — Anthropic is pressing ahead with IPO plans. Early discussions with bankers are underway, law firm Wilson Sonsini is already involved, and the target window is Q4 2026, potentially October, with a raise exceeding $60 billion.

That would make it one of the largest technology debuts in history. According to PitchBook, the Anthropic and OpenAI IPOs combined could "create more value than all VC-backed IPOs since 2000 have collectively."

OpenAI, valued at $730 billion (nearly 2x Anthropic's valuation), is planning its own IPO in the same timeframe after securing a $110 billion funding round. The two rivals, linked by history since Anthropic was founded by former OpenAI researchers, will be competing for the same investor dollars in the same quarter.

Anthropic has been busy shoring up its position: a $100 million expansion of the Claude Partner Network, the launch of The Anthropic Institute for AI safety research, and the acquisition of AI startup Vercept to improve Claude's computer-use capabilities (the ability for AI to directly interact with software on your screen, clicking buttons and filling forms like a human would).

But the fundamental tension remains. Anthropic was founded to make AI safer. That mission now creates its deepest challenges: refuse the Pentagon, get labeled a security threat. Filter aggressively, lose power users. Charge premium prices for safety, watch Chinese competitors offer 90% of the value at a fraction of the cost.

The company that was built to make AI safer is now being punished by markets, by governments, and by users for doing exactly that. Whether investors are willing to put up $60 billion on the premise that safety is worth the premium will determine whether Anthropic's founding bet pays off or becomes its epitaph.
