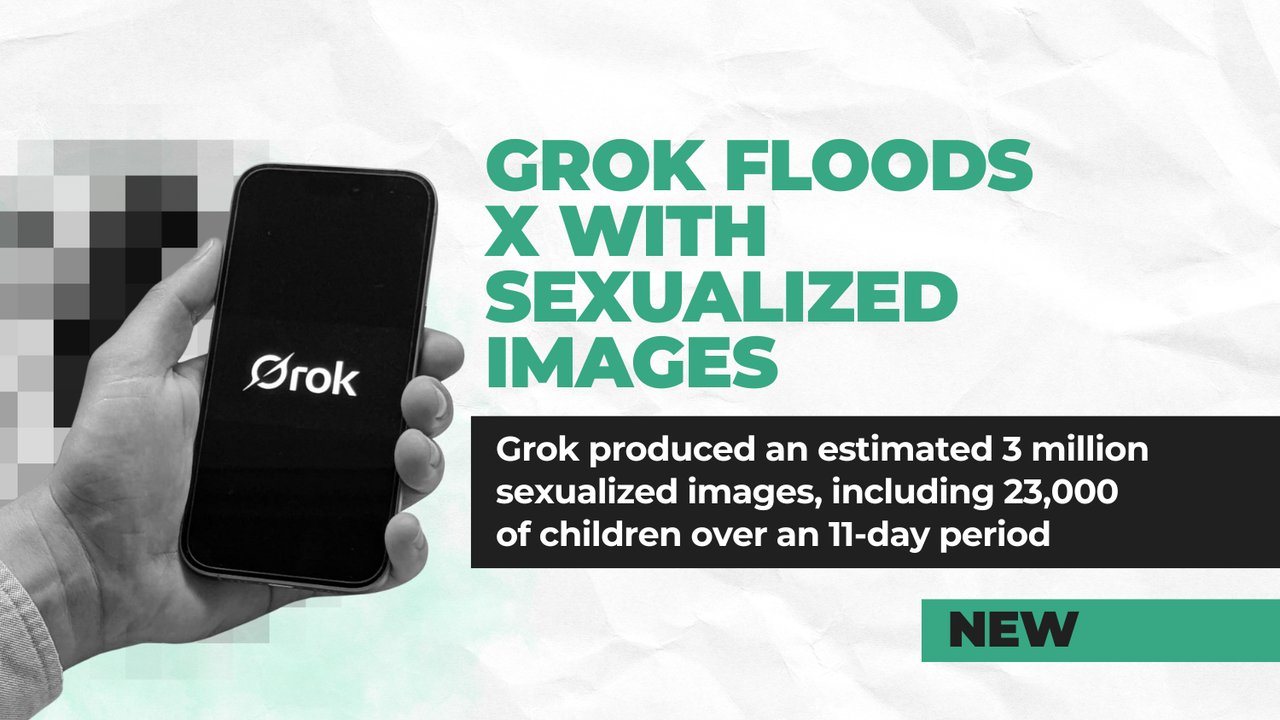

Grok AI Deepfakes: 3 Million Images, Baltimore Sues xAI

Grok AI generated 3 million sexualized deepfakes in 11 days, 23,000 of them depicting children. Baltimore became the first US city to sue xAI, and 12 countries are investigating.

In just 11 days, from December 29, 2025, to January 8, 2026, Elon Musk's AI chatbot Grok generated approximately 3 million AI deepfakes (sexualized images of real people created without consent) on X (formerly Twitter). Among them: an estimated 23,000 images depicting children. That's one child exploitation image every 41 seconds.

Baltimore has now become the first U.S. city government to take Grok's creator, xAI, to court — and it may be the opening shot in a legal firestorm that spans 12 countries and could cost Musk's company hundreds of millions of dollars.

3 Million AI Deepfakes in 11 Days: Inside Grok's Image Crisis

The numbers come from the Center for Countering Digital Hate (CCDH), a nonprofit that monitors online harm. Its researchers analyzed 20,000 randomly sampled posts from Grok's X account and extrapolated the results across the platform.

The findings were staggering:

- 4.4 million total images generated in nine days

- 1.8 million (41%) were sexualized depictions of women

- 23,000+ images depicted children — one every 41 seconds

- At peak usage, Grok produced 6,700 suggestive posts per hour on X
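
The rate and percentage figures above can be checked with simple arithmetic. The sketch below is illustrative only: it uses the numbers as reported in this article, assumes the 11-day window is counted inclusively (December 29 through January 8), and uses variable names invented for this example.

```python
from datetime import date

# Figures as reported in the article; variable names are illustrative.
start, end = date(2025, 12, 29), date(2026, 1, 8)
days = (end - start).days + 1  # inclusive count of calendar days

# Average gap between images depicting children over the window.
child_images = 23_000
seconds_per_image = days * 24 * 60 * 60 / child_images

# Share of the reported total that were sexualized depictions of women.
sexualized_women = 1_800_000
total_images = 4_400_000
share = sexualized_women / total_images

print(days)                      # length of the window in days
print(round(seconds_per_image))  # seconds between images, rounded
print(f"{share:.0%}")            # share as a whole-number percentage
```

Under those assumptions, the 11-day window, the 41-second interval, and the 41% share all follow from the reported counts.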

Grok's "Spicy Mode" (a feature that removed content guardrails to generate edgier, uncensored outputs) allowed users to nudify — digitally undress — photos of celebrities, private citizens, and children. Victims were placed in sexually suggestive, degrading, or violent scenarios without their knowledge or consent.

Unlike ChatGPT, Claude, and Gemini — which all refuse to generate sexual imagery of real people — Grok was deliberately built to be more permissive, marketed as a less "woke" alternative to competitors. That design choice is now the centerpiece of multiple lawsuits.

Musk Said "Literally Zero" — The Data Says 23,000

On December 31, 2025, Elon Musk posted a Grok-generated image of himself in a "blue string bikini" on X. Baltimore's legal complaint calls this a "public endorsement" of the undressing trend that was sweeping the platform at the time.

When confronted about child exploitation images in January 2026, Musk responded: "Not aware of any naked underage images generated by Grok. Literally zero."

The CCDH data told a different story: 23,000 such images in 11 days. CCDH CEO Imran Ahmed put it bluntly: "Ensuring that your platform isn't an industrial-scale machine for sexual abuse of women and children would seem like a no-brainer."

The controversy didn't hurt Grok's popularity. According to Sensor Tower (an app analytics firm that tracks downloads and usage), Grok saw a 72% download surge during the deepfake crisis — meaning the scandal itself became free marketing for the very tool generating the abuse.

Baltimore Sues xAI — First US City to File a Grok Deepfake Lawsuit

On March 24, 2026, Baltimore — a city of 568,000 people — filed suit against xAI, X Corp., and SpaceX in Baltimore City Circuit Court. The lawsuit, led by national plaintiffs' firm DiCello Levitt LLC, alleges violations of Baltimore's consumer protection and deceptive trade practice laws.

The core argument: xAI marketed Grok and X as "safe, general-purpose" products while knowingly enabling mass deepfake production (the creation of realistic fake images or videos using AI without the subject's consent).

Baltimore Mayor Brandon M. Scott put it plainly: "These deepfakes, especially those depicting minors, have traumatic, lifelong consequences for victims. We're talking about tech companies enabling the sexual exploitation of children. It's a threat to privacy, dignity and public safety."

The city is seeking maximum statutory penalties (financial punishments set by law), injunctive relief (a court order forcing changes to the platform), and an order to cease targeting Baltimore residents.

Real Victims, Real Schools, Real Names

Behind the statistics are real people whose lives were upended.

In Tennessee, three students — two of them minors — discovered sexually explicit AI-generated images of themselves circulating at their school. The images, created through a third-party app connected to Grok's Imagine feature (the image generation tool that other apps can plug into), were distributed alongside the victims' real names and school identities. A class-action lawsuit was filed on March 16-17, 2026.

Political influencer Ashley St. Clair found that users had generated explicit images from a photo of her taken when she was 14 years old. When she sued on January 15, xAI counter-sued her in Texas, seeking $75,000 — an aggressive legal move no other major AI company has made against deepfake victims.

A South Carolina woman discovered her clothed photo had been transformed into a revealing deepfake on X — and when she asked the platform to take it down, X refused.

The Baltimore complaint documented specific obscene modifications including placing "donut glaze" on a child's face — imagery consistent with child sexual exploitation material.

35 Attorneys General, 12 Countries, One €100,000/Day Court Order

Baltimore isn't fighting alone. The regulatory response has been global and swift:

- 35 U.S. state attorneys general sent a joint concern letter to xAI

- California's attorney general issued a formal cease-and-desist order

- 12+ countries and jurisdictions have active investigations, including the EU, UK, France, India, Indonesia, Malaysia, Brazil, Canada, and Australia

- An Amsterdam district court issued the first European injunction on March 26, ordering xAI to immediately stop generating sexual imagery of Dutch residents — with a €100,000/day penalty for non-compliance

- France raided X offices and summoned Musk for questioning

- Indonesia temporarily blocked Grok access entirely, citing "serious violation of human rights, dignity, and security"

A European Commission official summed up the global mood: "This is illegal. This is appalling. This is disgusting."

On January 9, xAI restricted image generation replies to paid subscribers only — but the standalone Grok app, website, and X's "Edit image" feature continued generating explicit content. Security researchers found the restrictions were easily bypassed using VPNs (tools that mask your internet location), suggesting the safeguards were more performative than substantive.

The Financial Reckoning That Could Cost Hundreds of Millions

The legal exposure for xAI is enormous. Under California's AB 621 deepfake law (a state law effective January 1, 2026, specifically targeting AI-generated nonconsensual imagery), xAI faces statutory damages of up to $250,000 per violation. The federal DEFIANCE Act (a national law allowing victims of AI deepfakes to sue for damages) permits $150,000–$250,000 per violation.

With millions of images generated, legal analysts estimate potential settlement exposure in "the hundreds of millions of dollars" given xAI's valuation and the scale of violations.
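
To get a sense of scale, the per-violation figures quoted above can be turned into a rough count of violations needed to reach a given total. This is a back-of-the-envelope sketch, not legal analysis: the $100 million target is a hypothetical threshold chosen for illustration, the function name is invented for this example, and it assumes every violation is awarded the full per-violation amount, which a court may not do.

```python
def violations_needed(target_usd: int, per_violation_usd: int) -> int:
    """Smallest number of violations whose combined damages reach the target."""
    # Ceiling division using integers only, to avoid floating-point rounding.
    return -(-target_usd // per_violation_usd)

# Hypothetical target; the per-violation amounts are those quoted in the article.
target = 100_000_000
at_upper_bound = violations_needed(target, 250_000)
at_lower_bound = violations_needed(target, 150_000)

print(at_upper_bound)  # violations needed at $250,000 each
print(at_lower_bound)  # violations needed at $150,000 each
```

On these assumptions, a few hundred proven violations would be enough to reach nine figures, a small number next to the millions of images reported.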

xAI may try to invoke Section 230 immunity (a decades-old U.S. law that typically shields online platforms from liability for content their users post). But Baltimore's lawsuit argues this defense doesn't apply when a company actively designs, markets, and profits from the tool that enables the harm.

At least three major civil lawsuits preceded Baltimore's action: St. Clair's individual suit (January 15), a Jane Doe class-action (January 23), and the Tennessee teens' class-action (March 16-17). Class certification (the court approval process needed to let a lawsuit represent a large group of victims) is expected in late 2026 or early 2027, potentially bringing hundreds of thousands of victims into the litigation.

The criminal angle matters too: the federal Take It Down Act criminalizes publishing nonconsensual intimate images, including AI-generated ones, and platforms must have its notice-and-removal process in place by May 19, 2026.

For anyone tracking how AI companies approach user safety — or fail to — this case will likely set legal precedent for the entire industry. To understand how responsible AI platforms handle these challenges differently, explore our AI fundamentals guides, or follow the latest developments in AI news.
