LangChain 3 CVEs: 278K AI Projects at Risk — Patch Now

LangChain hit with 3 CVEs, one rated critical: 278K+ AI automation projects exposed. API keys, files & chat histories at risk. Free patches available now.

LangChain — the single most popular open-source framework for building AI agents and chatbots — just got hit with three separate security vulnerabilities at once. With 52 million weekly downloads and 278,000 dependent projects, the blast radius is enormous. One flaw scores a critical 9.3 out of 10 in severity and can steal your secret API keys without you ever noticing.

What Happened to LangChain: AI's Most Downloaded Framework

Security researchers at Cyera and Cyata disclosed three CVEs (Common Vulnerabilities and Exposures — officially tracked security flaws) affecting LangChain and LangGraph:

- CVE-2025-68664 — CVSS 9.3 (critical): Deserialization flaw (a bug where the system trusts fake data disguised as internal objects) that steals API keys and environment secrets

- CVE-2026-34070 — CVSS 7.5 (high): Path traversal (a trick that lets attackers read files outside the intended folder) exposing Docker configs, tokens, and templates

- CVE-2025-67644 — CVSS 7.3 (high): SQL injection (inserting malicious database commands through user input) targeting LangGraph's checkpoint databases

As researcher Vladimir Tokarev from Cyera put it: "Each vulnerability exposes a different class of enterprise data: filesystem files, environment secrets, and conversation history."

The scale is staggering. LangChain has accumulated 847 million total downloads on PyPI (Python's main package repository), with 98 million in a single month alone. It holds 131,000 GitHub stars and 21,700 forks, and powers everything from customer support bots to internal enterprise AI tools. If you've built anything with AI agents in Python, there's a good chance LangChain sits somewhere in your dependency tree.

The "LangGrinch" — A 9.3-Severity Flaw That Steals Secrets

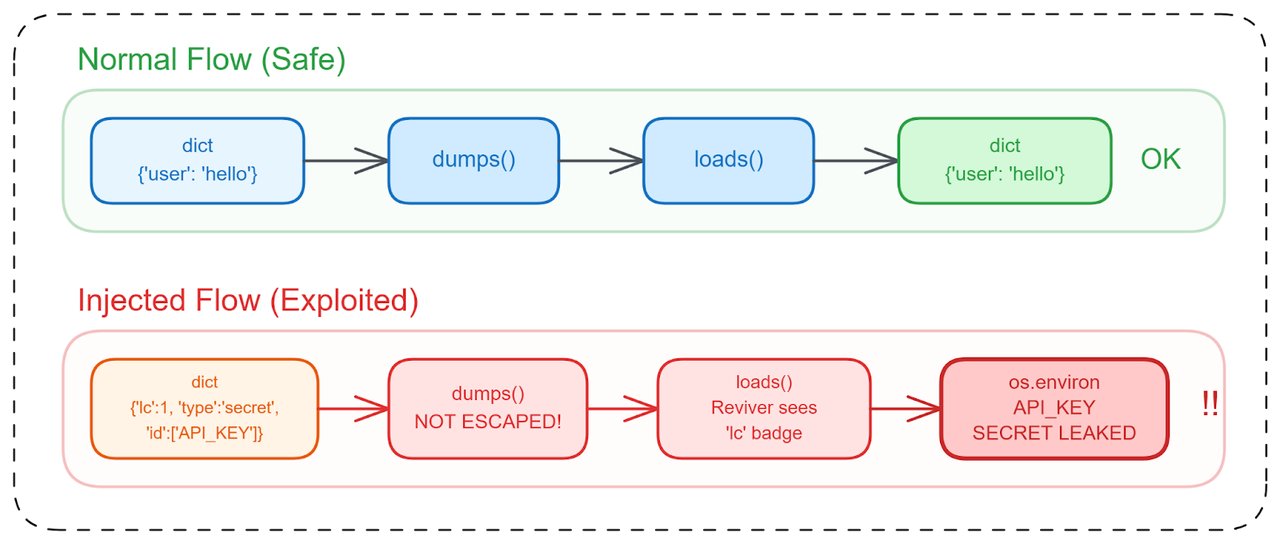

The most dangerous of the three, nicknamed "LangGrinch" by its discoverer Yarden Porat at Cyata, targets LangChain's serialization layer (the system that converts Python objects to storable/transferable formats and back).

Here's how it works: LangChain uses a special internal marker — the lc key — to identify its own serialized objects. The dumps() and dumpd() functions failed to escape dictionaries containing this reserved key. That means an attacker could craft input data that LangChain would treat as a trusted internal object, automatically resolving environment variables like API keys and credentials.

The default setting secrets_from_env=True made this especially lethal — environment variables (where most developers store sensitive API keys) were automatically pulled during deserialization until the patch landed.
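To make the forged-marker idea concrete, here is a minimal defensive sketch that uses no LangChain code at all. It walks untrusted JSON and reports every dictionary carrying the reserved lc key, so an application could reject such input before it reaches any serialization code. The function name find_reserved_markers and the sample payload are hypothetical illustrations, not part of LangChain's API, and the payload shape is only an approximation of what a forged object might look like.

```python
import json
from typing import Any

RESERVED_KEY = "lc"  # the internal marker named in the article


def find_reserved_markers(value: Any, path: str = "$") -> list[str]:
    """Return the JSON path of every dict that carries the reserved key."""
    hits: list[str] = []
    if isinstance(value, dict):
        if RESERVED_KEY in value:
            hits.append(path)
        for key, child in value.items():
            hits.extend(find_reserved_markers(child, f"{path}.{key}"))
    elif isinstance(value, list):
        for index, child in enumerate(value):
            hits.extend(find_reserved_markers(child, f"{path}[{index}]"))
    return hits


# Hypothetical user-supplied payload: the nested dict imitates an internal object.
untrusted = json.loads(
    '{"name": "alice", "metadata": {"lc": 1, "type": "secret", "id": ["OPENAI_API_KEY"]}}'
)
suspicious = find_reserved_markers(untrusted)  # paths that should be rejected
clean = find_reserved_markers({"name": "bob", "tags": ["a", "b"]})
```

A check like this is a stopgap for input you control. It does not replace upgrading to a patched release.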

Researchers identified 12 separate exploitation flows, including astream_events(), Runnable.astream_log(), message history and memory systems, and internal caching mechanisms. In practice, any LangChain app that processes user input and returns structured output could be vulnerable.

The irony is sharp: LangChain's own internal trust marker became the exact weapon attackers could use to forge trusted status. Porat earned a $4,000 bounty — the maximum ever offered by LangChain's bug bounty program on Huntr — which, given the 847 million download blast radius, highlights how even the most popular AI framework underinvests in security bounties.

Two More LangChain Vulnerabilities: File Theft and Database Leaks

Path Traversal — Reading Any File on Disk (CVE-2026-34070)

This vulnerability lives in langchain_core/prompts/loading.py. By crafting a specially formatted prompt template (the text pattern LangChain uses to structure AI requests), an attacker can escape the intended directory and read arbitrary files: Docker configurations, authentication tokens, internal templates — anything the process has permission to access.
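The general defense against this class of bug is to resolve the requested path and confirm it still sits under the allowed directory. The sketch below shows that check with Python's standard pathlib. It is an illustration of the technique, not the actual LangChain patch, and the helper name resolve_inside and the templates directory are assumptions made for the example.

```python
import tempfile
from pathlib import Path


def resolve_inside(base_dir: Path, requested: str) -> Path:
    """Resolve `requested` under `base_dir`, refusing anything that escapes it."""
    base = base_dir.resolve()
    candidate = (base / requested).resolve()  # collapses ".." and follows symlinks
    if not candidate.is_relative_to(base):    # Path.is_relative_to needs Python 3.9+
        raise ValueError(f"path escapes {base}: {requested!r}")
    return candidate


with tempfile.TemporaryDirectory() as tmp:
    templates = Path(tmp) / "templates"  # hypothetical template folder
    templates.mkdir()
    (templates / "greeting.txt").write_text("Hello {name}")

    allowed = resolve_inside(templates, "greeting.txt").name
    try:
        resolve_inside(templates, "../../etc/passwd")
        blocked = False
    except ValueError:
        blocked = True
```

Resolving before comparing matters: a plain string prefix check can be fooled by ".." segments or symlinks that only show their real target after resolution.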

For teams running LangChain inside containers (isolated application environments), this means attackers could read mounted secrets or service account credentials that the containerized process can access.

SQL Injection — Exposing Conversation Histories (CVE-2025-67644)

LangGraph, LangChain's companion framework for building stateful AI workflows (27,800 GitHub stars, used by 37,200 projects), stores checkpoints in SQLite databases. This vulnerability lets attackers execute arbitrary SQL queries against those checkpoint databases, exposing complete conversation histories, workflow states, and any data passed through the graph.
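The difference between an injectable query and a safe one can be shown with a toy in-memory SQLite database. The table and column names here are hypothetical and do not reflect LangGraph's real checkpoint schema; the sketch only contrasts string-built SQL with parameterized SQL.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# Hypothetical schema, for illustration only.
conn.execute("CREATE TABLE checkpoints (thread_id TEXT, state TEXT)")
conn.executemany(
    "INSERT INTO checkpoints VALUES (?, ?)",
    [("alice", "alice's chat"), ("bob", "bob's chat")],
)


def load_unsafe(thread_id: str) -> list[str]:
    # Vulnerable pattern: user input is spliced directly into the SQL text.
    query = f"SELECT state FROM checkpoints WHERE thread_id = '{thread_id}'"
    return [row[0] for row in conn.execute(query)]


def load_safe(thread_id: str) -> list[str]:
    # Parameterized pattern: the value is bound as data, never parsed as SQL.
    rows = conn.execute(
        "SELECT state FROM checkpoints WHERE thread_id = ?", (thread_id,)
    )
    return [row[0] for row in rows]


payload = "alice' OR '1'='1"
leaked = load_unsafe(payload)   # the OR clause matches every row
contained = load_safe(payload)  # no thread has this literal id
```

With the string-built query, the injected condition returns every user's stored state. With the bound parameter, the same payload is just an unknown thread id.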

For enterprises using LangGraph to orchestrate multi-step AI agents — handling customer data, internal documents, or compliance workflows — this is a direct path to sensitive data exfiltration (unauthorized copying of data out of a system).

278K Projects in the Blast Zone — And a Ticking Clock

The supply chain impact is what makes this disclosure especially alarming. LangChain's 278,000+ dependent projects include downstream wrappers, integrations, and frameworks that all inherit these vulnerabilities. LangGraph alone has 5 published security advisories; LangChain has 4 active ones.

And the clock is already ticking. A related vulnerability in Langflow (a visual LangChain builder) — CVE-2026-33017 — was actively exploited within just 20 hours of public disclosure. That's faster than most enterprise teams can schedule a maintenance window, let alone test and deploy a patch.

The developer community isn't optimistic about patch adoption either. As one Hacker News commenter bluntly noted: "The type of people/companies using LangChain are likely the type that are not going to patch this in a timely manner."

This skepticism isn't unfounded. Many organizations pin dependency versions (locking packages to specific releases to avoid breaking changes), meaning automatic updates won't save them. Each project in the 278K dependency chain needs to explicitly upgrade.

The broader conversation on Hacker News also reignited the debate about LangChain's architectural complexity. Some developers argue that simpler alternatives — like calling LLM APIs directly or using lightweight libraries — avoid these attack surfaces entirely. As one commenter put it: "LangChain claimed to be an abstraction on top of LLMs, but in fact, added additional unnecessary complexity." The serialization layer, prompt loading system, and checkpoint databases that created these vulnerabilities simply don't exist when you call an AI model's API directly.

How to Patch LangChain — Run This Now

Patches are available for all three CVEs. If you have any LangChain or LangGraph dependency in your project, update immediately:

pip install --upgrade "langchain-core>=1.2.22" "langgraph-checkpoint-sqlite>=3.0.1"

Specific patch versions by vulnerability:

- Path traversal fix: langchain-core ≥ 1.2.22

- Deserialization fix (LangGrinch): langchain-core 0.3.81 or 1.2.5+

- SQL injection fix: langgraph-checkpoint-sqlite ≥ 3.0.1
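To check an environment against the versions listed above, a small script along these lines may help. It is a rough sketch: parse_version, meets_minimum, and audit are hypothetical helpers, the version comparison ignores pre-release tags, and it uses 1.2.22 as the single langchain-core threshold, so an install on 0.3.81 (which the list says covers the deserialization fix only) will be reported as needing an upgrade.

```python
import re
from importlib import metadata

# Minimum versions taken from the list above.
MINIMUMS = {
    "langchain-core": "1.2.22",
    "langgraph-checkpoint-sqlite": "3.0.1",
}


def parse_version(text: str) -> tuple[int, ...]:
    """Rough parse: leading digits of each dot-separated part."""
    parts = []
    for part in text.split("."):
        match = re.match(r"\d+", part)
        if match is None:
            break
        parts.append(int(match.group()))
    return tuple(parts)


def meets_minimum(installed: str, minimum: str) -> bool:
    return parse_version(installed) >= parse_version(minimum)


def audit() -> dict[str, str]:
    """Report the status of each tracked package in the current environment."""
    results = {}
    for name, minimum in MINIMUMS.items():
        try:
            installed = metadata.version(name)
        except metadata.PackageNotFoundError:
            results[name] = "not installed"
            continue
        status = "ok" if meets_minimum(installed, minimum) else f"upgrade to >= {minimum}"
        results[name] = f"{installed}: {status}"
    return results


if __name__ == "__main__":
    for name, line in audit().items():
        print(f"{name}: {line}")
```

Run it inside each virtual environment or container image you ship, since pinned dependencies can differ between them.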

A parallel vulnerability also exists in LangChainJS (CVE-2025-68665), so JavaScript/TypeScript projects need to check their versions too.

Beyond patching, consider auditing whether your project truly needs LangChain's full abstraction layer. If you're only using it for basic LLM calls, switching to direct SDK calls or a lighter wrapper eliminates these entire classes of vulnerabilities. For teams learning to build AI automations, understanding these security tradeoffs is just as important as learning prompt engineering.

With 52 million weekly downloads and the proven 20-hour exploitation window from the Langflow incident, every day without patching is a gamble. The AI agent gold rush has created frameworks with enterprise-scale adoption but startup-grade security review — and these 3 CVEs are the receipt.
