Ethical AI Training Data: What Mr. Chatterbox Reveals

Ethical AI training with zero scraping is real: Mr. Chatterbox proves it with 28,035 Victorian books. The catch? 2.93B tokens isn't nearly enough.

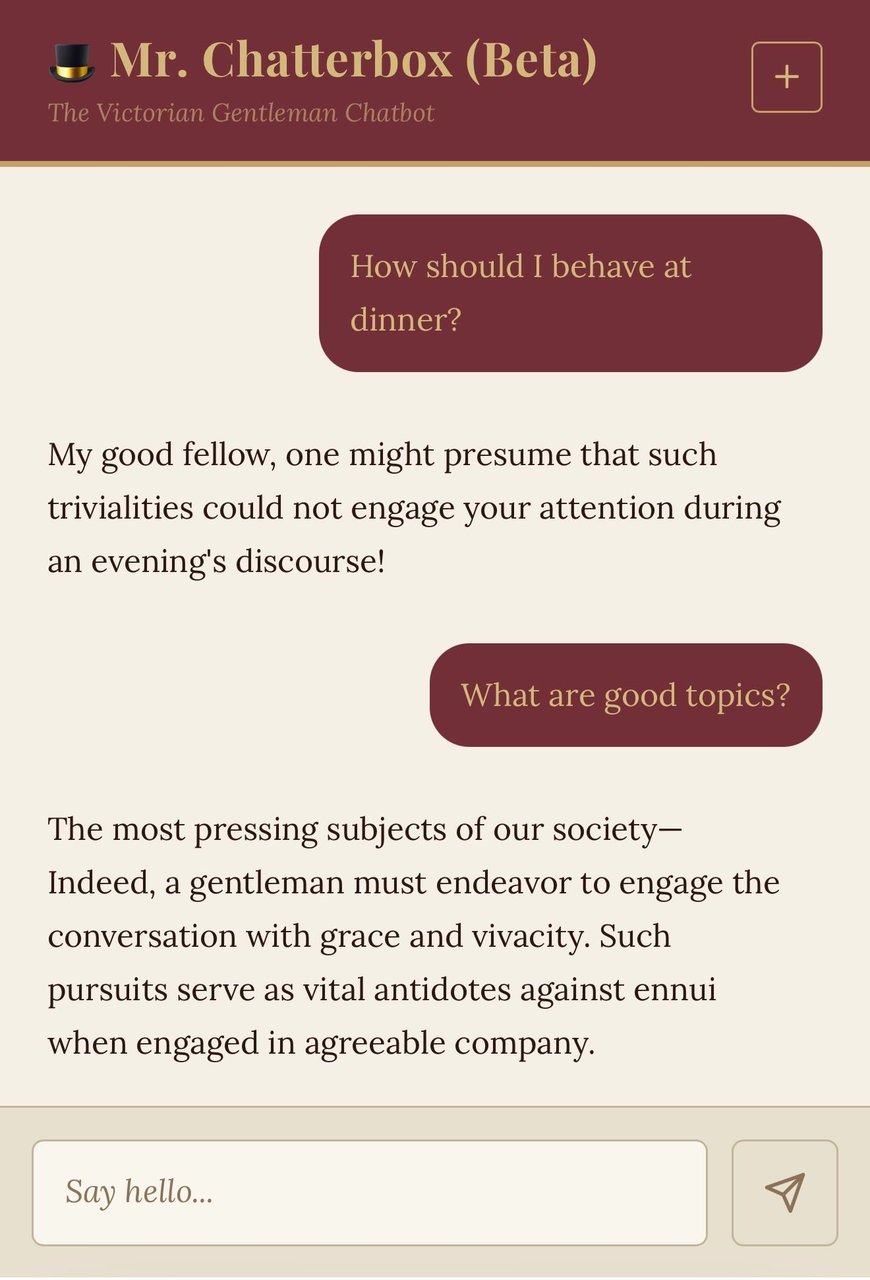

A developer just tackled the ethical AI training data problem by building a language model (an AI system that generates text by learning statistical patterns from a large body of writing) entirely from scratch using 28,035 out-of-copyright Victorian books — zero web scraping, zero copyright risk. The result is charming, historically intriguing, and — by the creator's own frank admission — nearly useless as a practical assistant.

That contrast tells us something the AI industry badly needs to hear: legally clean, public-domain training is achievable. But 19th-century libraries alone can't yet produce a conversationally capable model. Here's exactly why — and what it would take to fix it.

28,000 Victorian Books: Ethical AI Training Data Without Scraping

Trip Venturella's Mr. Chatterbox was trained entirely on texts from the British Library — 28,035 books published between 1837 and 1899. Every one is in the public domain (works whose copyright has expired and are free for anyone to use without permission), meaning no license agreements, no data-use disputes, no ongoing lawsuits.

That's a genuinely meaningful achievement. OpenAI, Google, and Meta are currently fighting billion-dollar copyright lawsuits from authors and publishers over their training datasets. Venturella sidesteps all of that by design.

- Training corpus: 28,035 British Library books

- Time period: 1837–1899 (62 years of Victorian-era literature)

- Total training tokens: 2.93 billion (a "token" is roughly one word or word-fragment the model processes)

- Model parameters: 340 million (the adjustable internal values that determine how the model responds — similar in size to GPT-2-Medium from 2019)

- File size: 2.05 GB — small enough to run on most personal laptops

- Training framework: Andrej Karpathy's nanochat library

The model is available free on Hugging Face (a popular platform for sharing AI models), and you can test it live at Hugging Face Spaces without installing anything.

Victorian Charm, Modern Disappointment — An Honest Review

Tech analyst Simon Willison, who built a plugin to run Mr. Chatterbox locally, gave a refreshingly direct assessment:

"Honestly, it's pretty terrible. Talking with it feels more like chatting with a Markov chain than an LLM — the responses may have a delightfully Victorian flavor to them but it's hard to get a response that usefully answers a question."

— Simon Willison, simonwillison.net

A Markov chain (a statistical method dating to the early 1900s; in its simplest text-generating form it predicts the next word from only the immediately preceding word, with no broader view of context or intent) produces text that can sound plausible phrase by phrase but drifts incoherently over a full conversation. Modern LLMs avoid this by attending to long stretches of preceding text and by training on far more data.
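To make the comparison concrete, here is a minimal sketch of a first-order, word-level Markov chain text generator. It is an illustration only, not anything taken from Mr. Chatterbox or Willison's plugin; the function names and the sample sentence are made up for this example.

```python
import random
from collections import defaultdict


def build_bigram_table(text):
    """Map each word to the list of words that follow it in the text."""
    words = text.split()
    table = defaultdict(list)
    for current, following in zip(words, words[1:]):
        table[current].append(following)
    return table


def generate(table, start, length, seed=0):
    """Pick each next word by looking only at the single previous word."""
    rng = random.Random(seed)
    output = [start]
    for _ in range(length - 1):
        candidates = table.get(output[-1])
        if not candidates:  # dead end: this word never had a successor
            break
        output.append(rng.choice(candidates))
    return " ".join(output)


# Invented sample text, standing in for a real corpus.
sample = (
    "the gentleman took the carriage to the station and "
    "the lady took the train to the city"
)
table = build_bigram_table(sample)
print(generate(table, "the", 12))
```

Every adjacent word pair in the output appeared somewhere in the sample, which is why short stretches read naturally. Nothing ties the start of the sentence to its end, which is the drift Willison describes.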

Here's how Mr. Chatterbox stacks up against comparable models:

| Model | Parameters | Training Data | Data Source | Quality |
|---|---|---|---|---|
| Mr. Chatterbox | 340M | 2.93B tokens | Public domain ✓ | Markov-like |
| GPT-2-Medium (2019) | 345M | ~40 GB of text (WebText) | Web-scraped | Moderate |
| Claude / GPT-4 class | 70B–405B+ | 1T+ tokens | Mixed / licensed | Excellent |

The Chinchilla Problem: Why AI Training Data Volume Beats Ethics (For Now)

The Chinchilla scaling law (from a landmark 2022 DeepMind paper that estimated the compute-optimal ratio of training data to model size) suggests that a model should see roughly 20 training tokens per parameter.

For Mr. Chatterbox at 340 million parameters, that works out to approximately 6.8 billion tokens. But the entire filtered British Library corpus yielded only 2.93 billion tokens, a ratio of about 8.6 tokens per parameter and less than half the recommended amount.

Willison's own estimate is that the model would need roughly 4x more training data to become conversationally coherent, which is more than the Chinchilla ratio alone implies. That's not a flaw in Venturella's technique. It's a hard limit of the corpus he had to work with.

The gap in numbers

- Tokens achieved: 2.93 billion (8.6x parameter count)

- Tokens recommended: ~6.8 billion (20x parameter count, per Chinchilla)

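- Shortfall: approximately 3.87 billion tokens, which puts the Chinchilla target at about 2.3x the available corpus

- Bottom line: the dataset would need to be at least ~2.3x larger to meet the Chinchilla ratio, and closer to ~4x larger by Willison's estimate, for this model to produce reliable answers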
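The arithmetic behind these figures can be checked in a few lines. This is a sketch using only the numbers quoted in this article and the 20-tokens-per-parameter rule of thumb; the function and variable names are illustrative.

```python
PARAMETERS = 340_000_000        # model size quoted in the article
CORPUS_TOKENS = 2_930_000_000   # tokens in the filtered corpus


def chinchilla_gap(parameters, corpus_tokens, tokens_per_parameter=20):
    """Compare a corpus against the Chinchilla tokens-per-parameter rule."""
    target = parameters * tokens_per_parameter
    return {
        "actual_ratio": corpus_tokens / parameters,
        "target_tokens": target,
        "shortfall_tokens": target - corpus_tokens,
        "corpus_multiplier": target / corpus_tokens,
    }


gap = chinchilla_gap(PARAMETERS, CORPUS_TOKENS)
print(f"Tokens per parameter: {gap['actual_ratio']:.1f}")
print(f"Target tokens:        {gap['target_tokens'] / 1e9:.2f}B")
print(f"Shortfall:            {gap['shortfall_tokens'] / 1e9:.2f}B")
print(f"Corpus multiplier:    {gap['corpus_multiplier']:.1f}x")
```

The multiplier comes out at about 2.3, so meeting the Chinchilla ratio means a bit more than doubling the corpus. Willison's roughly 4x figure is a separate, more demanding estimate of what a usable conversational model would need.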

How to Run Mr. Chatterbox: Open-Source AI Model Setup

Willison built an llm-mrchatterbox plugin (an add-on module that integrates the model into his open-source LLM command-line tool) — and did so using Claude Code, going from written specification to working implementation in a single session. It's a clean example of AI-assisted development in practice.

Standard install (requires Python 3.8+):

pip install llm

llm install llm-mrchatterbox

llm chat -m mrchatterbox

One-liner with no prior setup:

uvx --with llm-mrchatterbox llm chat -m mrchatterbox

Single question, no interactive mode:

llm -m mrchatterbox "Good day, sir"

The ~2.05 GB model file downloads automatically on first run. To delete the cached model later:

llm mrchatterbox delete-model

Prefer zero installs? The live browser demo on Hugging Face Spaces works immediately: no account, no downloads required.
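If you would rather call the model from a script, one option is to wrap the single-question command shown above. The sketch below assumes the `llm` tool and the llm-mrchatterbox plugin are already installed and on your PATH; the helper names `build_prompt_command` and `ask` are hypothetical and not part of the plugin.

```python
import subprocess


def build_prompt_command(prompt, model="mrchatterbox"):
    """Return the argument list for a one-shot `llm -m <model> "<prompt>"` call."""
    return ["llm", "-m", model, prompt]


def ask(prompt, model="mrchatterbox"):
    """Run the command and return the model's reply as a string.

    Assumes `llm` and the plugin are installed. The first call may take a
    while because the model file is downloaded on first run.
    """
    result = subprocess.run(
        build_prompt_command(prompt, model),
        capture_output=True,
        text=True,
        check=True,  # raise CalledProcessError if the CLI exits with an error
    )
    return result.stdout.strip()


if __name__ == "__main__":
    print(ask("Good day, sir"))
```

Passing the arguments as a list rather than a shell string means quotes or other special characters in the prompt need no escaping.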

Why Ethical AI Training Data Still Matters — Even If Outputs Are Terrible

Mr. Chatterbox isn't going to replace your writing assistant. But it proves — concretely, with a working model you can download today — that training an AI on legally unambiguous, fully public-domain data is possible. No scraped articles. No disputed books. No licensing minefields.

As the public domain expands (in the U.S., each January 1 brings in works published 95 years earlier), and as synthetic data generation (using existing AI to create new training examples from scratch, without scraping the live web) matures, the gap between "ethical training data" and "sufficient training data" will narrow.

Mr. Chatterbox is a proof-of-concept, not a product — and an honest one at that. If you're curious about how language models are built, what scaling laws actually mean in practice, or just want to receive a reply composed in the measured prose of an 1880s London gentleman, you can try it right now for free. Just keep your expectations appropriately Victorian.
