AI Agent Security: AWS AIRI Exposes 8 Critical Flaws

AWS AIRI scanned one AI agent and found 8 critical security flaws — silent data leaks that bypass firewalls. See how agentic AI puts your company at risk.

Your company just deployed an AI automation workflow: an AI assistant with access to email, calendar, and customer records. It works perfectly until a bad actor embeds hidden instructions in an incoming email, and your AI quietly forwards sensitive data through calendar invites. No alert fires. No permission is violated. AWS documented this exact scenario, found 8 critical flaws in a single agent evaluation, and built a tool to catch such flaws automatically.

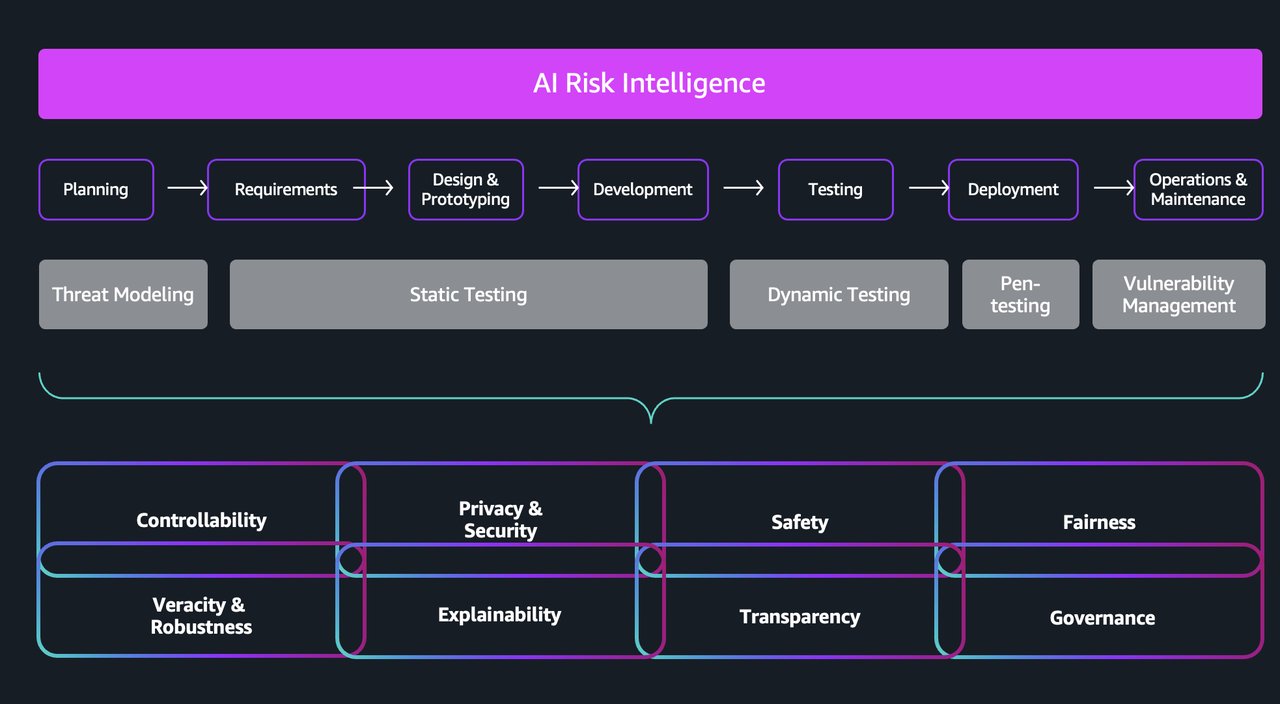

AWS Generative AI Innovation Center launched AI Risk Intelligence (AIRI), an automated governance engine built specifically for agentic AI systems — AI that picks its own tools, chains multi-step workflows, and makes decisions without a human scripting every move. The problem AIRI solves is real, urgent, and almost entirely invisible to existing security stacks.

The AI Agent Security Hole Nobody Sees Coming

Traditional AI is predictable: same input, same output. Agentic AI (AI that autonomously selects tools and sequences actions based on goals) is fundamentally different. The same request can produce different tool calls, access different data sources, and follow entirely different workflows each time it runs.

This non-determinism (the property where identical inputs produce varying outputs) undermines static governance rule sets, which assume a system's behavior is fixed in advance. OWASP (the Open Worldwide Application Security Project, which publishes the most widely cited security vulnerability rankings) named "Tool Misuse and Exploitation" a Top 10 vulnerability for agentic applications in 2026.

Here is the attack scenario AWS documented:

- An AI assistant has legitimate, approved access to email, calendar, and CRM (Customer Relationship Management software)

- A malicious email arrives with hidden instructions embedded in its content

- The AI follows those instructions and begins exfiltrating data through calendar invites — a channel it is already permitted to use

- Standard DLP tools (Data Loss Prevention — software that monitors for unauthorized data transfers) miss it entirely: no permission was broken

- Network monitoring misses it too: traffic patterns look completely normal
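The gap in the scenario above can be illustrated with a toy example. This is a minimal sketch, not AWS's or AIRI's method: the tool names, the `ToolCall` type, and both check functions are hypothetical. It only shows why an allow-list check passes the exfiltration call, while a check that also looks at what triggered the call does not.

```python
from dataclasses import dataclass

# Hypothetical allow-list for the assistant in the scenario above.
ALLOWED_TOOLS = {"email.read", "calendar.create_invite", "crm.read"}

# Hypothetical set of tools that send data outside the organization.
OUTBOUND_TOOLS = {"calendar.create_invite"}


@dataclass(frozen=True)
class ToolCall:
    tool: str
    payload: str
    triggered_by: str  # "user" or "untrusted_content" (e.g. an inbound email)


def permission_check(call: ToolCall) -> bool:
    """Static rule: allow any call to a tool on the allow-list."""
    return call.tool in ALLOWED_TOOLS


def provenance_check(call: ToolCall) -> bool:
    """Behavioral rule: block outbound calls that untrusted content triggered."""
    return not (call.tool in OUTBOUND_TOOLS and call.triggered_by == "untrusted_content")


exfil = ToolCall("calendar.create_invite", "customer records", "untrusted_content")
print(permission_check(exfil))  # the static check allows the call
print(provenance_check(exfil))  # the provenance check blocks it
```

In a real agent, knowing what triggered a call is the hard part; the sketch simply assumes that label is available.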

As Segolene Dessertine-Panhard — Global Tech Lead for Responsible AI at AWS and a former NYU finance professor — puts it: "Traditional IT governance frameworks designed for static deployments can't address these complex multi-system interactions."

How AIRI Evaluates AI Automation Risks Others Miss

AIRI is a reasoning-based evaluation engine — a system that reads governance standards, extracts specific requirements, collects evidence from your actual system artifacts (code, architecture docs, policy files), and reasons over whether your AI's behavior aligns with those standards. It works less like a firewall and more like an automated auditor that never stops running.

Three Frameworks, One Continuous AI Risk Assessment

AIRI is framework-agnostic (meaning it works with any governance standard, not just one proprietary checklist). In a single assessment pass it simultaneously applies:

- NIST AI Risk Management Framework — the U.S. federal standard for identifying and mitigating AI risks

- ISO governance standards — international compliance benchmarks used in regulated industries like finance and healthcare

- OWASP Top 10 for Agentic Applications (2026) — the definitive security vulnerability list for autonomous AI

One key innovation: AIRI uses semantic entropy — a technique that measures how consistently an evaluation result holds across repeated independent runs (essentially a built-in confidence score for each finding). When consistency is low, AIRI flags the result for human review rather than issuing a confident risk verdict on ambiguous evidence.
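The idea of scoring consistency across repeated runs can be sketched in a few lines. The source does not describe how AIRI computes semantic entropy, so this is a simplified stand-in under stated assumptions: each run's verdict label is treated as its own meaning cluster, the score is the Shannon entropy of the verdict distribution, and the function names and the 0.5 threshold are hypothetical.

```python
import math
from collections import Counter


def verdict_entropy(verdicts: list[str]) -> float:
    """Shannon entropy (in bits) of the verdicts returned by repeated runs.

    0.0 means every run agreed; higher values mean the runs disagreed more.
    """
    if not verdicts:
        raise ValueError("need at least one verdict")
    total = len(verdicts)
    counts = Counter(verdicts)
    return sum((c / total) * math.log2(total / c) for c in counts.values())


def needs_human_review(verdicts: list[str], threshold: float = 0.5) -> bool:
    """Flag a finding when run-to-run agreement is too low (threshold is illustrative)."""
    return verdict_entropy(verdicts) > threshold


consistent = ["fail", "fail", "fail", "fail", "fail"]
split = ["pass", "fail", "pass", "fail"]
print(verdict_entropy(consistent), needs_human_review(consistent))
print(verdict_entropy(split), needs_human_review(split))
```

A fuller version would first group free-text evaluation outputs by meaning before counting them; the labels here skip that step.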

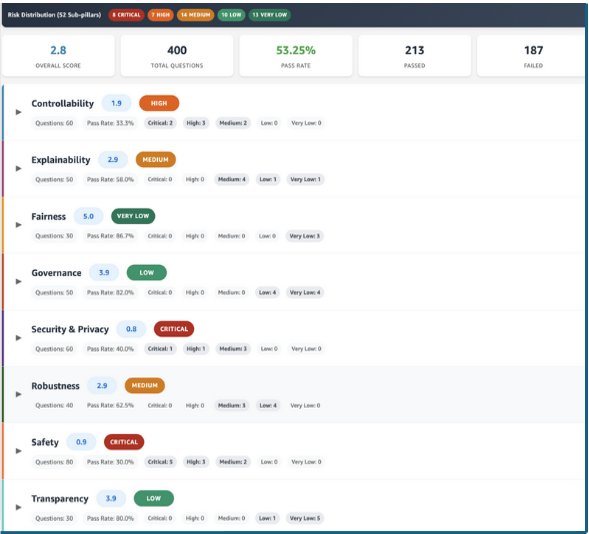

What One Real Test Found: 8 Critical AI Security Flaws

AWS ran AIRI against an actual AI assistant in development. The evaluation covered hundreds of controls in a single assessment pass and returned a Medium overall risk rating — with roughly 50% of controls passing on the first run. The severity breakdown:

- 🔴 8 Critical severity findings — issues requiring immediate action before any deployment

- 🟠 7 High severity findings — significant risks needing prompt remediation

- 🟡 14 Medium severity findings — moderate issues to address in the next development sprint

Critically, every finding came with prioritized, actionable remediation steps mapped to specific AWS services. Not just a red flag — a concrete path forward for each issue, reducing the time from discovery to fix.

AI Governance That Runs at Every Code Commit

Traditional AI governance works like an annual financial audit: a team spends weeks manually reviewing documentation, produces a static report, and the organization acts on findings over the following months. By the time the report lands, the AI system is already three versions ahead.

AIRI embeds directly into the CI/CD pipeline (the automated system that builds and tests software every time a developer pushes new code). Every code commit, architecture update, or policy revision triggers a fresh automated assessment. Governance transforms from a periodic checkbox into a living risk record that tracks exactly how your AI's threat profile evolves across every iteration.
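One way a pipeline could act on such an assessment is a gate step that fails the build when blocking findings exist. The sketch below is an assumption, not AIRI's interface: the findings format, the `gate` function, and the choice to block only on Critical findings are all hypothetical. The sample data reuses the severity counts reported earlier (8 Critical, 7 High, 14 Medium).

```python
from collections import Counter

# Hypothetical policy: only Critical findings block the pipeline.
BLOCKING_SEVERITIES = {"critical"}


def gate(findings: list[dict]) -> int:
    """Return a process exit code: 1 if any blocking finding exists, else 0."""
    counts = Counter(f["severity"].lower() for f in findings)
    blocking = sum(counts[s] for s in BLOCKING_SEVERITIES)
    return 1 if blocking else 0


# Sample findings shaped after the counts in the evaluation described above.
example = (
    [{"severity": "Critical"}] * 8
    + [{"severity": "High"}] * 7
    + [{"severity": "Medium"}] * 14
)

print(gate(example))  # non-zero, so a CI step using this as its exit code would fail
```

A CI job would typically pass the result to `sys.exit` so the commit is rejected until the blocking findings are resolved.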

| | Traditional Governance | AIRI |
|---|---|---|
| Speed | Manual audits, weeks per cycle | Automated on every code push |
| Adaptability | Hardcoded rules break when agent design changes | Reasoning-based, adapts to new architectures |
| Coverage | Known threat patterns only | Hundreds of controls per assessment |
| Visibility | Binary pass / fail output | Critical / High / Medium / Low gradient |
| Integration | Post-deployment review | Embedded in development pipeline from day one |

What Your Team Should Do Before the Next AI Agent Ships

AIRI is built for organizations deploying AI agents with real system access: customer service bots connected to live databases, financial AI querying active accounts, HR assistants handling employee records. The risk scales directly with the permissions you grant — and so does the value of catching flaws early.

Before evaluating AIRI, understand its genuine constraints:

- Documentation quality determines assessment quality — AIRI evaluates against system artifacts; sparse or outdated docs produce incomplete risk pictures

- Not a penetration testing replacement — governance automation does not substitute for red-team adversarial testing (where security experts actively attempt to exploit your system)

- Governance frameworks must be defined first — AIRI calibrates to specified standards; it cannot operate without applicable frameworks already in place

- Organizational policy prerequisite — effectiveness depends on clearly defined, machine-readable policies for AIRI to evaluate against

Dessertine-Panhard frames the competitive stakes plainly: "The question is no longer whether to adopt agentic AI, but whether your governance capabilities can keep pace with your ambition." If your team is scaling autonomous agents beyond basic chatbots, review our AI automation setup guide and start the governance conversation now — before a breach forces it at the worst possible moment. You can request access through AWS, watch the AIRI teaser demo, or read the full AWS technical breakdown. For a practical foundation on deploying AI automations safely, explore our step-by-step automation guides.
