LiteLLM Supply Chain Attack: 40,000 Downloads Poisoned

A supply chain attack on LiteLLM's PyPI package led to more than 40,000 downloads of poisoned versions of a tool that sees 3 million downloads a day. Check your version now. Also covered: Anthropic's three-agent blueprint for long-running AI automation.

A supply chain attack just hit LiteLLM, a tool downloaded 3 million times per day by developers building AI automation applications, and the poisoned versions were downloaded more than 40,000 times before anyone noticed. That's the headline, but the real story is bigger: AI infrastructure is maturing rapidly, and the ability to secure it isn't keeping pace.

This week brought four infrastructure shifts worth understanding: a critical security compromise in one of AI development's most-used tools, Anthropic's structured three-agent blueprint for keeping AI reliable over multi-hour tasks, an experimental tool called TigerFS that lets you browse a PostgreSQL database like a folder on your desktop, and GitHub automating the most tedious part of accessibility compliance — all while a new security report reveals a 4.5x risk multiplier hiding inside most AI deployments.

40,000 Downloads Compromised, With 3 Million Daily Downloads in the Blast Radius

LiteLLM is what developers use to connect their applications to multiple AI providers — OpenAI, Anthropic, Google — through a single interface. Think of it as a universal remote for AI models. Because it handles so much AI traffic, it's become one of the most critical pieces of plumbing in the modern development ecosystem.

Attackers successfully uploaded malicious versions to PyPI (the Python package repository — the central store where Python developers download libraries and tools), and those versions were downloaded over 40,000 times before the attack was discovered. Any developer who ran pip install litellm during the exposure window may have installed compromised code on their machines or production servers.

This is a supply chain attack: the attacker poisons the software distribution pipeline rather than targeting individual users directly. It's particularly dangerous because most developers implicitly trust packages from established, widely used open-source projects, and LiteLLM's 3 million daily downloads made it an exceptionally high-value target.

Check your version right now: Run pip show litellm in your terminal and compare the version against the official LiteLLM GitHub releases page. Update to the latest verified release immediately.
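The version check can also be scripted so it runs in CI or across many machines. The sketch below is a minimal example, not an official tool: `KNOWN_BAD_VERSIONS` and `check_package` are hypothetical names, and the set of bad versions is deliberately left empty because the affected version strings must come from the official LiteLLM advisory.

```python
from importlib import metadata

# Placeholder (assumption): fill this in from the official LiteLLM advisory.
# The affected version strings are intentionally not guessed here.
KNOWN_BAD_VERSIONS: set[str] = set()


def check_package(name: str, bad_versions: set[str]) -> str:
    """Return 'not-installed', 'compromised', or 'ok' for an installed package."""
    try:
        installed = metadata.version(name)
    except metadata.PackageNotFoundError:
        return "not-installed"
    return "compromised" if installed in bad_versions else "ok"


if __name__ == "__main__":
    print("litellm:", check_package("litellm", KNOWN_BAD_VERSIONS))
```

An "ok" result only means the installed version is not on your list. It says nothing about whether the machine ran a bad version earlier, so rotating any credentials that were exposed during the window is still worth considering.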

The Hidden 4.5x AI Security Risk in AI Automation Deployments

The LiteLLM attack isn't isolated — it's a symptom of a broader maturity gap in AI infrastructure security. A report from Teleport (a security infrastructure company focused on machine identity) found that AI systems configured with excessive permissions experience 4.5 times more security incidents than those using least-privilege access (a security principle meaning each system receives only the specific permissions it needs — nothing beyond that).

Most teams building AI agents today are moving fast. To avoid hitting permission errors mid-demo or mid-deployment, they grant broad access to databases, file systems, APIs, and external services. That shortcut is now measurably costing them security:

- Over-privileged AI systems: 4.5× more security incidents vs. properly scoped systems

- Least-privilege AI systems: Significantly smaller attack surface, fewer breach vectors

- Current industry state: Most teams have not implemented proper access scoping for their AI agents

- Root cause: Speed-to-deployment culture treats permissions as an afterthought

The fix isn't technically complicated — it requires discipline over cleverness. Define exactly what each AI agent needs to access, deny everything else, and review permissions on a regular schedule. The hard part is building that habit while shipping fast.
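As a rough illustration of "define what each agent needs, deny everything else," here is a deny-by-default allowlist sketch. The `AgentPolicy` class, the `report-bot` agent, and the resource names are all hypothetical; a real deployment would enforce this in the database, cloud IAM, or an access proxy rather than in application code.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AgentPolicy:
    """Deny-by-default policy: only listed (resource, action) pairs pass."""

    agent: str
    allowed: frozenset[tuple[str, str]] = field(default_factory=frozenset)

    def is_allowed(self, resource: str, action: str) -> bool:
        # Anything not explicitly granted is refused.
        return (resource, action) in self.allowed


# Hypothetical agent that only reads one table and sends email.
report_bot = AgentPolicy(
    agent="report-bot",
    allowed=frozenset({("db:orders", "read"), ("api:email", "send")}),
)

print(report_bot.is_allowed("db:orders", "read"))    # True
print(report_bot.is_allowed("db:orders", "delete"))  # False
```

Keeping the grants in one small, explicit structure also makes the periodic permission review easier: the review is a diff of that set.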

Anthropic's Three-Agent Blueprint for Long-Running AI Automation

One of the most persistent problems with AI agents (autonomous AI systems that execute complex, multi-step tasks without constant human guidance) is coherence decay over time. Give an AI agent a coding task that takes 3 hours, and by hour 2, it's often contradicting decisions it made in hour 1. Without structured memory management, long sessions drift.

Anthropic's new three-agent harness architecture addresses this directly. Instead of one AI agent attempting to plan, execute, and review its own work simultaneously, the system separates responsibilities into three distinct, specialized roles:

- Planner agent: Breaks the overall task into structured steps and maintains the strategic view across the entire session

- Generator agent: Executes specific work — writing code, drafting content, calling external tools — within the planner's defined scope

- Evaluator agent: Reviews the generator's output against the planner's original specifications before any step is marked complete

The design is built specifically for long-running autonomous workflows that extend over multiple hours. Because generation is separated from evaluation, errors get caught at each step rather than compounding into larger failures downstream. It's the same division of labor that makes good engineering teams effective: write, review, validate before shipping.
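The control flow of a planner, generator, and evaluator can be sketched in a few lines. This is an illustration of the general pattern only, not Anthropic's implementation: `run_harness` and the three stub functions are hypothetical, and in practice each role would be a separate model call with its own prompt and context.

```python
from typing import Callable


def run_harness(
    task: str,
    plan: Callable[[str], list[str]],
    generate: Callable[[str, str], str],
    evaluate: Callable[[str, str], tuple[bool, str]],
    max_attempts: int = 3,
) -> list[str]:
    """Run each planned step until the evaluator accepts the output."""
    accepted = []
    for step in plan(task):
        feedback = ""
        for _ in range(max_attempts):
            output = generate(step, feedback)
            ok, feedback = evaluate(step, output)
            if ok:
                accepted.append(output)  # step is marked complete only here
                break
        else:
            raise RuntimeError(f"step failed review: {step}")
    return accepted


# Stub roles standing in for model calls (assumptions for the demo).
def plan(task: str) -> list[str]:
    return [f"{task}: step {i}" for i in (1, 2)]


def generate(step: str, feedback: str) -> str:
    # First attempt is a draft; with feedback, the generator finalizes it.
    return f"done({step})" if feedback else f"draft({step})"


def evaluate(step: str, output: str) -> tuple[bool, str]:
    if output.startswith("done("):
        return True, ""
    return False, "finalize the draft"


results = run_harness("refactor", plan, generate, evaluate)
print(results)  # ['done(refactor: step 1)', 'done(refactor: step 2)']
```

The key design point is that the generator never marks its own work complete; only the evaluator's verdict advances the loop.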

For teams building with Claude Code or similar autonomous coding tools, this architecture is worth studying as a structural template. It's particularly valuable for automated code refactoring across large codebases, multi-stage content pipelines, complex data processing workflows, and any task where coherence across steps matters. You can explore the foundational concepts through the AI automation guides to see how to apply this pattern.

TigerFS: Your Database, Navigated Like a Desktop Folder

Among this week's more unconventional ideas: what if, instead of writing SQL queries or configuring specialized connectors (API wrappers that translate between an AI agent and a database) to give an AI agent database access, you just mounted the database as a folder?

That's TigerFS. It's an experimental open-source filesystem that connects to a PostgreSQL database (a widely used system for storing and querying structured data) and exposes all its tables and rows as a directory structure on your computer. You can then use standard Unix command-line tools — ls to list tables, cat to read rows, find and grep to search — without writing a single SQL query.

# Traditional approach — write SQL

psql -d mydb -c "SELECT * FROM users WHERE active = true;"

# TigerFS approach — use file commands (experimental)

ls /mnt/mydb/

cat /mnt/mydb/users/active

For AI agents, this is a meaningful interface simplification. An agent that already understands file operations can interact with a database immediately, without a developer configuring a database-specific connector. The abstraction layer disappears.

Critical caveat: TigerFS is explicitly labeled experimental — not ready for production databases storing real user data. Treat it as a proof of concept that demonstrates what's possible. The underlying idea — using filesystem abstractions to simplify AI-to-data interfaces — is likely to influence production tooling significantly over the next 12–18 months.
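To make the "database as a folder" idea concrete, here is a sketch of how an agent-side helper might read such a mount with ordinary file APIs. The directory layout (one directory per table, one file per row) is an assumption for illustration and may not match what TigerFS actually exposes. The demo runs against a temporary directory, not a real mount.

```python
import tempfile
from pathlib import Path


def list_tables(mount_root: Path) -> list[str]:
    """Treat each subdirectory of the mount as a table (assumed layout)."""
    return sorted(p.name for p in mount_root.iterdir() if p.is_dir())


def read_rows(mount_root: Path, table: str, limit: int = 5) -> dict[str, str]:
    """Read up to `limit` row files from a table directory."""
    files = sorted(p for p in (mount_root / table).iterdir() if p.is_file())
    return {p.name: p.read_text() for p in files[:limit]}


# Throwaway directory standing in for a mount point such as /mnt/mydb.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / "users").mkdir()
    (root / "users" / "1").write_text("id=1 name=ada active=true\n")
    (root / "users" / "2").write_text("id=2 name=lin active=false\n")
    tables = list_tables(root)
    rows = read_rows(root, "users")

print(tables)        # ['users']
print(sorted(rows))  # ['1', '2']
```

Nothing here is database-specific, which is the point: the agent needs no SQL driver or connector, only file reads.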

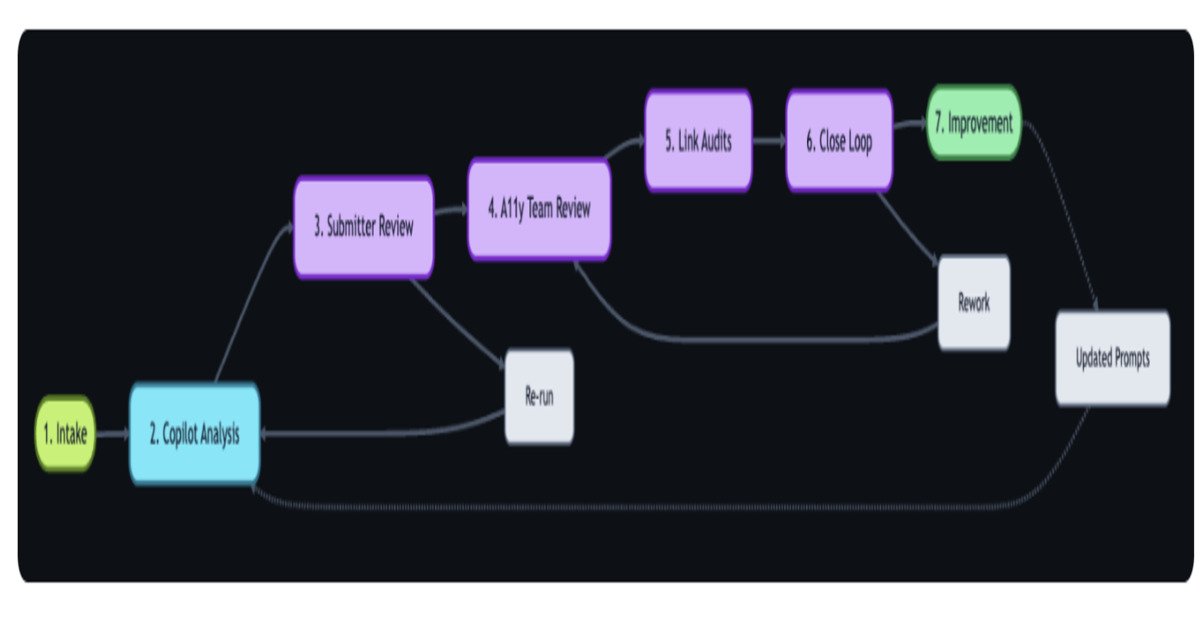

GitHub's Accessibility Fix: AI Triage, Human Sign-Off

Accessibility compliance — ensuring digital products work for people with disabilities, including those using screen readers, voice navigation, or keyboard-only input — is legally required in many jurisdictions and chronically under-resourced in most engineering teams. The manual review process is expensive and slow.

GitHub built an AI-powered workflow that automates the most repetitive parts. The system combines three pieces: GitHub Actions (GitHub's workflow automation system, which runs jobs in response to repository events), Copilot (GitHub's AI coding assistant), and the GitHub Models API (the underlying AI model access layer). Together they run an automatic WCAG compliance analysis every time accessibility feedback is submitted. WCAG stands for Web Content Accessibility Guidelines, the international standard for digital accessibility.

Critically, the system keeps humans in the validation loop — the AI handles initial triage and analysis, but a human reviews the AI's recommendations before any action is finalized. This "AI triage, human validation" pattern is becoming the dominant model for automation in compliance-sensitive contexts. It captures most efficiency gains while maintaining accountability for decisions affecting real users.
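The "AI triage, human validation" pattern boils down to a gate: an AI suggestion stays pending until a named person approves it. The sketch below shows that gate in isolation. It is not GitHub's workflow; `ai_triage` is a stand-in for a model call, and all names are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Status(Enum):
    PENDING_REVIEW = "pending_review"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class Triage:
    issue: str
    suggestion: str
    status: Status = Status.PENDING_REVIEW
    reviewer: Optional[str] = None


def ai_triage(issue: str) -> Triage:
    # Stand-in for a model call (assumption); output always starts as pending.
    return Triage(issue=issue, suggestion=f"Review WCAG criteria for: {issue}")


def human_review(item: Triage, reviewer: str, approve: bool) -> Triage:
    # Record who made the decision so accountability stays with a person.
    item.status = Status.APPROVED if approve else Status.REJECTED
    item.reviewer = reviewer
    return item


def can_act(item: Triage) -> bool:
    """Only human-approved suggestions may trigger follow-up actions."""
    return item.status is Status.APPROVED


item = ai_triage("button has no accessible name")
print(can_act(item))  # False
human_review(item, reviewer="a11y-lead", approve=True)
print(can_act(item))  # True
```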

Reported outcomes include faster accessibility issue resolution times and better cross-functional team collaboration. For organizations currently handling accessibility feedback through manual ticket routing and review, this represents a substantial potential time saving with lower regulatory risk.

The Bigger Picture: AI Infrastructure Is Maturing Faster Than Its Security

Adrian Cockcroft — formerly chief cloud architect at Netflix and a key figure in cloud-native architecture — presented at QCon San Francisco 2025 on what he calls the "director-level" approach to AI development: orchestrating swarms of autonomous AI agents at scale, rather than managing individual agents manually. His argument is that cloud-native development succeeded by abstracting infrastructure complexity away from developers. AI-native development will succeed the same way.

“The future of engineering lies in building platforms that orchestrate AI-driven development” — Adrian Cockcroft, QCon San Francisco 2025

The tension running through every development this week is the same one: teams are racing to build more powerful, more autonomous AI automation systems, but the security infrastructure supporting those systems isn't mature enough to be treated as implicitly trustworthy. The LiteLLM attack (40,000 compromised installs from a 3-million-daily-download project) and the 4.5× security incident multiplier for over-privileged AI are data points, not outliers.

The teams positioned to win this transition will be the ones solving both sides simultaneously — building capable, long-running AI workflows like Anthropic's three-agent harness while enforcing the kind of least-privilege access discipline that keeps those systems from becoming breach vectors. Right now, most teams are solving one or the other. The gap between the two is where most of the risk lives.

Start with what you can control today: verify your LiteLLM version, audit the permissions you've granted your AI tools, and read the setup guide for structured approaches to building AI agents that are both capable and secure. The tools are improving fast — your security posture needs to keep up.
