8 years stalled, 3 months done — Claude Code's hidden trap

A developer sat on a SQLite idea for 8 years, built it in 3 months with Claude Code — and revealed vibe-coding's most dangerous trap.

In 2018, Lalit Maganti had a brilliant idea: build a professional-grade parser and formatter for SQLite — the database engine quietly powering billions of apps worldwide. Eight years later, he still hadn't started.

Then he opened Claude Code (Anthropic's AI-powered terminal coding assistant) and shipped the entire project in three months.

The story of building Syntaqlite is now one of the most honest accounts of AI-assisted development you'll find in 2026. Not because it's a clean success (though it is a success), but because of what it exposes about the hidden danger inside "vibe-coding" (a term for building software by prompting an AI and accepting the code it produces without closely reviewing it or thinking through the design first).

The 400-Rule Wall That Froze a Developer for 8 Years

SQLite's grammar — the formal set of rules that defines what valid SQL looks like for the SQLite database engine — contains more than 400 distinct rules. To build a proper parser (a program that reads SQL text and checks whether it follows all those rules), you need to implement every single one. Miss a rule, and your tool breaks on real-world SQL that developers actually write.

That's what stopped Maganti cold. Not lack of skill. Not lack of motivation. The sheer tedium of hand-scaffolding 400+ grammar rules made the project feel like it would take a year just to get past the boring parts — before reaching the interesting engineering problems he actually cared about.
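To make "grammar rule" concrete, here is a minimal Python sketch of a hand-written recursive-descent parser for a toy SQL subset defined by just three rules. Everything in it (the grammar, `ToyParser`, `is_valid`, the `support_where` flag) is a hypothetical illustration, not SQLite's real grammar or Syntaqlite's code. Turning `support_where` off simulates a parser that is missing a single rule: perfectly valid SQL is then rejected.

```python
import re

# Toy grammar (hypothetical; SQLite's real grammar has 400+ rules):
#   select_stmt  := "SELECT" column_list "FROM" IDENT [where_clause] [";"]
#   column_list  := IDENT ("," IDENT)*
#   where_clause := "WHERE" IDENT "=" (IDENT | NUMBER)
KEYWORDS = {"SELECT", "FROM", "WHERE"}


class SQLSyntaxError(Exception):
    pass


class ToyParser:
    def __init__(self, sql, support_where=True):
        # Words/numbers become one token each; any other non-space char is its own token.
        self.tokens = re.findall(r"\w+|\S", sql)
        self.pos = 0
        self.support_where = support_where  # False simulates a parser missing one rule

    def peek(self):
        return self.tokens[self.pos] if self.pos < len(self.tokens) else None

    def expect(self, predicate, what):
        tok = self.peek()
        if tok is None or not predicate(tok):
            raise SQLSyntaxError(f"expected {what}, got {tok!r}")
        self.pos += 1
        return tok

    def keyword(self, kw):
        return self.expect(lambda t: t.upper() == kw, kw)

    def ident(self):
        return self.expect(lambda t: t.isidentifier() and t.upper() not in KEYWORDS, "identifier")

    def parse_select(self):
        # Rule: select_stmt
        self.keyword("SELECT")
        columns = [self.ident()]
        while self.peek() == ",":  # Rule: column_list
            self.pos += 1
            columns.append(self.ident())
        self.keyword("FROM")
        table = self.ident()
        where = None
        tok = self.peek()
        if self.support_where and tok is not None and tok.upper() == "WHERE":
            where = self.parse_where()
        if self.peek() == ";":
            self.pos += 1
        if self.peek() is not None:
            raise SQLSyntaxError(f"unexpected token {self.peek()!r}")
        return {"columns": columns, "table": table, "where": where}

    def parse_where(self):
        # Rule: where_clause
        self.keyword("WHERE")
        left = self.ident()
        self.expect(lambda t: t == "=", "'='")
        right = self.expect(lambda t: t.isidentifier() or t.isdigit(), "identifier or number")
        return (left, "=", right)


def is_valid(sql, support_where=True):
    """True if the toy grammar accepts `sql`."""
    try:
        ToyParser(sql, support_where).parse_select()
        return True
    except SQLSyntaxError:
        return False


if __name__ == "__main__":
    query = "SELECT id, name FROM users WHERE id = 42;"
    print(ToyParser(query).parse_select())
    # {'columns': ['id', 'name'], 'table': 'users', 'where': ('id', '=', '42')}
    print(is_valid(query, support_where=False))  # False: valid SQL rejected
```

Multiply the three rules by a hundred or more, each with its own edge cases, and the scale of the tedium becomes clearer.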

Claude Code changed this equation overnight. Instead of facing an abstract mountain of "I need to understand how SQLite's parsing works," Maganti could frame each session as a concrete, tractable prompt.

“AI basically let me put aside all my doubts on technical calls, my uncertainty of building the right thing and my reluctance to get started by giving me very concrete problems to work on. Instead of ‘I need to understand how SQLite’s parsing works’, it was ‘I need to get AI to suggest an approach for me so I can tear it up and build something better.’”

— Lalit Maganti, creator of Syntaqlite

The result: Syntaqlite is a production-ready SQLite toolkit with a high-fidelity parser, formatter, and verifier covering all 400+ grammar rules. You can try the live playground in your browser right now — no install required — or pull it via pip:

```shell
pip install syntaqlite
```

The Prototype That Had to Die

Maganti's development process had two completely distinct phases. The first was built to be thrown away.

In the opening sprint, he leaned heavily on Claude Code to generate code quickly. Parsers got scaffolded. Tests passed. The prototype ran. Then Maganti looked at what he had — and deleted the entire codebase on purpose.

The reason: it worked, but it made no sense as a system. The code had no coherent high-level architecture (the overall design blueprint that determines how different parts of a program fit together and communicate long-term). Individual functions compiled. Individual tests passed. But there was no clear structure a human could reason about, extend, or safely maintain over months of development.

This is the central paradox of AI code generation in 2026: AI can verify compilation. It can verify test results. It cannot verify that a design is good — because good design has no objective definition. It's a judgment call requiring human experience, intuition, and the ability to imagine the system two years from now.

Where AI Wins, Where Humans Must Lead

| AI excels at | Humans must still decide |
|---|---|
| Scaffolding repetitive code (400+ grammar rules) | Choosing between architectural approaches |
| Implementing known patterns quickly | Balancing short-term vs. long-term trade-offs |
| Writing tests that pass a given spec | Knowing when a passing test proves the wrong thing |
| Refactoring existing code at scale | Deciding whether to refactor at all |
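The third row ("knowing when a passing test proves the wrong thing") is easy to state and easy to miss in practice. Below is a hedged Python sketch: `naive_format`, `is_idempotent`, and `preserves_literals` are hypothetical names invented for this illustration, not Syntaqlite's formatter or tests. The idempotence check passes, yet the formatter silently rewrites a string literal, which only a check written with the formatter's real contract in mind catches.

```python
import re

KEYWORDS = {"select", "from", "where", "and", "or", "order", "by"}


def naive_format(sql):
    """Hypothetical formatter: collapse whitespace and upper-case SQL keywords.

    Bug (deliberate): it works word by word and ignores quoting, so keywords
    inside string literals are upper-cased too, changing the query's meaning.
    """
    return " ".join(w.upper() if w.lower() in KEYWORDS else w for w in sql.split())


def string_literals(sql):
    """Extract single-quoted literals (does not handle doubled '' escapes)."""
    return re.findall(r"'[^']*'", sql)


def is_idempotent(sql):
    """The 'passing test': formatting twice gives the same result as once."""
    once = naive_format(sql)
    return naive_format(once) == once


def preserves_literals(sql):
    """The test that matters: string literals survive formatting unchanged."""
    return string_literals(naive_format(sql)) == string_literals(sql)


if __name__ == "__main__":
    query = "select name from notes where body = 'pick from list'"
    print(naive_format(query))        # SELECT name FROM notes WHERE body = 'pick FROM list'
    print(is_idempotent(query))       # True
    print(preserves_literals(query))  # False
```

A suite containing only the idempotence check would stay green while the formatter corrupts user data.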

The Cheap Refactoring Trap — A New Kind of Technical Debt

Here's where Maganti's account gets genuinely uncomfortable:

“I found that AI made me procrastinate on key design decisions. Because refactoring was cheap, I could always say ‘I’ll deal with this later.’ And because AI could refactor at the same industrial scale it generated code, the cost of deferring felt low. But it wasn’t: deferring decisions corroded my ability to think clearly because the codebase stayed confusing in the meantime.”

— Lalit Maganti

This describes a new species of technical debt (the accumulated cost of quick-fix decisions that create bigger problems later) — and it's subtler than the classic version. Traditional technical debt happens when you know the quick solution is wrong but ship it anyway. AI-driven technical debt happens when you don't even realize you're deferring a decision, because the AI handles the deferral so smoothly it feels like forward progress.

The loop plays out in five steps:

- You encounter an architectural question with no clear answer.

- AI generates code that sidesteps the question — and that code compiles and passes tests.

- You move on, feeling productive.

- Three weeks later, the same question resurfaces at a higher level of the codebase.

- Now the codebase is more complex, the question is harder to isolate, and the confusion has spread to 6 more files.

Maganti spent "weeks in the early days following AI down dead ends, exploring designs that felt productive in the moment but collapsed under scrutiny." Those weeks represent real calendar time — and they happened during the 3-month build, not before it.

Phase 2: The Slower Build That Actually Shipped

The second development cycle looked radically different. Maganti made explicit architectural decisions before writing code. He reserved Claude Code for implementation — not design. He treated AI the way a senior engineer treats a talented junior: great for execution, not for judgment calls.
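As a rough picture of "architecture first, AI for implementation," the Python sketch below has a human fix the stages and the data passed between them as `typing.Protocol` interfaces, leaving the method bodies as delegable work. The names (`Token`, `Tokenizer`, `Formatter`, `WhitespaceTokenizer`, `OneLineFormatter`, `pipeline`) are assumptions made up for this example; this is not a description of how Syntaqlite is actually structured.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class Token:
    kind: str  # e.g. "keyword", "identifier", "number"
    text: str


# Human-owned design decisions: the stages and the data passed between them.
class Tokenizer(Protocol):
    def tokenize(self, sql: str) -> list[Token]: ...


class Formatter(Protocol):
    def format(self, tokens: list[Token]) -> str: ...


# Delegable implementation work: any class with matching methods fits.
class WhitespaceTokenizer:
    """Deliberately crude: splits on whitespace only (no commas, quotes, etc.)."""

    KEYWORDS = {"SELECT", "FROM", "WHERE"}

    def tokenize(self, sql: str) -> list[Token]:
        tokens = []
        for word in sql.split():
            if word.upper() in self.KEYWORDS:
                tokens.append(Token("keyword", word.upper()))
            elif word.isdigit():
                tokens.append(Token("number", word))
            else:
                tokens.append(Token("identifier", word))
        return tokens


class OneLineFormatter:
    def format(self, tokens: list[Token]) -> str:
        return " ".join(t.text for t in tokens)


def pipeline(sql: str, tokenizer: Tokenizer, formatter: Formatter) -> str:
    """Depends only on the interfaces, not on any particular implementation."""
    return formatter.format(tokenizer.tokenize(sql))


if __name__ == "__main__":
    print(pipeline("select a from t", WhitespaceTokenizer(), OneLineFormatter()))
    # SELECT a FROM t
```

The point of the design choice: because `pipeline` depends only on the interfaces, an AI-generated implementation can be regenerated or replaced without reopening the architectural question.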

Compared directly to Simon Willison's sqlite-ast project — a January 2026 attempt at similar SQLite tooling — Syntaqlite is categorically more robust. Willison himself describes sqlite-ast as "far less production-ready." Both developers used Claude Code. The difference was human-controlled architecture.

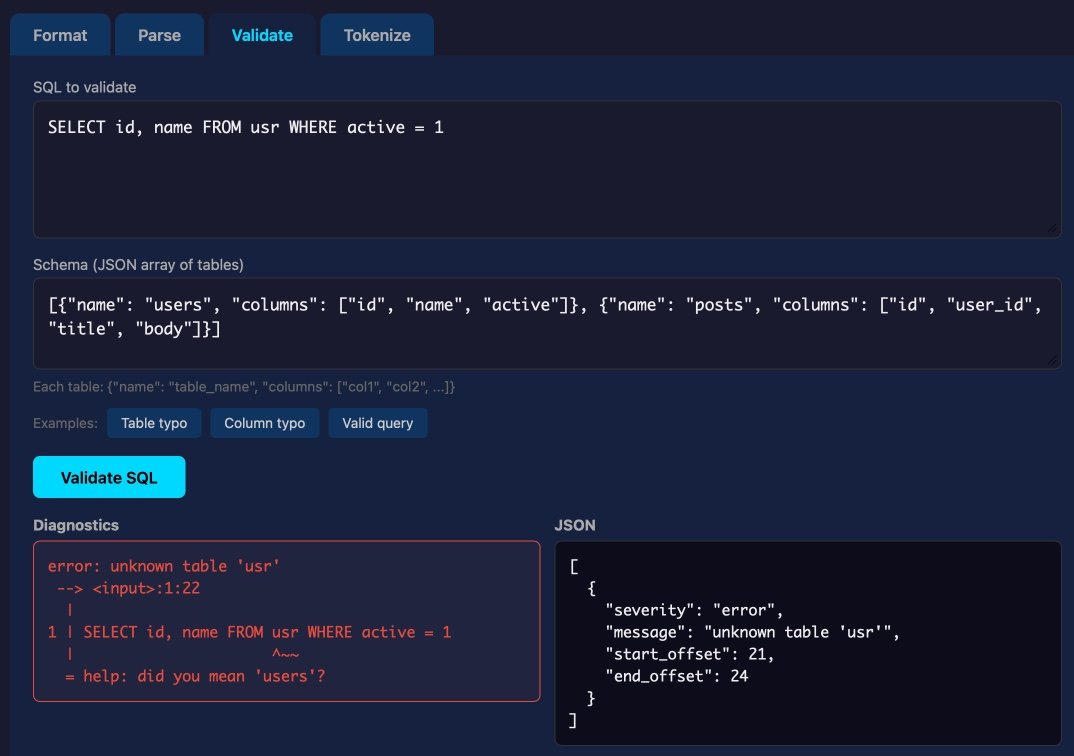

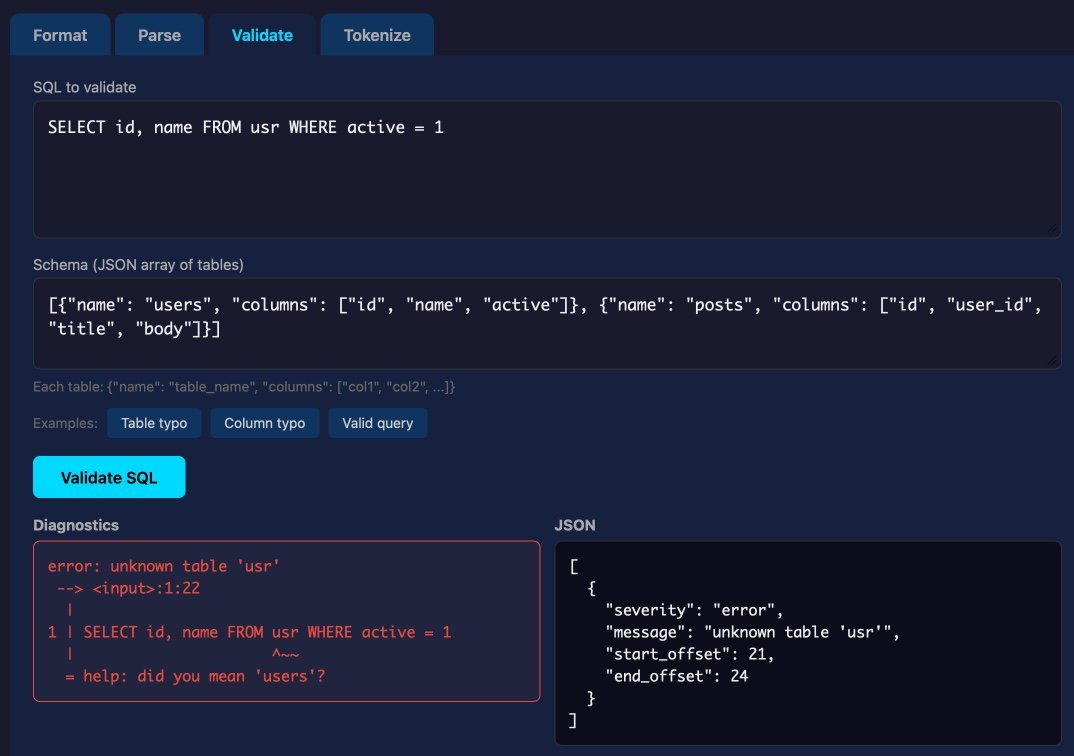

The finished Syntaqlite playground runs in your browser via WebAssembly (a technology that executes compiled code directly in modern browsers at near-native speed — no server required). It handles four operations simultaneously on any SQL you paste in:

- Validation — checks SQL for syntax errors against all 400+ SQLite grammar rules

- Formatting — standardizes style (indentation, capitalization, keyword spacing)

- Parsing — converts SQL text into an AST (Abstract Syntax Tree — a structured data representation that other developer tools can analyze and transform)

- Tokenization — splits SQL text into its smallest meaningful units: keywords, identifiers, operators, literals
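The tokenization and validation steps above can be sketched in a few lines of Python. This is a hedged illustration, not Syntaqlite's API: `tokenize_sql` is a hypothetical regex tokenizer covering only a small slice of SQLite's lexical rules, and `check_syntax` leans on the standard library's `sqlite3` module, asking SQLite itself to compile the statement (via `EXPLAIN`, so it is never executed) against an empty in-memory database. Because that database has no tables, errors such as "no such table" are expected and ignored; only errors SQLite reports as syntax problems are returned.

```python
import re
import sqlite3

# Hypothetical, heavily simplified token patterns (SQLite's real lexer has many more cases).
TOKEN_SPEC = [
    ("whitespace", r"\s+"),
    ("string", r"'(?:[^']|'')*'"),
    ("number", r"\d+(?:\.\d+)?"),
    ("word", r"[A-Za-z_][A-Za-z0-9_]*"),
    ("operator", r"<=|>=|<>|!=|==|[-+*/=<>(),;.]"),
]
MASTER_RE = re.compile("|".join(f"(?P<{name}>{pat})" for name, pat in TOKEN_SPEC))
KEYWORDS = {"SELECT", "FROM", "WHERE", "AND", "OR", "NOT", "INSERT", "INTO", "VALUES", "ORDER", "BY"}


def tokenize_sql(sql):
    """Split SQL into (kind, text) pairs: keyword, identifier, number, string, operator."""
    tokens = []
    pos = 0
    while pos < len(sql):
        m = MASTER_RE.match(sql, pos)
        if m is None:
            raise ValueError(f"unrecognized character {sql[pos]!r} at offset {pos}")
        kind, text = m.lastgroup, m.group()
        pos = m.end()
        if kind == "whitespace":
            continue
        if kind == "word":
            kind = "keyword" if text.upper() in KEYWORDS else "identifier"
        tokens.append((kind, text))
    return tokens


def check_syntax(sql):
    """Return None if SQLite's own parser accepts a single statement, else its error message."""
    # EXPLAIN compiles the statement without running it, so there are no side effects.
    stmt = sql if sql.lstrip().upper().startswith("EXPLAIN") else "EXPLAIN " + sql
    conn = sqlite3.connect(":memory:")
    try:
        conn.execute(stmt)
    except sqlite3.OperationalError as exc:
        message = str(exc)
        # Name-resolution errors ("no such table", ...) are not grammar errors.
        if "syntax error" in message or "incomplete input" in message:
            return message
    finally:
        conn.close()
    return None


if __name__ == "__main__":
    print(tokenize_sql("SELECT name FROM users WHERE age >= 18;"))
    print(check_syntax("SELECT name FROM users"))  # None: the table is missing, the grammar is fine
    print(check_syntax("SELEC name FROM users"))   # SQLite's own syntax-error message
```

Delegating validation to SQLite's own parser is a shortcut that only answers "valid or not"; building a formatter or an AST still requires a parser of your own, which is the work Syntaqlite took on.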

The Same Week: ChatGPT Fields 2 Million Health Insurance Messages a Week

While Syntaqlite tells the story of AI's limits in professional software development, a separate dataset published this week reveals something equally striking about AI in everyday life — specifically healthcare.

New data from OpenAI shows that ChatGPT users in the United States send 2 million messages per week about health insurance. That volume suggests more than casual curiosity: a sustained pattern of people turning to an AI chatbot, often because no better option is readily available to them.

Two numbers from this dataset stand out, along with what they suggest:

- 600,000 weekly healthcare-classified messages come from people in medical deserts: regions more than a 30-minute drive from the nearest hospital

- 7 out of 10 healthcare messages sent to ChatGPT happen outside standard clinic hours (roughly 9 AM–5 PM)

- For many Americans, ChatGPT may be less a convenience tool than the only accessible source of health guidance at 11 PM on a Sunday

The contrast is worth sitting with. Syntaqlite is the work of a skilled developer using AI to solve a technical problem he chose to work on, with full ability to evaluate and discard bad AI output. Many of the ChatGPT healthcare users have few alternatives, and they are turning to AI for medical guidance with far less ability to judge whether the output is accurate.

Both stories are part of the same 2026 reality — AI acceleration moving faster than our frameworks for using it safely.

The Actual Rules Developers Should Take Away

Maganti's conclusion from 3 months of AI-accelerated building isn't "AI is overhyped" or "AI is transformative." It's considerably more precise than either extreme:

- Vibe-coding works for proof-of-concept. If your goal is "does this idea hold up?" — AI can answer that in days instead of months.

- Vibe-coding breaks for production. If your goal is a codebase humans will maintain for years, architectural decisions still require deliberate human judgment.

- Treat the first AI-generated prototype as research data, not product. Expect to discard it. That's not failure — that's the process working correctly.

- Design debt is invisible until it isn't. When AI makes refactoring feel free, you stop noticing design debt accumulate — until the codebase becomes too tangled to reason about.

- Break the procrastination loop, then take the wheel. Use AI to get moving. Then make the hard calls yourself.

For reference, Simon Willison also shipped two small tools of his own this week that follow similar "AI-assisted, human-directed" principles:

```shell
# Scan your project for accidentally committed passwords or API keys
uv run scan-for-secrets <secret> -d <your-directory>

# Track multiple Datasette instances across dozens of terminal windows
datasette install datasette-ports && datasette ports
```

Eight years of procrastination. Three months of building. One unusually honest post-mortem. The real output of Syntaqlite isn't just a SQLite parser; it's a precise map of where AI acceleration actually helps, and where it quietly makes things worse.
