SpaceX 1M Orbital AI Data Centers: 4 Physics Problems

SpaceX filed to orbit 1 million AI data centers to solve Earth's power crisis. Heat, radiation, debris, and repair gaps could delay the plan to 2050.

SpaceX filed paperwork with the FCC (Federal Communications Commission — the US agency that controls radio spectrum and satellite permissions) in January 2026 to launch 1 million orbital AI data centers into Earth's orbit. The stated goal: "fully unleash the potential of AI without triggering an environmental crisis on Earth." Jeff Bezos has publicly declared the tech industry will move toward large-scale computing in space. Google announced plans for an 80-satellite test constellation as early as 2027. And in November 2025, a Washington State startup called Starcloud became the first to successfully orbit an Nvidia H100 GPU (a powerful AI chip that typically fills a desktop-sized server box) — the opening shot in the race to move AI computing off the planet.

The ambition makes sense on paper. A single large AI data center today consumes as much electricity as a mid-sized city and millions of gallons of water for cooling. Space offers something Earth cannot: unlimited solar power and a vacuum that could — in theory — radiate waste heat into the cosmos forever. But the engineers building this hardware say the gap between that vision and physical reality is enormous. Four near-impossible problems may delay the plan by 20 to 30 years.

The Pitch: Why Orbital AI Data Centers Make Sense

The logic behind orbital AI is not as far-fetched as it sounds. In a dawn-dusk sun-synchronous orbit (a near-polar path that follows the boundary between day and night, keeping the satellite in almost constant sunlight), solar panels generate electricity nearly continuously, with no night cycles, no clouds, and no power grid needed. This also eliminates freshwater use for cooling, which is one of the fastest-growing environmental criticisms of AI companies: a single large model training run can consume hundreds of millions of liters of water.

Thales Alenia Space, a major European aerospace firm, conducted a formal feasibility study in 2024 and concluded that gigawatt-scale orbital data centers could reach orbit before 2050. Starcloud's vision is more aggressive: Earth-scale orbital facilities by 2030. SpaceX's filing is the most extreme — 1 million facilities, framed as an environmental alternative to building thousands of new power plants. SpaceX's Starship mega-rocket helps make the numbers slightly less daunting: it can carry 6 times more payload than the Falcon 9 rocket currently used for most satellite launches, dramatically cutting cost per kilogram to orbit.

Problem 1: Nothing Cools Itself in Space

On Earth, data centers cool chips using convection — blowing air or pumping water across hot components. In the vacuum of space, convection doesn't exist. The only way to shed heat is through radiation (emitting infrared energy as electromagnetic waves into the void), which is 5 to 10 times less efficient than convective cooling at Earth's surface.

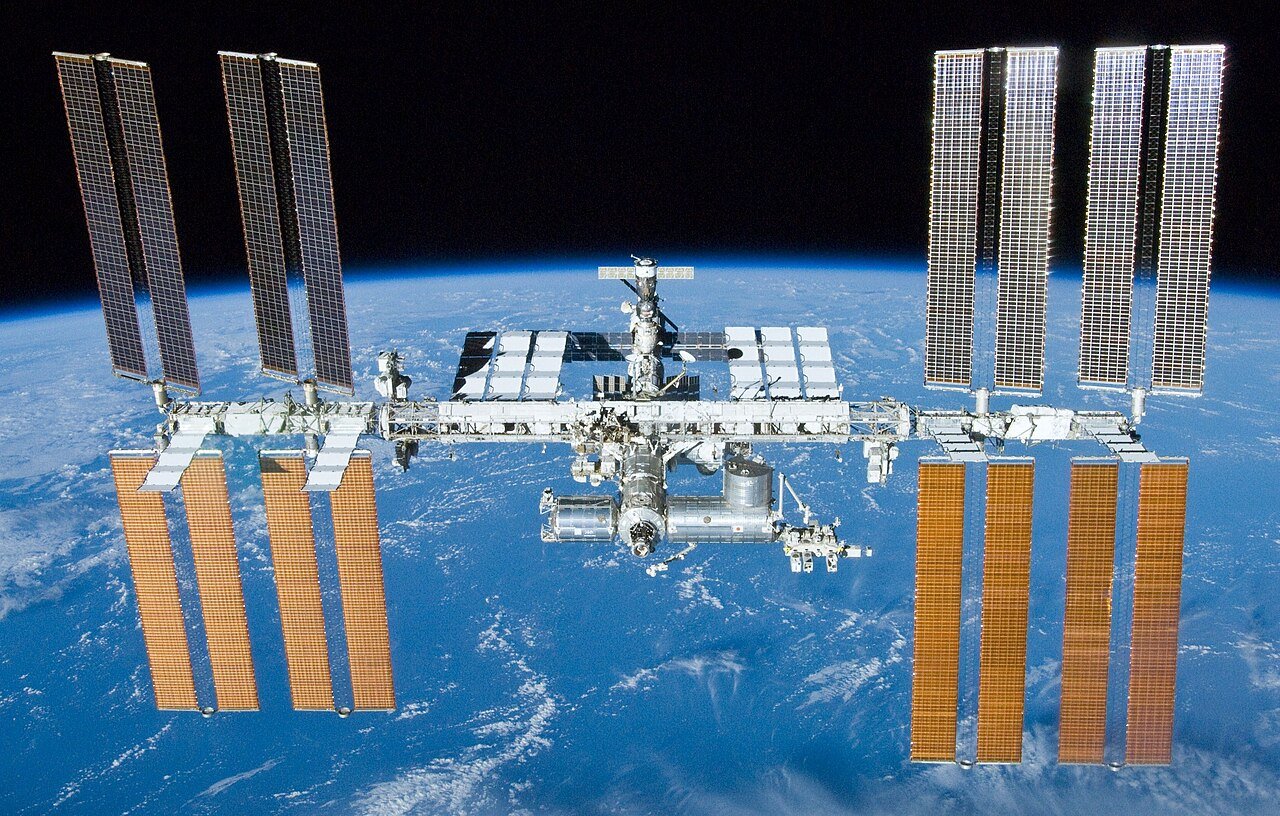

The arithmetic is brutal. In sun-synchronous orbit, equipment temperatures would never drop below 80°C. Most consumer-grade electronics fail between 60°C and 70°C. The radiator panels needed to reject heat from a gigawatt-scale facility would need to measure hundreds of meters across — larger than the International Space Station's 109-meter wingspan, which required 13 years of crewed assembly missions to complete.
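The radiator size can be estimated with the Stefan-Boltzmann law, which gives the power an ideal surface radiates as a function of its temperature. The sketch below is a rough illustration, not a thermal design: it assumes a flat panel radiating from both faces at a uniform 80°C, an emissivity of 0.9, and no heat absorbed from the Sun or Earth (all of these are assumptions, not figures from the studies cited here).

```python
import math

SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W / (m^2 K^4)


def radiator_area_m2(heat_w, temp_c, emissivity=0.9, sides=2):
    """Panel area needed to radiate heat_w watts at a uniform temp_c (Celsius).

    Assumes an idealized flat panel; ignores absorbed sunlight and Earth's
    infrared glow, both of which would make the real panel larger.
    """
    temp_k = temp_c + 273.15
    flux = emissivity * SIGMA * temp_k ** 4  # W per m^2 for each radiating face
    return heat_w / (flux * sides)


if __name__ == "__main__":
    area = radiator_area_m2(heat_w=1e9, temp_c=80.0)  # 1 GW of waste heat
    side = math.sqrt(area)
    print(f"area: {area:,.0f} m^2, square panel side: {side:,.0f} m")
```

Under these optimistic assumptions, a gigawatt of waste heat needs a square panel on the order of 800 meters per side. Running the electronics cooler makes the panel grow quickly, because radiated power falls with the fourth power of temperature.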

"Thermal management and cooling in space is generally a huge problem."

— Lilly Eichinger, CEO, Satellives (Austrian space technology company)

Yves Durand, former Director of Technology at Thales Alenia Space, described the central dilemma: "You have free energy and power in space, but there are a lot of disadvantages. It's quite possible that those problems will outweigh the advantages." His firm spent 2024 studying exactly this question and concluded a 25-year runway is needed — minimum.

Problem 2: Radiation Corrupts Everything, Constantly

Earth's magnetic field and atmosphere shield ground-based electronics from the relentless particle bombardment arriving from the Sun and deep space. In low Earth orbit (the band of space 200 to 2,000 km above Earth's surface), that protection is far weaker. Ken Mai, a principal systems scientist at Carnegie Mellon University's electrical engineering department, identifies three distinct radiation damage mechanisms that attack orbiting chips simultaneously:

- Single-event upsets: A high-energy particle strikes a memory cell and flips a bit from 0 to 1 (or vice versa), silently corrupting data mid-calculation. This happens randomly and continuously throughout the satellite's life.

- Cumulative ionizing damage: Radiation gradually degrades the performance of transistors (the microscopic on/off switches that make chips compute) over months. The chip gets slower and less reliable with no sudden failure; it just quietly degrades.

- Atomic displacement: Particles physically knock atoms out of their positions in the chip's crystal structure, permanently altering its electrical properties. No repair is possible once this occurs.

The traditional engineering solution is radiation-hardened chips: processors with redundant circuits and radiation-resistant materials. These chips cost significantly more and lag years behind state-of-the-art consumer hardware like the Nvidia H100 that Starcloud flew. In mid-March 2026, Nvidia's Chen Su (Head of Edge AI Marketing) announced that Nvidia plans radiation resilience "at the system level rather than radiation-hardened silicon alone," using software error-correction, redundant boards, and system shielding. This approach has never been validated at data center scale in orbit, and the Sun's 11-year activity cycle regularly produces radiation bursts that exceed current satellite design tolerances.
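One common form of system-level redundancy is triple modular redundancy: keep three copies of a value and take a bitwise majority vote, so a single-event upset in one copy is outvoted by the other two. The sketch below is a generic illustration of that idea only. It is not a description of Nvidia's design, and the helper names `majority_vote` and `flip_bit` are hypothetical.

```python
def majority_vote(a: int, b: int, c: int) -> int:
    """Bitwise majority of three copies: each output bit matches at least two inputs."""
    return (a & b) | (a & c) | (b & c)


def flip_bit(word: int, bit: int) -> int:
    """Simulate a single-event upset by inverting one bit of a stored word."""
    return word ^ (1 << bit)


if __name__ == "__main__":
    original = 0b1011_0010
    copies = [original, original, original]
    copies[1] = flip_bit(copies[1], 5)  # a particle strike corrupts one copy
    recovered = majority_vote(*copies)
    print(recovered == original)  # the two intact copies outvote the corrupted one
```

The vote only corrects upsets: it fails if the same bit flips in two copies before the value is refreshed, and it does nothing against cumulative ionizing damage or atomic displacement, which degrade the hardware itself.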

Problem 3: Orbit Is Already Running Out of Room

SpaceX's Starlink constellation already performs hundreds of thousands of collision-avoidance maneuvers annually — automated engine burns nudging satellites away from incoming debris. That's today's reality with roughly 7,000 active Starlink satellites. SpaceX's new filing proposes scaling that to 1 million.

"You can fit roughly four to five thousand satellites in one orbital shell... You can't have one million satellites around Earth unless it's a monopoly."

— Greg Vialle, Founder, Lunexus Space (orbital recycling company)

| Orbital Capacity Factor | Value |
|---|---|
| Safe satellites per orbital shell | 4,000–5,000 |
| Maximum across all low Earth orbit shells combined | ~240,000 |
| SpaceX's FCC filing target | 1,000,000 |
| Share of SpaceX's target beyond safe orbital capacity | 76% |
| Minimum safe distance between satellites | 10 km |
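The figures in the table can be cross-checked with a few lines of arithmetic. The sketch below uses only the table's numbers; the function names are illustrative. It shows that 76% is the share of the 1,000,000 target that lies beyond the ~240,000 capacity estimate, which is the same as saying the target is roughly 4.2 times the estimated capacity.

```python
SAFE_PER_SHELL = (4_000, 5_000)  # satellites per orbital shell, from the table
LEO_CAPACITY = 240_000           # approximate total across all LEO shells
FILING_TARGET = 1_000_000        # SpaceX's FCC filing target


def capacity_gap(target: int, capacity: int) -> dict:
    """Compare a constellation target against an estimated safe capacity."""
    excess = target - capacity
    return {
        "excess_satellites": excess,
        "share_of_target_over_capacity": excess / target,
        "multiple_of_capacity": target / capacity,
    }


def implied_shells(capacity: int, per_shell_range: tuple) -> tuple:
    """Number of shells the capacity estimate implies, as a (min, max) pair."""
    low, high = per_shell_range
    return capacity / high, capacity / low


if __name__ == "__main__":
    gap = capacity_gap(FILING_TARGET, LEO_CAPACITY)
    print(f"over capacity: {gap['excess_satellites']:,} satellites "
          f"({gap['share_of_target_over_capacity']:.0%} of the target)")
    print(f"target is {gap['multiple_of_capacity']:.1f}x the estimated capacity")
    print("implied shells:", implied_shells(LEO_CAPACITY, SAFE_PER_SHELL))
```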

The re-entry debris risk compounds this further. Today, roughly 3 to 4 pieces of old satellites and rocket bodies fall back into Earth's atmosphere every day. If SpaceX replaced 1 million satellites every 5 years (the typical operational lifespan of a LEO satellite), that rate would spike to one piece re-entering every 3 minutes. Scientists warn this level of atmospheric debris could damage the ozone layer and subtly alter Earth's thermal balance — trading one environmental crisis for another, at orbital scale.
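The "every 3 minutes" figure follows from simple division. The sketch below assumes each retired satellite counts as one re-entering piece and that replacements are spread evenly over the 5-year lifespan; both are simplifying assumptions made for illustration.

```python
MINUTES_PER_DAY = 24 * 60


def reentries_per_day(fleet_size: int, lifespan_years: float,
                      days_per_year: float = 365.25) -> float:
    """Average satellites retired per day if the whole fleet turns over once per lifespan."""
    return fleet_size / (lifespan_years * days_per_year)


def reentry_interval_minutes(fleet_size: int, lifespan_years: float) -> float:
    """Average minutes between re-entries, assuming one piece per retired satellite."""
    return MINUTES_PER_DAY / reentries_per_day(fleet_size, lifespan_years)


if __name__ == "__main__":
    per_day = reentries_per_day(1_000_000, 5)
    interval = reentry_interval_minutes(1_000_000, 5)
    print(f"{per_day:.0f} re-entries per day, one every {interval:.1f} minutes")
```

That works out to several hundred re-entries per day, more than a hundred times today's rate of 3 to 4.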

The Kessler Syndrome Threat

Astronomers and physicists have long warned about the Kessler Syndrome (a theoretical cascade where one satellite collision generates enough debris to trigger dozens more, making certain orbital altitudes permanently unusable). SpaceX's 1 million-satellite plan would pack orbits so densely that a single catastrophic collision could start exactly this chain reaction — locking humanity out of certain orbital zones for centuries, and taking GPS, weather satellites, and internet coverage with it.

Problem 4: Repair Is an Unsolved Engineering Problem

A ground-based data center employs on-site engineers who can swap a failed server board in minutes. In orbit, repair requires either a crewed mission (each costs hundreds of millions of dollars and takes months to plan) or robotic assembly and maintenance systems. The critical issue: those robotic systems do not yet exist at the scale and dexterity required for real data center maintenance.

Yves Durand at Thales Alenia Space describes the only viable path forward: "The good thing with orbital data centers is that you can start with small servers and gradually increase... You can use modularity. You can learn little by little and gradually develop industrial capacity in space." This means decades of incremental experimentation — not the 1 million-facility moonshot SpaceX filed for in January.

Starcloud's November 2025 H100 launch illustrates the scale of the gap. That mission orbited a single chip in a single satellite — a scientific proof of concept. Bridging the distance from one orbiting chip to an Earth-scale orbital data center (their stated 2030 vision) requires breakthroughs in space robotics, radiation tolerance, and thermal engineering that remain entirely unsolved. Even Durand's optimism has limits: "It's a very challenging problem."

What Actually Comes First: The Realistic Roadmap

The near-term applications for orbital AI are narrower but genuinely useful: processing satellite imagery in orbit rather than downloading terabytes of raw data to the ground, running navigation AI on weather satellites, or analyzing telescope data without ground relay. These workloads fit on a single chip, which is exactly what Starcloud demonstrated.

The independent engineering timeline, based on current assessments:

- 2027 (at the earliest): Google's planned 80-satellite test constellation launches, providing the first real-world engineering data on radiation tolerance, heat management, and continuous power generation in low Earth orbit

- 2030: Starcloud's Earth-scale facility target (widely considered optimistic by outside engineers given four unsolved fundamental problems)

- 2050: Thales Alenia Space's assessment for European gigawatt-scale deployment, based on their formal 2024 feasibility study


Meanwhile, Earth's AI power and water crisis continues at full speed. The same data centers that orbital computing promises to replace are expanding rapidly — new construction, new grid contracts, new water agreements with municipalities that didn't sign up to become AI cooling stations. If orbital computing takes until 2050, every megawatt consumed and every liter of water used for the next 25 years of AI growth will be felt on the ground.

SpaceX's 1 million-satellite FCC filing is best read as a regulatory land-grab: staking orbital spectrum and slot claims before competitors can. Google's planned 80-satellite test constellation would provide the first genuine engineering data. Starcloud's single H100 chip in orbit is the proof of concept. None of it is a data center. But the race to move AI off the planet has officially begun, and the physics of space is already pushing back hard.
