AI Data Centers in Space: Cisco CEO on Earth's Power Crisis

Cisco CEO Chuck Robbins says AI data centers in space are inevitable as Earth's power grid fails to keep up. Cisco is one of only 3 firms globally with the networking chips AI infrastructure needs.

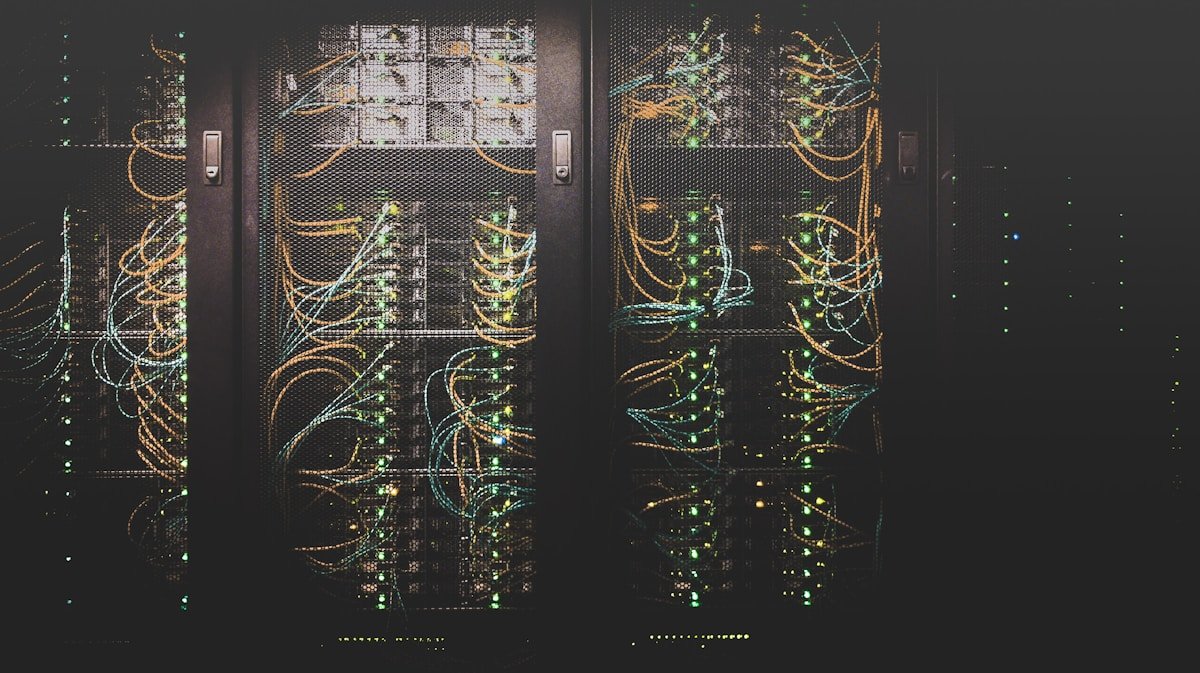

AI data centers — the massive buildings powering every tool from ChatGPT to Gemini — are hitting a wall. Those buildings consume extraordinary amounts of electricity, water, and land. And across the United States, communities are pushing back. Permits are denied. Construction stalls. The grid can't keep up.

So where does AI expand next? According to Cisco CEO Chuck Robbins, the answer may literally be upward. Appearing on The Verge's Decoder podcast, Robbins confirmed what few executives have said publicly: orbital data centers — facilities built in space, powered by continuous solar energy — are not science fiction. They're an inevitable part of AI's future. "Absolutely. Yes? And we will," Robbins said when asked directly.

The Power Crisis Pushing AI Data Centers Off-Planet

The numbers tell the story. A single large-scale AI training cluster can consume as much electricity as a small city. US data center power demand is projected to roughly triple by 2030. And increasingly, local communities are saying no — bipartisan political opposition has delayed or outright blocked data center construction in states from Virginia to Texas to Idaho.

Robbins acknowledged this plainly: "People don't want these in their backyards." The cooling requirements for ground-based facilities — industrial refrigeration systems consuming millions of gallons of water annually — add further weight to the political and physical burden.

The space alternative has two structural advantages that are hard to replicate on the ground. First, orbital solar power is, as Robbins described it, "unlimited and unimpeded": no cloud cover, no atmospheric absorption losses, and, in an orbit that stays in continuous sunlight, no night cycle. Second, community opposition disappears entirely when the data center is 400+ kilometers above the nearest neighborhood.

Thermal management (keeping electronics from overheating) also works differently in orbit. Without an atmosphere, heat is radiated away as infrared energy into the void of space. The engineering challenges are real and currently unsolved, but the constraints are fundamentally different — and potentially more favorable than fighting Earth's thermodynamics with brute-force cooling systems.
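To give a feel for the scale of that radiative cooling problem, here is a minimal back-of-the-envelope sketch using the Stefan-Boltzmann law. It is not from Robbins or Cisco. The function name `radiator_area_m2`, the 1 MW heat load, the 300 K panel temperature, and the 0.9 emissivity are all illustrative assumptions, and the model ignores sunlight and Earth's infrared glow falling on the panel, which would increase the area needed.

```python
# Rough radiator sizing with the Stefan-Boltzmann law.
# All input values below are illustrative assumptions, not figures from the article.

SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W / (m^2 * K^4)


def radiator_area_m2(heat_w, radiator_temp_k, emissivity=0.9,
                     sink_temp_k=3.0, sides=2):
    """Panel area needed to reject heat_w watts purely by radiation.

    Assumes a flat panel at a uniform temperature radiating from `sides`
    faces to a cold background at sink_temp_k. Absorbed sunlight and
    Earth infrared are ignored (an optimistic simplification).
    """
    if heat_w < 0:
        raise ValueError("heat_w must be non-negative")
    if not 0 < emissivity <= 1:
        raise ValueError("emissivity must be in (0, 1]")
    if radiator_temp_k <= sink_temp_k:
        raise ValueError("radiator must be hotter than its surroundings")
    if sides not in (1, 2):
        raise ValueError("sides must be 1 or 2")

    # Net radiated power per square meter of one face.
    flux_w_per_m2 = emissivity * SIGMA * (radiator_temp_k**4 - sink_temp_k**4)
    return heat_w / (flux_w_per_m2 * sides)


if __name__ == "__main__":
    # Hypothetical 1 MW of waste heat, panel held at 300 K.
    area = radiator_area_m2(heat_w=1_000_000, radiator_temp_k=300.0)
    print(f"Radiator area: {area:,.0f} m^2")
```

Under these assumptions, 1 MW of waste heat needs roughly 1,200 square meters of two-sided radiator. Because radiated power scales with the fourth power of temperature, running the panels hotter shrinks that area quickly, which is one reason the thermal design trade-offs in orbit differ from those on the ground.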

The 2016 Acquisition That Makes This More Than Talk

Here's what separates Cisco's space ambition from wishful thinking: a decade-old bet that now looks like genius. In 2016, Cisco quietly acquired Leaba, an Israeli company building custom networking silicon (specialized computer chips designed for one task — connecting servers — rather than general computation). At the time, it looked like a niche infrastructure play. Today, it's a strategic moat that competitors cannot easily cross.

That acquisition makes Cisco one of only 3 companies globally capable of manufacturing the specialized chips that connect GPU clusters (banks of graphics processors that do the heavy mathematical work powering AI models). Without these chips, there is no large-scale AI infrastructure at all. Competitors relying on "merchant silicon" — generic off-the-shelf chips available to any buyer — simply cannot match the performance characteristics that hyperscale AI demands.

"Success in business is always a combination of good decisions and a lot of luck," Robbins said. "The luck struck in 2016."

From Near-Zero to Multi-Billion: The Hyperscaler Explosion

Five to six years ago, Cisco's revenue from hyperscalers was essentially zero. The term is industry shorthand for the companies that operate the world's largest cloud infrastructure, such as Amazon Web Services, Microsoft Azure, and Google Cloud.

Today it's a multi-billion-dollar segment. Cisco's enterprise data center networking business posted double-digit growth in 6 of the last 8 quarters. Total company revenue reached approximately $20 billion last quarter. For comparison, Nvidia's networking division, which Cisco competes with directly, reported $31 billion in its last fiscal year. The two figures are not like for like (one is a quarterly company total, the other an annual divisional result), but the size of Nvidia's networking business reflects its dominance in AI accelerators, not a Cisco loss.

The reason both can grow simultaneously: hyperscalers deliberately diversify their vendor relationships. They prefer "optionality" — spreading purchases across multiple suppliers — specifically to avoid dangerous dependence on any single company. That structural preference lets Cisco expand alongside Nvidia in what Robbins calls a "coopetition" (simultaneous cooperation and competition) relationship.

Cisco also carries a weapon Nvidia doesn't: 40+ years of deeply embedded enterprise relationships. Replacing Cisco infrastructure means replacing decades of accumulated processes, software integrations, and institutional knowledge. That switching cost protects Cisco's base in ways no chip spec sheet can replicate.

How Early Is "Early"? The Timeline Reality Check

Robbins was refreshingly candid about where the space planning actually stands. The idea was raised by his head of product just 2–3 months ago. "I looked at him like he was crazy," Robbins admitted. A deeper technical roadmap is now scheduled for review with the chief product officer in approximately 6 months.

This is early-stage R&D, not a product announcement. But Cisco's engineers are already asking the concrete questions: What environmental conditions will hardware face in orbit? How do you handle thermal expansion without convection cooling (heat transfer through moving air, which doesn't exist in a vacuum)? What radiation hardening (protecting electronics against cosmic rays and solar particle events) will networking chips require at orbital altitude?

Robbins also cited Elon Musk explicitly: "I wouldn't doubt [Elon]. We're going to prepare so our technology is ready." SpaceX's Starship rocket has dramatically reduced projected launch costs per kilogram compared to any previous system, changing the economic math for orbital infrastructure in ways that would have been considered fantasy a decade ago.

What "Leading Edge, Not Bleeding Edge" Means in Practice

Robbins' exact framing: "I'd like to be on the leading edge. How about we say that? Maybe not bleed, but let's lead." That positioning — preparing infrastructure without betting the company on unproven technology — is exactly the kind of calculated ambition that allowed Cisco to capitalize on the Leaba acquisition years before it became obvious. The 2016 bet looked odd at the time. In 2026, it's one of only 3 keys to the AI infrastructure kingdom.

Why Every AI Tool You Use Depends on This Story

Here's the part that matters for everyday users. ChatGPT, Gemini, Claude, Midjourney, Copilot — every AI automation tool runs on hardware connected by exactly the kind of networking chips Cisco builds. When that infrastructure hits a wall — power constraints, community opposition, regulatory barriers — the cost and availability of AI services feel it first.

Robbins also flagged a second major shift with direct implications for companies deploying AI today: security in the "agentic era." This is the emerging period in which AI agents are deployed at scale across enterprise infrastructure. Agents are autonomous software programs that can browse the web, send emails, call APIs (software connections between services), and interact with other systems without constant human oversight.

Each AI agent is a new attack surface. Traditional cybersecurity catches threats at the software level. Cisco's core competency — network security — catches threats at the infrastructure layer, before they ever reach software. As organizations roll out dozens or hundreds of AI agents handling sensitive workflows, Cisco's "secure connectivity" positioning becomes not just valuable but arguably essential infrastructure for the AI economy.

"We securely connect everything. That's basically what we do," Robbins said — a deceptively simple mission statement for the company quietly preparing to take that mission into orbit.
