Goose vs Claude Code: Free Local AI Coding Agent

Block's Goose is a free, local Claude Code alternative with no rate limits or subscription. Railway raises $100M to deploy AI agents in under 1 second.

If you've been paying $100–$200 a month to use Claude Code, Block just handed you a free exit ramp.

Goose, an open-source AI coding assistant built by Block (the fintech company behind Square and Cash App), does nearly everything Claude Code does, but runs on your own machine and costs nothing: no monthly subscription. Paired with a local model, it also has no rate limits (no 5-hour resets) and sends no data to a cloud server.

At the same time, Railway — the deployment platform quietly powering 2 million developers — just raised $100 million to ensure AI agents can actually ship the code they write. The two stories are connected: as AI writes code faster, the bottleneck shifts from writing to deploying.

Claude Code's $200 Problem — and Goose's Free Answer

Claude Code, Anthropic's premium AI coding assistant, is powerful. Depending on your plan, you'll pay anywhere from $20 to $200 per month. Usage limits reset on a 5-hour window, so heavy users can hit the cap mid-session, a friction point that breaks developer flow precisely when productivity peaks.

Goose attacks both problems directly.

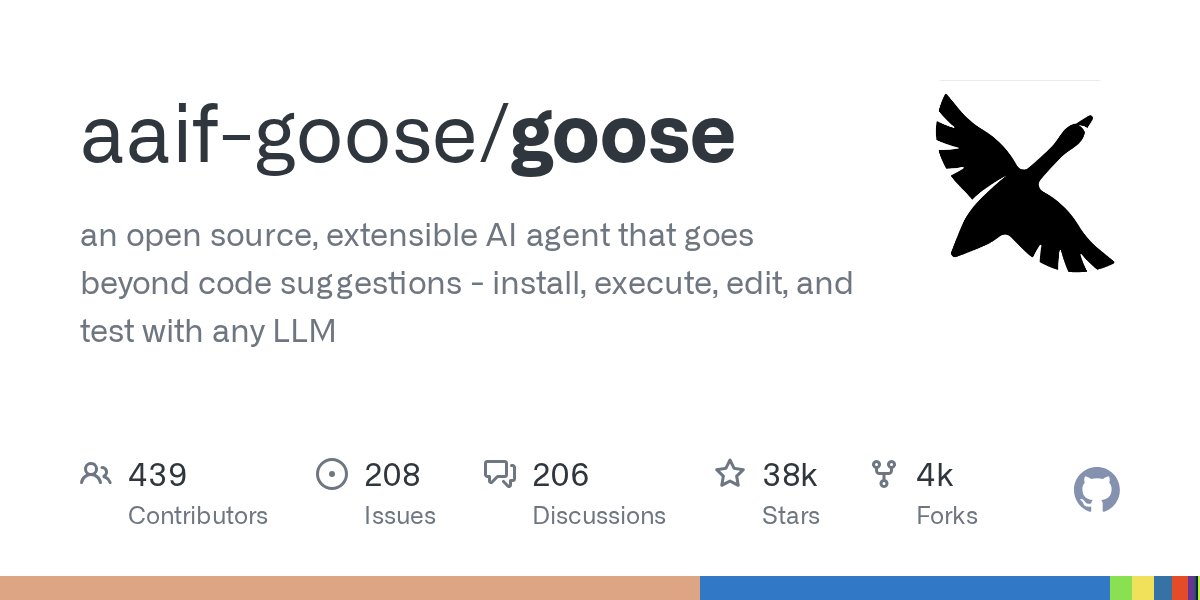

Built by engineers at Block and published on GitHub, Goose is a local AI coding agent (a program that runs on your own computer and autonomously edits, debugs, and improves your code). It connects to any AI model you choose, including models running through Ollama (a free tool for running AI models entirely on your laptop, with no internet connection needed once a model is downloaded). With a local model, all your data stays on your own hardware.

The head-to-head comparison is stark:

- Claude Code: $20–$200/month, cloud-based, rate limits reset every 5 hours

- Goose: $0 with a local model, runs locally on your machine, no rate limits, no subscription

- Goose on GitHub: 26,100+ stars, 362 contributors, 102 releases

"Your data stays with you, period," said Parth Sareen, a Goose engineer, during a public livestream. That single sentence has become the tool's rallying cry — and it's resonating. The repository is among the fastest-growing open-source AI projects of 2026.

How Goose Works — and How to Set It Up

Goose functions as an agentic coding loop (a cycle where the AI reads your code, makes a targeted change, checks the result, and tries again until the task is complete). You describe what you want in plain English — "add input validation to my signup form" — and Goose works through the implementation autonomously, editing files, running commands, and reading output.
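The read, change, check, retry cycle can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Goose's actual implementation: `agent_loop`, `Step`, `propose`, and `check` are hypothetical names, the "model" is a scripted stand-in that returns canned edits instead of calling an LLM, and the "check" runs a tiny behavioral test where a real agent would run your test suite or linter.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


@dataclass
class Step:
    """One proposed edit from the model: replace a file's contents (hypothetical)."""
    path: str
    new_content: str


def agent_loop(
    task: str,
    files: dict[str, str],
    propose: Callable[[str, dict[str, str], str], Step],
    check: Callable[[dict[str, str]], tuple[bool, str]],
    max_iterations: int = 5,
) -> tuple[bool, dict[str, str], int]:
    """Read the code, make a targeted change, check it, and retry until it passes."""
    feedback = ""
    for iteration in range(1, max_iterations + 1):
        step = propose(task, files, feedback)            # "model" reads code + last feedback
        files = {**files, step.path: step.new_content}   # apply the edit to a copy
        ok, feedback = check(files)                      # stand-in for running tests
        if ok:
            return True, files, iteration
    return False, files, max_iterations


# Scripted stand-ins (hypothetical): a real agent would call an LLM and a test runner.
attempts = [
    "def validate_email(s):\n    return True\n",                   # first try: no validation
    "def validate_email(s):\n    return bool(s) and '@' in s\n",  # second try: basic check
]


def propose(task: str, files: dict[str, str], feedback: str) -> Step:
    # Ignores its inputs and returns the next canned attempt.
    return Step("signup.py", attempts.pop(0))


def check(files: dict[str, str]) -> tuple[bool, str]:
    namespace: dict = {}
    exec(files["signup.py"], namespace)  # load the edited module
    validate = namespace["validate_email"]
    if validate(""):
        return False, "validate_email('') should return False"
    if not validate("a@b.co"):
        return False, "validate_email('a@b.co') should return True"
    return True, "all checks passed"


ok, final_files, n = agent_loop(
    "add input validation to my signup form", {"signup.py": ""}, propose, check
)
print(ok, n)  # True 2
```

Swapping the scripted `propose` for a real model call is what turns this into an agent; the `max_iterations` cap is a simple guard against a loop that never converges.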

Because Goose is model-agnostic (it works with any AI model, not locked to one provider), you can pair it with:

- A free local model via Ollama: zero API costs, fully offline, nothing leaves your machine

- OpenAI's GPT-4o: if you already have an API key

- Anthropic's Claude: ironically, the same model family that powers Claude Code, billed per API token instead of through a subscription
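Model-agnostic usually means the agent talks to one small interface and each provider plugs in behind it. The sketch below is hypothetical and is not Goose's internal code: `ChatBackend`, `OllamaBackend`, `OpenAIBackend`, and `complete` are illustrative names. It assumes Ollama's default local endpoint (`http://localhost:11434/api/generate`) and OpenAI's Chat Completions endpoint, and uses only Python's standard library.

```python
import json
import urllib.request
from dataclasses import dataclass
from typing import Protocol


class ChatBackend(Protocol):
    """Anything that can turn a prompt into a text completion (hypothetical interface)."""
    def build_request(self, prompt: str) -> urllib.request.Request: ...
    def parse_response(self, body: dict) -> str: ...


@dataclass
class OllamaBackend:
    """Local model served by Ollama's REST API; nothing leaves the machine."""
    model: str = "llama3"
    host: str = "http://localhost:11434"

    def build_request(self, prompt: str) -> urllib.request.Request:
        payload = {"model": self.model, "prompt": prompt, "stream": False}
        return urllib.request.Request(
            f"{self.host}/api/generate",
            data=json.dumps(payload).encode("utf-8"),
            headers={"Content-Type": "application/json"},
            method="POST",
        )

    def parse_response(self, body: dict) -> str:
        # A non-streaming /api/generate reply carries the text in "response".
        return body["response"]


@dataclass
class OpenAIBackend:
    """Hosted model via OpenAI's Chat Completions API; needs an API key."""
    api_key: str
    model: str = "gpt-4o"

    def build_request(self, prompt: str) -> urllib.request.Request:
        payload = {"model": self.model, "messages": [{"role": "user", "content": prompt}]}
        return urllib.request.Request(
            "https://api.openai.com/v1/chat/completions",
            data=json.dumps(payload).encode("utf-8"),
            headers={
                "Content-Type": "application/json",
                "Authorization": f"Bearer {self.api_key}",
            },
            method="POST",
        )

    def parse_response(self, body: dict) -> str:
        return body["choices"][0]["message"]["content"]


def complete(backend: ChatBackend, prompt: str) -> str:
    """Send the prompt to whichever backend was chosen; calling code never changes."""
    with urllib.request.urlopen(backend.build_request(prompt)) as resp:
        return backend.parse_response(json.load(resp))
```

Calling `complete(OllamaBackend(), "add input validation to my signup form")` keeps everything on your machine, while `complete(OpenAIBackend(api_key=your_key), ...)` sends the same prompt to a hosted model; only the backend object changes.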

```bash
# Get started with Goose (free, open-source)
git clone https://github.com/block/goose
cd goose

# Follow the setup guide in README.md
# Runs on macOS, Linux, and Windows (via WSL)
```

The practical trade-off: Goose lacks Claude Code's polished interface and commercial support. It's a developer tool for people comfortable with a terminal and GitHub. But for that audience, it eliminates every financial barrier to AI-assisted coding — and the 26,100 GitHub stars suggest that audience is large and growing fast.

The Deployment Bottleneck: Why Railway's $100M Round Matters Right Now

There's a second story here that most coverage misses — and it's directly connected to the AI coding explosion.

As tools like Goose make writing software dramatically faster, a new bottleneck has emerged: deployment speed. If your AI agent produces a working feature in 3 seconds but pushing it live takes 3 minutes on AWS or GCP, the agent sits idle 98% of the time. The intelligence is instant; the infrastructure is not.
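The 98% figure follows from treating each cycle as 3 seconds of agent work followed by a deploy the agent must wait for. A minimal sketch, assuming that strictly serial work-then-deploy cycle (`idle_fraction` is a hypothetical helper), compares a 3-minute deploy with a 1-second one:

```python
def idle_fraction(work_seconds: float, deploy_seconds: float) -> float:
    """Share of each work-then-deploy cycle the agent spends waiting on deployment."""
    return deploy_seconds / (work_seconds + deploy_seconds)


for label, deploy in [("3-minute deploy", 180.0), ("1-second deploy", 1.0)]:
    print(f"{label}: idle {idle_fraction(3.0, deploy):.1%}")
# 3-minute deploy: idle 98.4%
# 1-second deploy: idle 25.0%
```

Under that serial assumption, deployment latency, not model speed, dominates each cycle.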

That's the precise problem Railway has been solving since 2020 — and why a 30-person company just closed a $100 million Series B led by TQ Ventures.

Railway, founded by Jake Cooper (28, previously at Wolfram Alpha, Bloomberg, and Uber), deploys applications in under 1 second — versus 2–3 minutes with traditional tools like Terraform or AWS CloudFormation (infrastructure-as-code tools that define and manage servers through configuration files). Cooper described the AI-era pressure plainly:

"When godly intelligence is on tap and can solve any problem in three seconds, those amalgamations of systems become bottlenecks. What was really cool for humans to deploy in 10 seconds or less is now table stakes for agents."

Railway's Numbers — The Case for Paying Attention

Railway's growth metrics explain why investors backed a 30-person team with nine figures:

- 2 million developers on the platform

- 10 million+ deployments processed per month

- 1+ trillion requests through its edge network

- 31% of Fortune 500 companies use Railway

- 3.5x revenue growth year-over-year; 15% month-over-month growth

- Deployment time: under 1 second vs. 2–3 minutes for competitors

- 10x developer velocity increase reported by customers

- Up to 65% cost savings vs. traditional cloud providers

The customer cost data is striking. Daniel Lobaton, CTO of G2X, cut infrastructure costs from $15,000/month to roughly $1,000/month (about a 93% reduction) after migrating from his previous provider. "The work that used to take me a week on our previous infrastructure, I can do in Railway in like a day," he said. "If I want to spin up a new service and test different architectures, it would take so long on our old setup. In Railway I can launch six services in two minutes."

Rafael Garcia, CTO of Kernel, put it in human terms: "At my previous company Clever, which sold for $500 million, I had six full-time engineers just managing AWS. Now I have six engineers total, and they all focus on product. Railway is exactly the tool I wish I had in 2012."

How Railway Prices — and Why It's Different

Traditional cloud providers like AWS charge for provisioned capacity — you pay even when your server is doing nothing. Railway bills per second of actual use:

- Memory: $0.00000386 per GB-second

- Compute: $0.00000772 per vCPU-second

- Storage: $0.00000006 per GB-second

- Idle virtual machines: Free — no charge for sleeping services
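To see what per-second billing with free idle time means in practice, here is a rough calculator using the memory and compute rates listed above. It is a sketch, not Railway's billing logic: the 1 GB / 1 vCPU service, the 30-day month, and the 2-hours-a-day usage pattern are hypothetical, storage is left out, and current rates should be checked on Railway's pricing page.

```python
SECONDS_PER_MONTH = 30 * 24 * 3600  # 2,592,000 s in a 30-day month

MEMORY_PER_GB_SECOND = 0.00000386   # USD, memory rate as quoted above
CPU_PER_VCPU_SECOND = 0.00000772    # USD, compute rate as quoted above


def compute_cost(memory_gb: float, vcpus: float, billed_seconds: float) -> float:
    """Memory + CPU cost for the seconds a service is actually billed (storage omitted)."""
    per_second = memory_gb * MEMORY_PER_GB_SECOND + vcpus * CPU_PER_VCPU_SECOND
    return per_second * billed_seconds


# Hypothetical service with 1 GB RAM and 1 vCPU, awake around the clock.
always_on = compute_cost(1, 1, SECONDS_PER_MONTH)
# Same service awake 2 hours a day, sleeping (and not billed) the rest of the time.
mostly_idle = compute_cost(1, 1, 2 * 3600 * 30)

print(f"always on: ${always_on:.2f}/month")    # always on: $30.02/month
print(f"2 h/day:   ${mostly_idle:.2f}/month")  # 2 h/day:   $2.50/month
```

At these rates, 1 GB of memory held for a full 30-day month works out to about $10 and 1 vCPU to about $20; the roughly 12x gap between the two scenarios comes entirely from not paying for sleeping time.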

That idle-is-free policy is significant for AI agent workflows, where services may sit dormant between tasks. In 2024, Railway abandoned Google Cloud entirely to build its own data centers — a move called vertical integration (owning both the software platform and the physical hardware underneath it). That's how Railway achieves sub-second deployments at prices that undercut AWS and GCP.

The angel investor list reads like a developer-infrastructure hall of fame: Tom Preston-Werner (GitHub co-founder), Guillermo Rauch (Vercel CEO), and Spencer Kimball (Cockroach Labs CEO). These are people who built the platforms developers already live inside — and they bet on Railway.

Your Free AI Dev Stack — Right Now, No Subscription Needed

The convergence of Goose and Railway points toward a complete, zero-subscription AI automation development stack for individual developers and small teams. Here's the full setup:

- AI coding assistant: Goose — free, local, open-source, no rate limits

- Local AI model: Ollama — run Llama 3, Mistral, or Qwen entirely on your laptop with zero API costs

- Instant deployment: Railway — free tier available; per-second billing after that, idle services always free

Total monthly cost for a solo developer combining local models through Goose with Railway's free tier: $0. That's a direct functional alternative to Claude Code's $20–$200/month range.

For teams requiring commercial support, SLAs (service level agreements — vendor-guaranteed uptime commitments), or enterprise contracts, Claude Code and AWS remain the more robust, better-supported options. But for individual developers, vibe coders, freelancers, and startups exploring AI automation, the free stack is now genuinely competitive — and Cooper's long-term thesis makes the stakes clear: "The amount of software that's going to come online over the next five years is unfathomable compared to what existed before — we're talking a thousand times more software. All of that has to run somewhere."
