OpenAI Stargate UK Paused — Energy Costs Block AI Data...

OpenAI halted Stargate UK over electricity costs while Oracle raises $14B and Meta spends $3B. AI infrastructure's biggest bottleneck is now energy, not money.

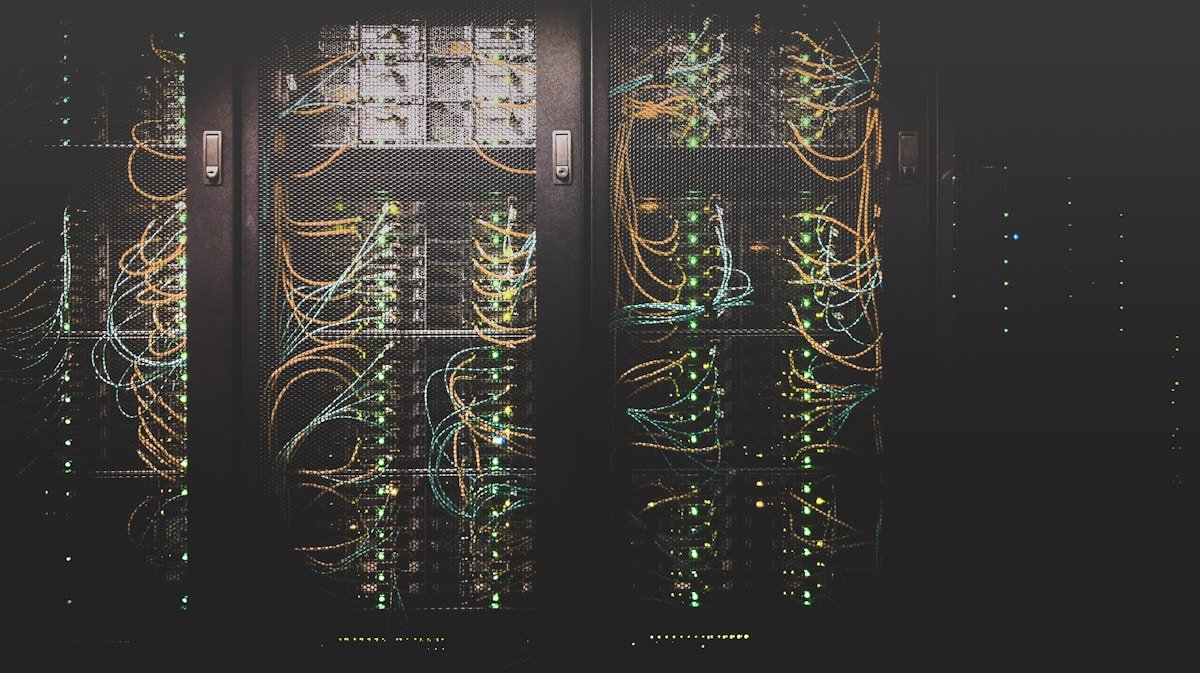

OpenAI just halted its Stargate UK data center project — and the reason isn't money, regulation, or politics. It's electricity. That single constraint is now the most important bottleneck in the global AI infrastructure arms race, and it's reshaping where the biggest players in AI automation can actually build.

A $500 Billion AI Infrastructure Vision, Blocked by the Power Grid

The Stargate initiative (OpenAI's global data center buildout, co-backed by SoftBank, Oracle, and Microsoft with total commitments exceeding $500 billion) was supposed to be the physical backbone of the next generation of AI. The UK site was its European beachhead. As of April 9, 2026, Bloomberg reports the project is paused — citing escalating energy costs that make the economics unworkable.

UK commercial electricity rates are among the highest in the developed world, running roughly 2–3x more per kilowatt-hour than comparable sites in Texas or the UAE. When a single AI training run requires 30–50 megawatts of continuous power for months — and running that model at scale (called "inference," the process where the AI generates answers for real users) demands that power permanently — those cost differences become existential at data center scale.

A facility running thousands of AI accelerator chips (specialized processors that handle the math-heavy work of AI, consuming 300–700 watts each, around the clock) can easily require more electricity than a mid-sized city neighborhood. The UK grid simply wasn't designed — and isn't priced — for that demand.
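
To put the per-chip figures in perspective, here is a rough back-of-the-envelope sketch. The chip count of 10,000 and the `overhead` multiplier (a stand-in for cooling and other non-chip load) are illustrative assumptions, not numbers from the reporting; only the 300–700 watt range comes from the text above.

```python
def facility_power_mw(num_chips: int, watts_per_chip: float, overhead: float = 1.0) -> float:
    """Continuous power draw in megawatts for a fleet of accelerator chips.

    overhead is a hypothetical multiplier for cooling, networking, etc.
    The default of 1.0 counts the chips alone.
    """
    return num_chips * watts_per_chip * overhead / 1_000_000


# Hypothetical fleet size; the article only says "thousands" of chips.
NUM_CHIPS = 10_000

low_mw = facility_power_mw(NUM_CHIPS, 300)   # low end of the 300-700 W range
high_mw = facility_power_mw(NUM_CHIPS, 700)  # high end of the range

print(f"{NUM_CHIPS:,} chips draw roughly {low_mw:.1f} to {high_mw:.1f} MW, before overhead")
```

Under these assumptions the chips alone land in the single-digit megawatt range, so reaching the 30–50 megawatt figure cited for a frontier training run implies a fleet several times larger, or substantial overhead on top.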

While OpenAI Waits, the Competition Deploys $17 Billion in One Week

Here's the contradiction at the center of this story: OpenAI's pause happened in the same week that three other major players made massive, no-hesitation AI infrastructure commitments.

- Oracle's $14 billion raise: PIMCO (one of the world's largest bond fund managers, overseeing more than $2 trillion in assets) is marketing $14 billion in debt specifically tied to Oracle data center construction. This isn't venture capital — it's institutional bond markets treating AI infrastructure like a utility investment.

- Meta's $3 billion build: Investment banks Natixis and MUFG are actively marketing Meta's $3 billion data center project to outside investors. Meta isn't pausing for energy concerns — it's bringing in major banking partners to fund an accelerated build.

- UAE's G42 for OpenAI: Despite documented regional security concerns, G42 (the UAE's flagship AI investment company, backed by Abu Dhabi sovereign wealth) is pushing ahead with data center infrastructure built specifically for OpenAI. Abu Dhabi has cheap, state-subsidized power — making the Middle East structurally more attractive for AI compute right now than the UK.

The Oracle and Meta financings alone put $17 billion of new AI infrastructure capital in motion during a single week (no dollar figure is given here for the G42 build), while the company most associated with the AI revolution pauses its European expansion because of electricity bills. The irony is hard to miss.

Goldman, Snowflake, and the "Picks and Shovels" AI Investment Thesis

Goldman Sachs publicly announced this week it is increasing capital expenditure on AI infrastructure, describing its approach as a "picks and shovels" strategy (a reference to the California Gold Rush, where hardware and supply vendors often made more money than the gold miners themselves — here, it means investing in AI infrastructure and compute rather than AI software products directly).

Meanwhile, Snowflake's CEO stated the company is seeing "strong returns on AI investment" — a meaningful signal that enterprise customers are now paying for AI automation tools at real revenue scale, not just running limited pilots. PIMCO marketing $14 billion in Oracle data center debt to institutional investors tells the same story from a different angle: the financial establishment now treats AI infrastructure as a bond-grade asset class with predictable cash flows, not a speculative technology bet.

This matters for anyone tracking AI adoption: when bond funds and investment banks start moving serious capital into infrastructure, it means the underlying demand (businesses paying for AI automation) is real and growing fast enough to service long-term debt. Learn how enterprises are deploying these tools in our AI automation guides.

Anthropic's Quiet Distribution Play: Apple and Amazon Test "Mythos" First

Away from the data center war, Anthropic made a targeted move that could matter more in the long run: granting Apple and Amazon early access to test its next AI model, internally called "Mythos" — described as more powerful than any of Anthropic's current public offerings. Exclusive early access creates structural advantages before a model even launches publicly:

- Apple: Engineers testing Mythos could embed it into iOS, Siri, and Xcode (Apple's developer tool) months before any competitor gets to pitch their model to Apple's teams

- Amazon: The AWS team could build Mythos into Bedrock (Amazon's managed cloud AI platform for enterprise businesses) as a flagship offering, locking in large enterprise contracts ahead of public availability

- The real play: Distribution before launch is worth more than any benchmark score — it creates commercial inertia that's hard to displace even when competitors release stronger models later

Anthropic also completed a tender offer (a structured process where early investors and employees can sell some of their equity to new buyers) this week, but employees overwhelmingly chose to hold their shares rather than cash out. When insiders who know the company's actual product roadmap decline to take money off the table, it is a strong signal of internal confidence in future valuation.

The Energy Wall: What Actually Determines Who Wins the AI Race

The OpenAI Stargate UK pause is a preview of a constraint that will increasingly define the AI industry over the next 18 months. Here's the unforgiving math that every major player now faces:

- Training a frontier AI model: 30–50 megawatts of continuous power for several months

- Running that model at global scale for paying users: that same power draw, permanently, 24/7/365

- The UK national grid: not designed for this level of sustained industrial demand at these price points

- The US grid outside Virginia, Texas, and the Pacific Northwest: increasingly strained by the same problem
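
The math above can be sketched in a few lines. The 30–50 megawatt range and the 2–3x price ratio come from this article; the baseline price of $0.05 per kilowatt-hour is a purely hypothetical placeholder chosen to make the arithmetic concrete, not a quoted rate for Texas, the UAE, or anywhere else.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def annual_energy_mwh(power_mw: float) -> float:
    """Energy used in one year at a constant draw, in megawatt-hours."""
    return power_mw * HOURS_PER_YEAR


def annual_cost(power_mw: float, price_per_kwh: float) -> float:
    """Yearly electricity bill for a constant draw at a flat price per kWh."""
    return annual_energy_mwh(power_mw) * 1_000 * price_per_kwh


# ASSUMPTION: hypothetical baseline price, for illustration only.
BASELINE_PRICE = 0.05  # dollars per kWh

for power_mw in (30, 50):
    base = annual_cost(power_mw, BASELINE_PRICE)
    for ratio in (2, 3):  # the 2-3x premium described for UK rates
        premium = annual_cost(power_mw, BASELINE_PRICE * ratio)
        print(f"{power_mw} MW: baseline ${base:,.0f}/yr, "
              f"at {ratio}x ${premium:,.0f}/yr, gap ${premium - base:,.0f}/yr")
```

A constant 30 megawatts works out to 262,800 megawatt-hours a year, and 50 megawatts to 438,000. Whatever the true baseline price is, a 2–3x multiplier applied to that much energy scales the bill by the same factor every year the facility runs.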

The winners of the AI infrastructure race won't necessarily be the companies with the best models or the most venture capital. They'll be the ones with access to cheap, reliable, large-scale electricity, whether through nuclear agreements (Microsoft has already signed deals with nuclear plant operators), hydroelectric geography, or state-subsidized energy partnerships like G42 in the UAE.

Kia Motors also confirmed this week it will deploy Boston Dynamics' Atlas robots (humanoid machines capable of dynamic movement and factory tasks) in US manufacturing plants, a reminder that the physical layer of AI automation is accelerating alongside the digital one.

You can track this AI infrastructure race in our news feed. Watch new data center permit filings, utility power purchase agreements, and nuclear energy policy changes in the UK and EU. Those signals will telegraph the AI infrastructure winners months before any benchmark or product announcement does.
