Meta Muse Spark: 16 Free AI Tools You Didn't Know Existed

Meta Muse Spark packs 16 free tools into meta.ai — Python execution, image analysis, Instagram search, and Gmail sync. Free with a Facebook login.

Meta Muse Spark, Meta's free AI model on meta.ai, launched this week with 16 built-in tools that most users never discover. Researcher Simon Willison documented them by simply asking the model to list its own capabilities. Because Meta did not instruct the model to hide this information, he came away with a full inventory: 16 tools covering search, code execution, image work, and account integrations, all in one interface.

Meta Muse Spark: Meta's First Major Model Since Llama 4, and It's Closed-Weight

Meta's previous major release, Llama 4, arrived in April 2025 with downloadable weights you could run locally. Muse Spark is different: it is a closed-weight hosted model (the trained parameters are not published), accessible only through meta.ai or a private API preview for selected developers.

You need a Facebook or Instagram account to log in — there's no standalone email signup. API access is limited to a private preview with no public launch date announced yet.

Benchmark performance is competitive with Anthropic's Claude Opus 4.6, Google's Gemini 3.1 Pro, and OpenAI's GPT-5.4 on selected tasks. The clear weak spot is Terminal-Bench 2.0, a benchmark of AI performance on real command-line programming workflows, where Muse Spark falls significantly behind. Meta publicly acknowledged "performance gaps in long-horizon agentic systems and coding workflows," which is unusually candid for a product launch.

Meta AI's 16 Free Tools — Fully Documented

Simon Willison — the independent researcher behind simonwillison.net, well known for thorough hands-on AI analysis — simply asked Muse Spark to describe its own capabilities. The model complied without resistance. Willison noted: "credit to Meta for not telling their bot to hide these, since it's far less frustrating if I can get them out without having to mess around with jailbreaks."

Here's the full breakdown across 4 categories:

Search and Web Tools (4 tools)

- Web search — standard real-time internet queries

- Page open — visits and reads the full content of a URL

- Find — pattern matching (text search) within fetched page content

- Meta content search — queries Instagram, Threads, and Facebook posts created after January 1, 2025. Posts older than that are not indexed.

Code Execution and File Tools (4 tools)

- Python code interpreter (a sandboxed environment that executes Python code in real time): runs Python 3.9.25 with pandas (data analysis), numpy (numerical math), matplotlib and plotly (data visualization), scikit-learn (machine learning), and OpenCV (a computer vision toolkit for processing images) pre-installed. Note: Python 3.9 officially reached end-of-life in October 2025.

- SQLite 3.34.1 — an embedded database engine (released January 2021) for structured SQL queries on tabular data

- File search — searches inside uploaded documents including PDFs

- Container file editing — view, insert, and string-replace operations enabling iterative code editing inside the sandboxed workspace
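The three container editing operations can be pictured with a minimal sketch. The function names `view`, `insert`, and `str_replace` and their signatures are assumptions made for illustration; the source does not document how Meta's tool is actually called. The code sticks to syntax that runs on Python 3.9.

```python
from pathlib import Path
from typing import Optional


def view(path: Path, start: int = 1, end: Optional[int] = None) -> str:
    """Return lines start..end (1-indexed, inclusive), each prefixed with its number."""
    lines = path.read_text().splitlines()
    end = len(lines) if end is None else end
    return "\n".join(
        f"{n}: {line}" for n, line in enumerate(lines[start - 1:end], start)
    )


def insert(path: Path, after_line: int, text: str) -> None:
    """Insert text after the given 1-indexed line (0 inserts at the top)."""
    lines = path.read_text().splitlines()
    lines[after_line:after_line] = text.splitlines()
    path.write_text("\n".join(lines) + "\n")


def str_replace(path: Path, old: str, new: str) -> None:
    """Replace exactly one occurrence of old; refuse missing or ambiguous matches."""
    content = path.read_text()
    count = content.count(old)
    if count != 1:
        raise ValueError(f"expected exactly one match for {old!r}, found {count}")
    path.write_text(content.replace(old, new))
```

Requiring exactly one match in `str_replace` is a design choice of this sketch, not a documented behavior: it keeps an iterative edit from silently changing the wrong line when the same snippet appears twice.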

Visual and Generative Tools (5 tools)

- Visual grounding (a tool that identifies and locates objects in images) — returns results as coordinate points, bounding boxes (rectangles drawn around detected objects), or object counts. In Willison's test, it correctly identified 8 objects in a single image.

- Image generation — powered by Meta's Emu model at 1280×1280 pixel resolution, with outputs automatically saved to the sandbox for downstream Python processing

- SVG rendering — displays vector graphics code directly in the chat interface

- HTML rendering — renders HTML/CSS output in sandboxed iframe containers (isolated mini-browsers inside the chat)

- Sub-agent spawning — delegates complex or parallelizable tasks to separate sub-processes
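To make the three visual grounding output formats from the list above concrete (points, bounding boxes, counts), here is a small sketch of how they relate. The `Box` schema, its field names, and the helper functions are hypothetical; the source does not show the tool's real output format.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Box:
    """Axis-aligned bounding box in pixel coordinates (hypothetical schema)."""
    label: str
    x_min: int
    y_min: int
    x_max: int
    y_max: int

    def center(self) -> Tuple[float, float]:
        """Midpoint of the box, usable as a single coordinate point."""
        return ((self.x_min + self.x_max) / 2, (self.y_min + self.y_max) / 2)

    def area(self) -> int:
        """Area of the rectangle in pixels."""
        return (self.x_max - self.x_min) * (self.y_max - self.y_min)


def as_points(boxes: List[Box]) -> List[Tuple[float, float]]:
    """Reduce each box to one point per detected object."""
    return [box.center() for box in boxes]


def as_count(boxes: List[Box], label: str) -> int:
    """Count detections that carry the given label."""
    return sum(1 for box in boxes if box.label == label)
```

A box is the richest of the three formats: a point or a count can be derived from it, but none of them says which individual pixels belong to the object.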

Integration Tools (3 tools)

- Google Calendar and Gmail — direct connection to your Google workspace inside the chat

- Outlook Calendar and Outlook email — Microsoft mail and calendar integration

- Catalog search — product shopping integration for e-commerce queries

Meta Muse Spark Thinking Mode vs Instant: Real Difference

Willison ran what he calls the "pelican test" — a standard prompt asking the model to illustrate a pelican riding a bicycle. It's repeatable enough to compare across models and modes side-by-side.

- Instant mode (optimized for response speed): produced a basic, functional result — recognizable shapes, minimal anatomical detail

- Thinking mode (the model reasons through the problem step-by-step before answering): produced a noticeably better output — more anatomically correct pelican, a structured bicycle frame, and a helmet the model added without being asked

A third mode called "Contemplating" is on Meta's roadmap — designed for extended reasoning on complex, multi-step problems, similar to Google's Gemini Deep Think feature. No release date has been announced.

AI Automation in Action: The 5-Step Raccoon Data Pipeline

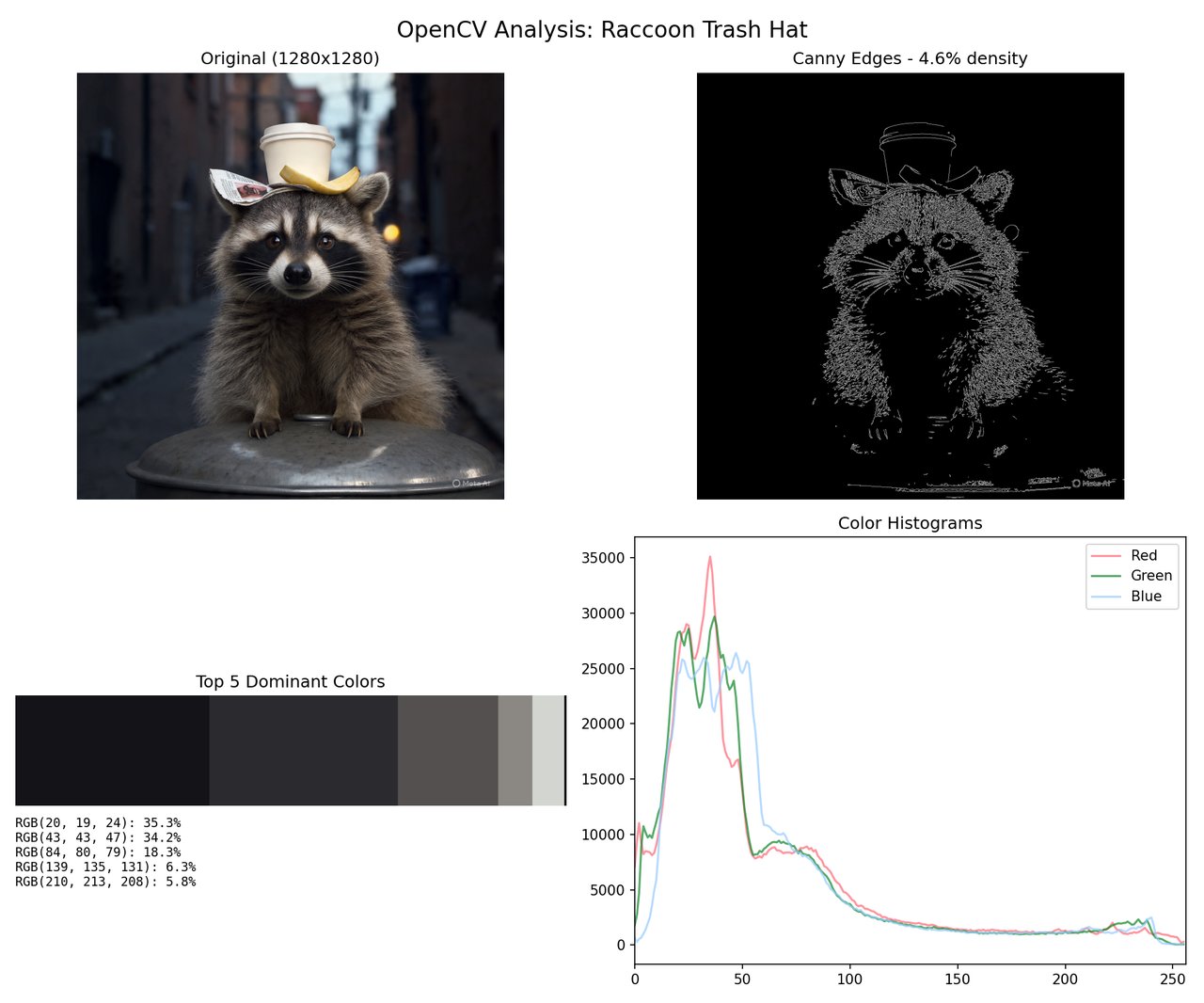

The most striking demonstration in Willison's post isn't a benchmark score; it's a raccoon. He asked Muse Spark to generate an image of "a raccoon wearing a trash hat," then immediately analyze it with OpenCV from the Python sandbox.

The full analysis ran entirely inside the chat interface, automatically:

- Emu generated the raccoon image at 1280×1280 pixel resolution

- The model saved it to the sandboxed environment without any manual step

- Python applied Canny edge detection (an algorithm that traces object outlines and boundaries by finding sharp transitions in pixel brightness)

- K-means color clustering (a technique grouping similar pixel colors together) identified 5 dominant colors: 35.3%, 34.2%, 18.3%, 6.3%, and 5.8% of the image

- Overall edge density calculated at approximately 4.6% of total image pixels

The model's caption on the final output: "Coffee cup crown, banana peel brim, newspaper feather. Peak raccoon fashion." That combination of pixel-level numerical analysis and playful narration shows what tool-chaining looks like in practice: image generation feeding directly into computer vision analysis, all in one session with no setup.

Six Honest Limits Before You Log In

Meta's own candor on performance gaps makes this section straightforward. These limits are real and worth knowing:

- Python 3.9 is end-of-life. It has been officially unsupported since October 2025, so it no longer receives security fixes, and newer versions of machine learning libraries may not install cleanly in this environment.

- No pixel-level image segmentation. Visual grounding returns points, bounding boxes, and counts — not masks (filled pixel-level outlines used in advanced vision work). More sophisticated computer vision workflows will hit this limitation quickly.

- Social content search starts January 1, 2025. Any Instagram, Threads, or Facebook post older than that returns empty results. Historical research won't work here.

- No public API access yet. Developers who want to build on Muse Spark must apply for the private preview. No public SDK or open endpoint currently exists.

- Facebook or Instagram login required. No workaround for users without a Meta account.

- Terminal-Bench 2.0 performance gap. For agentic coding or multi-step command-line workflows, current competitors score higher on this benchmark — and Meta has confirmed it's investing to close this gap.

Try Meta Muse Spark Free — No Installation, 3 Steps

Anyone with a Meta account can access it immediately in any browser:

1. Visit meta.ai

2. Log in with your Facebook or Instagram account

3. Switch to "Thinking" mode using the toggle in the chat interface (it produces noticeably better output on most tasks)

Gmail and Google Calendar connections are available in the integrations panel once you're logged in. For hands-on Python work, upload a CSV, PDF, or image and ask Muse Spark to analyze it — the code interpreter launches automatically without any configuration. If you're already building AI-assisted workflows, the combination of Python execution, image analysis, and live social content search from 2025 onward is genuinely unlike what ChatGPT or Claude currently offer together in one place. The 16 tools are more capable than the surface-level chat interface suggests — and now you know exactly what to ask for.
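As a rough idea of what the code interpreter might run when you upload a CSV and ask for a summary, here is a minimal sketch using only Python's standard library `csv` and `sqlite3` modules. The sample data, the `sales` table, and the `totals_by_region` function are made up for illustration; the code the model writes for a real upload will differ.

```python
import csv
import io
import sqlite3

# Made-up sample standing in for an uploaded CSV file.
SAMPLE_CSV = """region,units
north,12
south,7
north,5
"""


def totals_by_region(csv_text: str) -> list:
    """Load CSV rows into an in-memory SQLite table and total units per region."""
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    conn = sqlite3.connect(":memory:")
    try:
        conn.execute("CREATE TABLE sales (region TEXT, units INTEGER)")
        # Named placeholders take their values from each row dictionary.
        conn.executemany(
            "INSERT INTO sales (region, units) VALUES (:region, :units)", rows
        )
        cursor = conn.execute(
            "SELECT region, SUM(units) FROM sales GROUP BY region ORDER BY region"
        )
        return cursor.fetchall()
    finally:
        conn.close()


print(sqlite3.sqlite_version)        # SQLite library version in this environment
print(totals_by_region(SAMPLE_CSV))  # [('north', 17), ('south', 7)]
```

Printing `sqlite3.sqlite_version` is also a quick way to check which SQLite release a given sandbox ships.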
