Meta AI Neural Computer: 98.7% Cursor Accuracy, 4% Math

Meta AI and KAUST built a neural computer in which the AI replaces the OS: 98.7% cursor accuracy, but only 4% on math. That gap maps AI automation's next unsolved frontier.

What if AI automation didn't need Windows, macOS, or Linux at all? Researchers at Meta AI and King Abdullah University of Science and Technology (KAUST) have demonstrated working prototypes of a radical answer: a neural computer where the neural network is the operating system — not software sitting on top of one.

The catch: their prototype scored 98.7% accuracy on cursor control and only 4% on basic arithmetic. That gap is the entire story of where AI is headed — and why we're probably 3–5 years from it mattering to anyone outside a research lab.

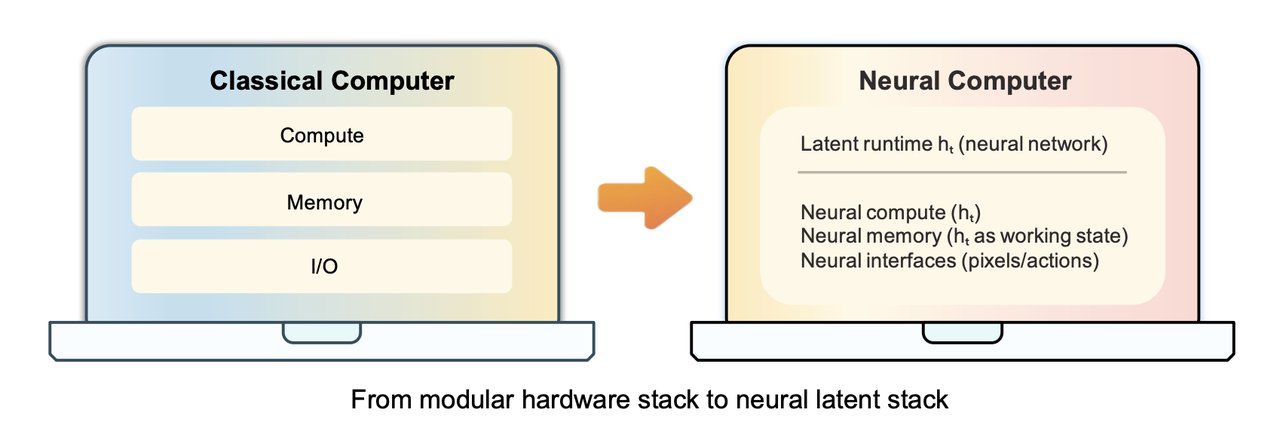

What a Neural Computer Actually Is — AI Without a Traditional OS

To understand why this matters, you need to know how AI agents normally work today. An AI agent is like a highly capable employee who still needs a desk, a computer, and an operating system underneath them. Agents call APIs (interfaces that let programs talk to each other), navigate graphical interfaces built for humans, and depend on external software infrastructure to do anything useful.

A Neural Computer (NC) flips this entirely. Instead of AI sitting on top of a computer, the researchers proposed that the neural network itself becomes the running computer. Computation (math processing), working memory (temporary data held during a task), and I/O (inputs and outputs — what appears on screen and what gets typed) are all folded into a single learned model state. No external OS. No API calls. No separate memory module.
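
To make the "single state" idea concrete, here is a toy sketch. It is not the researchers' architecture: `ToyNeuralComputer`, its `state` list, and the `step` method are hypothetical names invented for illustration, and a real NC would hold a learned latent tensor updated by a neural network rather than a list of strings. The only point is the shape of the loop: each input updates one internal state, and the screen is rendered from that state alone.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ToyNeuralComputer:
    """Hypothetical stand-in: one state object acts as memory, context, and screen."""

    state: List[str] = field(default_factory=list)

    def step(self, user_input: str) -> str:
        # "Computation" and "memory" are the same update: fold the input into the state.
        self.state.append(user_input)
        # The "frame" is rendered from the state alone; nothing external is consulted.
        return "\n".join(self.state[-3:])  # show the last three inputs


nc = ToyNeuralComputer()
nc.step("ls")
frame = nc.step("pwd")
print(frame)
```

In a conventional stack, the history, the working memory, and the display buffer would each live in a different layer of software. Here they are one object, which is the property the paper's framing emphasizes.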

The research team framed it this way:

"The latent state carries what the operating system stack ordinarily would — executable context, working memory, and interface state — inside the model rather than outside it."

This differs from every other AI architecture in the current landscape:

- AI Agents: operate through existing software stacks (OS, APIs, terminals). A Neural Computer replaces that stack entirely.

- World Models: learn to predict how an environment evolves. An NC doesn't just predict; it executes.

- Neural Turing Machines: augment a neural network with a differentiable memory tape (a learnable external memory module). An NC aims to eliminate external infrastructure rather than extend it.

Two Neural Computer Prototypes: 1,100 Hours of Video, 38,000 GPU Hours

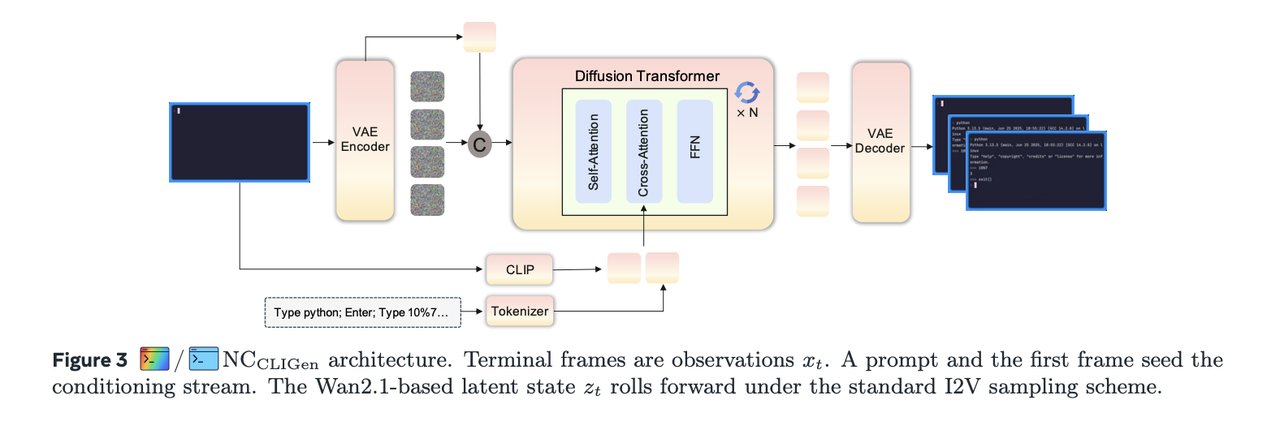

The team built two working prototypes, both running on Wan2.1 (a video generation model — a neural network trained to produce realistic video frame sequences from text prompts):

NC CLIGen: The Terminal Prototype

This prototype simulates a command-line terminal (the text-based interface developers type commands into). It was trained on asciinema .cast recordings (asciinema is a tool and public hosting site for recorded terminal sessions), from which the team built two distinct training datasets:

- General dataset: 823,989 video streams totalling 1,100 hours, requiring 15,000 H100 GPU hours to train

- Clean dataset: 128,000 curated examples (78,000 regular traces + 50,000 Python math validation traces), requiring 7,000 H100 GPU hours

NC CLIGen achieved 40.77 dB PSNR (Peak Signal-to-Noise Ratio — a measure of pixel rendering accuracy where higher values mean sharper, more faithful output) and 0.989 SSIM (Structural Similarity Index — how visually similar two images are, where 1.0 is a perfect match). For rendering what a terminal looks like, these are genuinely strong numbers.
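
For readers unfamiliar with PSNR, the sketch below computes it from the standard definition, 10·log10(MAX² / MSE). It is a minimal illustration on tiny hand-written grayscale images, assuming 8-bit pixels (MAX = 255); the paper's exact evaluation pipeline is not reproduced here.

```python
import math


def psnr(reference, rendered, max_value=255.0):
    """PSNR in dB between two equally sized grayscale images given as nested lists."""
    ref = [p for row in reference for p in row]
    out = [p for row in rendered for p in row]
    if len(ref) != len(out):
        raise ValueError("images must have the same number of pixels")
    mse = sum((a - b) ** 2 for a, b in zip(ref, out)) / len(ref)
    if mse == 0:
        return math.inf  # identical images have unbounded PSNR
    return 10.0 * math.log10(max_value ** 2 / mse)


reference = [[0, 128], [255, 64]]
rendered = [[0, 130], [253, 64]]  # two pixels are off by 2 gray levels
score = psnr(reference, rendered)
print(f"{score:.2f} dB")
```

Under the same 8-bit assumption, a PSNR of 40.77 dB corresponds to a root-mean-square error of roughly 2.3 gray levels out of 255.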

But on arithmetic probe tasks (basic math questions inserted during evaluation), NC CLIGen scored only 4% accuracy without help. For context: Sora-2 (OpenAI's video generation model) scores 71% on the same test. Wan2.1, the base model this was built on, scored 0%.

The team discovered a revealing trick: spelling out the correct answer explicitly in the prompt raises accuracy to 83%, with zero changes to the model itself. Their conclusion: the model is "a strong renderer but not a native reasoner." It faithfully reproduces what you show it but doesn't derive answers internally through symbolic logic.

NC GUIWorld: The Desktop Prototype

The second prototype simulates a full graphical desktop at 1024×768 resolution on Ubuntu 22.04 with XFCE4 (a lightweight Linux desktop environment), running at 15 frames per second. Training consumed approximately 23,000 GPU hours across 64 GPUs over ~15 days per full training run.
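
The training figures are internally consistent, as a quick check shows. This assumes all 64 GPUs ran continuously for the full 15 days.

```python
gpus = 64
days = 15
hours_per_day = 24

gpu_hours = gpus * days * hours_per_day
print(gpu_hours)  # close to the reported "approximately 23,000"
```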

The headline result: 98.7% cursor accuracy using SVG mask/reference conditioning (a technique that feeds the model visual outlines of screen objects so it knows what to target) versus only 8.7% with coordinate-only supervision (simply specifying numeric X/Y pixel positions). The lesson is stark: in this setup, showing the model what to click yielded roughly 11× higher cursor accuracy than telling it where to click.
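
The difference between the two conditioning signals can be illustrated with a toy example. This is not the paper's SVG pipeline: `coordinate_signal` and `mask_signal` are hypothetical helpers, and the square "cursor" is a placeholder shape. The sketch only shows that a mask lives in the same pixel grid the model has to render, while a coordinate pair is two abstract numbers the model must learn to map onto that grid.

```python
def coordinate_signal(x, y, width, height):
    """Coordinate-only conditioning: two normalized numbers."""
    return (x / width, y / height)


def mask_signal(x, y, width, height, radius=1):
    """Mask-style conditioning: a binary image marking the pixels near the cursor."""
    return [
        [1 if abs(col - x) <= radius and abs(row - y) <= radius else 0
         for col in range(width)]
        for row in range(height)
    ]


coords = coordinate_signal(3, 2, width=8, height=6)
mask = mask_signal(3, 2, width=8, height=6)
marked = sum(sum(row) for row in mask)  # number of pixels flagged as "cursor"

for row in mask:
    print("".join("#" if v else "." for v in row))
```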

110 Curated Hours Beat 1,400 Random Ones — Data Quality in AI Automation Training

The most consequential finding from NC GUIWorld wasn't an accuracy score — it was about training data strategy. The team trained on three categories of behavioral data and measured results using FVD (Fréchet Video Distance — a metric for how realistic and temporally consistent generated video is; lower is better):

- Goal-directed trajectories from Claude CUA (Claude navigating a real desktop to complete specific tasks): 110 hours → FVD 14.72

- Random Fast exploration (automated random mouse movements and keystrokes): 400 hours → FVD 20.37

- Random Slow exploration: 1,000 hours → FVD 48.17

The 110-hour curated dataset outperformed 1,400 hours of random data combined. The research team's conclusion was direct: "Data quality proved as consequential as architecture." More GPU hours, more raw video — none of it matters if the actions in the data don't mean anything.
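
FVD is a Fréchet distance between the feature statistics of real and generated videos. The sketch below shows only the distance itself, under a simplifying assumption labeled in the code: covariances are treated as diagonal, which reduces the general formula to a per-dimension sum. Real FVD uses features from a pretrained video network and full covariance matrices, so the function name and toy feature vectors here are illustrative only.

```python
import math
from statistics import fmean, pvariance


def frechet_distance_diag(features_a, features_b):
    """Squared Fréchet distance between two feature sets.

    Simplifying assumption: diagonal covariances, so the distance becomes
    sum over dimensions of (mean_a - mean_b)^2 + (std_a - std_b)^2.
    """
    dims = len(features_a[0])
    total = 0.0
    for d in range(dims):
        col_a = [f[d] for f in features_a]
        col_b = [f[d] for f in features_b]
        mean_a, mean_b = fmean(col_a), fmean(col_b)
        std_a = math.sqrt(pvariance(col_a))
        std_b = math.sqrt(pvariance(col_b))
        total += (mean_a - mean_b) ** 2 + (std_a - std_b) ** 2
    return total


real = [[0.0, 1.0], [2.0, 3.0]]
shifted = [[1.0, 1.0], [3.0, 3.0]]  # same spread, first feature shifted by 1

same = frechet_distance_diag(real, real)
apart = frechet_distance_diag(real, shifted)
print(same, apart)
```

Lower is better because a smaller distance means the generated videos' feature statistics sit closer to those of real recordings.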

Caption quality drove the same lesson home. Detailed captions averaging 76 words per training example improved PSNR by about 5 dB (from 21.90 dB to 26.89 dB) versus short semantic descriptions. Precise language translates directly to precise pixels: the model needs exact descriptions to render exact visual states.
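
Because PSNR is logarithmic, the caption gain is larger than it may look. Assuming the same peak pixel value in both measurements, the decibel difference converts directly into a ratio of mean squared errors:

```python
short_caption_psnr = 21.90     # dB, from the article
detailed_caption_psnr = 26.89  # dB, from the article

gain_db = detailed_caption_psnr - short_caption_psnr
# PSNR = 10 * log10(MAX^2 / MSE), so a gain of g dB divides MSE by 10^(g/10).
mse_reduction = 10 ** (gain_db / 10)
print(f"{gain_db:.2f} dB gain, MSE reduced about {mse_reduction:.1f}x")
```

In other words, the detailed captions cut mean squared pixel error to roughly a third of its previous value.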

Training also hit a natural ceiling at 25,000 training steps. Extending runs to 460,000 steps produced no meaningful further gains. Once a model has extracted all available signal from its dataset, additional compute becomes waste.

The Four Problems Between AI Automation and a True Neural Computer OS

The research team defines the ultimate target — a Completely Neural Computer (CNC) — as requiring four simultaneous conditions: Turing complete (capable of running any computable function), universally programmable, behavior-consistent unless explicitly changed, and exhibiting native machine-level semantics (understanding its own structure the way a CPU understands its instruction set). Current prototypes satisfy none of these four fully.

Specific unsolved gaps, stated honestly in the paper:

- Symbolic computation: 4% arithmetic accuracy unaided. Sora-2 scores 71%. Closing this gap is the single largest obstacle to practical use.

- Routine reuse: The model can't reliably persist and recall learned procedures. There's no concept of "subroutine" yet — each session starts fresh.

- Execution consistency: Multiple runs of the same task produce different outputs. Reproducibility — foundational to any real computer — remains unsolved.

- Long-horizon task coherence: Behavior degrades over extended sequences. The model drifts from its original intent as task complexity accumulates.
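
The execution-consistency gap is the easiest of these to picture as a test. The sketch below is a generic reproducibility check, not anything from the paper: `count_distinct_outputs`, `deterministic_task`, and `noisy_task` are hypothetical names, and the two tasks are stand-ins for "run the same prompt and actions through the model again". Byte-exact hashing is also an assumption; for generated video, a tolerance-based comparison might be more appropriate.

```python
import hashlib
import random


def count_distinct_outputs(run_task, runs=5):
    """Run the same task several times and count how many distinct outputs appear."""
    digests = set()
    for _ in range(runs):
        output = run_task()  # expected to return bytes
        digests.add(hashlib.sha256(output).hexdigest())
    return len(digests)


def deterministic_task():
    # Stand-in for a conventional program: same input, same bytes out.
    return b"frame-bytes"


def noisy_task():
    # Stand-in for a sampled generative model: output varies run to run.
    return bytes(random.randrange(256) for _ in range(16))


stable = count_distinct_outputs(deterministic_task)
unstable = count_distinct_outputs(noisy_task)
print(stable, unstable)
```

A conventional computer is expected to score 1 on a check like this; the article's point is that the current prototypes do not.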

Both prototypes were also evaluated in open-loop mode (replaying pre-recorded prompts and actions, not live interaction). That's a significant asterisk: real-world performance with live environments is uncharted. The research team estimates 3–5 years before these gaps close enough for production viability.

Run MolmoAct Today: Depth-Aware Vision You Can Try Now

While Neural Computers remain research-only, a complementary model is already available: MolmoAct-7B-D-0812 — a multimodal model (an AI that processes both images and text simultaneously) supporting depth-aware spatial reasoning, visual trajectory tracing (predicting step-by-step paths a robot arm should follow through a 3D scene), and robotic action prediction from plain-language instructions.

```shell
# Install core dependencies
# (quote the version spec so the shell does not treat ">" as an output redirect)
pip install "torch>=2.0.0" torchvision
pip install transformers==4.52 accelerate einops Pillow numpy
```

```python
# Load MolmoAct
from transformers import AutoModelForCausalLM, AutoProcessor

model_name = "allenai/MolmoAct-7B-D-0812"

# If loading fails, check the model card: the repository may require
# trust_remote_code=True or a different Auto class.
model = AutoModelForCausalLM.from_pretrained(
    model_name,
    torch_dtype="bfloat16",  # 16-bit floating-point format, halves memory vs. full precision
    device_map="auto",       # auto-assigns model layers to available GPU memory
)

processor = AutoProcessor.from_pretrained(model_name)
```

Hardware: a single RTX 3090 with 24 GB VRAM handles MolmoAct well. For full NC GUIWorld-style inference, you'd need 64+ GB VRAM across multiple GPUs.
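
The 24 GB figure lines up with a back-of-the-envelope estimate of weight memory. This assumes roughly 7 billion parameters (inferred from the "7B" in the model name, not a verified count) stored in bfloat16 at 2 bytes each, and it ignores activations and other runtime overhead.

```python
params = 7e9             # assumption: ~7 billion parameters, per the "7B" in the name
bytes_per_param = 2      # bfloat16 uses 16 bits per value
vram_gib = 24            # RTX 3090

weights_gib = params * bytes_per_param / 2**30
fits = weights_gib < vram_gib
print(f"weights: about {weights_gib:.1f} GiB, fits in {vram_gib} GiB: {fits}")
```

The remaining headroom is what the image inputs, activations, and generation cache have to fit into.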

The full Neural Computers paper is at arxiv.org/abs/2604.06425. The gap between 98.7% cursor accuracy and 4% arithmetic isn't an embarrassment; it's the most honest map yet of what AI must solve before it can truly replace the software stack underneath it. Watch for follow-up work on symbolic reasoning. That's the unlock.
