AI Energy Cost: ChatGPT Uses 10× More Power Per Query

ChatGPT uses 10× more electricity than Google search. AI data centers could consume 9% of US power by 2030—but energy regulations are stuck in 2015.

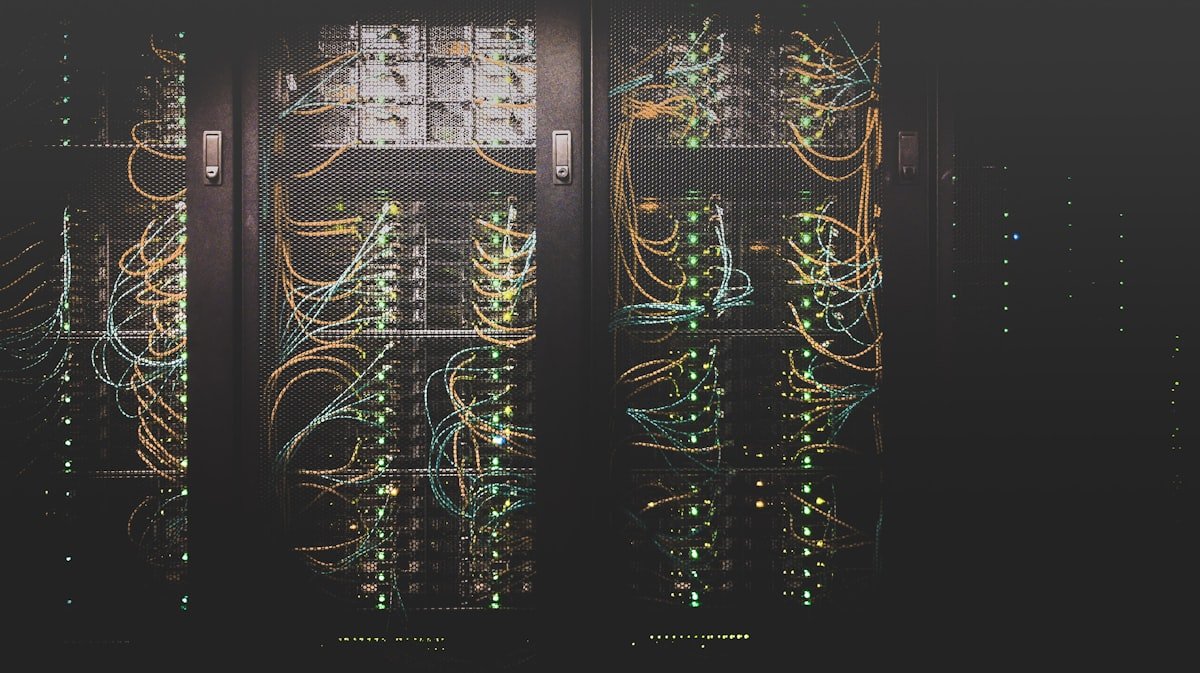

The next time you ask ChatGPT a question, something happens that isn't visible on your screen: a warehouse-sized building full of specialized computer chips spins up to answer you — using roughly 10 times more electricity than a standard Google search. Multiply that by hundreds of millions of daily queries, and the math starts to matter for your power grid — and eventually, your electricity bill. AI energy consumption at this scale is outpacing the infrastructure and regulations designed to manage it.

Policy researchers at the Brookings Institution are now tracking what they call a critical gap: AI companies are expanding at record speed, but the regulations governing how that electricity gets supplied — and who pays when it runs short — were written for a different era. That gap is widening fast.

The AI Energy Cost Nobody Talks About

When people calculate the cost of AI, they usually add up subscription fees: $20/month for ChatGPT, $10/month for Copilot, as much as $200/month for Claude's top-tier plan. The electricity that powers every one of those queries doesn't show up on the invoice, but it's accumulating somewhere.

Here's what the numbers actually look like:

- A single ChatGPT query uses approximately 0.001 to 0.01 kWh (1 to 10 watt-hours) of electricity, an estimated 6 to 10 times more than a standard Google search

- OpenAI reportedly requires more than 1 gigawatt of power to run its operations — enough to supply roughly 750,000 American homes simultaneously

- Goldman Sachs projected that power demand from data centers (large buildings that house computer servers) will grow 160% by 2030, driven primarily by AI workloads

- The International Energy Agency (IEA) estimates data centers could consume 9% of total US electricity by 2030, up from roughly 2.5% today

- AI server racks (equipment cabinets holding dozens of specialized chips) now draw 80–100 kilowatts each — far above the 5–10 kilowatts typical of traditional office server rooms

To ground that in something familiar: the average American refrigerator uses about 400 kWh of energy per year. An operation drawing the 1 gigawatt cited above consumes that much energy in under two seconds, and it keeps doing so continuously, day and night.
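The figures above can be checked with a few lines of arithmetic. The sketch below uses only numbers quoted in this article, plus one labeled assumption: `DAILY_QUERIES` is an illustrative stand-in for "hundreds of millions of daily queries", not a reported figure.

```python
# Back-of-envelope arithmetic using figures quoted in the article.
KWH_PER_QUERY_RANGE = (0.001, 0.01)  # kWh per ChatGPT query (article's range)
FRIDGE_KWH_PER_YEAR = 400            # average US refrigerator (article)
FACILITY_POWER_KW = 1_000_000        # 1 GW, the reported OpenAI requirement
HOMES_SUPPLIED = 750_000             # homes the article says 1 GW can supply

# ASSUMPTION: illustrative volume; the article only says "hundreds of millions".
DAILY_QUERIES = 300_000_000


def daily_energy_mwh(queries: int, kwh_per_query: float) -> float:
    """Total daily energy in MWh for a given query volume."""
    return queries * kwh_per_query / 1000


def seconds_to_consume(energy_kwh: float, power_kw: float) -> float:
    """Seconds a constant load of power_kw needs to use energy_kwh."""
    return energy_kwh / power_kw * 3600


low, high = (daily_energy_mwh(DAILY_QUERIES, k) for k in KWH_PER_QUERY_RANGE)
fridge_seconds = seconds_to_consume(FRIDGE_KWH_PER_YEAR, FACILITY_POWER_KW)
kw_per_home = FACILITY_POWER_KW / HOMES_SUPPLIED
demand_multiplier = 1 + 160 / 100  # "grow 160%" means 2.6x the starting level
share_multiplier = 9 / 2.5         # projected share of US electricity vs. today

print(f"Daily query energy: {low:,.0f} to {high:,.0f} MWh")
print(f"1 GW uses a fridge-year of energy in {fridge_seconds:.2f} s")
print(f"Implied average draw per home: {kw_per_home:.2f} kW")
print(f"Demand multiplier: {demand_multiplier:.1f}x, share multiplier: {share_multiplier:.1f}x")
```

Note the gap between the two ends of the per-query range: the daily total differs by a factor of ten depending on which estimate is used, which is one reason published per-query figures should be treated as rough.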

The Power Grid Wasn't Designed for Always-On AI Data Centers

America's electrical grid (the interconnected network of power plants, transmission lines, and substations that delivers electricity to homes and businesses) was largely planned in the mid-20th century. It was sized for factories, office buildings, and air conditioners — not for facilities that demand enormous, constant power loads around the clock.

The mismatch goes beyond just higher total demand. AI data centers create a fundamentally different kind of electricity load:

- Always-on: AI inference (the process of running a trained model to answer user questions in real time) operates 24 hours a day, 7 days a week — unlike factories that slow down at night

- Clustered: Northern Virginia alone hosts approximately 35% of global data center capacity, concentrating enormous demand in a single transmission zone

- Power-dense: Next-generation AI chips require specialized liquid cooling and power conversion infrastructure that traditional data centers never needed

- Unpredictable at scale: Grid operators (organizations responsible for balancing electricity supply and demand in real time) had no models for AI load growth when they made their 5-year capacity plans

Grid operators like PJM Interconnection, which coordinates electricity across all or parts of 13 mid-Atlantic and Midwest states plus the District of Columbia and serves about 65 million people, have already flagged AI-driven demand as a reliability risk in their planning documents. In plain terms: too many data centers coming online too fast could, in a worst-case scenario, cause supply shortfalls in regions where AI infrastructure is concentrated.
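One rough way to quantify the "always-on" difference is load factor: average demand divided by peak demand. The sketch below compares two made-up 24-hour profiles; the factory and data center numbers are illustrative assumptions, not measurements from any real facility.

```python
def load_factor(hourly_kw: list[float]) -> float:
    """Average demand divided by peak demand over the period."""
    if not hourly_kw:
        raise ValueError("need at least one hourly reading")
    return sum(hourly_kw) / len(hourly_kw) / max(hourly_kw)


# ASSUMPTION: both 24-hour profiles are invented for illustration.
# A factory idling at 200 kW overnight and running a 1,000 kW day shift.
factory = [200.0] * 8 + [1000.0] * 10 + [200.0] * 6
# An AI data center drawing a flat 1,000 kW around the clock.
data_center = [1000.0] * 24

for name, profile in (("factory", factory), ("data center", data_center)):
    energy_kwh = sum(profile)  # one reading per hour, so kW values sum to kWh
    print(f"{name}: load factor {load_factor(profile):.2f}, {energy_kwh:,.0f} kWh/day")
```

Both sites have the same 1,000 kW peak, so they need the same grid connection, but in this example the flat profile consumes nearly twice the energy per day. That is the pattern a grid sized around overnight lulls was not planned for.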

Energy Regulations Haven't Caught Up With AI Demand

The core problem that Brookings researchers have identified isn't that the grid can't adapt — it's that the rules governing how it adapts haven't kept pace. Three specific gaps are most urgent:

Interconnection queues stretching 3–7 years: Any new power source — nuclear, solar, or natural gas — must wait in an interconnection queue (a waiting list to connect a new generator to the public power grid) that currently stretches 3–7 years in most US regions. AI companies need power now, not in 2031.

Rate structures built for predictable loads: Electricity pricing was designed for usage patterns that don't shift dramatically hour to hour. AI data centers, which can ramp their power draw up or down rapidly as workloads shift, don't fit these models. The result is pricing that neither incentivizes efficiency nor fairly distributes costs across all electricity customers on the same regional grid.

No AI-specific emissions accounting: The carbon footprint (climate-warming greenhouse gas emissions) of a ChatGPT conversation isn't tracked or regulated under any existing EPA framework. The agency's current reporting requirements were written before generative AI (AI systems that produce text, images, or code on demand) was a relevant commercial category.

FERC (the Federal Energy Regulatory Commission — the US agency responsible for overseeing electricity markets and transmission infrastructure) has opened preliminary proceedings on data center power demands. But formal rulemaking typically takes 2–4 years from initiation to enforcement — a timeline that bears no relationship to how fast AI infrastructure is being built right now.

Big Tech's Private AI Power Fix — and Why It Doesn't Scale

Facing regulatory gridlock and grid capacity constraints, the largest AI companies have begun securing their own dedicated power sources outside the public electricity system entirely:

- Microsoft signed a 20-year power purchase agreement with Constellation Energy that will restart Unit 1 of the Three Mile Island nuclear plant in Pennsylvania, securing 835 megawatts of reliable power for its AI data center operations

- Google committed to purchasing electricity from small modular reactors (compact nuclear plants designed to fit in a single building, rather than spanning a city block) from Kairos Power

- Amazon acquired a nuclear-powered data center in Pennsylvania, adding 960 megawatts of carbon-free power to its AI infrastructure portfolio

These deals make business sense for trillion-dollar companies. They don't help the mid-size businesses, universities, hospitals, and government agencies that also run AI workloads but can't negotiate private nuclear contracts. And they don't help the communities surrounding data centers that absorb the infrastructure costs — new substations, upgraded transmission lines, and higher base electricity rates for every customer sharing the same regional grid.

Brookings researchers tracking AI's energy implications point to this as a growing equity issue: the largest AI players are solving their power problems privately, while the public infrastructure they ultimately depend on for resilience and backup remains underfunded and underregulated — with costs quietly distributed to everyone else.

Track Your AI Energy Consumption Before Regulations Arrive

The policy changes are coming, even if slowly. Several regulatory developments expected in the next 12–18 months will determine how AI energy costs get allocated — and who ends up paying:

- FERC data center rulemaking: New interconnection and cost-allocation rules expected in late 2026 will determine whether large AI customers pay more for grid access, or whether those costs stay distributed across all ratepayers

- State-level load forecasting mandates: Virginia, Texas, and Georgia are advancing requirements for utilities to include AI growth scenarios in long-term infrastructure plans

- EPA reporting expansion: A potential update under the agency's existing Clean Air Act authority (the Clean Power Plan itself was repealed in 2019) could require AI companies to disclose per-query carbon data for the first time, making the environmental cost of AI visible to consumers and regulators alike

You don't have to wait for regulators to act. If your team uses Microsoft Azure, Google Cloud, or AWS for AI workloads, each platform offers a carbon footprint tool that reports the estimated emissions tied to your usage, usually with a reporting lag rather than in real time, and it often goes unused. Starting to track your AI energy use now gives you a baseline before reporting requirements land. Explore more efficient AI automation workflows that cut both operational cost and energy draw without sacrificing output; the two problems are more connected than most teams realize.
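Until a provider's figures are wired into your reporting, a rough baseline can be built from query counts alone. The sketch below is one way to do that, not a standard method: it applies the per-query range quoted earlier in this article, which was estimated for ChatGPT and may not match your own workloads. The `usage_log` counts and the `GRID_KG_CO2E_PER_KWH` value are placeholder assumptions to replace with your own data.

```python
from dataclasses import dataclass

# Per-query energy range quoted earlier in the article (kWh).
KWH_PER_QUERY_LOW = 0.001
KWH_PER_QUERY_HIGH = 0.01

# ASSUMPTION: placeholder grid carbon intensity in kg CO2e per kWh.
# Replace with the published figure for your region or provider.
GRID_KG_CO2E_PER_KWH = 0.4


@dataclass(frozen=True)
class MonthlyBaseline:
    month: str
    queries: int
    kwh_low: float
    kwh_high: float
    kg_co2e_low: float
    kg_co2e_high: float


def estimate_month(month: str, queries: int,
                   kg_co2e_per_kwh: float = GRID_KG_CO2E_PER_KWH) -> MonthlyBaseline:
    """Estimate a low/high energy and emissions range for one month of queries."""
    if queries < 0:
        raise ValueError("queries must be non-negative")
    kwh_low = queries * KWH_PER_QUERY_LOW
    kwh_high = queries * KWH_PER_QUERY_HIGH
    return MonthlyBaseline(month, queries, kwh_low, kwh_high,
                           kwh_low * kg_co2e_per_kwh, kwh_high * kg_co2e_per_kwh)


# ASSUMPTION: hypothetical monthly query counts taken from your own usage logs.
usage_log = {"2025-01": 40_000, "2025-02": 55_000}

for month, queries in usage_log.items():
    b = estimate_month(month, queries)
    print(f"{b.month}: {b.kwh_low:.0f}-{b.kwh_high:.0f} kWh, "
          f"{b.kg_co2e_low:.0f}-{b.kg_co2e_high:.0f} kg CO2e")
```

Reporting a range rather than a single number keeps the uncertainty in the per-query estimate visible, and the month-over-month trend stays meaningful even if the absolute values are later revised.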
