Google AI Adoption: Hassabis Calls Viral Post 'Clickbait'

Demis Hassabis called Yegge's Google AI adoption post 'clickbait.' But 40K engineers use agentic coding weekly. Which side is right?

Google AI adoption became a public flashpoint last week. Steve Yegge — a veteran engineer who spent years at both Google and Amazon — posted a claim that hit a nerve: Google's internal AI adoption looks exactly like John Deere's. The data, if accurate, suggests the world's leading AI company is not leading its own employees. Twenty percent are agentic power users (engineers who let AI write, run, and fix code autonomously), 60% still rely on tools like Cursor (an AI-powered code editor), and 20% refuse AI entirely. Google's leadership responded the same day — and did not hold back.

Demis Hassabis, Google DeepMind's CEO, called the post "completely false and just pure clickbait." Addy Osmani, a principal engineering leader at Google, fired back with a concrete counter-number: over 40,000 software engineers use agentic coding (AI that autonomously plans, writes, and tests code) every single week. Both figures may be accurate. They measure different things, and that gap is the actual story.

The Google AI Adoption Claim That Lit Up Tech

Yegge's argument wasn't that Google ignores AI. It was that the distribution of adoption — how employees split between heavy, casual, and non-users — mirrors every other major organization in the industry, including ones with no particular reason to lead on AI:

- 20% — full agentic users: AI writes and deploys code with minimal human checkpoints

- 60% — casual users: chat tools like Cursor, humans in the loop for every decision

- 20% — outright refusers who don't use AI tools at all

What made this land like a grenade was the specific comparison: Yegge named John Deere — a 180-year-old agricultural equipment manufacturer — as having the same internal distribution as the company that built Gemini. He also pointed to an 18-plus month hiring freeze as a structural cause: without new engineers arriving from companies where AI-first workflows were standard, Google had no outside mirror to show how far its internal culture had drifted.

Yegge posted the original observation on Twitter, writing: "Most of the industry has the same internal adoption curve: 20% agentic power users, 20% outright refusers, 60% still using Cursor or equivalent chat tool. It turns out Google has this curve too." That observation, together with the comparison of the company behind Gemini to a tractor brand, drew a CEO-level rebuttal within hours.

Google's Leadership Fires Back on AI Adoption — Publicly

Addy Osmani posted a direct counter on the same day:

"Over 40K SWEs use agentic coding weekly here. Googlers have access to our own versions of Antigravity, Gemini CLI, custom models, skills, CLIs and MCPs for our daily work."

— Addy Osmani, Google

Demis Hassabis, Google DeepMind's CEO, was more pointed:

"Maybe tell your buddy to do some actual work and to stop spreading absolute nonsense. This post is completely false and just pure clickbait."

— Demis Hassabis, CEO Google DeepMind

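Here is the detail worth unpacking: Osmani's 40,000 is a raw count, not a proportion, and neither post supplied a denominator. Alphabet's recent annual reports put its total workforce below 200,000 people, so the engineering population is smaller still, though the company does not break that number out. If the relevant population were 200,000, 40,000 would be 20%, which is precisely what Yegge claimed for the power-user tier; a smaller population would put the share higher. Weekly use of an agentic tool may also be a looser bar than Yegge's "power user." Yegge was not denying that 40,000 users exist. He was arguing that the 60%/20% split below the top tier was still the dominant reality. Both sides may be right. They are just answering different questions.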
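How much the 40,000 figure says about adoption depends on the headcount it is divided by. A minimal sketch of that arithmetic follows; the 40,000 comes from Osmani's post, while the candidate engineering headcounts are purely illustrative assumptions, not reported numbers.

```python
# Weekly agentic users, as stated in Osmani's post.
AGENTIC_USERS = 40_000


def agentic_share(engineer_headcount: int) -> float:
    """Return agentic users as a fraction of an assumed engineering headcount."""
    if engineer_headcount <= 0:
        raise ValueError("headcount must be positive")
    return AGENTIC_USERS / engineer_headcount


# Hypothetical denominators; neither post stated an engineer count.
for headcount in (80_000, 120_000, 200_000):
    print(f"{headcount:>7,} engineers -> {agentic_share(headcount):.0%} agentic")
```

The same raw count reads as 20% of 200,000 engineers but 50% of 80,000, which is why a count alone cannot confirm or refute a percentage split.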


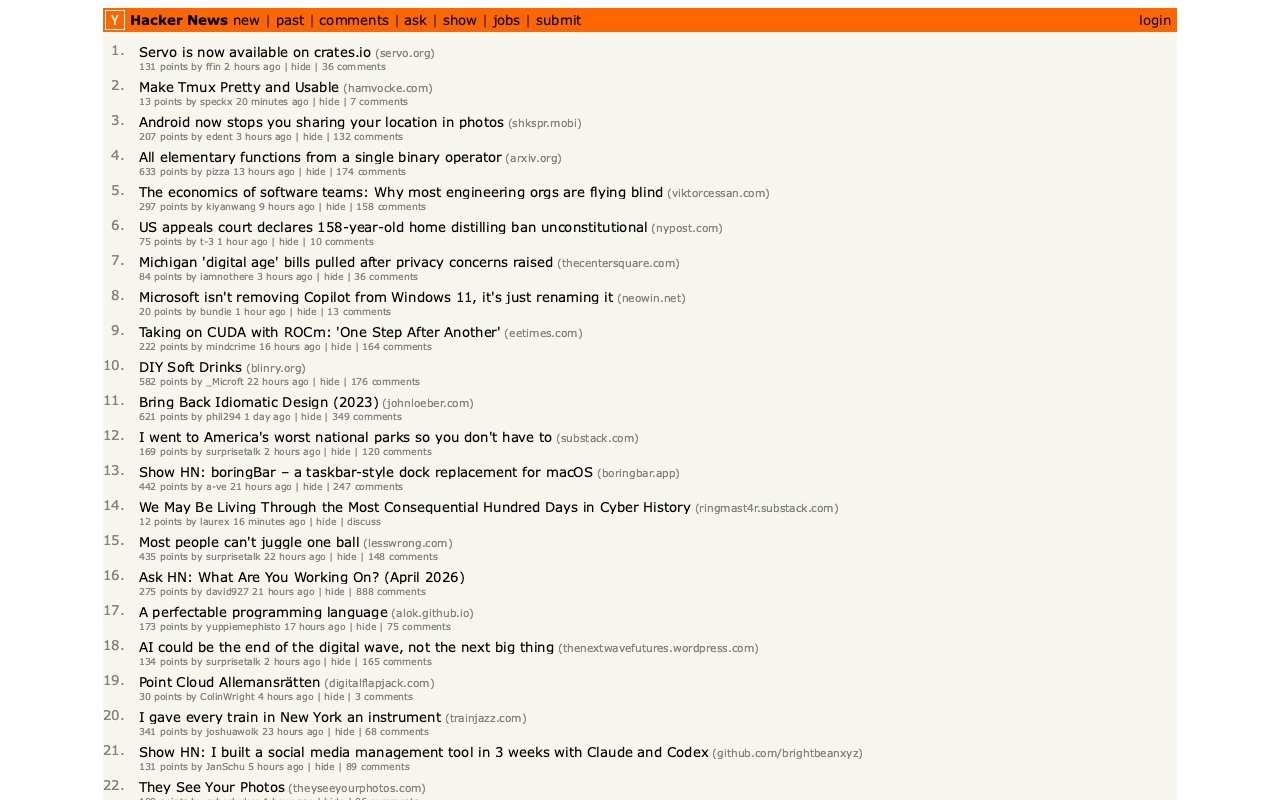

This screenshot — the Hacker News homepage rendered accurately by a command-line tool — was built in a few hours by developer Simon Willison using Claude Code and the new Servo browser engine crate (a crate is a Rust library package, similar to an npm module for JavaScript). He ran the entire project from his phone. It is a concrete illustration of what real agentic use looks like: not asking AI for code suggestions, but giving it a goal and receiving a working tool.

AI Security Just Became a Token-Spending Arms Race

While the Google debate played out on social media, a separate development may carry longer-term consequences: the UK's AI Security Institute (formerly the AI Safety Institute) published an independent evaluation of Claude Mythos, Anthropic's cybersecurity-specialized AI model, and the findings reframe how security economics work for every organization that runs software.

Technology analyst Drew Breunig captured the core implication directly:

"If Mythos continues to find exploits so long as you keep throwing money at it, security is reduced to a brutally simple equation: to harden a system you need to spend more tokens discovering exploits than attackers will spend exploiting them."

— Drew Breunig

A "token" is the unit of text an AI model processes, roughly a word or word fragment, and every token sent or received is billed. The implication: AI has converted cybersecurity into a proof-of-work-style arms race (a competition where the winner is whoever can sustain the higher computational spend). Defenders must now run AI against their own systems continuously, finding vulnerabilities before an attacker buys the same capability on the open market.
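Breunig's equation can be sketched as a comparison of how many tokens each side's budget buys. The budgets and per-million-token prices below are hypothetical placeholders chosen for illustration; they are not figures from the evaluation or from any vendor's price list.

```python
def tokens_bought(budget_usd: float, usd_per_million_tokens: float) -> float:
    """Convert a dollar budget into the number of tokens it buys."""
    if usd_per_million_tokens <= 0:
        raise ValueError("price must be positive")
    return budget_usd / usd_per_million_tokens * 1_000_000


def defender_ahead(defender_usd: float, attacker_usd: float,
                   defender_price: float, attacker_price: float) -> bool:
    """True when the defender buys more exploit-discovery tokens than the attacker."""
    defender_tokens = tokens_bought(defender_usd, defender_price)
    attacker_tokens = tokens_bought(attacker_usd, attacker_price)
    return defender_tokens > attacker_tokens


# Hypothetical case 1: both sides pay an assumed $10 per million tokens.
print(defender_ahead(50_000, 20_000, 10.0, 10.0))

# Hypothetical case 2: the attacker finds a cheaper model at $2 per million tokens.
print(defender_ahead(50_000, 20_000, 10.0, 2.0))
```

When both sides pay the same price, the comparison collapses to a plain budget comparison. The token framing matters once prices or model access differ, because a smaller budget can then buy more search.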

OpenAI entered the space simultaneously with GPT-5.4-Cyber, a model variant fine-tuned for defensive cybersecurity, and a "Trusted Access for Cyber" program (launched February 2026) requiring government photo ID verification via Persona (a digital identity verification service). The two approaches differ on evaluation, identity verification, and access:

| Feature | Claude Mythos (Anthropic) | GPT-5.4-Cyber (OpenAI) |
|---|---|---|
| Independent third-party evaluation | ✓ Published by the UK AI Security Institute | ✗ Not published |
| Identity verification | Project Glasswing application form | Government photo ID scan via Persona |
| Access model | Reviewed application | Self-service + premium gate |

One underreported consequence of the token-spend security model: open source libraries just became significantly more valuable. When a developer runs an AI vulnerability scan (an automated AI-powered search for security flaws) on a widely used open source library, every user of that library benefits from the result, so the cost of the audit is effectively spread across all of them. A closed-source codebase bears the full cost alone. The economics of shared security review received a structural boost that the "vibe-code everything" camp has not yet fully accounted for.
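The shared-cost argument comes down to simple division. In this rough sketch the audit cost and the project counts are invented, and serve only to show the shape of the effect. In practice one party may pay for the whole scan; the per-project figure is the effective cost once the findings are shared.

```python
def audit_cost_per_project(audit_cost_usd: float, dependent_projects: int) -> float:
    """Spread one audit's cost across every project that benefits from it."""
    if dependent_projects < 1:
        raise ValueError("at least one project must depend on the code")
    return audit_cost_usd / dependent_projects


AUDIT_COST_USD = 100_000  # hypothetical cost of one AI vulnerability scan

# A closed-source codebase has a single beneficiary.
print(audit_cost_per_project(AUDIT_COST_USD, 1))

# A popular open source library might have thousands (hypothetical count).
print(audit_cost_per_project(AUDIT_COST_USD, 5_000))
```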

One Expert's Case Against Vibe Coding and AI Writing Everything

Bryan Cantrill — co-founder of Oxide Computer and a respected voice in systems engineering — published a counterargument to the general AI optimism this week. His concern is not that AI produces bad code. It is that AI produces far too much code and has no structural incentive to minimize:

"The problem is that LLMs inherently lack the virtue of laziness. Work costs nothing to an LLM. LLMs do not feel a need to optimize for their own (or anyone's) future time, and will happily dump more and more onto a layercake of garbage."

— Bryan Cantrill

An LLM (large language model — the AI system powering tools like Claude Code and ChatGPT) has no incentive to write minimal, maintainable code. Human engineers are "lazy" in the productive sense: they resist writing code they do not need to own. AI has no such constraint. Cantrill's argument is that the result is proliferating abstraction layers — software that technically works but that no experienced engineer would have chosen to write.

This connects back to the Yegge/Google debate. The 60% of engineers still using chat tools — rather than full autonomous agents — may partly be making a deliberate choice: staying in the loop precisely because they have seen what happens when they step back entirely. Whether that caution is wisdom or inertia likely depends on which 20% tier you have already decided to join.

AI Automation Tools You Can Run Right Now

Separate from the macro debates, several practical developments shipped this week worth knowing about:

- Servo browser engine hit crates.io — Servo (a high-performance browser engine written in Rust) is now available as a standard library package at crates.io/crates/servo, meaning Rust developers can embed a full web renderer in their apps without building from source. This is the first time Servo has been usable as an ordinary library.

- Claude Code built servo-shot in hours — Simon Willison used Claude Code on his phone to build servo-shot, a CLI screenshot tool using the Servo crate. It rendered Hacker News with accurate HTML and CSS parsing on the first run.

- SQLite 3.53.0 shipped — The embedded database used in nearly every mobile app, browser, and desktop application worldwide added ALTER TABLE support for NOT NULL and CHECK constraints, plus new JSON functions — changes developers had requested for years.

To try servo-shot yourself (requires Rust installed on your machine):

    git clone https://github.com/simonw/research
    cd research/servo-crate-exploration/servo-shot
    cargo build
    ./target/debug/servo-shot https://news.ycombinator.com/

Whether Google's AI adoption curve matches John Deere's or not, the tools are real, the security economics have shifted, and the gap between how AI is discussed in public and how it is actually deployed inside large organizations is closing, just not as uniformly, or as quickly, as either side of this week's argument wants to admit. Watch the 60% in the middle. That is where the next phase of AI automation adoption will actually be decided.
