Qwen 35B Beats Claude Opus 4.7 — Runs Free on MacBook

Qwen 35B beats Claude Opus 4.7 twice in SVG drawing tests: free, no subscription, runs offline on a MacBook Pro. For this one task, a single 20.9GB download can stand in for a cloud API.

Qwen 35B, a free 20.9GB local AI model from Alibaba, just outperformed Anthropic's flagship Claude Opus 4.7 in two back-to-back SVG drawing tests, running entirely on a MacBook Pro with no cloud subscription required. Simon Willison, the open-source engineer behind the popular Datasette data tool, ran the tests on April 16, 2026 and published all five SVG outputs side by side.

The Benchmark That Was Never Supposed to Work

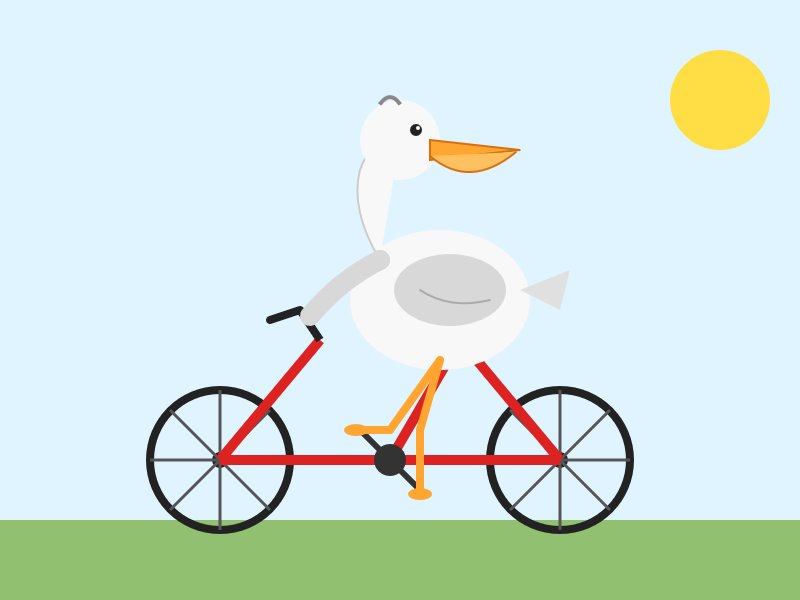

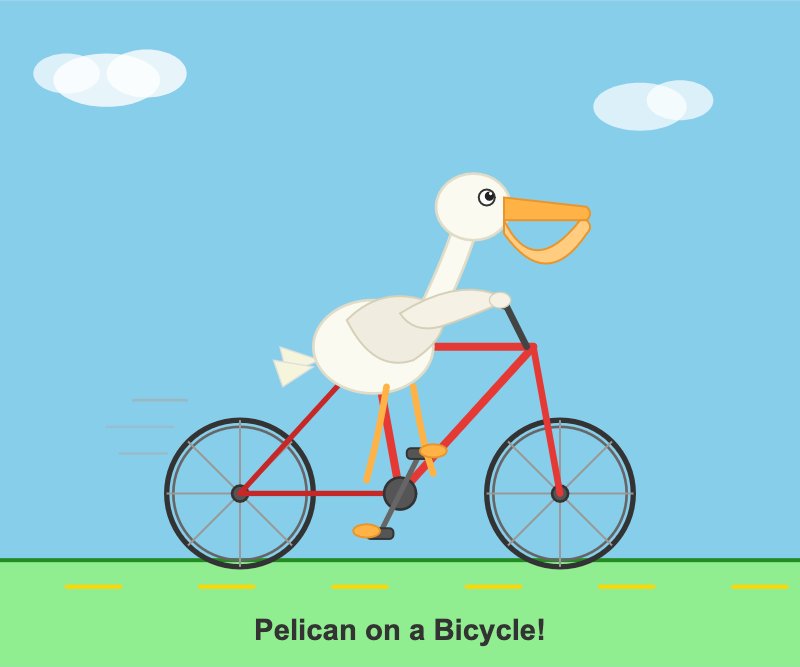

Willison's "pelican test" asks an AI to generate an SVG (Scalable Vector Graphics — an image format described entirely in code, not pixels, that any AI can produce as plain text) of a pelican riding a bicycle. The task is intentionally absurd. Over several years of testing, a pattern emerged: models that drew better pelicans tended to perform better at real-world tasks too.
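To make "an image described entirely in code" concrete, here is a minimal sketch in Python. The drawing is a hand-written placeholder (two wheels and a connecting line), not output from either model; it only shows that an SVG is plain text that a standard XML parser can read.

```python
import xml.etree.ElementTree as ET

# A hand-written placeholder drawing, not model output:
# two wheels joined by a single frame line.
svg_text = """<svg xmlns="http://www.w3.org/2000/svg" viewBox="0 0 200 100">
  <circle cx="50" cy="70" r="25" fill="none" stroke="black"/>
  <circle cx="150" cy="70" r="25" fill="none" stroke="black"/>
  <line x1="50" y1="70" x2="150" y2="70" stroke="black"/>
</svg>"""

# SVG is XML, so the standard library can parse it directly.
root = ET.fromstring(svg_text)

# Tags come back namespace-qualified, e.g. "{http://www.w3.org/2000/svg}circle".
shapes = [child.tag.split("}")[1] for child in root]
print(shapes)  # ['circle', 'circle', 'line']
```

Saving `svg_text` to a file with an `.svg` extension and opening it in a browser renders the drawing, which is how outputs like the pelican images can be viewed.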

"The pelican benchmark has always been meant as a joke," Willison wrote on April 16, 2026. "It's mainly a statement on how obtuse and absurd the task of comparing these models is." He ran it anyway — pitting two very different systems head-to-head:

- Claude Opus 4.7: Anthropic's newest flagship, accessed via cloud API (application programming interface, a remote connection to an AI service over the internet), which requires an account and paid usage

- Qwen3.6-35B-A3B: Alibaba's open-source model with 35 billion total parameters (by Qwen's naming convention, the A3B suffix indicates roughly 3 billion active per token), quantized (compressed to reduce file size with minimal quality loss) down to a 20.9GB file, running locally via LM Studio

Qwen won both rounds.

What the Images Actually Showed

Claude Opus 4.7's pelican had the wrong bicycle frame — a structural error visible at a glance. Even when Willison activated thinking_effort: max (a parameter that instructs Claude to spend extra time reasoning step-by-step before generating output), the frame was still incorrect.

Qwen's pelican had the correct frame — and went further. The SVG output included sunglasses, a bowtie, a cigarette, emojis, and a caption. Willison described Claude's version as "competent but dull" by comparison.

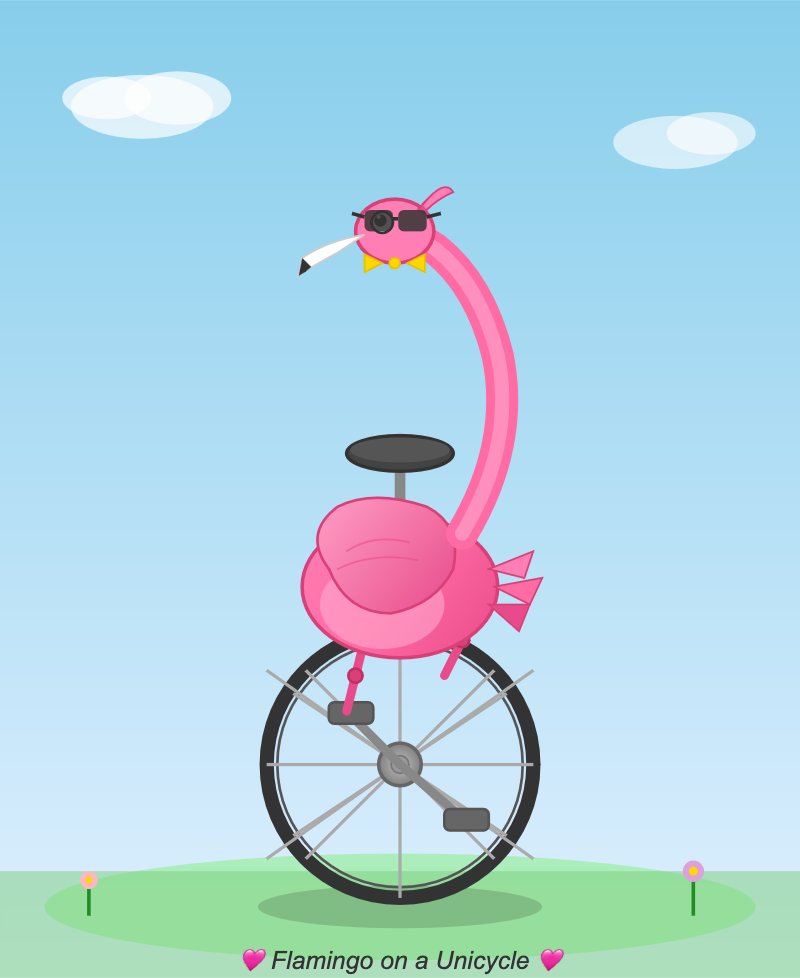

A second prompt — "Generate an SVG of a flamingo riding a unicycle" — produced the same outcome. Qwen's flamingo had character and visual flair. Claude's was technically fine but visually forgettable.

Running Qwen 35B: 35 Billion Parameters on a Laptop

The model Willison tested is a quantized build from Alibaba's Qwen 3.6 series. Quantization works like compressing a photograph to JPEG format: the file shrinks dramatically while retaining most of its quality. The specific file is Qwen3.6-35B-A3B-UD-Q4_K_S.gguf, which weighs 20.9GB. The .gguf extension identifies GGUF, a single-file container format for quantized language models (large AI systems trained on billions of text and code samples). GGUF files are designed to run efficiently on consumer hardware, with no cloud access and little setup beyond a compatible app such as LM Studio.
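A rough back-of-the-envelope calculation shows how much compression the 20.9GB figure implies. This is a sketch under stated assumptions: the file size is taken as decimal gigabytes, the parameter count is the nominal 35 billion from the model name, and the unquantized weights are assumed to use 16 bits each. The file also holds metadata, so the true per-weight figure is slightly lower.

```python
FILE_SIZE_GB = 20.9   # from the article; assumed to mean 10**9 bytes per GB
PARAMETERS = 35e9     # nominal count from the model name; the exact figure may differ


def bits_per_weight(file_size_gb, parameters):
    """Average number of bits stored per parameter for a file of the given size."""
    total_bits = file_size_gb * 1e9 * 8
    return total_bits / parameters


quantized = bits_per_weight(FILE_SIZE_GB, PARAMETERS)

# Assumption: unquantized weights stored at 16 bits (2 bytes) each.
fp16_size_gb = PARAMETERS * 16 / 8 / 1e9

print(f"{quantized:.2f} bits per weight")             # 4.78 bits per weight
print(f"{fp16_size_gb:.0f} GB at 16 bits per weight")  # 70 GB at 16 bits per weight
```

Under these assumptions the quantized file is a bit under a third of the 16-bit size, which is what brings the model within range of a 32GB laptop.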

To run it on a MacBook Pro M5 — or any modern laptop with 32GB of RAM or more:

# Option 1: GUI download via LM Studio (free desktop app — Mac/Windows/Linux)

# Step 1: Download LM Studio from https://lmstudio.ai/

# Step 2: Inside LM Studio, search for: Qwen3.6-35B-A3B-GGUF

# Step 3: Download file: Qwen3.6-35B-A3B-UD-Q4_K_S.gguf (~20.9 GB one-time)

# Step 4: Load model and open the chat interface — no internet needed after this

# Option 2: Command-line via the llm tool

pip install llm

llm install llm-lmstudio

# To also connect to Claude Opus 4.7 from the same command line:

pip install --upgrade llm-anthropic

The local route needs no API key and no subscription fee. Once the model is downloaded, no data is sent over the internet, and it runs offline indefinitely. For developers or designers who regularly generate custom SVG assets, diagrams, or illustrations, this can eliminate a recurring cloud API cost for that workflow. If you're building AI automation workflows on local hardware, the local AI setup guide covers compatible hardware requirements and configuration steps for open-source models like Qwen 35B.
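For scripted use, LM Studio can also serve the loaded model through a local, OpenAI-compatible HTTP server. The following is a minimal sketch rather than Willison's own method. It assumes the server is running at LM Studio's default address (`http://localhost:1234/v1`), and `MODEL_ID` is a hypothetical placeholder that should be replaced with the identifier LM Studio shows for the loaded model.

```python
import json
import re
import urllib.request

# Assumptions: LM Studio's local server is running at its default address,
# and MODEL_ID matches the identifier LM Studio displays for the loaded model.
BASE_URL = "http://localhost:1234/v1"
MODEL_ID = "qwen3.6-35b-a3b"  # hypothetical identifier, replace with your own


def build_request(prompt, model=MODEL_ID):
    """Build a chat-completions POST request for the local server."""
    payload = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def extract_svg(text):
    """Return the first <svg>...</svg> element found in the reply, or None."""
    match = re.search(r"<svg\b.*?</svg>", text, flags=re.DOTALL | re.IGNORECASE)
    return match.group(0) if match else None


def generate_svg(prompt):
    """Send the prompt to the local model and pull the SVG out of its reply."""
    with urllib.request.urlopen(build_request(prompt)) as response:
        body = json.load(response)
    return extract_svg(body["choices"][0]["message"]["content"])
```

With the server running, calling `generate_svg("Generate an SVG of a pelican riding a bicycle")` returns the SVG markup as a string (or `None` if the reply contains no SVG), which can then be written to a `.svg` file and opened in a browser.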

Why Willison Still Backs Claude for General Work

Willison is careful about what this result proves. "I very much doubt that a 21GB quantized version of their latest model is more powerful or useful than Anthropic's latest proprietary release," he wrote. His conclusion is deliberately narrow: "If the thing you need is an SVG illustration of a pelican riding a bicycle though, right now Qwen3.6-35B-A3B running on a laptop is a better bet than Opus 4.7!"

The pelican test has real limitations worth keeping in mind:

- Narrow scope. Writing SVG code that renders a convincing bird says little about reasoning through complex software bugs, parsing legal language, or producing production-grade code at scale.

- Subjective judgment. "Better" here means Willison preferred one visual output — not a standardized benchmark score with reproducible numbers across multiple independent testers.

- Quantization involves trade-offs. The 20.9GB file is a compressed version of the full Qwen model. Quantization typically costs some accuracy, so the unquantized model may behave differently.

- Hardware dependency. A MacBook Pro M5 is a premium device. Performance will vary significantly on older laptops or machines with less than 32GB of unified memory.

Still, the finding points to a genuine shift: open-source local models are closing the gap with proprietary cloud systems on specific creative tasks, and the hardware required to run them now sits within reach of millions of professionals who already own Apple Silicon laptops.

Two Developer Tools Updated in the Same Week

Willison's discovery coincided with releases of two open-source tools relevant to developers working across both local and cloud-based AI:

llm-anthropic 0.25 (April 16, 2026): The plugin for Willison's llm command-line tool (a terminal application for querying AI models from multiple providers through one consistent interface) now supports Claude Opus 4.7, including the thinking_effort: xhigh parameter for extended multi-step reasoning chains. It also removes an obsolete structured-outputs beta header that was required from November 2025 but is no longer needed.

datasette 1.0a28 (April 17, 2026): The latest alpha of Willison's Datasette, a Python tool for browsing and publishing SQLite databases (the lightweight, single-file databases powering many mobile apps, internal analytics dashboards, and offline-first tools). The release patches a file descriptor leak, a bug where opening many database connections gradually consumes operating system resources until the process crashes. It also adds a new RenameTableEvent for plugin developers tracking SQL table renames, and introduces an automatic pytest plugin (pytest is Python's most widely used testing library) that properly closes all database connections after test runs. The datasette.io website now hosts 115+ news entries cataloguing the project since launch.
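The general pattern behind closing connections after a test run can be sketched with the standard library alone. This is an illustration of the idea, not Datasette's actual implementation; `tracked_connections` and `is_closed` are hypothetical names introduced for this example.

```python
import contextlib
import sqlite3


@contextlib.contextmanager
def tracked_connections():
    """Yield a connect() function and close every connection it opened on exit."""
    opened = []

    def connect(path=":memory:"):
        conn = sqlite3.connect(path)
        opened.append(conn)
        return conn

    try:
        yield connect
    finally:
        # Runs even if the body raised, so no connection is left open.
        for conn in opened:
            conn.close()


def is_closed(conn):
    """A closed sqlite3 connection raises ProgrammingError when used."""
    try:
        conn.execute("SELECT 1")
    except sqlite3.ProgrammingError:
        return True
    return False


with tracked_connections() as connect:
    db = connect()
    db.execute("CREATE TABLE birds (name TEXT)")
    db.execute("INSERT INTO birds VALUES ('pelican')")
    rows = db.execute("SELECT name FROM birds").fetchall()

print(rows, is_closed(db))  # [('pelican',)] True
```

In a pytest suite the same try/finally shape would typically live in a fixture that yields the `connect` function, so cleanup happens once each test finishes.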

You can try the Qwen model right now — download LM Studio for free, search for Qwen3.6-35B-A3B-GGUF, and run your own version of the pelican test. If the 20.9GB one-time download produces better SVG illustrations than your current cloud tool, you've just found a zero-cost replacement for that specific workflow. For deeper comparisons of local versus cloud AI models in real automation workflows, explore the AI automation guides.
