Google Auto-Diagnose: 90% Accuracy for AI Test Failures

Google's AI automation tool Auto-Diagnose hits 90% accuracy diagnosing integration test failures in 56 seconds — live for 22,962 developers across 39 teams.

Every developer knows the feeling: a CI build fails, and you're staring at 16 log files, thousands of lines deep, trying to figure out which one actually contains the problem. Google just published data showing their internal AI automation system — called Auto-Diagnose — hits 90.14% accuracy solving exactly that problem, and it's already running for 22,962 developers across the company.

That's not a research demo. Auto-Diagnose has processed 224,782 total test executions across 39 Google teams — and it returns a diagnosis in 56 seconds at the median, before most developers would finish context-switching to the failing test tab.

The Problem That Quietly Drains Every Engineering Team

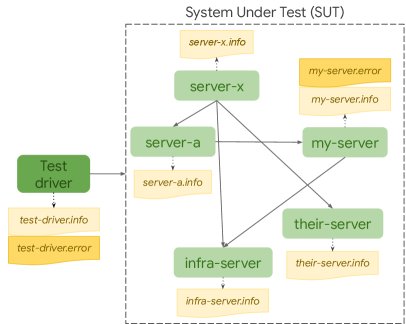

Integration tests (automated checks that verify multiple parts of a system work together, not just individual functions in isolation) fail constantly in large codebases. At Google's scale, the pain compounds: thousands of builds per day, logs spanning dozens of services, and a developer who wrote one small change trying to determine if it broke something in a completely unrelated module.

The old approach: read the logs manually, one by one. The new approach: let a large language model (an AI that reads and reasons over long, complex text) process all the relevant log files together, identify the root cause (the specific underlying reason for the failure), and return a structured, actionable explanation, with a median turnaround of under a minute.

Auto-Diagnose is built on Gemini 2.5 Flash, Google's production LLM and the reasoning engine behind each diagnosis. It is deployed inside Critique, Google's internal code review platform (the tool where engineers check and approve each other's code before it goes live), which is used companywide.

Every Number That Cleared Google's Production Bar

Deploying an AI tool inside Google requires clearing strict internal quality thresholds. Auto-Diagnose cleared every one:

- 90.14% root-cause accuracy — manually verified across 71 real-world integration test failures spanning 39 teams

- 84.3% "Please fix" rate — the percentage of developers who immediately took action after reading the AI's diagnosis

- 5.8% "Not helpful" rate — well below Google's internal 10% ceiling required for production deployment

- Ranked #14 out of 370 Critique tools — placing in the top 3.78% of all tools in Google's code review system

- 56-second p50 latency, 346-second p90: half of all diagnoses arrive within 56 seconds and nine in ten within 346 seconds, so the median diagnosis lands before most developers finish reading the first log file
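As a quick sanity check, the percentages in this list can be reproduced from the raw figures quoted alongside them. The sketch below is illustrative only: the count of 64 correct diagnoses is inferred from 90.14% of 71 and is not stated directly in this article.

```python
# Reproduce the headline percentages from the figures quoted in the list above.
evaluated_failures = 71        # manually verified integration test failures
reported_accuracy = 0.9014     # 90.14% root-cause accuracy

# Inferred, not quoted: the whole number of correct diagnoses implied by 90.14%.
correct = round(evaluated_failures * reported_accuracy)
accuracy_pct = round(100 * correct / evaluated_failures, 2)

rank, total_tools = 14, 370    # position among Critique tools
top_pct = round(100 * rank / total_tools, 2)

not_helpful, ceiling = 0.058, 0.10
share_of_ceiling = round(not_helpful / ceiling, 2)

print(correct, accuracy_pct, top_pct, share_of_ceiling)
# prints: 64 90.14 3.78 0.58
```

The last value shows that the "Not helpful" rate uses a little over half of the allowed 10% budget.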

52,635 Tests: Scale That Separates a Demo From a Product

Auto-Diagnose has run on 52,635 distinct failing tests across 224,782 total executions, so this is production usage rather than a benchmark. (The 90.14% accuracy figure itself comes from the 71 manually verified failures.) The 22,962-developer figure counts active users who received AI-generated diagnoses on their failing tests, not accounts that merely have access to the tool.

Three Steps, One Minute: Inside the AI Automation Architecture

The technical design (how the system connects its parts to produce a result) keeps the process lean and the latency predictable:

- Log ingestion: Auto-Diagnose collects all output from the failing test — including stack traces (the sequence of function calls that led to the error), environment variables, dependency versions, and service-level context

- LLM reasoning: Gemini 2.5 Flash reads the consolidated log context and produces a structured diagnosis — identifying the root cause, the affected component, and a suggested fix path

- Critique integration: The diagnosis appears directly inside the code review interface, in the same workflow where the developer is already working — no separate dashboard, no context switch required
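The three steps above can be sketched as a small pipeline. This is a minimal illustration under stated assumptions, not Google's implementation: the `Diagnosis` fields, the prompt wording, the JSON reply format, and every function name are hypothetical, and the model is passed in as a plain callable so that no particular LLM client API is assumed.

```python
import json
from dataclasses import dataclass
from typing import Callable


@dataclass
class Diagnosis:
    """Hypothetical structured output: the three fields named in step 2."""
    root_cause: str
    affected_component: str
    suggested_fix: str


def build_prompt(logs: dict[str, str]) -> str:
    """Step 1 (log ingestion): merge all log files into one labeled context."""
    sections = [f"=== {name} ===\n{text}" for name, text in sorted(logs.items())]
    return (
        "Diagnose this integration test failure. Reply with JSON containing "
        "the keys root_cause, affected_component and suggested_fix.\n\n"
        + "\n\n".join(sections)
    )


def diagnose(logs: dict[str, str], call_model: Callable[[str], str]) -> Diagnosis:
    """Step 2 (LLM reasoning): send the context to a model and parse its reply."""
    data = json.loads(call_model(build_prompt(logs)))
    return Diagnosis(
        data["root_cause"], data["affected_component"], data["suggested_fix"]
    )


def render_review_comment(d: Diagnosis) -> str:
    """Step 3 (review integration): format the diagnosis as an inline comment."""
    return (
        f"Root cause: {d.root_cause}\n"
        f"Component: {d.affected_component}\n"
        f"Suggested fix: {d.suggested_fix}"
    )


def fake_model(prompt: str) -> str:
    """Stand-in for a real LLM call; always returns the same canned answer."""
    return json.dumps({
        "root_cause": "connection refused by the storage backend",
        "affected_component": "storage-service",
        "suggested_fix": "start the storage emulator before the test runs",
    })


example_logs = {
    "test.log": "FAILED test_upload",
    "server.log": "ERROR: connection refused",
}
comment = render_review_comment(diagnose(example_logs, fake_model))
print(comment)
```

Swapping `fake_model` for a function that calls a real model is the only change needed to try this against actual logs. Keeping the model behind a callable also makes the parsing and rendering steps testable without network access.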

The research team specifically noted that context-switching (the cognitive cost of stopping one task to start a different one) was a core design constraint. Delivering the diagnosis before a developer mentally leaves their current task was intentional — not just a latency optimization, but a deliberate choice about where engineer attention gets spent.

Why 84% of Engineers Clicked "Please Fix" Without Investigating Further

Survey satisfaction scores are easy to dismiss. Behavioral data is harder. The 84.3% "Please fix" rate is not a thumbs-up metric — it's the percentage of engineers who read the Auto-Diagnose output and immediately clicked to resolve the issue. That requires the diagnosis to be:

- Specific enough to point at a real cause, not a vague direction like "check service dependencies"

- Accurate enough that engineers trusted it on first read without cross-checking manually

- Actionable enough that the next step was obvious, requiring no further investigation

The 5.8% "Not helpful" rate, a little over half of Google's internal 10% ceiling, suggests that wrong or vague diagnoses are uncommon. Holding that rate across 22,962 active developers and 224,782 test executions is the harder engineering challenge, and Auto-Diagnose has cleared it consistently across all 39 teams.

The Paper Is Public — Your Team Can Build This AI Automation Tool Now

The full research is available at arxiv.org/abs/2604.12108 — covering architecture, prompt engineering design, and evaluation methodology across all 39 Google teams. Engineering teams running their own CI pipelines (continuous integration systems — automated build and test runners like GitHub Actions, Jenkins, or CircleCI) can use this as a direct blueprint for building something similar.

The four key replication requirements the paper documents:

- A capable reasoning LLM with a large context window (to read multiple log files simultaneously without losing context between them)

- Output embedded directly inside the existing review workflow — not a separate tool that requires switching away from the current task

- Structured output that links the diagnosis to a specific fix action, not just a description of the problem

- A "Not helpful" feedback signal tracked over time to catch quality decay before it erodes engineer trust
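The last requirement, tracking "Not helpful" feedback over time, could be approximated with a rolling window over recent votes. The sketch below is an assumption-laden illustration, not the paper's method: the `FeedbackMonitor` class and the window size are hypothetical, and only the 10% ceiling comes from the figures reported above.

```python
from collections import deque


class FeedbackMonitor:
    """Tracks the share of 'Not helpful' votes over the most recent diagnoses."""

    def __init__(self, window: int = 500, ceiling: float = 0.10):
        # deque(maxlen=...) silently drops the oldest vote once the window is full.
        self.votes = deque(maxlen=window)
        self.ceiling = ceiling

    def record(self, not_helpful: bool) -> None:
        self.votes.append(not_helpful)

    def rate(self) -> float:
        # True counts as 1 and False as 0, so sum() gives the number of bad votes.
        return sum(self.votes) / len(self.votes) if self.votes else 0.0

    def breached(self) -> bool:
        # Strictly above the ceiling counts as a breach; exactly at it does not.
        return self.rate() > self.ceiling


monitor = FeedbackMonitor(window=10)
for vote in [False] * 9 + [True]:
    monitor.record(vote)
print(monitor.rate(), monitor.breached())
```

A rolling window reacts to recent regressions, for example after a prompt or model change, which a lifetime average would smooth over.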

If your team spends time manually triaging integration test failures, the research is public now, Gemini 2.5 Flash is accessible, and the paper documents exactly what an 84%-actionable diagnosis system looks like at production scale. Check the automation guides at aiforautomation.io for more on integrating AI into your development workflow.
