Claude Opus 4.7: 40% Higher Costs Despite Flat $5/M Pricing

Claude Opus 4.7 costs ~40% more than 4.6 despite the same $5/M price — new tokenizer inflates text 1.46× and images 3×. Free audit tool inside.

Claude Opus 4.7's Hidden Cost Behind Identical Price Tags

Claude Opus 4.7 launched on April 16, 2026 with an unchanged price: $5 per million input tokens, $25 per million output tokens — identical to Opus 4.6. But developer and researcher Simon Willison ran the actual numbers, and the real bill tells a different story. His token comparison tool found the same system prompt consumed 1.46× more tokens on Opus 4.7 than on 4.6 — translating to an estimated 40% cost increase without changing a single line of code.

The reason is structural: Opus 4.7 is the first Claude model to ship with a new tokenizer (the internal system that splits your text into countable units the model processes). When the tokenizer counts more tokens for identical text, you pay more — even if the per-token rate stays flat. Anthropic's own published inflation range of 1.0–1.35× turned out to underestimate what Willison measured on a real production system prompt: 1.46×.

What Simon Willison's Claude Token Counter Measured

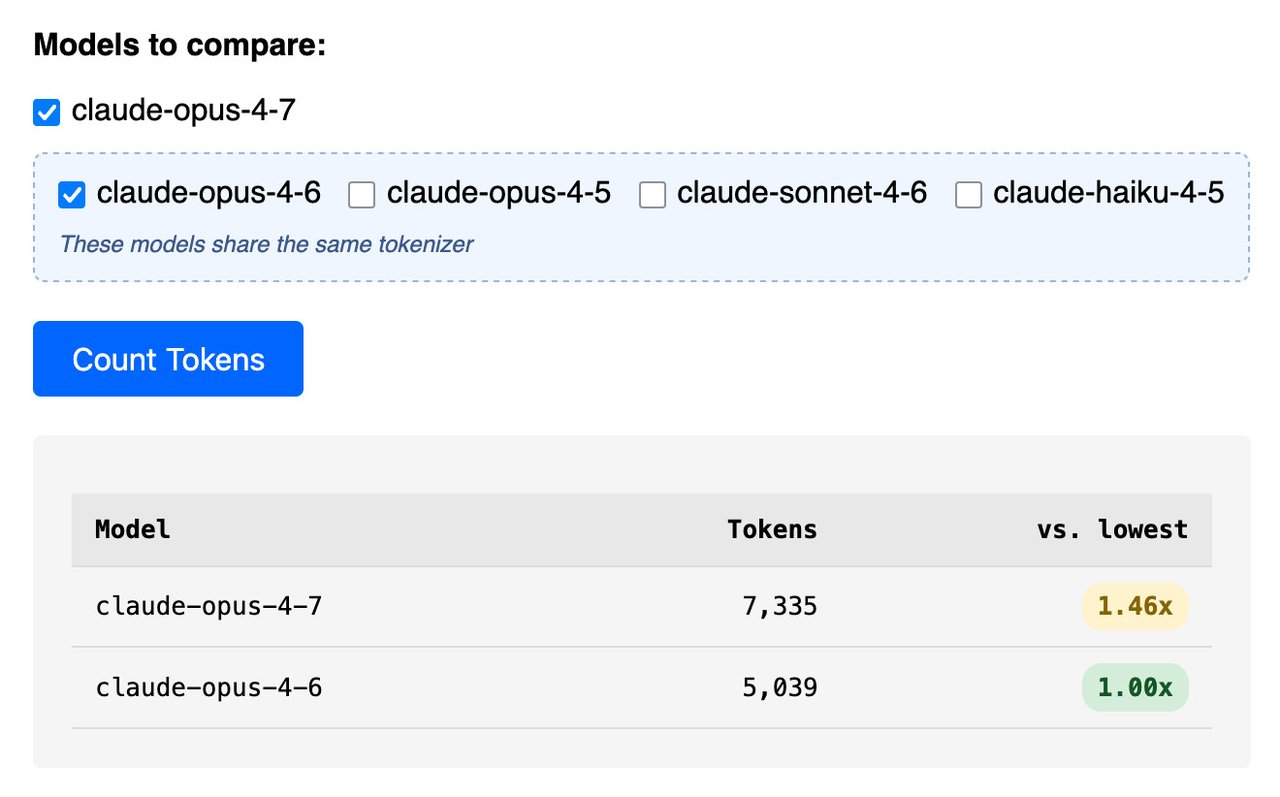

Willison upgraded his Claude Token Counter, a free browser-based utility, to support side-by-side comparisons across all four current Claude models. When he ran Anthropic's own published system prompt through Opus 4.7 and Opus 4.6, the ratio exceeded the range Anthropic's documentation had given:

- Opus 4.7 system prompt: 7,335 tokens

- Opus 4.6 system prompt: 5,039 tokens

- Ratio: 1.46× — above Anthropic's stated 1.0–1.35× range

- Estimated cost increase: ~40% despite identical per-token pricing

A token, in this context, is the smallest unit of text an AI model processes — roughly 3–4 characters or about three-quarters of a word in English. The more tokens your prompt consumes, the more you pay. Token inflation means the same English sentence gets split into more pieces by the new tokenizer, and each additional piece costs money.
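For a quick sanity check before you open a token counter, the rule of thumb above can be turned into a ballpark estimator. This is a minimal sketch, not a tokenizer. The 4-characters-per-token divisor is the upper end of the heuristic above. Applying Willison's single measured ratio of 1.46 as a blanket multiplier for Opus 4.7 is an assumption, because real inflation varies with the text. The helper name `rough_token_estimate` is hypothetical.

```python
import math

# Heuristic from the text: roughly 3-4 characters per English token (older tokenizer).
CHARS_PER_TOKEN = 4.0

# Assumption: reuse the one measured system-prompt ratio (7,335 / 5,039, about 1.46)
# as a blanket multiplier for Opus 4.7. Actual inflation depends on the text.
OPUS_47_INFLATION = 1.46


def rough_token_estimate(text: str, inflation: float = 1.0) -> int:
    """Ballpark token count from character length; use a real counter for billing."""
    if not text:
        return 0
    return math.ceil(len(text) / CHARS_PER_TOKEN * inflation)


if __name__ == "__main__":
    prompt = "You are a helpful assistant. Answer concisely. " * 50
    print("Opus 4.6 estimate:", rough_token_estimate(prompt))
    print("Opus 4.7 estimate:", rough_token_estimate(prompt, OPUS_47_INFLATION))
```

Treat the result as a range-finder only. Willison's counter and Anthropic's `count_tokens` endpoint give exact figures.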

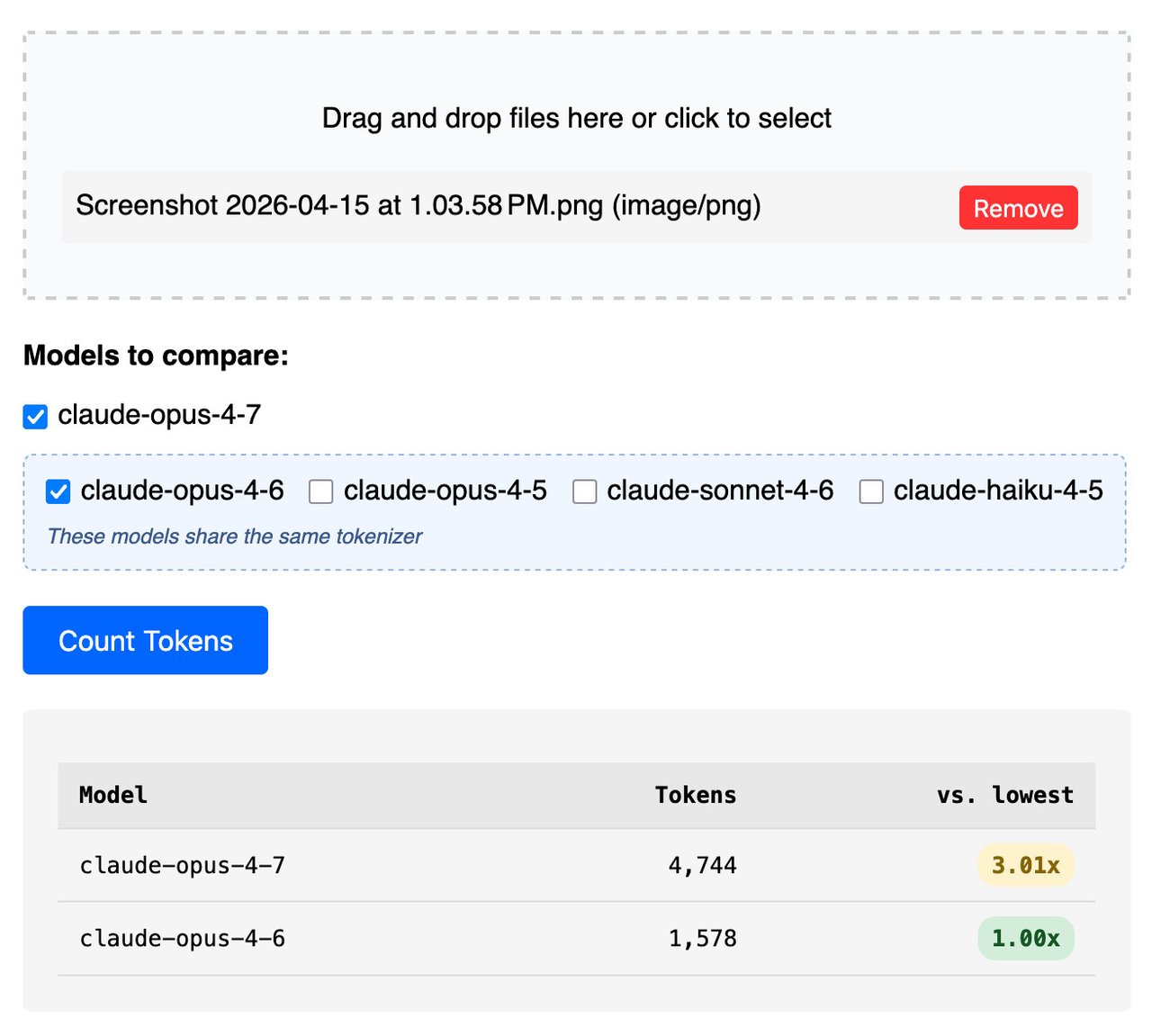

For images, the disparity was even larger. Willison tested a single 3.7 MB PNG measuring 3,456 × 2,234 pixels and found:

- Opus 4.7: 4,744 tokens

- Opus 4.6: 1,578 tokens

- Ratio: 3.01× — three times the token cost for an identical image file

There is a logical reason for this: Opus 4.7 now accepts images up to 2,576 pixels on the long edge (~3.75 megapixels total), compared to roughly 1.2 megapixels for prior Claude models — more than a 3× capacity increase. Higher-resolution image processing requires more computation, which maps to more tokens. The vision capability gain is genuine, but it comes with a proportional cost increase that developers must account for.
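To see why the image ratio lands near 3×, here is a minimal sketch that shrinks an image to fit each model's pixel budget. The caps come from the paragraph above: 2,576 px on the long edge and about 3.75 MP for Opus 4.7, and about 1.2 MP for prior models. Two things here are assumptions, not Anthropic's documented algorithm:

- the resize rule itself (uniform downscaling to satisfy every cap, never upscaling);
- the idea that token count tracks pixel count.

No long-edge cap is applied to the prior models, because the article does not give one.

```python
import math
from typing import Optional, Tuple

# Caps quoted in the article; the resize rule below is an assumption.
OPUS_47_MAX_LONG_EDGE = 2576
OPUS_47_MAX_PIXELS = 3_750_000
PRIOR_MAX_PIXELS = 1_200_000


def fit_within(width: int, height: int, max_pixels: float,
               max_long_edge: Optional[int] = None) -> Tuple[int, int]:
    """Downscale (never upscale) to meet a pixel budget and an optional
    long-edge cap, preserving the aspect ratio."""
    scale = min(1.0, math.sqrt(max_pixels / (width * height)))
    if max_long_edge is not None:
        scale = min(scale, max_long_edge / max(width, height))
    return math.floor(width * scale), math.floor(height * scale)


if __name__ == "__main__":
    w, h = 3456, 2234  # dimensions of the PNG in Willison's test
    new_w, new_h = fit_within(w, h, OPUS_47_MAX_PIXELS, OPUS_47_MAX_LONG_EDGE)
    old_w, old_h = fit_within(w, h, PRIOR_MAX_PIXELS)
    print(f"Opus 4.7 budget: {new_w}x{new_h} = {new_w * new_h:,} px")
    print(f"Prior budget:    {old_w}x{old_h} = {old_w * old_h:,} px")
    print(f"Pixel ratio: {(new_w * new_h) / (old_w * old_h):.2f}x "
          "(measured token ratio: 3.01x)")
```

Under these assumptions, the megapixel cap rather than the long-edge cap limits Willison's 3,456 × 2,234 PNG. The resulting pixel ratio comes out slightly above 3, in the same neighborhood as the measured 3.01× token ratio.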

How to Audit Your Claude API Costs — No Account Required

The comparison tool is free, browser-based, and requires no login. To check what your prompts will cost across Claude model versions:

- Go to tools.simonwillison.net/claude-token-counter

- Paste your system prompt text, or upload an image file

- Select two or more models to compare: Opus 4.7, Opus 4.6, Sonnet 4.6, or Haiku 4.5

- Click "Count Tokens" — an instant side-by-side table shows the difference

If you're using the Claude API (Anthropic's developer interface for building apps with Claude) directly, you can also count tokens programmatically before committing to a full generation request — useful for cost forecasting before you push to production:

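```
curl https://api.anthropic.com/v1/messages/count_tokens \
  --header "x-api-key: $ANTHROPIC_API_KEY" \
  --header "anthropic-version: 2023-06-01" \
  --header "content-type: application/json" \
  --data '{
    "model": "claude-opus-4-7-20260416",
    "system": "Your system prompt here",
    "messages": [{ "role": "user", "content": "Your user message here" }]
  }'
```

The response is a small JSON object whose `input_tokens` field holds the count.

Willison also published a Git history of the prompts, for developers who want to trace how Claude's system prompt has evolved since the earliest published Claude 3-era prompts (July 2024). Each version is committed with a timestamp matching Anthropic's release date, creating a navigable changelog that spans roughly 20 months: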

```
git clone https://github.com/simonw/research.git
cd research/extract-system-prompts
# Browse all system prompt versions by release date
git log --oneline
```

To see exactly which tools Claude has loaded in any given session, Willison recommends prompting Claude directly: "List all tools you have available to you with an exact copy of the tool description and parameters." Anthropic does not publish tool descriptions externally, so this remains the most reliable discovery method.

What Else Changed Between Claude Opus 4.6 and 4.7

Beyond token mechanics, Willison's full system prompt diff catalogued several other changes Anthropic shipped with Opus 4.7. The system prompt — the hidden set of instructions that shapes Claude's behavior in every conversation — now includes:

- Three new tools added: Claude in Chrome (a web browsing agent), Claude in Excel (spreadsheet editing), and Claude in PowerPoint (slide creation and editing)

- A `tool_search` mechanism: Before claiming it cannot access something (your calendar, location, memory, or external files), Claude now calls `tool_search` internally to check whether a relevant tool exists but simply wasn't loaded into the current session

- Expanded child safety section: A new `<critical_child_safety_instructions>` tag with detailed content policies

- Anti-verbosity instructions: Explicit discouragement of long-winded responses and pushy conversation-continuation tactics, nudging Claude toward concise, direct answers

- Removed: Prior guidance discouraging emotes and asterisks in responses, and a specific clarification about the Trump presidency (the knowledge cutoff is now January 2026)
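The `tool_search` behavior amounts to "search before you refuse." The sketch below illustrates that ordering with a toy registry. Everything in it is hypothetical and is not how Anthropic implements `tool_search`:

- the `ToolRegistry` class;
- the loaded/deferred split;
- keyword matching;
- the tool names.

Only the sequence comes from the system prompt change described above: check for an unloaded tool before claiming no access.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class ToolRegistry:
    """Hypothetical toy model: some tools are loaded, others exist but are deferred."""
    loaded: Dict[str, str] = field(default_factory=dict)    # name -> description
    deferred: Dict[str, str] = field(default_factory=dict)  # available, not yet loaded

    def tool_search(self, query: str) -> List[str]:
        """Return deferred tools whose name or description contains every query word."""
        words = query.lower().split()
        return [name for name, desc in self.deferred.items()
                if all(w in f"{name} {desc}".lower() for w in words)]

    def handle_request(self, capability: str) -> str:
        # 1. Already loaded? Use it.
        if any(capability.lower() in desc.lower() for desc in self.loaded.values()):
            return "use loaded tool"
        # 2. Search deferred tools before concluding there is no access.
        matches = self.tool_search(capability)
        for name in matches:
            self.loaded[name] = self.deferred.pop(name)
        if matches:
            return "loaded " + ", ".join(matches)
        # 3. Only now report that nothing is available.
        return "no tool available"


if __name__ == "__main__":
    registry = ToolRegistry(
        loaded={"web_search": "search the web"},
        deferred={"calendar_read": "read events from the user's calendar"},
    )
    print(registry.handle_request("calendar"))
    print(registry.handle_request("teleport"))
```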

Despite the expanded system prompt, the total count of named tools in the Claude chat interface remains at 23 — unchanged from Opus 4.6. Willison also maintains a public archive of all Claude system prompts dating back to Claude 3 (July 2024), making it possible to audit exactly what instructions Anthropic adds, removes, or revises with each release.
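Once you have two versions of a prompt from that archive, spotting additions, removals, and revisions is a plain text diff. Here is a minimal sketch using Python's standard `difflib`. The function name and version labels are placeholders. Loading the actual prompt files (for example with `Path(...).read_text()`) is left to you, since the archive's file layout is not covered here.

```python
import difflib


def prompt_diff(old: str, new: str,
                old_label: str = "opus-4.6", new_label: str = "opus-4.7") -> str:
    """Unified diff of two system-prompt versions: '-' lines removed, '+' lines added."""
    return "".join(difflib.unified_diff(
        old.splitlines(keepends=True),
        new.splitlines(keepends=True),
        fromfile=old_label,
        tofile=new_label,
    ))


if __name__ == "__main__":
    # Hypothetical two-line excerpts, only to show the output format.
    before = "Avoid emotes and asterisks.\nBe helpful.\n"
    after = "Be helpful.\nKeep responses concise.\n"
    print(prompt_diff(before, after))
```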

Knowledge Cutoff: Already Three Months Stale at Launch

One practical footnote: Opus 4.7's knowledge cutoff is January 2026, already three months behind at its April 2026 release. That lag is standard for large-scale training pipelines (the multi-month process of training, evaluating, and safety-testing a model before it reaches the public). It does mean, however, that Opus 4.7 knows nothing about events after January 2026 unless you supply that context explicitly in your prompts.
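Supplying that context can be as simple as stamping the current date, plus any recent facts your application depends on, at the top of the system prompt. Here is a minimal sketch. The header wording and the `with_current_context` name are illustrative choices, not an Anthropic-recommended template. Keep in mind that the extra lines are billed like any other input tokens.

```python
from datetime import date
from typing import Optional

KNOWLEDGE_CUTOFF = "January 2026"  # Opus 4.7 cutoff as reported in this article


def with_current_context(system_prompt: str, today: Optional[date] = None,
                         recent_notes: str = "") -> str:
    """Prepend today's date (and optional post-cutoff facts) to a system prompt."""
    today = today or date.today()
    header = (f"Today's date is {today.isoformat()}. "
              f"Your training data ends around {KNOWLEDGE_CUTOFF}.")
    if recent_notes:
        header += "\nMore recent context:\n" + recent_notes
    return header + "\n\n" + system_prompt


if __name__ == "__main__":
    print(with_current_context("You are a concise research assistant.",
                               today=date(2026, 4, 16)))
```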

Flat Pricing, Rising Bills: Claude Opus 4.7 Budget Math

Willison's core finding is a practical lesson for any team running Claude at scale: static per-token pricing does not equal a static bill when the underlying tokenizer changes. A team spending $10,000 per month on Claude Opus 4.6 could see that figure climb to roughly $14,000 on Opus 4.7 — with zero changes to their prompts or API usage patterns. The inflation is structural, not behavioral.
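Because the per-token price is unchanged, the arithmetic behind that projection is a single multiplication. The sketch below runs it across the ratios quoted in this article. Applying one ratio uniformly to both input and output spend is a simplifying assumption; your real mix of text, images, and output will shift the result.

```python
# Token-count ratios quoted in this article (Opus 4.7 tokens / Opus 4.6 tokens)
SCENARIOS = {
    "Anthropic's stated low end": 1.00,
    "Anthropic's stated high end": 1.35,
    "Willison's ~40% cost estimate": 1.40,
    "Willison's measured system prompt": 1.46,
}


def project_monthly_bill(current_spend: float, token_ratio: float) -> float:
    """Per-token prices are unchanged, so spend scales linearly with token count.
    Assumes the ratio applies uniformly to all input and output tokens."""
    return current_spend * token_ratio


if __name__ == "__main__":
    for label, ratio in SCENARIOS.items():
        print(f"{label:>34}: ${project_monthly_bill(10_000, ratio):,.0f}/month")
```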

For image-heavy workflows — document analysis, OCR (optical character recognition, which converts images of text into machine-readable text), screenshot processing, or visual question answering — the 3× token inflation per image may make Opus 4.6 the more economical choice unless Opus 4.7's enhanced 3.75 megapixel vision resolution demonstrably improves accuracy on your specific task. The upgrade decision should follow a cost audit, not just a changelog announcement.
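To put the image gap in dollars, here is a minimal sketch that prices image input at the unchanged $5 per million input tokens, using Willison's two measurements. It assumes every image in your workload resembles his 3,456 × 2,234 PNG. Smaller images use fewer tokens, so substitute counts from the token counter for your own files. Output tokens are left out because images are billed as input.

```python
PRICE_PER_MILLION_INPUT = 5.00  # USD; identical for Opus 4.6 and Opus 4.7

# Tokens Willison measured for one 3,456 x 2,234 PNG (3.7 MB)
TOKENS_PER_IMAGE = {"Opus 4.6": 1_578, "Opus 4.7": 4_744}


def image_input_cost(tokens_per_image: int, images: int,
                     price_per_million: float = PRICE_PER_MILLION_INPUT) -> float:
    """Input-token cost in USD for a batch of similar images."""
    return tokens_per_image * images * price_per_million / 1_000_000


if __name__ == "__main__":
    for volume in (1_000, 100_000):
        costs = {m: image_input_cost(t, volume) for m, t in TOKENS_PER_IMAGE.items()}
        print(f"{volume:>7,} images: " +
              ", ".join(f"{m} ${c:,.2f}" for m, c in costs.items()))
```

At 1,000 such images, that works out to $7.89 of input on Opus 4.6 versus $23.72 on Opus 4.7.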

You can run that audit right now at Willison's free tool: tools.simonwillison.net/claude-token-counter. Paste in your top 3–5 system prompts, compare Opus 4.6 versus 4.7 side-by-side, and see the actual cost delta before committing to a migration.
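Once you have counts for several prompts, a small script can turn them into the monthly cost delta the audit is after. In this minimal sketch:

- the two sample rows are Willison's published measurements;
- `calls_per_month` is a placeholder for your own traffic;
- only input tokens at $5 per million are priced.

```python
from typing import Dict, List, Tuple

PRICE_PER_MILLION_INPUT = 5.00  # USD, unchanged between Opus 4.6 and 4.7

# name -> (Opus 4.6 tokens, Opus 4.7 tokens); replace with your own counts
COUNTS: Dict[str, Tuple[int, int]] = {
    "Anthropic system prompt": (5_039, 7_335),
    "3,456x2,234 PNG": (1_578, 4_744),
}


def audit_rows(counts: Dict[str, Tuple[int, int]], calls_per_month: int,
               price_per_million: float = PRICE_PER_MILLION_INPUT
               ) -> List[Tuple[str, float, float]]:
    """Return (name, token ratio, extra USD per month) for each prompt."""
    rows = []
    for name, (old_tokens, new_tokens) in counts.items():
        extra_tokens = (new_tokens - old_tokens) * calls_per_month
        rows.append((name, new_tokens / old_tokens,
                     extra_tokens * price_per_million / 1_000_000))
    return rows


if __name__ == "__main__":
    for name, ratio, extra in audit_rows(COUNTS, calls_per_month=100_000):
        print(f"{name:<26} {ratio:5.2f}x  +${extra:,.2f}/month")
```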


