Google Aletheia: AI Solves Novel Math Proofs at 91.9%

Google's Aletheia scored 91.9% on olympiad-level math proofs and solved 6 novel problems that no AI had previously cracked. Enterprise AI automation has just crossed a research-level threshold.

Google's Aletheia AI scored 91.9% on IMO-ProofBench — a benchmark of International Mathematical Olympiad-level proofs — and solved 6 out of 10 novel math problems in the FirstProof challenge with zero human guidance. If AI automation can now discover mathematical proofs on its own, every profession built on expert knowledge is about to change faster than most people expect.

What Google Aletheia's Autonomous AI Actually Did

Many AI benchmarks only check whether a model arrives at, or recognizes, a correct final answer. IMO-ProofBench requires something harder: the system must construct a valid mathematical proof (a step-by-step logical argument proving a statement is true) entirely from scratch, the kind of reasoning that takes professional mathematicians hours or days.

Aletheia runs on Gemini 3 Deep Think (Google's most capable reasoning model as of April 2026) in a fully agentic loop: it forms hypotheses, selects proof strategies, checks its own work, and revises its approach without any human intervention. The headline results from April 2026:

- 91.9% accuracy on IMO-ProofBench

- 6 of 10 novel problems solved in the FirstProof challenge (a 60% success rate), problems no previous AI had cracked

- 0 human researcher interventions during the entire proof-discovery process

The remaining 4 unsolved problems mark the frontier where current AI reasoning still breaks down. Even so, a 60% success rate on original, research-level problems is a qualitatively different threshold from scoring well on problems that resemble existing training data. IMO-style proof construction draws on the same kinds of skills as patent analysis, legal reasoning, scientific literature review, and financial modeling, domains where autonomous AI capability will have enormous practical consequences.

To put this in perspective: Aletheia wasn't given hints, partial proofs, or multiple-choice options. It started from the problem statement and produced a complete, verifiable argument. The same underlying capability that cracks novel math will, within years, draft novel contracts, audit novel financial instruments, and synthesize novel scientific hypotheses without a researcher in the room.
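To make the "hypothesize, choose a strategy, check, revise" loop concrete, here is a minimal Python sketch of a generic generate-verify-revise search. It is not Aletheia's implementation, which is not described here. `prove`, `toy_generate`, and `toy_verify` are hypothetical names: in a real system `generate` would be a model call and `verify` a proof checker, while the toy versions below only simulate a checker that asks for a missing base case.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Attempt:
    strategy: str
    proof: str
    verified: bool
    feedback: str


def prove(
    problem: str,
    strategies: list[str],
    generate: Callable[[str, str, str], str],
    verify: Callable[[str, str], tuple[bool, str]],
    max_revisions: int = 2,
) -> tuple[Optional[str], list[Attempt]]:
    """Try each strategy in turn: draft a proof, check it, revise using the checker's feedback."""
    history: list[Attempt] = []
    for strategy in strategies:
        feedback = ""  # each strategy starts with no feedback
        for _ in range(max_revisions + 1):
            draft = generate(problem, strategy, feedback)
            ok, feedback = verify(problem, draft)
            history.append(Attempt(strategy, draft, ok, feedback))
            if ok:
                return draft, history  # stop at the first verified proof
    return None, history  # every strategy exhausted its revision budget


# Hypothetical stand-ins so the loop runs without a model or a real proof checker.
def toy_generate(problem: str, strategy: str, feedback: str) -> str:
    draft = f"{strategy}: inductive step for '{problem}'"
    if "base case" in feedback:  # revise only when the checker asks for it
        draft += " + base case n=1"
    return draft


def toy_verify(problem: str, draft: str) -> tuple[bool, str]:
    if not draft.startswith("induction"):
        return False, "strategy does not apply"
    if "base case" not in draft:
        return False, "missing base case"
    return True, "ok"


proof, history = prove(
    "1 + 3 + ... + (2n - 1) = n^2", ["contradiction", "induction"], toy_generate, toy_verify
)
print(proof)
print(len(history), "attempts")
```

The useful design property is that the verifier, not the generator, decides when to stop. Every rejected draft stays in `history`, so the search can be audited afterward.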

Four Enterprise AI Agent Systems That Shipped This Week

Aletheia wasn't the only major AI development in the week of April 17–22, 2026. Four enterprise-grade agent systems reached production or public preview, each solving a different piece of the infrastructure puzzle organizations face when deploying AI automation at scale.

Anthropic: Managed Agents for Claude

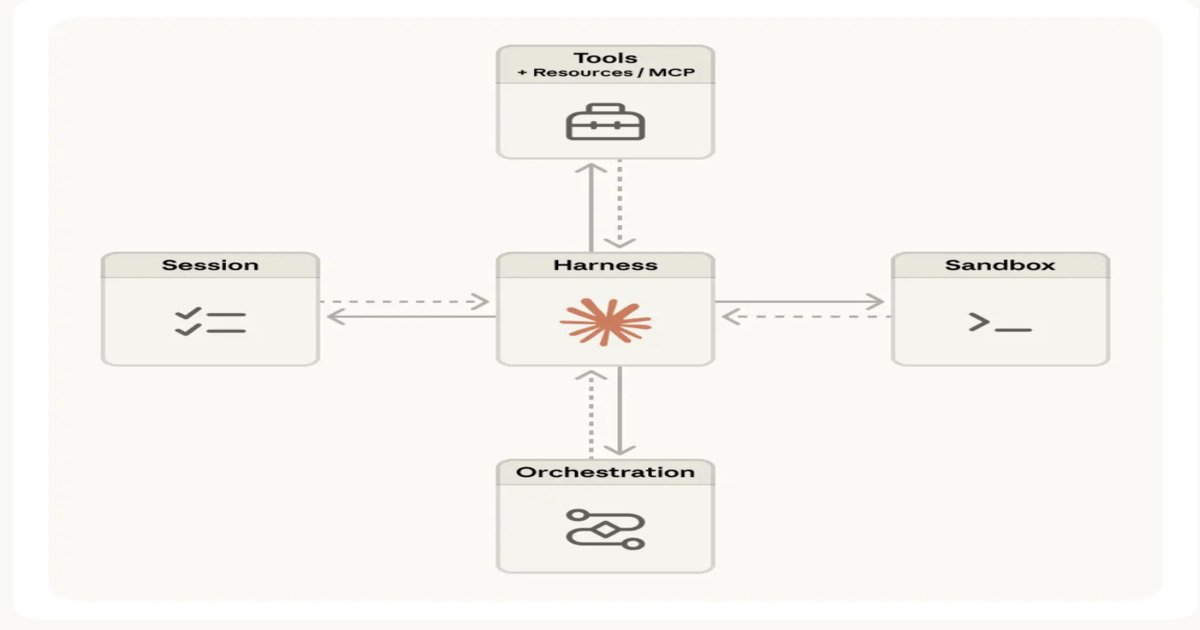

Anthropic released Managed Agents — an execution layer (a system that handles the infrastructure of running AI workflows, including memory management, authentication, and error recovery) for Claude. Previously, teams building multi-step AI workflows had to manage orchestration, sandboxing (isolated execution environments that prevent agents from accidentally affecting other systems), and session state themselves. Managed Agents abstracts those concerns away, enabling long-running tasks — workflows that take minutes or hours rather than seconds — to run reliably at scale without custom engineering infrastructure.
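Anthropic's actual Managed Agents API is not shown here. The following is a generic Python sketch of two of the concerns an execution layer takes over: retrying transient failures and checkpointing session state so a long-running task can resume after an interruption. `run_steps`, the JSON checkpoint file, and the example steps are all hypothetical.

```python
import json
import tempfile
import time
from pathlib import Path
from typing import Callable

Step = Callable[[dict], dict]


def run_steps(steps: list[Step], checkpoint: Path, max_retries: int = 2, backoff_s: float = 0.0) -> dict:
    """Run steps in order, retrying transient failures and persisting session state after each step."""
    if checkpoint.exists():  # resume an interrupted session
        saved = json.loads(checkpoint.read_text())
    else:
        saved = {"next_step": 0, "state": {}}
    while saved["next_step"] < len(steps):
        step = steps[saved["next_step"]]
        for attempt in range(max_retries + 1):
            try:
                saved["state"] = step(saved["state"])
                break
            except Exception:
                if attempt == max_retries:
                    raise  # retry budget exhausted: surface the error
                time.sleep(backoff_s * 2**attempt)  # exponential backoff between retries
        saved["next_step"] += 1
        checkpoint.write_text(json.dumps(saved))  # durable progress marker
    return saved["state"]


calls = {"fetch": 0}


def fetch(state: dict) -> dict:
    calls["fetch"] += 1
    if calls["fetch"] == 1:
        raise TimeoutError("transient failure")  # first call fails, the retry succeeds
    return {**state, "docs": 3}


def summarize(state: dict) -> dict:
    return {**state, "summary": f"{state['docs']} docs summarized"}


with tempfile.TemporaryDirectory() as tmp:
    ckpt = Path(tmp) / "session.json"
    result = run_steps([fetch, summarize], ckpt)
    progress = json.loads(ckpt.read_text())
    # Re-running against the same checkpoint skips completed steps entirely.
    again = run_steps([fetch, summarize], ckpt)

print(result)
```

Writing the checkpoint after every step, rather than only at the end, is what lets a task that runs for minutes or hours survive a crash without repeating finished work.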

LinkedIn: Cognitive Memory Agent

LinkedIn's Cognitive Memory Agent (CMA) introduces three-layer persistent memory for AI systems: episodic memory (what happened in a specific past conversation), semantic memory (general knowledge the agent has accumulated over time), and procedural memory (how to perform specific recurring tasks). This directly addresses one of the most painful limits of current AI agents: they forget context between sessions. With CMA, a multi-agent system (a group of AI programs collaborating on a shared task) can maintain continuity across days or weeks — essential for enterprise workflows that span multiple sessions and involve multiple specialized agents working in coordination.
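LinkedIn has not been quoted here on how CMA stores its layers, so the following is only a minimal in-memory Python sketch of the three-layer idea. `AgentMemory` and its methods are hypothetical; a production system would back each layer with persistent storage rather than Python dictionaries.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class AgentMemory:
    # Episodic: what happened, keyed by session id.
    episodic: defaultdict = field(default_factory=lambda: defaultdict(list))
    # Semantic: general facts accumulated over time.
    semantic: dict = field(default_factory=dict)
    # Procedural: how to perform recurring tasks, as ordered steps.
    procedural: dict = field(default_factory=dict)

    def record_event(self, session_id: str, event: str) -> None:
        self.episodic[session_id].append(event)

    def learn_fact(self, key: str, value: str) -> None:
        self.semantic[key] = value  # newer facts overwrite older ones

    def learn_procedure(self, task: str, steps: list[str]) -> None:
        self.procedural[task] = list(steps)

    def context_for(self, task: str, session_ids: list[str]) -> dict:
        """Assemble the context an agent would load at the start of a new session."""
        return {
            "history": [e for s in session_ids for e in self.episodic.get(s, [])],
            "facts": dict(self.semantic),
            "steps": list(self.procedural.get(task, [])),
        }


mem = AgentMemory()
mem.record_event("mon", "User asked for the Q1 churn report")
mem.record_event("tue", "Draft report sent to finance for review")
mem.learn_fact("report_owner", "finance team")
mem.learn_procedure("churn_report", ["pull CRM export", "compute churn", "send draft"])

# A Wednesday session starts with context from earlier days instead of from scratch.
ctx = mem.context_for("churn_report", ["mon", "tue"])
print(ctx["history"])
print(ctx["steps"])
```

Keeping the layers separate lets an agent load only the episodes relevant to the current task while always carrying its accumulated facts and procedures.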

AWS: DevOps Agent + Agent Registry

AWS reached general availability for its DevOps Agent — an AI-powered system that investigates incidents, analyzes deployments, and automates operational tasks across AWS environments without requiring engineers to manually triage every alert. Alongside it, AWS launched the Agent Registry (currently in preview): a centralized catalog for discovering, governing, and reusing AI agents across an organization. The registry exists specifically because companies are experiencing agent sprawl (too many disconnected AI tools with no central oversight or governance), and it supports both MCP (Model Context Protocol — an open standard for AI agents to communicate with external tools) and A2A (Agent-to-Agent protocol — a standard for different AI systems to hand work off to each other) integrations.
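AWS's Agent Registry is a managed service with its own API, which is not reproduced here. As a rough Python illustration of what "discovering and governing" agents means, here is a toy catalog in which only approved agents are discoverable. `AgentRegistry`, `AgentRecord`, and the agent names are hypothetical, and the `"mcp"`/`"a2a"` entries are plain labels, not implementations of either protocol.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class AgentRecord:
    name: str
    owner: str
    protocols: frozenset  # protocol labels only, e.g. {"mcp", "a2a"}
    capabilities: frozenset
    approved: bool = False  # governance flag: unapproved agents are not discoverable


class AgentRegistry:
    def __init__(self) -> None:
        self._agents: dict[str, AgentRecord] = {}

    def register(self, record: AgentRecord) -> None:
        if record.name in self._agents:
            raise ValueError(f"duplicate agent name: {record.name}")
        self._agents[record.name] = record

    def approve(self, name: str) -> None:
        self._agents[name] = replace(self._agents[name], approved=True)

    def find(self, capability: str, protocol: str) -> list[str]:
        """Names of approved agents offering a capability over a given protocol."""
        return sorted(
            r.name
            for r in self._agents.values()
            if r.approved and capability in r.capabilities and protocol in r.protocols
        )


reg = AgentRegistry()
reg.register(AgentRecord("incident-triage", "sre-team", frozenset({"mcp", "a2a"}), frozenset({"triage"})))
reg.register(AgentRecord("shadow-triage", "unknown", frozenset({"mcp"}), frozenset({"triage"})))
reg.approve("incident-triage")
print(reg.find("triage", "mcp"))  # the unapproved "shadow" agent stays hidden
```

Rejecting duplicate names and hiding unapproved entries are two small ways a central catalog pushes back against agent sprawl.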

Meta Caught 4× More Bugs Without Hand-Writing Extra Tests

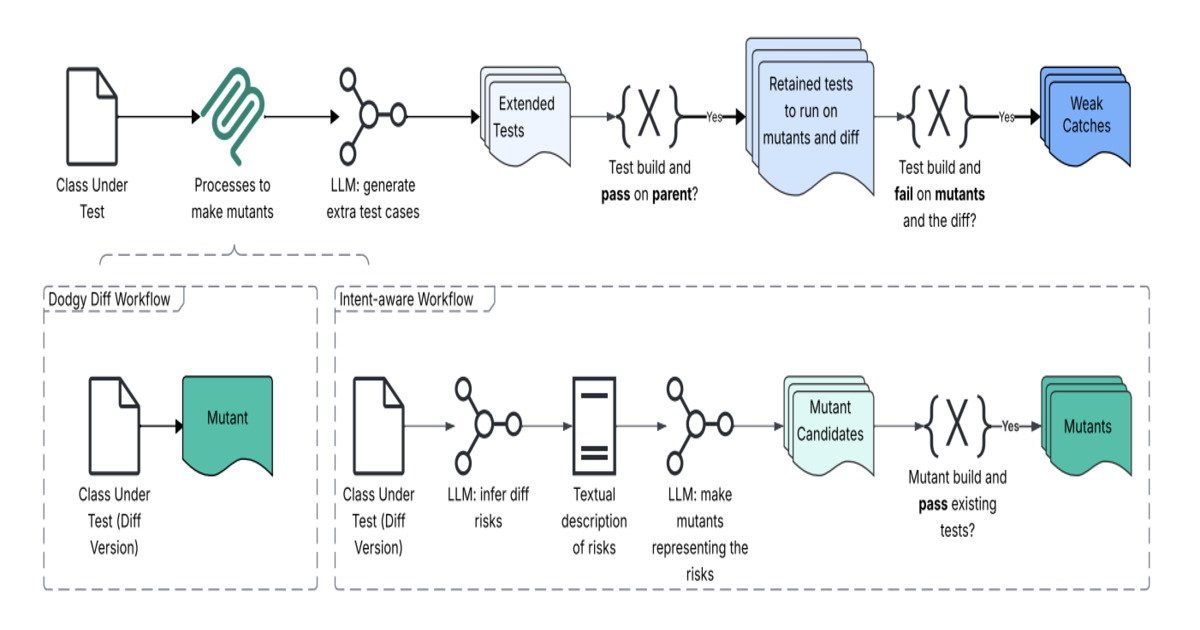

Software testing is one of the most time-consuming, highest-stakes parts of development. Meta's engineering team shipped Just-in-Time (JiT) testing — a system that generates tests dynamically during code review, analyzing the specific changes being made and creating targeted tests on the fly, rather than relying on static test suites (pre-written test files that only catch known failure modes from previous bugs).
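Meta's generator is not described in enough detail here to reproduce. The following Python sketch swaps in a simpler, well-known technique, differential testing, to show what "tests targeted at the specific change" can mean. It finds the functions a diff touched, runs the old and new versions on sample inputs, and reports where their behavior diverges. `changed_functions`, `differential_tests`, and the sample code are hypothetical.

```python
import ast


def changed_functions(old_src: str, new_src: str) -> set[str]:
    """Names of top-level functions present in both versions whose AST differs."""
    def by_name(src: str) -> dict[str, str]:
        tree = ast.parse(src)
        # ast.dump omits line numbers by default, so moved-but-unchanged code compares equal
        return {n.name: ast.dump(n) for n in tree.body if isinstance(n, ast.FunctionDef)}

    old, new = by_name(old_src), by_name(new_src)
    return {name for name in new if name in old and old[name] != new[name]}


def differential_tests(old_src: str, new_src: str, inputs: dict[str, list[tuple]]) -> list[tuple]:
    """Run old and new versions of each changed function on sample inputs; report divergences."""
    old_ns: dict = {}
    new_ns: dict = {}
    exec(old_src, old_ns)  # sketch only: never exec untrusted code like this
    exec(new_src, new_ns)
    divergences = []
    for name in sorted(changed_functions(old_src, new_src)):
        for args in inputs.get(name, []):
            before, after = old_ns[name](*args), new_ns[name](*args)
            if before != after:
                divergences.append((name, args, before, after))
    return divergences


OLD = """
def clamp(x, lo, hi):
    return max(lo, min(x, hi))

def double(x):
    return 2 * x
"""

NEW = """
def clamp(x, lo, hi):
    return max(lo, min(x, hi - 1))

def double(x):
    return 2 * x
"""

report = differential_tests(OLD, NEW, {"clamp": [(5, 0, 10), (15, 0, 10)], "double": [(3,)]})
print(report)
```

Only `clamp` is exercised because only `clamp` changed, which is the "just in time" part: effort goes where the diff is. A divergence is not automatically a bug, since the change may be intentional, so a reviewer (or a smarter oracle) still has to judge each report.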

The outcome was a 4× improvement in bug detection: four times as many defects caught before code reaches production. For an organization shipping thousands of code changes per day, that multiplier adds up to fewer production incidents, less emergency engineering time, and lower costs from downstream failures. Meta reported this result on a large-scale, real-world codebase rather than a controlled benchmark.

The broader implication: if dynamically generated tests become standard practice, the bottleneck in software quality will shift from "do we have enough test coverage?" to "are our AI systems asking the right questions about what can go wrong?" That's a fundamentally different engineering problem — and one most teams aren't equipped for yet.

The AI Security Infrastructure Gap Nobody Has Closed Yet

All of this progress comes with a pointed warning from the infrastructure community. The Cloud Native Computing Foundation (CNCF — the organization that governs Kubernetes and most major open-source cloud tools) issued an explicit statement in April 2026: Kubernetes is not sufficient for securing LLM workloads.

Kubernetes (an open-source system for deploying and managing applications across clusters of servers) was designed to orchestrate containers — it tracks whether a container is running, how much memory it's consuming, and whether it needs restarting. But it has zero visibility into what an AI agent is deciding inside that container. An agent that chooses to exfiltrate data, call unauthorized external services, or ignore rate limits looks identical to a legitimate agent from Kubernetes' perspective. The threat model (the complete set of risks a security system is designed to detect and prevent) for AI workloads is fundamentally different from what Kubernetes was built to address — and no widely adopted solution exists yet.
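The CNCF statement does not prescribe a specific fix. The following Python sketch only illustrates the gap it describes: a check that runs where the agent makes its decisions, inspecting each outbound call against a host allowlist and a rate limit and logging every verdict. Kubernetes sees none of this. `ToolCallGuard` and the example hostnames are hypothetical.

```python
import time
from collections import deque
from typing import Callable
from urllib.parse import urlparse


class ToolCallGuard:
    """Policy check applied to each outbound call an agent decides to make."""

    def __init__(self, allowed_hosts: set[str], max_calls: int, window_s: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.allowed_hosts = allowed_hosts
        self.max_calls = max_calls
        self.window_s = window_s
        self.clock = clock
        self._recent: deque = deque()  # timestamps of allowed calls inside the window
        self.audit_log: list[tuple[str, str]] = []  # every decision, for later review

    def check(self, url: str) -> bool:
        now = self.clock()
        while self._recent and now - self._recent[0] >= self.window_s:
            self._recent.popleft()  # drop calls that have aged out of the window
        host = urlparse(url).hostname or ""
        if host not in self.allowed_hosts:
            verdict = "deny:host"  # possible exfiltration or unauthorized service
        elif len(self._recent) >= self.max_calls:
            verdict = "deny:rate"  # agent is exceeding its rate limit
        else:
            self._recent.append(now)
            verdict = "allow"
        self.audit_log.append((url, verdict))
        return verdict == "allow"


fake_now = [0.0]  # controllable clock so the example is deterministic
guard = ToolCallGuard({"api.internal.example"}, max_calls=2, window_s=60.0, clock=lambda: fake_now[0])
results = [
    guard.check("https://api.internal.example/v1/search"),
    guard.check("https://api.internal.example/v1/search"),
    guard.check("https://api.internal.example/v1/search"),  # third call inside 60 s
    guard.check("https://paste.example.net/upload"),  # host not on the allowlist
]
fake_now[0] = 61.0  # the rate-limit window has passed
results.append(guard.check("https://api.internal.example/v1/search"))
print(results)
```

In practice a check like this would complement, not replace, cluster-level controls such as network policies. The point is that the audit log records *what the agent tried to do*, which container health metrics cannot.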

GitHub's recent outages, attributed to architectural coupling (when system components are so tightly connected that one failure cascades into others) and load-scaling failures, point to a related infrastructure risk. If GitHub, one of the most battle-hardened software platforms in existence, is struggling under rapid growth, the infrastructure behind newer AI agent systems is likely to face the same or steeper challenges.

What This Week's AI Automation Releases Mean for Your Team

These aren't incremental updates. In the span of one week, Google showed that AI can autonomously score 91.9% on olympiad-level proofs and solve 6 of 10 novel research problems, LinkedIn built memory infrastructure for stateful AI teams, AWS shipped governance tools for organizations overwhelmed by disconnected agents, and Meta automated a testing process that previously required senior engineering judgment, achieving 4× better bug detection.

The consistent pattern: 2026 is the year AI agents stop being "chat assistants you configure once" and become "autonomous workers you need to manage." That shift delivers real capability gains — and equally real security and infrastructure gaps that existing tools weren't designed to handle.

Three concrete steps worth taking before your next sprint: (1) Evaluate whether your AI workflows retain session memory, or whether your agents start from scratch every interaction. (2) Check whether your security tooling can inspect AI behavior inside containers, not just container health metrics. (3) Explore JiT-style dynamic test generation; Meta's 4× result suggests the payoff can be substantial and measurable.
