Claude Opus 4.7: 40% Token Inflation at the Same Price

Claude Opus 4.7 keeps the $5/M price but burns 40% more tokens per task. Simon Willison's free token counter reveals your real cost before you upgrade.

Anthropic's Claude Opus 4.7 landed on April 16, 2026 with a number that looked reassuring: the same price as the model it replaced. But researcher Simon Willison ran the actual numbers — and found your real bill quietly jumped by 40%.

Willison's blog has become one of the most reliable early-warning systems for hidden AI pricing surprises. This time he built a free token-counting tool that compares Opus 4.7 against 4.6 side by side — and the results reveal exactly where the extra cost hides.

Claude Opus 4.7: Same Price, Higher Real Bill

Anthropic set Opus 4.7 at $5 per million input tokens and $25 per million output tokens, identical to Opus 4.6. On paper, upgrading costs nothing extra. In practice, the new tokenizer (the internal system that converts your text into numbered chunks an AI can process) can break the same content into roughly 40% more pieces. More pieces means more tokens, and more tokens means more money, even at the identical per-token rate.

Anthropic acknowledged the tradeoff in its own announcement: "Opus 4.7 uses an updated tokenizer that improves how the model processes text. The tradeoff is that the same input can map to more tokens — roughly 1.0–1.35× depending on the content type."
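To see why an identical price can still mean a bigger bill, here is a minimal Python sketch that prices one request at the list rates above. The 10,000-input / 2,000-output workload is a hypothetical placeholder. Applying one multiplier to both input and output tokens is a simplifying assumption, not something Anthropic specifies.

```python
# List prices quoted in the article, in US dollars per million tokens.
# Opus 4.6 and Opus 4.7 share the same rates.
INPUT_PRICE_PER_M = 5.00
OUTPUT_PRICE_PER_M = 25.00


def request_cost(input_tokens: float, output_tokens: float) -> float:
    """Dollar cost of one request at the shared Opus list price."""
    return (input_tokens * INPUT_PRICE_PER_M + output_tokens * OUTPUT_PRICE_PER_M) / 1_000_000


def cost_with_inflation(input_tokens: float, output_tokens: float, multiplier: float) -> float:
    """Cost of the same request if the new tokenizer yields `multiplier` times as many tokens.

    Simplifying assumption: the same multiplier applies to input and output tokens.
    """
    return request_cost(input_tokens * multiplier, output_tokens * multiplier)


if __name__ == "__main__":
    # Hypothetical workload, counted on Opus 4.6: 10,000 input and 2,000 output tokens.
    base = request_cost(10_000, 2_000)
    for m in (1.0, 1.35):  # the two ends of Anthropic's stated range
        new = cost_with_inflation(10_000, 2_000, m)
        print(f"{m:.2f}x -> ${new:.4f} (base ${base:.4f}, +{(new / base - 1):.0%})")
```

At 1.35×, the hypothetical request goes from $0.10 to $0.135. The per-token price never moved; only the token count did.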

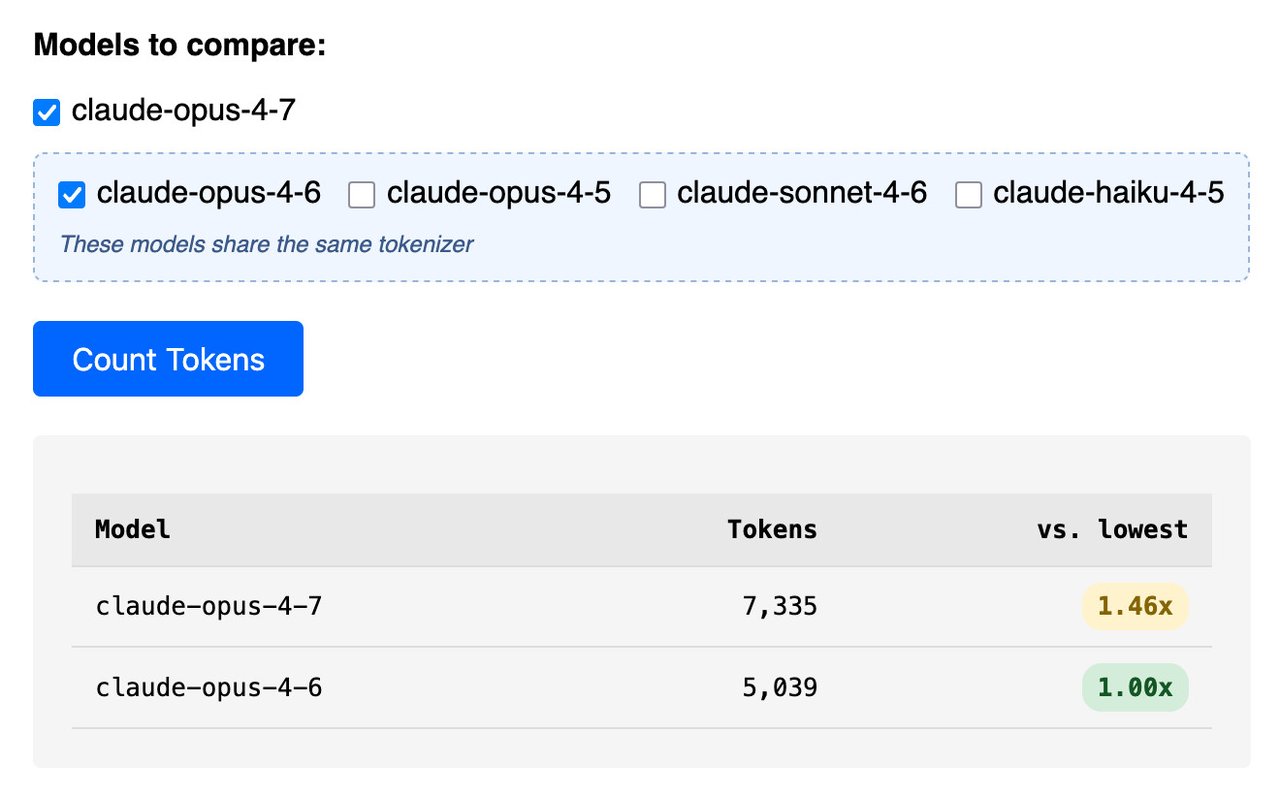

Willison's real-world testing found the ceiling sits higher than that official range. His system prompt test showed 1.46× token inflation, above the stated upper bound of 1.35×, which suggests that at least some real production content fares worse than Anthropic's estimate.
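If you run your own before-and-after counts, checking them against Anthropic's stated range only takes a ratio. This sketch assumes you already have an Opus 4.6 count and an Opus 4.7 count for each sample; the sample names and numbers in it are hypothetical.

```python
# Upper end of the tokenizer range Anthropic states for Opus 4.7 versus 4.6.
STATED_MAX_RATIO = 1.35


def inflation_ratio(tokens_old: int, tokens_new: int) -> float:
    """How many times more tokens the new tokenizer used for the same content."""
    if tokens_old <= 0:
        raise ValueError("tokens_old must be positive")
    return tokens_new / tokens_old


def flag_samples(samples: dict[str, tuple[int, int]]) -> dict[str, tuple[float, bool]]:
    """Map each sample name to (ratio rounded to 2 places, exceeds_stated_range)."""
    report = {}
    for name, (old, new) in samples.items():
        ratio = inflation_ratio(old, new)
        report[name] = (round(ratio, 2), ratio > STATED_MAX_RATIO)
    return report


if __name__ == "__main__":
    # Hypothetical counts for illustration only; substitute numbers from your own tests.
    samples = {
        "system_prompt": (1_000, 1_460),
        "faq_answer": (800, 1_000),
    }
    for name, (ratio, over) in flag_samples(samples).items():
        note = "  <-- above stated range" if over else ""
        print(f"{name}: {ratio}x{note}")
```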

Three Content Types, Three Very Different Surprises

Willison's Claude Token Counter makes the gap visible across content types. The inflation is not uniform, and where it hits hardest depends entirely on what you send the model:

- Text and system prompts: 1.46× inflation. The same text takes 46% more tokens on Opus 4.7 than on Opus 4.6, which at an unchanged per-token price means a comparable jump in cost. This affects every text-based chat, coding, or summarization task.

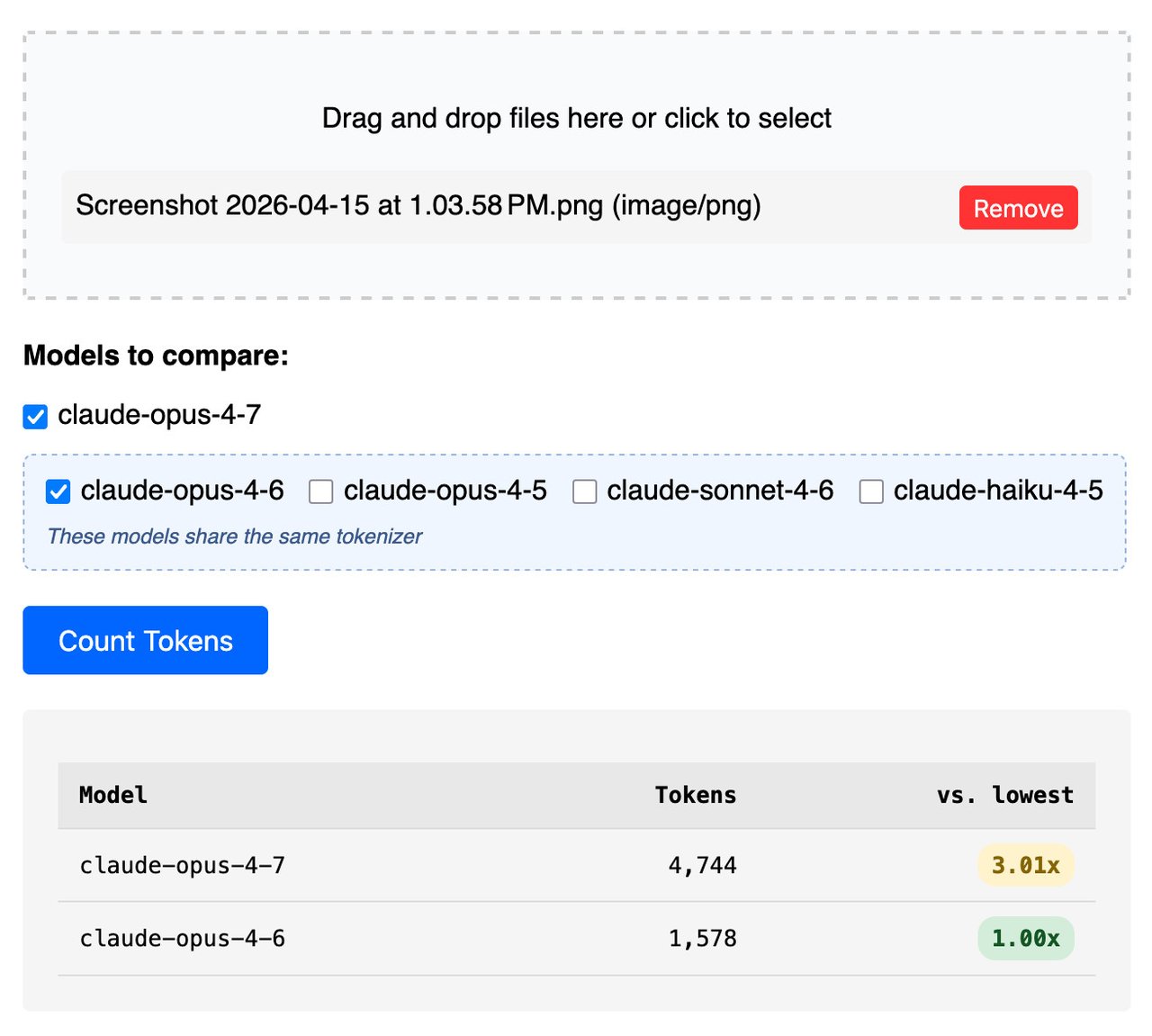

- High-resolution images: 3.01× inflation. Opus 4.7 now accepts images up to 3.75 megapixels (up to 2,576 pixels on the long edge), roughly three times the resolution ceiling of previous Claude models. That capability roughly triples token costs for genuinely large images: a standard 682×318 screenshot showed zero difference between versions, but a 3,456-pixel-wide image tripled in token count.

- Large PDFs: 1.08× inflation. A 15MB, 30-page document needed only 8% more tokens. Document-heavy workflows are by far the safest migration path to Opus 4.7.
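The zero difference for the small screenshot and the jump for the large image fit a simple model of how images are billed. An image is first downscaled to fit the model's size limits, then costs roughly width × height / 750 tokens, which is the rule of thumb in Anthropic's vision documentation. The sketch below assumes that rule carries over to Opus 4.7. It takes the 2,576 px / 3.75 MP ceiling for Opus 4.7 from the article and assumes approximately 1,568 px / 1.15 MP for Opus 4.6. It is an estimate, not a reproduction of Willison's exact counts.

```python
import math

# Assumed size limits. The Opus 4.7 values come from the article; the Opus 4.6
# values are approximations based on Anthropic's guidance for earlier models.
LIMITS = {
    "opus-4.6": {"max_long_edge": 1568, "max_pixels": 1_150_000},
    "opus-4.7": {"max_long_edge": 2576, "max_pixels": 3_750_000},
}


def estimate_image_tokens(width: int, height: int, model: str) -> int:
    """Rough token estimate: shrink the image to fit the limits, then apply w*h/750."""
    limits = LIMITS[model]
    scale = min(
        1.0,  # small images are never upscaled
        limits["max_long_edge"] / max(width, height),
        math.sqrt(limits["max_pixels"] / (width * height)),
    )
    scaled_pixels = (width * scale) * (height * scale)
    return math.ceil(scaled_pixels / 750)


def image_inflation(width: int, height: int) -> float:
    """Estimated Opus 4.7 / Opus 4.6 token ratio for a single image."""
    return estimate_image_tokens(width, height, "opus-4.7") / estimate_image_tokens(
        width, height, "opus-4.6"
    )


if __name__ == "__main__":
    print(image_inflation(682, 318))    # small screenshot: fits both limits unchanged
    print(image_inflation(3456, 2304))  # the height here is a hypothetical 3:2 assumption
```

Under these assumptions the screenshot fits inside both limits and costs the same on both models. The large image is shrunk much harder on the older model, so the newer one sees, and bills for, several times as many pixels.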

The critical question for any team: which content type dominates your API calls? Text-heavy chatbots should expect token counts up to about 46% higher. Image-processing pipelines that send high-resolution photos could see image costs roughly triple. PDF summarization tools escape with minimal impact. Knowing your mix before migrating is the difference between a planned budget increase and a surprise invoice.
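Because the multipliers differ so much by content type, a blended estimate is more useful than any single number. The sketch below weights the three multipliers from Willison's tests by each type's share of your current token volume. Treat it as a rough planning aid: it assumes every image behaves like the large one (the small screenshot showed no increase), so it overstates image-heavy mixes.

```python
# Opus 4.7 tokens / Opus 4.6 tokens per content type, as reported in the article.
MULTIPLIERS = {"text": 1.46, "images": 3.01, "pdfs": 1.08}


def blended_multiplier(token_share: dict[str, float]) -> float:
    """Weighted-average multiplier for a content mix.

    `token_share` maps each content type to its fraction of your current
    (Opus 4.6) token volume; the fractions must sum to 1.
    """
    total = sum(token_share.values())
    if abs(total - 1.0) > 1e-9:
        raise ValueError(f"shares must sum to 1, got {total}")
    return sum(MULTIPLIERS[kind] * share for kind, share in token_share.items())


if __name__ == "__main__":
    # Hypothetical mix: 70% text, 10% images, 20% PDFs by token volume.
    print(round(blended_multiplier({"text": 0.7, "images": 0.1, "pdfs": 0.2}), 3))
```

For that hypothetical mix the blended multiplier works out to about 1.54, so a $1,000 monthly bill on Opus 4.6 would land around $1,540 on Opus 4.7 under these assumptions.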

The Token Counter — Test Your Content Before You Commit

The tool lives at tools.simonwillison.net/claude-token-counter. Paste any content — a system prompt, a document excerpt, an image URL — and it returns the exact token count for four models simultaneously: Opus 4.7, Opus 4.6, Sonnet 4.6, and Haiku 4.5.

The cross-model comparison is the actionable part. You can see immediately whether a task would be cheaper on Sonnet 4.6 than on Opus 4.7, or whether Haiku 4.5 might handle it well enough at a fraction of the per-token price. For teams running thousands of API calls (automated requests to the model) daily, catching a 40% inflation before migrating is a real budgeting decision, not a footnote.
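If you would rather script the comparison than paste content into the web tool, Anthropic's API exposes a token-counting endpoint (`client.messages.count_tokens` in the official `anthropic` Python SDK). The sketch below is one possible approach, not how Willison's tool is built. The model ID strings are assumptions to check against Anthropic's model list. The counter is passed in as a function so the comparison logic can be tested without an API key.

```python
from typing import Callable

# Assumed model identifiers; confirm the exact IDs in Anthropic's model list.
MODELS = ("claude-opus-4-7", "claude-opus-4-6", "claude-sonnet-4-6", "claude-haiku-4-5")

CountFn = Callable[[str, str], int]  # (model, text) -> input token count


def compare_models(
    text: str,
    count_tokens: CountFn,
    models: tuple[str, ...] = MODELS,
    baseline: str = "claude-opus-4-6",
) -> dict[str, tuple[int, float]]:
    """Return {model: (tokens, ratio_vs_baseline)} for the same piece of text."""
    counts = {m: count_tokens(m, text) for m in models}
    base = counts[baseline]
    return {m: (n, n / base) for m, n in counts.items()}


def anthropic_counter() -> CountFn:
    """Build a counter backed by Anthropic's token-counting endpoint.

    Requires the `anthropic` package and an ANTHROPIC_API_KEY environment variable.
    """
    import anthropic  # imported lazily so compare_models works without the SDK

    client = anthropic.Anthropic()

    def count(model: str, text: str) -> int:
        result = client.messages.count_tokens(
            model=model,
            messages=[{"role": "user", "content": text}],
        )
        return result.input_tokens

    return count
```

Calling `compare_models(open("system_prompt.txt").read(), anthropic_counter())` (the file name is hypothetical) would return each model's count alongside its ratio to Opus 4.6. Because the endpoint counts a full request, its figures may include a few tokens of message overhead beyond the raw text.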

Willison also released llm-openrouter 0.6 this week. Its new `llm openrouter refresh` command pulls real-time model availability from OpenRouter (a platform that hosts dozens of AI models under one roof), making it easy to compare current options across providers. Install the plugin and refresh the model list with:

```shell
pip install llm-openrouter
llm openrouter refresh
```

What Else Changed in Claude Opus 4.7's System Prompt

Willison maintains an archive of Anthropic's system prompts (the hidden instructions that shape how Claude behaves) dating back to July 2024. The diff between Opus 4.6 and 4.7 reveals several behavioral changes beyond the tokenizer:

- tool_search: Claude now uses a `tool_search` command to check for available-but-deferred tools before claiming it can't do something, reducing the false "I don't have that capability" responses that frustrated developers in earlier versions.

- Conciseness instruction added: The system prompt now explicitly tells Claude to attempt tasks without quizzing users first. Verbatim: "When a request leaves minor details unspecified, the person typically wants Claude to make a reasonable attempt now, not to be interviewed first."

- New platform integrations surfaced: The 4.7 system prompt mentions Claude in Chrome, Claude in Excel, Claude in PowerPoint, and Claude Cowork — indicating Anthropic is embedding Claude into mainstream productivity software beyond its standalone interface.

- Expanded child safety guardrails: A new `<critical_child_safety_instructions>` section appears with more explicit safety rules. Developers building creative or educational tools should test edge-case content against 4.7 to confirm the new guardrails don't affect their workflows.

- Emote language removed: The *italic action description* pattern present in Opus 4.6 has been stripped from 4.7, making responses cleaner in plain-text contexts.

The Bigger Shift: AI Automation Agents Drive API-First SaaS

The Opus 4.7 token story sits inside a broader trend Willison documented this same week. Salesforce CEO Marc Benioff announced "Headless 360" on April 19:

"Welcome Salesforce Headless 360: No Browser Required! Our API is the UI. Entire Salesforce & Agentforce & Slack platforms are now exposed as APIs." — Marc Benioff, Salesforce CEO

The logic is straightforward: when an AI agent needs to update a CRM record, navigating a browser GUI (the old robotic process automation approach — think bots clicking through menus) is slow and fragile. A direct API call is faster and more dependable. Developer Brandur Leach put it plainly: "Suddenly, an API is no longer a liability, but a major saleable vector to give users what they want — a way into the services they use and pay for so that an agent can carry out work on their behalf."

For Claude API users, this shift makes per-token economics more important, not less. A 40% token inflation on Opus 4.7 matters far more in an agent loop that runs 200 sub-tasks than in a single one-off chat prompt. The more you automate with Claude, the more the tokenizer change hits your bottom line.
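The agent-loop point is about absolute dollars rather than percentages. This sketch models a hypothetical loop that resends its growing conversation history at every step, which is how a stateless chat API is typically driven. All sizes are made-up placeholders, and the single multiplier is a simplifying assumption.

```python
# Same list prices as quoted earlier (USD per million tokens).
INPUT_PRICE_PER_M = 5.00
OUTPUT_PRICE_PER_M = 25.00


def agent_loop_cost(
    steps: int,
    base_context: int,
    tokens_added_per_step: int,
    output_per_step: int,
    multiplier: float = 1.0,
) -> float:
    """Total dollar cost of a hypothetical agent loop that resends its context each step.

    Token sizes are Opus 4.6-equivalent counts; `multiplier` scales them to the
    new tokenizer (simplifying assumption: one multiplier for all tokens).
    """
    total_in = 0.0
    total_out = 0.0
    context = base_context
    for _ in range(steps):
        total_in += context * multiplier          # the whole history is sent again
        total_out += output_per_step * multiplier
        context += tokens_added_per_step + output_per_step  # history grows every step
    return (total_in * INPUT_PRICE_PER_M + total_out * OUTPUT_PRICE_PER_M) / 1_000_000


if __name__ == "__main__":
    for steps in (1, 200):
        before = agent_loop_cost(steps, 10_000, 500, 500)
        after = agent_loop_cost(steps, 10_000, 500, 500, multiplier=1.4)
        print(f"{steps} step(s): ${before:.4f} -> ${after:.4f} (+${after - before:.4f})")
```

With these placeholder sizes, one call costs $0.0625 and a 1.4× tokenizer adds $0.025. Over 200 steps the same settings cost $112 before inflation and add $44.80 after it: the same 40%, on a much larger base.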

Run the Token Counter now with your actual system prompts before committing to Opus 4.7. If you're starting to build Claude-powered workflows, the AI automation guide covers how to pick the right model tier for your budget — and when cheaper alternatives are genuinely good enough.
