Claude Code Jumps to $100/mo; Codex Stays on the Free and $20 Plans

Claude Code quietly jumped to $100/mo with no warning. OpenAI Codex stays on the free and $20 plans. Qwen's 27B model beats its 397B predecessor and runs on your laptop.

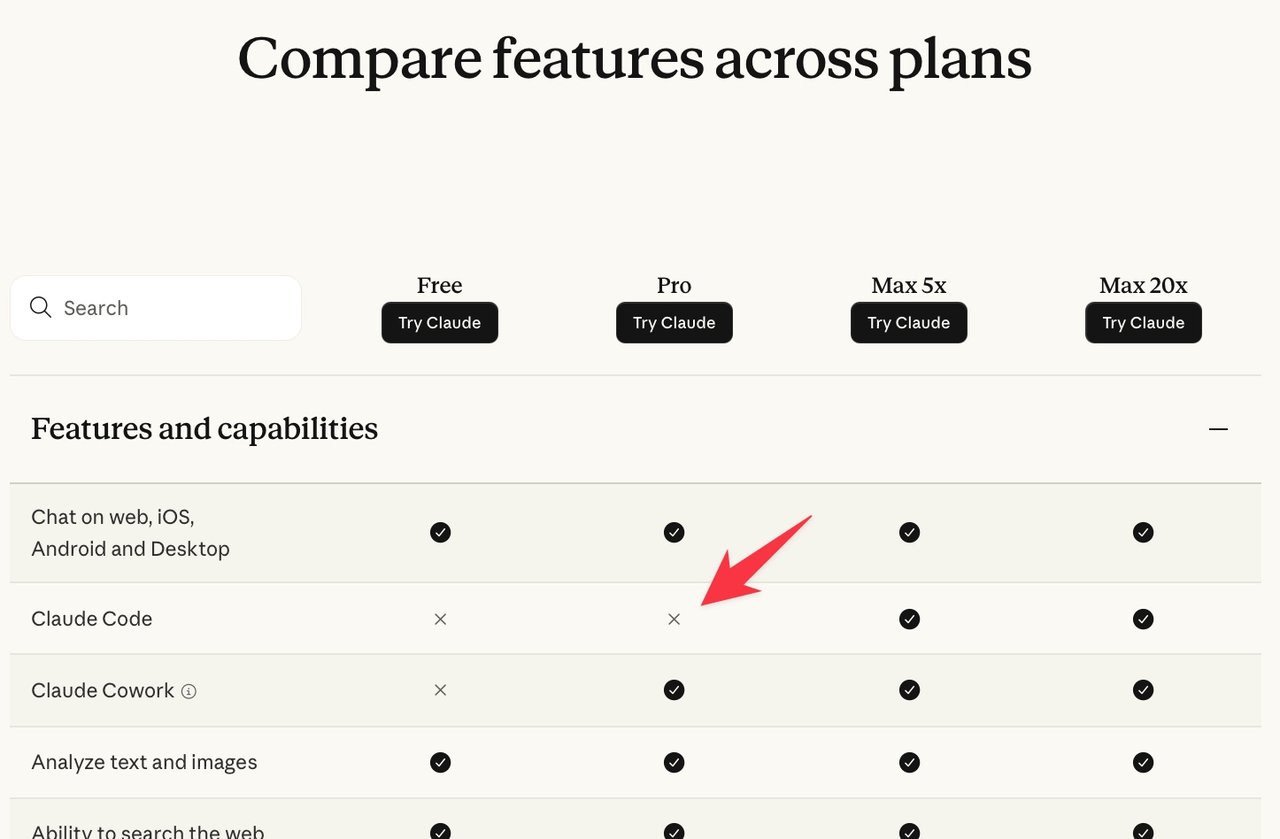

Anthropic silently updated its pricing to restrict Claude Code — its AI automation agent that writes and runs code inside your terminal — to Max plans starting at $100 per month, removing it from the $20/month Pro plan with no public announcement. The move landed the same day GitHub Copilot paused new signups entirely, while OpenAI went the opposite direction: Codex stays free and on the $20 Plus plan.

Claude Code's $80 Price Hike: No Official Announcement

Claude Code was available to $20/month Pro subscribers. On April 22, developers noticed the pricing page had changed: Claude Code now requires a Max plan — $100/month minimum, or $200/month for the 20x usage tier (a version with higher monthly usage limits). No blog post. No email. No changelog entry.

The only official word came from Amol Avasare, Anthropic's Head of Growth, in a tweet: "For clarity, we're running a small test on ~2% of new prosumer signups. Existing Pro and Max subscribers aren't affected." But the pricing grid was publicly visible to anyone who visited the page — and the Internet Archive captured the change in real time. The "small test" framing didn't match what developers were seeing.

The backlash was immediate across Reddit, Hacker News, and Twitter — developers calculating a 5x price increase on a tool they'd integrated into daily vibe coding and AI automation workflows. Analyst Simon Willison put the strategic risk plainly: "I'm not convinced that [Claude Code's] reputation is strong enough for it to lose the $20/month trial and jump people directly to a $100/month subscription."

Adding to the confusion: Claude Cowork — functionally a rebranded version of Claude Code — remains available on the $20 Pro plan, creating a product identity problem at exactly the wrong moment.

GitHub Copilot Hit the Same Compute Wall — and Paused New Signups

Anthropic wasn't alone. GitHub announced it had paused new individual plan signups for Copilot, citing a structural mismatch between its pricing model and actual compute costs from agentic use.

"Agentic workflows have fundamentally changed Copilot's compute demands," the company stated. "Long-running, parallelized sessions now regularly consume far more resources than the original plan structure was built to support."

Agentic workflows (where an AI runs multiple steps autonomously — searching your codebase, editing files, running tests, and iterating — without you approving each action) can burn 10x to 100x more tokens (the units of text an AI processes) per session than a simple chat interaction. GitHub charges per-request, not per-token, which means a single long agentic session can erase margins that weren't modeled into the original pricing.
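The margin problem is easy to see with a little arithmetic. The sketch below uses hypothetical numbers throughout (a flat $0.04 per request, $10 of compute per million tokens, and a 2,000-token chat as the baseline); none of these figures come from GitHub, and the `margin` helper is made up for illustration.

```shell
# margin PRICE_PER_REQUEST TOKENS COST_PER_MILLION_TOKENS
# Prints revenue minus compute cost for one request, in dollars.
# All inputs used below are hypothetical, chosen only to show the shape of the problem.
margin() {
  awk -v price="$1" -v tokens="$2" -v per_million="$3" \
    'BEGIN { printf "%.2f\n", price - tokens * per_million / 1000000 }'
}

margin 0.04 2000 10      # simple chat: 0.02
margin 0.04 20000 10     # agentic session at 10x the tokens: -0.16
margin 0.04 200000 10    # agentic session at 100x the tokens: -1.96
```

Under these assumed prices, the flat fee covers a chat but loses money on every agentic session, and the loss grows with session length because revenue does not.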

The resulting changes to GitHub Copilot:

- Claude Opus 4.7 access restricted to the $39/month Pro+ plan

- Older Opus models dropped from the lineup entirely

Two related points add context:

- Windsurf, a competing AI coding tool, had already abandoned a similar credit-based pricing model under the same compute pressure

- Microsoft runs 75 products under the "Copilot" brand, 15 of them specifically titled "GitHub Copilot", making it nearly impossible for users to track which product is affected by which change

OpenAI's Counter: Codex Stays on Both the Free and $20 Plans

While Anthropic went quiet and GitHub scrambled, OpenAI's Codex engineering lead Thibault Sottiaux responded publicly: "I don't know what they are doing over there, but Codex will continue to be available both in the FREE and PLUS ($20) plans. We have the compute and efficient models to support it."

Codex (OpenAI's AI coding agent, separate from the older Codex model series) now occupies exactly the price slot Claude Code vacated. For individual developers evaluating coding agents today, Codex on the free or $20 Plus plan is the clearest short-term alternative while Anthropic's pricing stabilizes. Check our getting-started guides for AI coding workflows if you're building an AI automation setup from scratch.

Qwen3.6-27B: Beats a 397B Model and Runs on Your Laptop

The same week delivered a significant benchmark result from an unexpected direction. Alibaba's Qwen team released Qwen3.6-27B, a 27-billion-parameter model (parameters are the learned weights, so the count is a rough measure of model size) that outperforms the previous-generation Qwen3.5-397B-A17B on all major coding benchmarks, despite being roughly 15 times smaller by file size.

The specs that matter for daily use:

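- Full size: 55.6GB vs. 807GB for the 397B model, roughly a 15x file size reduction (807 / 55.6 ≈ 14.5)

- Quantized version: 16.8GB in GGUF format (the file format llama.cpp loads; the 4-bit quantization is what shrinks the weights enough to run locally without a GPU server), fits on a MacBook Pro with more than 16GB of RAM, since the file alone is 16.8GB before any context memory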

- Speed: 25.57 tokens/second generation — fast enough for interactive coding sessions

- Complex output test: Generated 4,444 tokens for a pelican-on-bicycle SVG image in 173 seconds; 6,575 tokens for an opossum-on-e-scooter SVG in 265 seconds — both producing valid, correctly structured output
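The figures in this list can be cross-checked with simple division. A minimal sketch, using only the numbers quoted above; the `ratio` helper is a hypothetical name introduced for illustration.

```shell
# ratio A B -> prints A / B to two decimal places
ratio() {
  awk -v a="$1" -v b="$2" 'BEGIN { printf "%.2f\n", a / b }'
}

ratio 4444 173    # pelican SVG run, tokens per second: 25.69
ratio 6575 265    # opossum SVG run, tokens per second: 24.81
ratio 807 55.6    # 397B file size over 27B file size: 14.51
ratio 55.6 16.8   # full-size file over quantized file: 3.31
```

Both SVG runs land close to the quoted 25.57 tokens/second, and the size ratio is about 14.5, which the article rounds to 15x.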

The model is open-source and MIT licensed. You can run it on your own machine using llama.cpp (an open-source tool for running AI models on consumer hardware — no cloud subscription required):

    # Install llama.cpp (Mac)
    brew install llama.cpp

    # Run Qwen3.6-27B locally: no subscription, no API key
    llama-server \
      -hf unsloth/Qwen3.6-27B-GGUF:Q4_K_M \
      --no-mmproj \
      -np 1 \
      -c 65536 \
      --jinja \
      --temp 0.6 \
      --top-p 0.95 \
      --top-k 20 \
      --reasoning on

When a 27B model matches a 397B predecessor, the pricing justification for large proprietary models weakens significantly. Qwen3.6-27B runs on your machine, costs nothing per month, and posts competitive benchmarks. Watch for it to become the default local coding alternative as Copilot and Claude Code continue raising their rates.
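Once `llama-server` is running, it can be queried over HTTP from any script. The following is a minimal sketch, assuming the server is listening on its default address (127.0.0.1, port 8080) and that you use its OpenAI-compatible chat endpoint. The helper names `json_escape`, `chat_payload`, and `ask_local`, and the `LLAMA_URL` variable, are hypothetical and introduced only for illustration.

```shell
# Assumption: llama-server is on its default host and port.
# Override LLAMA_URL if you started it with a different --host or --port.
LLAMA_URL="${LLAMA_URL:-http://127.0.0.1:8080/v1/chat/completions}"

# Escape backslashes and double quotes so the prompt is safe inside a JSON string.
# Newlines and control characters are not handled; keep prompts on one line.
json_escape() {
  printf '%s' "$1" | sed -e 's/\\/\\\\/g' -e 's/"/\\"/g'
}

# Build the request body for a single user message.
chat_payload() {
  printf '{"messages":[{"role":"user","content":"%s"}]}' "$(json_escape "$1")"
}

# Send the prompt to the local server and print the raw JSON response.
ask_local() {
  curl -s "$LLAMA_URL" \
    -H 'Content-Type: application/json' \
    -d "$(chat_payload "$1")"
}
```

With the server up, `ask_local 'Write a function that reverses a string'` prints the raw JSON reply. Sampling settings such as temperature are left out of the payload so the values passed on the server command line apply.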

Firefox Found 271 Security Bugs With Claude — One Risk Worth Watching

Firefox 150 shipped with fixes for 271 security vulnerabilities, all identified using Claude Mythos Preview in a collaboration between Mozilla and Anthropic. Firefox CTO Bobby Holley called it a turning point: "Defenders finally have a chance to win, decisively."

For Firefox's hundreds of millions of users, the outcome is meaningful — AI-assisted security catching 271 issues that manual audits might have missed is a real safety gain. But the same week also demonstrated a structural risk: if Anthropic quietly changes pricing or access terms for Claude Mythos — as it appears to have done for Claude Code — what happens to Mozilla's security workflow? Organizations building critical processes on proprietary AI tools with opaque pricing policies should plan for that scenario before they're locked in. Follow our latest AI automation news as this story develops.
