ChatGPT Images 2.0 just topped Gemini Nano Banana by 241...

ChatGPT Images 2.0 leads Gemini by 241 ELO points, enough to win an expected 80% of head-to-head quality votes. Gemini renders in 2–8s and charges half the price per image.

On April 21, 2026, OpenAI shipped ChatGPT Images 2.0, powered by a new model called gpt-image-2 (the underlying AI engine that runs behind the chat interface). Within 12 hours it climbed to a 1,512 ELO score on LMArena, 241 points ahead of Google's Gemini Nano Banana. In ELO systems (a ranking method borrowed from competitive chess, where the rating gap between two contestants translates directly into an expected win probability), a 241-point gap means GPT Image 2 should win roughly 80% of direct head-to-head matchups. The catch: Gemini generates images at roughly twice the speed, for half the per-image price.

The 241-Point Quality Lead — and What It Actually Means

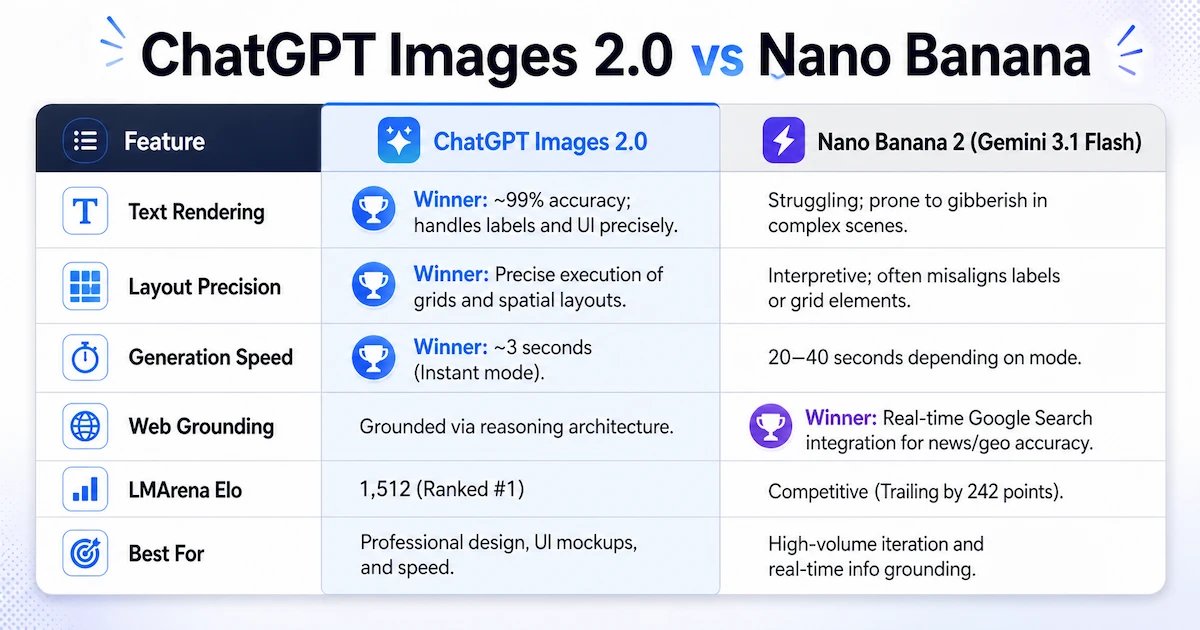

LMArena is a public leaderboard where real users cast blind preference votes in side-by-side AI image comparisons — one of the more trusted quality signals in the industry because it measures human preference at scale rather than automated lab metrics. ChatGPT Images 2.0 landed at 1,512 ELO; Gemini Nano Banana sits at 1,271. That gap opened within 12 hours of launch and has held steady.
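The roughly 80% figure follows from the standard Elo expected-score formula, E = 1 / (1 + 10^((R_B − R_A) / 400)). The sketch below plugs in the two LMArena ratings quoted above. It assumes the classic base-10, 400-point Elo scale and ignores ties; LMArena's exact rating method may differ in its details.

```python
def elo_expected_score(rating_a: float, rating_b: float) -> float:
    """Expected score of A against B under the classic Elo formula (ties ignored).

    Assumes the standard base-10 logistic curve with a 400-point scale.
    """
    return 1 / (1 + 10 ** ((rating_b - rating_a) / 400))


# Ratings quoted in this article: ChatGPT Images 2.0 vs Gemini Nano Banana.
p = elo_expected_score(1512, 1271)
print(f"Expected head-to-head win rate: {p:.1%}")
```

Because only the rating difference enters the formula, any two models 241 points apart get the same expected win rate, whatever their absolute scores.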

The most decisive edge: text rendering. ChatGPT Images 2.0 achieves ~99% character accuracy across Latin, Japanese, Korean, Chinese, Hindi, and Bengali scripts. DALL-E 3 — which OpenAI is retiring on May 12, 2026 — routinely scrambled letters and invented nonsense glyphs. Gemini Nano Banana still produces garbled or misspelled text in complex, multi-element compositions. For marketers building multilingual ad creatives or designers generating UI mockups, that accuracy gap is the entire ballgame.
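The article doesn't say how the ~99% character accuracy was measured. A common way to score rendered text is one minus the edit distance between the intended string and the text read back from the image (for example via OCR), divided by the intended length. The sketch below assumes that definition; the `char_accuracy` helper and the sample strings are hypothetical.

```python
def levenshtein(a: str, b: str) -> int:
    """Minimum number of single-character insertions, deletions, or substitutions."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, 1):
        cur = [i]
        for j, cb in enumerate(b, 1):
            cur.append(min(
                prev[j] + 1,               # delete ca
                cur[j - 1] + 1,            # insert cb
                prev[j - 1] + (ca != cb),  # substitute (free if equal)
            ))
        prev = cur
    return prev[-1]


def char_accuracy(reference: str, rendered: str) -> float:
    """Hypothetical metric: 1 - edit_distance / len(reference), clamped at 0."""
    if not reference:
        return 1.0 if not rendered else 0.0
    return max(0.0, 1 - levenshtein(reference, rendered) / len(reference))


# One scrambled letter ("L" rendered as "I") in a 19-character headline.
print(char_accuracy("SUMMER SALE 50% OFF", "SUMMER SAIE 50% OFF"))
```

Python strings are sequences of Unicode code points, so the same function scores Japanese, Korean, Chinese, Hindi, or Bengali text without changes. Under this definition, 99% accuracy still allows roughly one wrong character per 100.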

Spatial layout precision is the second category where GPT Image 2 pulls ahead. When a prompt specifies "left-aligned headline, product image right, price tag bottom-center," ChatGPT Images 2.0 follows it literally. Gemini interprets — placing elements approximately right, then improvising when the composition gets crowded. For teams generating dozens of layout variants per campaign, that reliability gap compounds fast.

Where Gemini Nano Banana Fights Back

Google's Nano Banana (an internal code name for Gemini's image generation engine — now in its second major version, also referred to as Gemini 3.1 Flash Image) holds two concrete advantages: speed and cost.

Generation times side by side:

- Gemini Nano Banana 2: 2–8 seconds per image

- ChatGPT Images 2.0 — Instant Mode: ~3–5 seconds

- ChatGPT Images 2.0 — Thinking Mode: 10–15 seconds

For a team generating 500 images daily, one after another, Gemini's 2–8 seconds per image adds up to roughly 17 to 67 minutes of total generation time, while the same job in ChatGPT Images 2.0 Thinking Mode (10–15 seconds per image) takes roughly 83 to 125 minutes, or up to about 2 hours. For draft ideation passes where speed matters more than perfection, Gemini wins by a margin that's hard to ignore.
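The batch arithmetic above is simply images × seconds per image. Here is a minimal sketch using the latency ranges from the list above, assuming images are generated sequentially with no parallel requests or queueing overhead.

```python
def batch_minutes(images: int, sec_low: float, sec_high: float) -> tuple[float, float]:
    """(fastest, slowest) total minutes when images are generated one after another."""
    return images * sec_low / 60, images * sec_high / 60


# Per-image latency ranges in seconds, as quoted in this article.
LATENCY = {
    "Gemini Nano Banana 2": (2, 8),
    "ChatGPT Images 2.0 Instant": (3, 5),
    "ChatGPT Images 2.0 Thinking": (10, 15),
}

for name, (lo, hi) in LATENCY.items():
    best, worst = batch_minutes(500, lo, hi)
    print(f"{name}: {best:.0f}-{worst:.0f} min for 500 images")
```

Running it shows that Instant Mode (about 25 to 42 minutes) overlaps Gemini's range, so the large gap is specific to Thinking Mode. Sending requests in parallel would shrink every wall-clock figure.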

Photorealism in natural environments is another Gemini stronghold. Outdoor scenes with atmospheric depth — golden-hour landscapes, overcast urban streets, storm-lit coastlines — consistently earn higher human preference scores on Gemini. The model applies richer contrast and stronger texture to natural light conditions. ChatGPT Images 2.0 leans toward cleaner, commercial-studio aesthetics by default.

Google's bigger differentiator may be personalization. Since April 16, 2026, Gemini can draw from your Google Photos library to generate images featuring you, your family, or specific real locations — without lengthy prompt engineering. Asking "generate a birthday card photo with me and my dog at the beach" now automatically pulls matching visual context from connected accounts. OpenAI has no equivalent feature yet.

By adoption metrics alone, Nano Banana Pro's reach is enormous: the model surpassed 1 billion image generations in 53 days, a pace that suggests Gemini's image tools command a wide, active audience despite the quality gap with ChatGPT.

What's Actually New Inside ChatGPT Images 2.0

The flagship addition is Thinking Mode — a reasoning pass where the model plans layout, composition, and spatial relationships before drawing a single pixel. Available exclusively to Plus ($20/month), Pro ($200/month), and Business subscribers, Thinking Mode unlocks four capabilities that weren't possible in gpt-image-1.5:

- Real-time web search during generation — the model can look up current product images, living people, or recent events it wasn't trained on

- Document analysis before generating — upload PDFs, screenshots, or brand guidelines and the model reads them first

- Up to 8 consistent outputs per prompt — essential for storyboards, manga sequences, or product variation sheets that require visual continuity across frames

- Self-review pass — the model checks its output for perspective errors, text typos, and proportion issues before returning the result

A single prompt like "design a 4-slide deck explaining quantum tunneling to a 12-year-old" now produces 4 coherent slides with consistent design language — previously that required 4 separate prompts and manual style matching between outputs.

Technical output specs also jumped. GPT Image 2 generates at native 2K resolution, double that of gpt-image-1.5, with 4K upscaling in beta. Aspect ratio support now covers 3:1 ultra-wide panoramas and 1:3 tall mobile formats, which matters for creators publishing the same content to several platforms with different format requirements.

The Price Breakdown — From Free Tier to Enterprise Scale

At the free tier, both tools are broadly equivalent: a few images per day at no cost. The gap opens at API scale — that's the technical pathway developers use to access image generation inside their own apps and automated workflows:

- GPT Image 2 API: $0.04–$0.08 per 1024px image

- Gemini Nano Banana API: $0.02–$0.04 per image

At 10,000 images per month, Gemini saves $200–$400 versus GPT Image 2. At enterprise scale, such as an e-commerce platform auto-generating 500,000 product shots a month, the annual cost difference comes to at least $120,000 and as much as $240,000. That's a budget line that changes procurement decisions, regardless of quality rankings.
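The savings figures come from multiplying volume by the per-image price ranges listed above, comparing the low end of one range with the low end of the other (and high with high). A minimal sketch, assuming flat per-image pricing with no volume discounts; the dictionary keys are illustrative labels, not official API model identifiers. Prices are kept in integer cents to avoid floating-point rounding.

```python
# Per-image API prices in US cents, (low, high), as quoted in this article.
# Keys are illustrative labels, not official model identifiers.
PRICE_CENTS = {"gpt-image-2": (4, 8), "gemini-nano-banana": (2, 4)}


def monthly_cost_cents(model: str, images: int) -> tuple[int, int]:
    """(low, high) monthly cost in cents, assuming flat per-image pricing."""
    lo, hi = PRICE_CENTS[model]
    return lo * images, hi * images


def monthly_savings_cents(images: int) -> tuple[int, int]:
    """Gemini savings vs GPT Image 2, comparing low-to-low and high-to-high."""
    g_lo, g_hi = monthly_cost_cents("gpt-image-2", images)
    n_lo, n_hi = monthly_cost_cents("gemini-nano-banana", images)
    return g_lo - n_lo, g_hi - n_hi


lo, hi = monthly_savings_cents(10_000)
print(f"10K images/month: ${lo / 100:,.0f}-${hi / 100:,.0f} saved per month")

lo, hi = monthly_savings_cents(500_000)
print(f"500K images/month: ${12 * lo / 100:,.0f}-${12 * hi / 100:,.0f} saved per year")
```

The enterprise figure only reaches $120,000 a year if the 500,000 images are a monthly volume. At 500,000 images per year, the difference would be $10,000–$20,000.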

Subscription tiers align closely at the individual level: Google AI Pro ($19.99/month) includes 100 images/day with Nano Banana Pro access, while ChatGPT Plus ($20/month) includes unlimited standard-mode images plus full Thinking Mode. For solo creators, the monthly outlay is nearly identical; the cost divergence becomes decisive only at API and business scale.

Picking the Right Tool for Your Work

The 241-point ELO lead is statistically significant — but it doesn't make Gemini Nano Banana the wrong choice for every workflow. The decision maps cleanly to use case:

- ChatGPT Images 2.0 wins for: marketing assets with precise text, multilingual infographics, UI mockups, multi-slide decks, brand-consistent image batches. The 99% text rendering accuracy and Thinking Mode's spatial planning justify the price premium for client-facing deliverables.

- Gemini Nano Banana 2 wins for: photorealistic outdoor scenes, Google Photos-personalized images, high-volume draft generation, Google Workspace integration, API-scale cost efficiency where $200–$400/10K images adds up fast.

- Hybrid approach: many agencies now run ChatGPT Images 2.0 for final hero images and Gemini for ideation rounds — capturing quality where it's visible while keeping draft costs low.
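The hybrid approach amounts to a routing rule plus a blended cost. The sketch below is hypothetical: the `route` rule, the stage names, and the 900-draft / 100-final campaign are illustrative assumptions. Only the per-image price ranges come from this article, and prices are held in integer cents.

```python
# Per-image API prices in US cents, (low, high), as quoted in this article.
PRICE_CENTS = {"gpt-image-2": (4, 8), "gemini": (2, 4)}


def route(stage: str) -> str:
    """Hypothetical rule: ideation drafts go to Gemini, everything else to GPT Image 2."""
    return "gemini" if stage == "draft" else "gpt-image-2"


def batch_cost_cents(jobs: list[tuple[str, int]], router=route) -> tuple[int, int]:
    """(low, high) cost in cents for a list of (stage, image_count) jobs."""
    low = high = 0
    for stage, count in jobs:
        lo, hi = PRICE_CENTS[router(stage)]
        low += lo * count
        high += hi * count
    return low, high


jobs = [("draft", 900), ("final", 100)]  # hypothetical campaign: 9 drafts per hero image
hybrid = batch_cost_cents(jobs)
gpt_only = batch_cost_cents(jobs, router=lambda stage: "gpt-image-2")
print(f"Hybrid: ${hybrid[0] / 100:.2f}-${hybrid[1] / 100:.2f}")
print(f"GPT Image 2 only: ${gpt_only[0] / 100:.2f}-${gpt_only[1] / 100:.2f}")
```

In this example the hybrid split costs roughly 55% of an all-GPT Image 2 run. The more drafts a campaign produces per final image, the greater the savings.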

DALL-E 3 users have until May 12, 2026 to migrate. Inside ChatGPT, the transition is automatic. For API integrations, update the model parameter in your requests from dall-e-3 to gpt-image-2 and test your prompts — some outputs will look noticeably different. You can try ChatGPT Images 2.0 now at chatgpt.com (standard mode, free tier) and Gemini Nano Banana at gemini.google.com. Run the same branded infographic prompt on both — text rendering is where the quality gap becomes immediately obvious.
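For API integrations, the core change is the `model` value. The helper below is a hypothetical sketch that swaps `dall-e-3` for `gpt-image-2` in a request dictionary and flags parameters worth re-checking. Which parameters to flag is an assumption based on how the earlier gpt-image-1 model handled DALL-E 3's `style` and `response_format` options and its `standard`/`hd` quality values; confirm against OpenAI's current Images API reference before relying on it.

```python
# Assumption: DALL-E 3 request options that earlier GPT image models did not accept.
DALLE3_ONLY_KEYS = {"style", "response_format"}


def migrate_image_request(params: dict) -> tuple[dict, list[str]]:
    """Hypothetical helper: return an updated copy of the request plus review notes."""
    updated = dict(params)  # leave the caller's dict untouched
    notes: list[str] = []
    if updated.get("model") == "dall-e-3":
        updated["model"] = "gpt-image-2"
        for key in sorted(DALLE3_ONLY_KEYS.intersection(updated)):
            notes.append(f"review '{key}': may not be supported by gpt-image-2")
        if updated.get("quality") in {"standard", "hd"}:
            notes.append("review 'quality': DALL-E 3 values 'standard'/'hd' may not map directly")
    return updated, notes


old = {"model": "dall-e-3", "prompt": "A branded infographic", "size": "1024x1024",
       "quality": "hd", "style": "vivid"}
new, notes = migrate_image_request(old)
print(new["model"])
for note in notes:
    print(note)

# With the official openai Python SDK, the cleaned-up request would then be sent as:
#   from openai import OpenAI
#   client = OpenAI()
#   result = client.images.generate(**new)
```

Flagging parameters instead of silently dropping them keeps the decision with you, which matters because, as noted above, some outputs will look noticeably different after the switch.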
