Google's Aletheia silently solved 6 novel math proofs —...

Google's AI autonomously solved 6 of 10 never-before-published math research problems and scored 91.9% on IMO-ProofBench. Meanwhile, five major tech companies shipped agent platforms within 14 days.

Google's Aletheia (an autonomous AI research system built by DeepMind) independently solved 6 of 10 brand-new, never-before-published mathematical research problems. It also scored 91.9% on IMO-ProofBench (a benchmark of olympiad-level proof problems). No human wrote a single proof step. This happened in the same two-week window (April 18–24, 2026) in which five major technology companies, Google among them, each shipped AI agent infrastructure platforms for enterprise use, signaling a shift from AI as a consumer product to AI as operational backbone.

When Math Became a Machine's Sport

Aletheia was tested in the FirstProof challenge, a competition designed specifically to give AI systems genuinely novel mathematical problems that have not appeared in any training dataset. It solved 6 of 10. Separately, it scored 91.9% on IMO-ProofBench (IMO stands for International Mathematical Olympiad, one of the world's hardest math competitions), a score that suggests expert-level performance on olympiad-style proof tasks.

The system runs on Gemini 3 Deep Think — Google's highest-capability reasoning model — and operates in a fully agentic loop (meaning it autonomously plans, attempts, fails, revises, and re-attempts without any human prompting). Unlike AI assistants that answer textbook math questions, Aletheia is doing genuine research: generating hypotheses, constructing formal proofs, and verifying its own conclusions from scratch.
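The plan, attempt, verify, revise cycle described above can be illustrated with a deliberately tiny sketch. It is not Aletheia's implementation, which the source does not describe at code level. The names `verify` and `solve` are hypothetical, and the "problem" is a toy (finding an exact integer square root) chosen only because it has an exact, independent verifier whose feedback can steer the next attempt.

```python
from typing import Optional, Tuple

# Hypothetical toy problem: find an integer x with x * x == target.
# The loop structure (attempt -> verify -> revise) is the point, not the math.


def verify(target: int, candidate: int) -> str:
    """Independently check a candidate; returns 'ok', 'too_low', or 'too_high'."""
    square = candidate * candidate
    if square == target:
        return "ok"
    return "too_low" if square < target else "too_high"


def solve(target: int, max_attempts: int = 64) -> Optional[Tuple[int, int]]:
    """Return (answer, attempts_used), or None if no answer is found."""
    low, high = 0, max(target, 1)
    for attempt in range(1, max_attempts + 1):
        candidate = (low + high) // 2        # plan and attempt
        verdict = verify(target, candidate)  # verify without trusting the attempt
        if verdict == "ok":
            return candidate, attempt
        if low >= high:
            return None                      # search space exhausted: give up
        # Revise: use the verifier's feedback to narrow the next attempt.
        if verdict == "too_low":
            low = candidate + 1
        else:
            high = candidate - 1
    return None                              # attempt budget exhausted
```

Calling `solve(144)` converges on 12 after a handful of failed attempts, while `solve(10)` returns `None` because no exact answer exists. The design point is that the verifier, not the proposer, decides when the loop stops.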

Why does this matter beyond academia? Automated proof discovery could support drug discovery (where mathematical guarantees underpin the accuracy of molecular simulations), cryptography (the math that protects every bank transaction and password on the internet), and compiler verification (the process that ensures software does exactly what it is supposed to do at the hardware level). If the approach generalizes, research institutions that currently spend years on a single theorem might do that work in weeks.

Five Platforms in Fourteen Days: The Enterprise Agent Race

While Aletheia attracted headlines, something equally significant happened in parallel: five cloud giants simultaneously shipped production-grade AI agent infrastructure in the same 14-day sprint. This is not coincidental — enterprise customers are pushing hard for AI that goes beyond chatbots and into operational systems that actually run business processes.

- Cloudflare Sandboxes (GA): Persistent, isolated Linux environments (think secure virtual computers that reset cleanly between sessions) for running AI agents. Features include snapshot-based session recovery (if an agent crashes mid-task, it picks up exactly where it left off), secure credential injection via an egress proxy (so agents authenticate to external services without exposing passwords in code), and a pay-only-for-active-CPU pricing model — you pay for actual computation, not reserved idle capacity.

- AWS DevOps Agent (GA): Amazon's answer for operations teams — handles incident troubleshooting, deployment analysis, and routine operational tasks using generative AI, integrating directly with existing AWS infrastructure. No migration required.

- Anthropic Managed Agents: A meta-harness architecture (a design pattern that cleanly separates what an agent does from the plumbing of how it runs — orchestration, sandboxing, state management, and credentials are handled automatically by the framework) enabling long-running, multi-step workflows with built-in error recovery and session continuity.

- Google ADK for Java 1.0: Google's Agent Development Kit reached its first stable release for Java developers, adding an app/plugin architecture, external tool integrations, advanced context engineering (techniques that give agents longer and more reliable working memory mid-task), and human-in-the-loop workflows for tasks that require a human sign-off.

- LinkedIn Cognitive Memory Agent (CMA): An infrastructure layer giving AI systems three distinct persistent memory types — episodic (remembering specific past events, like "last Tuesday's meeting"), semantic (storing generalizable facts, like user preferences), and procedural (retaining step-by-step workflows for recurring tasks). This directly addresses the biggest enterprise AI frustration: agents that forget everything the moment a session ends.
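The three memory types in the last item can be pictured as three differently shaped stores. The sketch below is illustrative only: `AgentMemory` and its methods are hypothetical names, not LinkedIn's CMA interface, and JSON is used here simply as a stand-in for whatever persistence layer a real system would use.

```python
import json
from dataclasses import dataclass, field
from datetime import datetime
from typing import Dict, List, Tuple


@dataclass
class AgentMemory:
    """Hypothetical container for the three memory types (not the CMA API)."""
    # Episodic: time-stamped records of specific past events.
    episodic: List[Tuple[datetime, str]] = field(default_factory=list)
    # Semantic: generalizable facts, keyed for direct lookup.
    semantic: Dict[str, str] = field(default_factory=dict)
    # Procedural: named workflows stored as ordered steps.
    procedural: Dict[str, List[str]] = field(default_factory=dict)

    def events_since(self, cutoff: datetime) -> List[str]:
        return [what for when, what in self.episodic if when >= cutoff]

    def to_json(self) -> str:
        """Serialize so the memory can outlive a single session."""
        return json.dumps({
            "episodic": [[when.isoformat(), what] for when, what in self.episodic],
            "semantic": self.semantic,
            "procedural": self.procedural,
        })

    @classmethod
    def from_json(cls, payload: str) -> "AgentMemory":
        data = json.loads(payload)
        return cls(
            episodic=[(datetime.fromisoformat(when), what)
                      for when, what in data["episodic"]],
            semantic=data["semantic"],
            procedural=data["procedural"],
        )


# Session 1: the agent accumulates memories of each kind.
memory = AgentMemory()
memory.episodic.append((datetime(2026, 4, 21, 10, 0), "weekly planning meeting"))
memory.semantic["preferred_language"] = "Java"
memory.procedural["release"] = ["run tests", "tag build", "deploy"]

# Session 2: a fresh process restores the same state from storage.
restored = AgentMemory.from_json(memory.to_json())
```

Keeping the three stores separate lets each be queried in the way that suits it: episodic memory by time, semantic memory by key, procedural memory by workflow name.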

The architectural pattern across all five is strikingly similar. The platforms converge on the same three enterprise problems: isolation (keeping agents from accessing what they shouldn't), state persistence (making agents remember context across long-running tasks), and cost predictability (billing for actual work performed, not reserved capacity), though each platform emphasizes a different one. Cloudflare's active-CPU model is arguably the first credible alternative to per-seat SaaS billing for agent workloads, and it is likely to pressure AWS and Anthropic to respond.
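To see why active-CPU billing matters for agent workloads, which often sit idle while waiting on model or network responses, it helps to compare the two pricing shapes side by side. The rate and workload numbers below are made-up placeholders, not Cloudflare or AWS prices, and the function names are hypothetical.

```python
def reserved_cost(wall_clock_hours: float, rate_per_hour: float) -> float:
    """Reserved capacity: billed for every hour the environment exists."""
    return wall_clock_hours * rate_per_hour


def active_cpu_cost(wall_clock_hours: float,
                    cpu_utilization: float,
                    rate_per_cpu_hour: float) -> float:
    """Active-CPU: billed only for the fraction of time the CPU is busy."""
    if not 0.0 <= cpu_utilization <= 1.0:
        raise ValueError("cpu_utilization must be between 0 and 1")
    return wall_clock_hours * cpu_utilization * rate_per_cpu_hour


# Hypothetical workload: an agent session stays open for 100 hours but
# keeps the CPU busy only 15% of that time. Both models use the same
# placeholder rate so the difference comes purely from idle time.
hours, utilization, rate = 100.0, 0.15, 0.10

reserved = reserved_cost(hours, rate)
active = active_cpu_cost(hours, utilization, rate)
savings_fraction = 1.0 - active / reserved

print(f"reserved: {reserved:.2f}, active-CPU: {active:.2f}, "
      f"savings: {savings_fraction:.0%}")
```

At equal nominal rates the savings fraction equals the idle fraction, so the model favors workloads that spend most of their time waiting. In practice, per-CPU-hour rates are usually set higher than reserved rates, which moves the break-even point.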

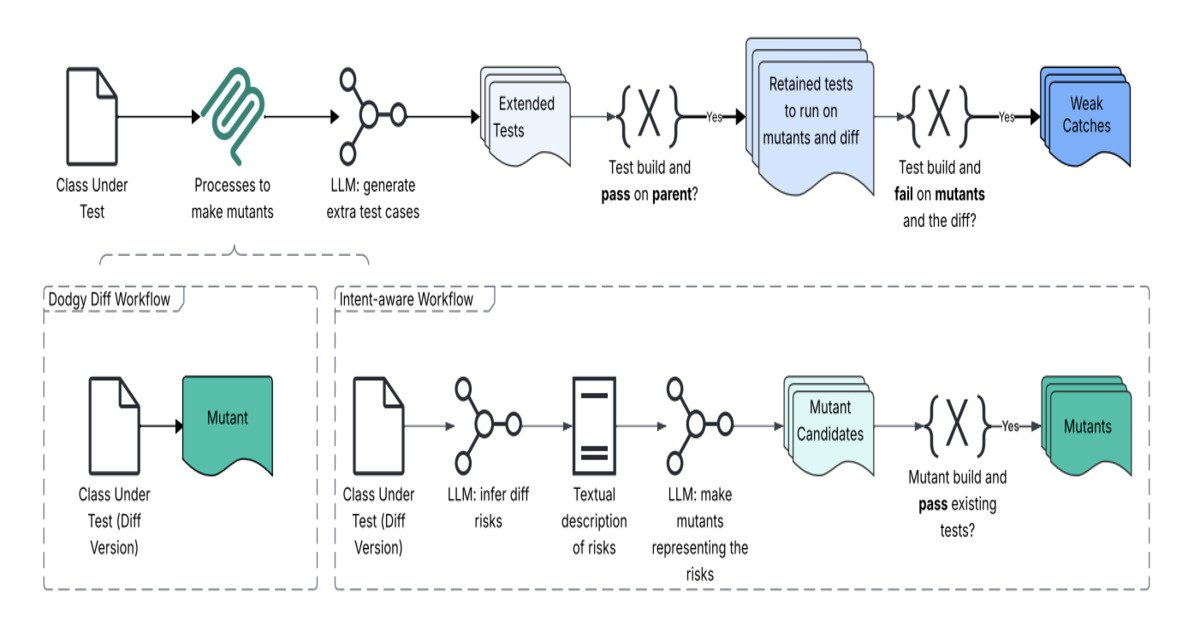

Meta's 4x Bug Detector and the Production Testing Gap

Meta shipped its Just-in-Time (JiT) testing system — and the results are concrete: approximately 4x improvement in bug detection during code review compared to traditional static test suites (pre-written test scripts that check code against fixed scenarios written months or years ago). The system combines three techniques:

- LLM-generated tests on the fly: Instead of relying on old tests, the AI reads each new code change and writes targeted tests specifically for that change — catching issues the original test suite was never designed to find

- Mutation testing: The system deliberately breaks code in small, controlled ways to verify that its generated tests actually catch real failures (not just pass by accident)

- Intent-aware "Dodgy Diff" detection: The AI flags code changes that look suspicious based on the developer's stated intent — catching cases where the code technically compiles but probably does the wrong thing

In practice: a developer submits a pull request (a proposed code change for review), the JiT system reads the change, generates targeted tests on the spot, runs them, and flags issues — before any human reviewer has even opened the file. For engineering teams handling dozens of pull requests per day, this translates directly to hours of saved review time per sprint and fewer bugs that make it to production.
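Mutation testing, the second technique in the list above, is easy to demonstrate in miniature. The sketch below is not Meta's JiT system. It uses Python's standard `ast` module to flip one arithmetic operator in a function under test, then checks whether a given test notices the change. The function `apply_discount` and the two tests are hypothetical examples.

```python
import ast

# Hypothetical code under test, kept as source text so it can be mutated.
SOURCE = '''
def apply_discount(price, discount):
    return price - discount
'''


class SubToAdd(ast.NodeTransformer):
    """Mutation operator: rewrite every `a - b` as `a + b`."""

    def visit_BinOp(self, node):
        self.generic_visit(node)
        if isinstance(node.op, ast.Sub):
            node.op = ast.Add()
        return node


def load(source: str, mutate: bool = False):
    """Compile the source (optionally mutated) and return apply_discount."""
    tree = ast.parse(source)
    if mutate:
        tree = ast.fix_missing_locations(SubToAdd().visit(tree))
    namespace = {}
    exec(compile(tree, "<under-test>", "exec"), namespace)
    return namespace["apply_discount"]


def weak_test(fn) -> bool:
    # With a zero discount, `-` and `+` give the same answer,
    # so this test cannot tell the original from the mutant.
    return fn(100, 0) == 100


def strong_test(fn) -> bool:
    return fn(100, 20) == 80


def kills_mutant(test) -> bool:
    """A test kills the mutant if it passes on the original and fails on the mutant."""
    return test(load(SOURCE)) and not test(load(SOURCE, mutate=True))


print("weak test kills mutant:  ", kills_mutant(weak_test))
print("strong test kills mutant:", kills_mutant(strong_test))
```

A generated test that leaves the mutant alive, like `weak_test` here, passes without exercising the changed behavior, which is the "pass by accident" case the technique is meant to filter out.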

The Security Warning Nobody Wanted to Hear

Not all of April's AI news was optimistic. Security researcher Shuman Ghosemajumder issued a warning that deserves attention well beyond security teams: generative AI has become, in his words, "a high-scale weapon for disinformation and fraud" — what he calls Disinformation Automation.

The most alarming technical finding: CAPTCHA-based security defenses are now effectively obsolete. CAPTCHAs (those "prove you're human" puzzle tests on login pages and checkout flows) were designed around a single assumption — that computers struggle with tasks humans find trivial, like reading distorted text or identifying traffic lights in grainy photos. Modern AI systems handle these tasks with what Ghosemajumder calls "chilling accuracy." The entire premise has collapsed.

His recommendation for engineering and security leaders: move to zero-trust "cyber fusion" strategies, meaning security architectures that assume no user, device, or AI agent is trustworthy by default and that continuously verify every action taken. Traditional perimeter security (keep the bad actors out, trust everyone inside) no longer works when the bad actors are AI systems that look, type, and behave exactly like humans. As a layered defense alongside existing auth flows, begin evaluating behavioral biometrics (verification systems that identify users by typing rhythm and mouse movement patterns rather than puzzle-solving ability).
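As a rough illustration of the kind of signal behavioral biometrics relies on, the sketch below scores a session by its inter-keystroke timing. It is a minimal example under stated assumptions, not a production detector: the function names and both thresholds are hypothetical placeholders, and a real system would combine many more signals and calibrate against enrolled user profiles.

```python
from statistics import mean, pstdev
from typing import List, Sequence


def inter_key_intervals(timestamps_ms: Sequence[float]) -> List[float]:
    """Gaps between consecutive keystrokes, in milliseconds."""
    return [later - earlier
            for earlier, later in zip(timestamps_ms, timestamps_ms[1:])]


def looks_automated(timestamps_ms: Sequence[float],
                    min_jitter_ms: float = 5.0,
                    min_mean_ms: float = 30.0) -> bool:
    """Flag typing that is implausibly regular or implausibly fast.

    Both thresholds are illustrative placeholders, not calibrated values.
    """
    intervals = inter_key_intervals(timestamps_ms)
    if len(intervals) < 5:
        return False  # too little evidence; defer to other checks
    too_regular = pstdev(intervals) < min_jitter_ms
    too_fast = mean(intervals) < min_mean_ms
    return too_regular or too_fast


# Hypothetical keystroke timestamps (milliseconds since the first key press).
human_session = [0, 140, 310, 395, 610, 720, 905, 1010]
scripted_session = [0, 50, 100, 150, 200, 250, 300, 350]

print("human flagged:   ", looks_automated(human_session))
print("scripted flagged:", looks_automated(scripted_session))
```

In a zero-trust design a check like this would run continuously during a session rather than once at login, and its result would be one input among several rather than a verdict on its own.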

What to Act On This Week

The infrastructure window is open. Five enterprise AI agent platforms launched in 14 days means pricing is competitive, documentation is fresh, and vendor support is at its most responsive. Here is where to start, depending on your role:

- Engineering managers: You do not need Meta's internal tooling to try the approach behind its reported 4x bug detection improvement. AI-assisted code review that generates tests per pull request is available today.

- Security teams: Audit your CAPTCHA dependency today — treat it as a known gap, not a safeguard. Begin piloting behavioral verification tools alongside existing authentication flows before a breach forces the issue.

- Java developers building agent systems: Google's ADK 1.0 is now stable. Add it to your Maven project:

```xml
<!-- Coordinates used by the ADK for Java project; confirm the exact 1.0 release version on Maven Central. -->
<dependency>
  <groupId>com.google.adk</groupId>
  <artifactId>google-adk</artifactId>
  <version>1.0.0</version>
</dependency>
```

- DevOps and SRE teams: AWS DevOps Agent is generally available today. If you are already on AWS infrastructure, onboarding cost is minimal: it integrates with CloudWatch, CodeDeploy, and existing incident management workflows.

- Product and e-commerce teams: The DoorDash LLM personalization case study (linked in sources below) shows how static merchandising was replaced with real-time, LLM-generated consumer profiles — with measurable lift. If your recommendation engine is more than 18 months old, it is likely underperforming against this new baseline.

The deeper story is not about individual product launches. It is about who controls the infrastructure layer when AI agents become the default way knowledge work gets done. The companies shipping that infrastructure right now (Cloudflare, AWS, Google, Anthropic) are placing bets that will determine which organizations run AI cheaply and reliably, and which ones pay premium prices for lock-in later. Aletheia solving 6 of 10 novel research problems, alongside its 91.9% score on IMO-ProofBench, is the proof-of-concept. The race to deliver that capability to every enterprise team is what April 2026 was really about.


Sources

- InfoQ: Google DeepMind Aletheia Math AI

- InfoQ: Cloudflare Sandboxes GA

- InfoQ: Anthropic Managed Agents

- InfoQ: LinkedIn Cognitive Memory Agent

- InfoQ: Meta JiT Testing AI Detection

- InfoQ: AWS DevOps Agent GA

- InfoQ: Google ADK Java 1.0

- InfoQ: DoorDash LLM Personalization

- InfoQ: Deepfakes and Disinformation (Ghosemajumder)

- InfoQ: Cloudflare MCP Architecture
