Microsoft AGI Clause Dead — OpenAI Pays Through 2030

Microsoft's 7-year AGI clause expired April 27 — OpenAI now pays unconditionally through 2030. Also: VibeVoice transcribes 1-hour audio in 9 min, free locally.

The Microsoft-OpenAI AGI clause, a provision that shaped one of tech's largest AI partnerships for seven years, quietly ended on April 27, 2026. Written into the companies' 2019 deal, the clause would have reshuffled billions in IP rights the moment artificial general intelligence (a hypothetical system capable of performing any cognitive task a human can) was declared. It did not end because AGI arrived. It ended because the two companies could never agree on what AGI actually means.

The AGI Clause: A $100 Billion Bet Nobody Could Define

When Microsoft first invested in OpenAI in 2019, the partnership included a dramatic provision: Microsoft's exclusive commercial rights to OpenAI's technology would expire when AGI was achieved. At that point, the rules would reset — OpenAI's nonprofit mission would take over, and Microsoft's commercial leverage would be curtailed.

The problem: nobody could pin down a definition. Over seven years, "AGI" was defined three different ways:

- 2019 original: "Pre-AGI technologies" — vague, tied to the OpenAI charter but never quantified

- December 2024: A $100 billion annual profit threshold, reportedly negotiated between teams — the first time a concrete number appeared (per The Information)

- October 2025: An independent expert panel — discretionary human judgment replacing the financial benchmark, with no published selection criteria

None of these definitions survived. On April 27, 2026, OpenAI's announcement used a single phrase that developer Simon Willison identified as the death certificate: the new terms are now "independent of OpenAI's technology progress." The Verge ran the headline simply: "The AGI clause is dead." The trigger that was supposed to reshape AI's biggest business relationship was quietly dropped.

What Microsoft Won (and What It Gave Up)

The restructured deal changes the commercial relationship in four concrete ways:

- Exclusivity (lost): Microsoft's IP rights, previously exclusive through 2030, are now non-exclusive. OpenAI can license its models to any cloud provider or partner without restriction.

- Revenue out (stopped): Microsoft no longer pays revenue share to OpenAI, ending an undisclosed but significant outbound cost.

- Revenue in (guaranteed): OpenAI continues paying Microsoft revenue share through 2030, now fully decoupled from any technological milestone. Microsoft gets paid whether or not AGI ever arrives.

- License (extended): Microsoft retains a license to OpenAI's IP through 2032, a 2-year extension past the original 2030 date, though the license is no longer exclusive.

Bloomberg's Matt Levine satirized this exact outcome in 2023: he imagined OpenAI declaring AGI, cutting investors a check for their capped return, and walking away to pursue its mission. The real resolution is more pragmatic — both companies abandoned the gamble entirely. Microsoft traded "we win big if AGI happens" for "we get paid either way." That is a meaningful concession from OpenAI, which gave up the ability to trigger a full partnership reset simply by declaring a milestone achieved.

The shift reflects a broader truth: the definition of AGI has always belonged to whoever holds the pen. The $100 billion profit threshold from 2024 would have required OpenAI to generate annual profit on the scale of Apple's best years. The "independent expert panel" version from October 2025 handed that same discretion to unnamed humans with no published criteria. Abandoning the clause altogether may be the most honest outcome either company could reach.

VibeVoice: Transcribe a 1-Hour Podcast in 9 Minutes, Locally

While the AGI deal made headlines, Microsoft also has a quieter open-source audio release worth evaluating. VibeVoice is a Whisper-style speech-to-text model (Whisper being OpenAI's widely used audio transcription system) with one key advantage: built-in speaker diarization, the automatic identification of which person is speaking at each moment, which standard Whisper does not include. Released in January 2026 under the MIT license, it mostly flew under the radar until developer Simon Willison published real-world benchmarks this week.

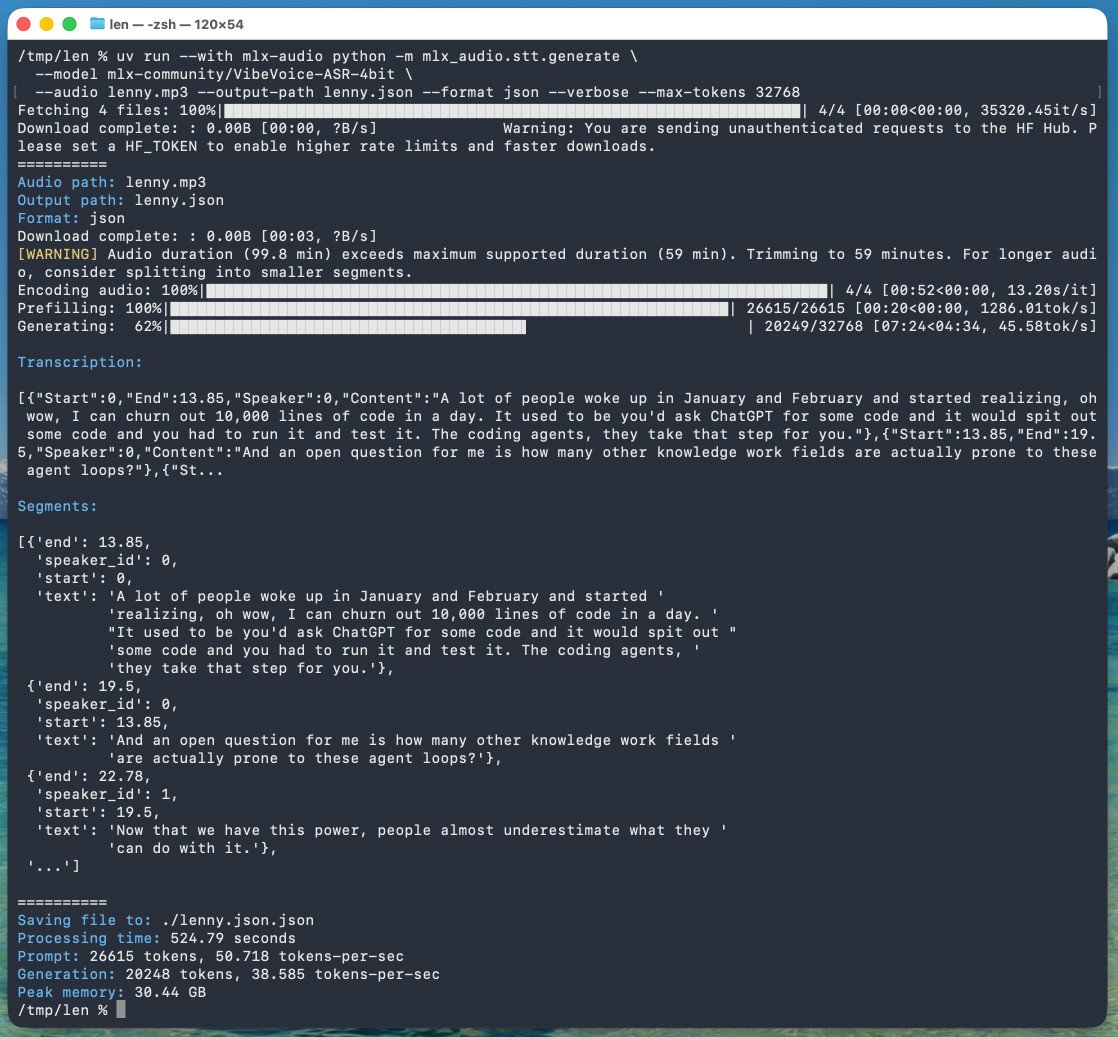

The results on a 128GB M5 Max MacBook Pro:

- Input: 99.8-minute podcast (trimmed to 59 minutes per the 1-hour run limit)

- Processing time: 8 minutes 45 seconds, or roughly 6.7× real-time speed for the 59-minute input

- Peak memory (actual): 61.5GB during the prefill stage (the tool self-reported only 30.44GB — a 2× undercount from different measurement methods between tool reporting and Activity Monitor)

- Output: structured JSON with speaker labels, timestamps, and text segments — fully queryable in Datasette Lite (a browser-based SQL tool for exploring JSON data without any server setup)
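The throughput and memory figures in the list above can be sanity-checked with simple arithmetic. The sketch below is only a recomputation from the quoted numbers, not part of the original benchmark; the function and constant names are illustrative. With a 59-minute input and 8 minutes 45 seconds of processing, the real-time factor comes out at about 6.7×, close to the figure quoted above.

```python
# Figures quoted in the benchmark list above.
AUDIO_MINUTES = 59                 # trimmed input length
PROCESSING_SECONDS = 8 * 60 + 45   # 8 min 45 s of wall-clock time
PEAK_GB_OBSERVED = 61.5            # peak seen in Activity Monitor
PEAK_GB_REPORTED = 30.44           # peak reported by the tool itself


def real_time_factor(audio_seconds: float, processing_seconds: float) -> float:
    """Seconds of audio transcribed per second of wall-clock time."""
    return audio_seconds / processing_seconds


rtf = real_time_factor(AUDIO_MINUTES * 60, PROCESSING_SECONDS)
memory_ratio = PEAK_GB_OBSERVED / PEAK_GB_REPORTED

print(f"real-time factor: {rtf:.2f}x")                      # 6.74x
print(f"observed / reported memory: {memory_ratio:.2f}x")   # 2.02x
```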

Speaker identification worked better than expected: the model detected three distinct voices, the two podcast hosts plus a separate voice attribution for the intro and sponsor reads, which used a slightly different cadence. That level of granularity normally requires a paid service like Descript ($12–24/month) or AssemblyAI (usage-based pricing). VibeVoice gives you comparable output on local hardware at no cost, which makes it a practical AI automation tool for content creators and developers processing audio without subscriptions.
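Once a transcript is exported as JSON with speaker labels and timestamps, a few lines of Python can total each speaker's talk time. This is a minimal sketch under a loud assumption: the field names `speaker`, `start`, `end`, and `text` are hypothetical, and the actual output schema may differ, so check a real file before relying on them.

```python
import json
from collections import defaultdict

# Hypothetical sample data. The real output's field names and
# structure may differ; adjust the keys after inspecting a real file.
SAMPLE = """
[
  {"speaker": 0, "start": 0.0,  "end": 12.5, "text": "Welcome to the show."},
  {"speaker": 1, "start": 12.5, "end": 20.0, "text": "Thanks for having me."},
  {"speaker": 0, "start": 20.0, "end": 31.0, "text": "Let's get started."}
]
"""


def talk_time_by_speaker(segments):
    """Sum segment durations (in seconds) per speaker label."""
    totals = defaultdict(float)
    for seg in segments:
        totals[seg["speaker"]] += seg["end"] - seg["start"]
    return dict(totals)


totals = talk_time_by_speaker(json.loads(SAMPLE))
for speaker, seconds in sorted(totals.items()):
    print(f"speaker {speaker}: {seconds:.1f}s")
```

The same grouping could be done with a SQL `GROUP BY` once the segments are loaded into a table.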

How to Run VibeVoice Locally on Apple Silicon

The quantized MLX version — MLX being Apple's machine learning framework optimized for Apple Silicon chips — weighs just 5.71GB versus the full 17.3GB base model on HuggingFace. One critical gotcha: the default max-tokens is 8,192, covering only approximately 25 minutes of audio. For a full hour, you must set it to 32,768 manually:

    uv run --with mlx-audio python -m mlx_audio.stt.generate \
      --model mlx-community/VibeVoice-ASR-4bit \
      --audio <path-to-audio-file> \
      --output-path <output-dir> \
      --format json --verbose --max-tokens 32768

Hard limits to plan for before committing: the model caps at 60 minutes per run. Longer recordings need manual splitting into segments with 1-minute overlaps at each boundary to avoid dropped words at transitions. You will also need at least 64GB RAM in practice: Activity Monitor peaks at 61.5GB during the prefill phase, even though the tool itself reports 30.44GB. Token processing ran at 50.7 tokens/sec during prefill and 38.6 tokens/sec during generation.
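The two planning steps here, choosing a token budget and splitting long recordings, can be sketched in Python. Both helpers are illustrative and not part of mlx-audio. The token estimate assumes usage scales linearly from the "8,192 tokens for roughly 25 minutes" figure, which is a rough extrapolation since real usage depends on how dense the speech is.

```python
import math

# Rough rate implied by "8,192 tokens covers about 25 minutes".
# Assumption: token usage grows linearly with audio length.
TOKENS_PER_MINUTE = 8192 / 25


def estimate_tokens(audio_minutes: float) -> int:
    """Estimate the token budget needed for a given audio length."""
    return math.ceil(audio_minutes * TOKENS_PER_MINUTE)


def plan_segments(total_s: int, max_s: int = 60 * 60, overlap_s: int = 60):
    """Return (start, end) second offsets for segments of at most max_s,
    where each segment begins overlap_s before the previous one ends."""
    if total_s <= 0:
        raise ValueError("total_s must be positive")
    if not 0 <= overlap_s < max_s:
        raise ValueError("overlap_s must be non-negative and shorter than max_s")
    segments = []
    start = 0
    while True:
        end = min(start + max_s, total_s)
        segments.append((start, end))
        if end >= total_s:
            break
        start = end - overlap_s  # step back to create the overlap
    return segments


# A 99.8-minute recording (5,988 seconds) needs two runs.
print(plan_segments(5988))   # [(0, 3600), (3540, 5988)]
print(estimate_tokens(60))   # 19661, comfortably under 32768
```

The resulting offsets can be passed to any audio tool that cuts by timestamp. After transcription, the duplicated minute at each boundary still has to be deduplicated by hand or by comparing timestamps.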

For a deeper look at local AI audio tools you can run today, check the automation tools guide — it covers setup steps for Apple Silicon workflows without subscriptions.

Google Meet's Real-Time Translation: The Sci-Fi Version Is Here, Sort Of

Also landing this week: Google Meet's speech translation feature is rolling out to mobile devices. The system translates spoken words in real time, then synthesizes a translated voice that mimics the original speaker's intonation and cadence — not just subtitles, but a voice that sounds like the speaker. Currently supported: 6 languages — English, Spanish, French, German, Portuguese, and Italian.

The caveat is real. Google labels this "still very alpha," and instability is documented: the feature worked between two laptops using web browsers but failed between an iPhone and an iPad in testing. Questions about latency measurement, privacy (on-device vs. cloud processing), offline availability, and the language expansion roadmap (notably absent: any Asian, African, or Middle Eastern languages) remain unanswered.

Still, the core capability is genuinely significant once stable — real-time voice translation with speaker-matching synthesis, inside a product already used by more than 2 billion people. When mature, it eliminates the biggest friction point in international meetings: the moment where two people realize they need a third tool. The opt-in prompt appears in the Meet mobile app when the feature reaches your account. Watch the rollout closely; the alpha-to-stable window for Meet features has historically been 3–6 months.

All three developments — the AGI clause death, VibeVoice's local transcription benchmark, and Meet's translation rollout — landed in a single 72-hour window from April 25–27, 2026. They represent three distinct tempos of AI progress: billion-dollar IP deals take seven years to settle; open-source tooling ships quietly in January and gets stress-tested in April; consumer features launch in alpha and improve in production. If you are evaluating audio tools today, get started with local speech workflows while VibeVoice is free, MIT-licensed, and available now.
